How to Convert PySpark String to Timestamp Type in Databricks?

This recipe shows how to convert a PySpark string column to a timestamp in Databricks.

Recipe Objective - How to Convert String to Timestamp in PySpark?

The to_timestamp() function in PySpark converts a string column to a timestamp column (TimestampType). Its syntax is to_timestamp(col, format=None): the first argument is the DataFrame column holding the timestamp strings, and the optional second argument is a pattern string describing how those strings are laid out, which allows input in a non-default layout to be parsed. When the format is omitted, the input is parsed using the rules for casting a string to TimestampType, so a string such as "2021-08-26 11:30:21.000" (yyyy-MM-dd HH:mm:ss.SSS) is accepted as is. If the input does not match the expected format, the function returns null (with ANSI mode disabled, which is the default in Spark 3.1).


System Requirements

- Python (3.0 version)
- Apache Spark (3.1.1 version)

This recipe provides a step-by-step guide to converting a string to a timestamp in PySpark, covering the to_timestamp() function and casting with the cast() function.

Setting up the Environment

Before diving into the conversion process, ensure you have the necessary environment set up. This includes having Python (version 3.0 or above) and Apache Spark (version 3.1.1 or above) installed.
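As a quick check of the requirements above, the following sketch prints the Python version and the installed PySpark package version using only the standard library. The helper name installed_version is hypothetical, and the sketch assumes PySpark was installed as a regular Python package; on Databricks, where Spark is bundled with the runtime, reading spark.version from the notebook's session is the more direct check.

```python
import sys
from importlib import metadata


def installed_version(package):
    """Return the installed version string of a package, or None if it is absent.

    Hypothetical helper for illustration only.
    """
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


# Version of the running interpreter, e.g. major.minor.micro
python_version = ".".join(str(part) for part in sys.version_info[:3])

# Version of the pyspark package, if it is installed in this environment
pyspark_version = installed_version("pyspark")

print("Python:", python_version)
print("PySpark:", pyspark_version or "not installed")
```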

Implementing the to_timestamp() function in PySpark on Databricks

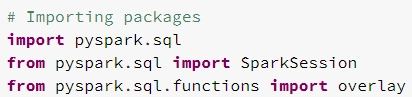

# Importing packages
from pyspark.sql import SparkSession
from pyspark.sql.functions import to_timestamp

SparkSession and the to_timestamp() function are imported to perform the string-to-timestamp conversion.

Creating a Spark Session

Initialize a Spark session using the SparkSession.builder method. This is the starting point for creating a DataFrame and performing PySpark operations.

# Implementing String to Timestamp in Databricks in PySpark
spark = SparkSession.builder \
    .appName('PySpark String to Timestamp') \
    .getOrCreate()

Creating a DataFrame

Create a sample DataFrame with a string column representing timestamps. This will be the basis for the subsequent conversion operations.

dataframe = spark.createDataFrame(
    data=[("1", "2021-08-26 11:30:21.000")],
    schema=["id", "input_timestamp"])
dataframe.printSchema()


Converting PySpark String to Timestamp

Utilize the to_timestamp() function to convert the string column (input_timestamp) to a timestamp column (timestamp) within the DataFrame.

# Converting String to Timestamp
dataframe.withColumn("timestamp", to_timestamp("input_timestamp")) \
    .show(truncate=False)

Cast String to Timestamp in PySpark

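The cast() function converts a column from one type to another. Applied directly to a string column in the default layout, cast('timestamp') produces a timestamp just as to_timestamp() does without a format. The example below goes the other way: it parses the string with to_timestamp() and then uses cast('string') to convert the resulting timestamp column back to a string.

# Using cast() to convert the parsed timestamp back to a string
dataframe.withColumn('timestamp',
    to_timestamp('input_timestamp').cast('string')) \
    .show(truncate=False)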


The DataFrame named "dataframe" is created from the sample data. In the first example, to_timestamp() converts the string column to a timestamp column (TimestampType). In the second example, the string is first converted to a timestamp with to_timestamp(), and cast('string') then converts that timestamp back to a string.

Practice more Databricks Operations with ProjectPro!

Converting strings to timestamp types is crucial for effective data processing in Databricks. However, theoretical knowledge alone is insufficient. To truly solidify your skills, it's essential to gain practical experience through real-world projects. ProjectPro offers a diverse repository of solved projects in data science and big data. By immersing yourself in hands-on exercises provided by ProjectPro, you can enhance your proficiency in Databricks operations and ensure a seamless transition from theory to practical application.
