Explain Spark Application with example

In this tutorial, we will walk through a simple Spark application, using PySpark for the example.


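A Spark application is a self-contained computation that runs user-supplied code to produce a result. It consists of a driver process, which runs the main program and creates the SparkContext, and executor processes, which run tasks on the cluster. Because executors can stay alive for the whole lifetime of the application, a Spark application can have processes running on its behalf even when it is not currently executing a job.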


Let us look at the code for a very basic Spark application:

Code:

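```python
from pyspark.sql import SparkSession

# Start (or reuse) a Spark application named 'SparkApplicationExample'
spark = SparkSession.builder.appName('SparkApplicationExample').getOrCreate()

# The SparkContext is the entry point for the low-level RDD API
sc = spark.sparkContext

# Distribute a local Python list as an RDD
nums = sc.parallelize([1, 2, 3, 4])

# take(1) is an action: it runs a job and returns the first element
print(nums.take(1))
```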

Output:

[1]

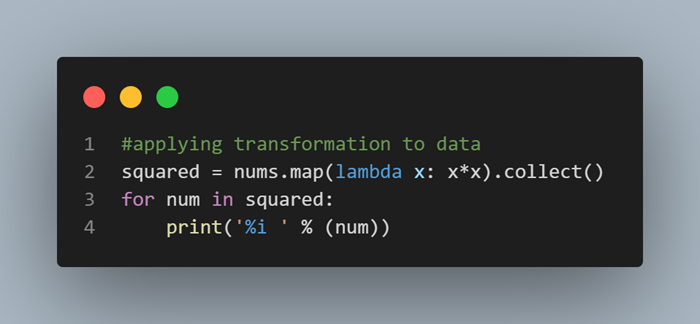

We can also apply transformations to the RDD, for example squaring every element with map():


Code:

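```python
# map() is a transformation: it describes squaring each element.
# collect() is an action: it runs the job and returns a Python list.
squared = nums.map(lambda x: x * x).collect()

for num in squared:
    print(num)
```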

Output:

```
1
4
9
16
```