How to Save a PySpark Dataframe to a CSV File?


Recipe Objective: How to Save a PySpark Dataframe to a CSV File?

Are you working with PySpark and looking for a seamless way to save your DataFrame to a CSV file? You're in the right place! Check out this recipe to explore various methods and optimizations for efficiently saving your PySpark DataFrame as a CSV file.


Prerequisites - Saving PySpark Dataframe to CSV

Before proceeding with the recipe, make sure the following are installed on your EC2 instance.

- Single node Hadoop
- Apache Hive
- Apache Spark
- PySpark

Steps to set up an environment

- In AWS, create an EC2 instance and log in to Cloudera Manager using the public IP of that instance. Log in to the instance through PuTTY or a terminal and check whether PySpark is installed. If it is not, install it as described in the prerequisites above.
- Type "<your public IP>:7180" in the web browser and log in to Cloudera Manager, where you can check whether Hadoop, Hive, and Spark are installed.
- If they are not visible in the Cloudera cluster, add the required services to your instance by clicking "Add Services" in the cluster.


How to Save a PySpark Dataframe as a CSV File - Step-by-Step Guide

Here is a step-by-step implementation for saving a PySpark DataFrame to a CSV file:

Step 1: Set up the environment

This step involves setting the environment variables for PySpark, Java, Spark, and the Python libraries.

Please note that these paths may vary in one's EC2 instance. Provide the full path where these are stored in your instance.
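As a minimal sketch of this step, the variables can be set from Python with `os.environ`. Every path below is a placeholder assumption, not a path from this recipe, and the helper name `configure_environment` is hypothetical. Replace the values with the full paths on your own instance.

```python
import os
import sys

# Placeholder install locations (assumptions); replace each with the
# real path on your instance.
ASSUMED_PATHS = {
    "JAVA_HOME": "/usr/lib/jvm/java-8-openjdk",  # assumed Java location
    "SPARK_HOME": "/opt/spark",                  # assumed Spark location
    "PYSPARK_PYTHON": sys.executable,            # Python used by Spark workers
}


def configure_environment(paths):
    """Export each variable and put Spark's Python directory on sys.path."""
    for name, value in paths.items():
        os.environ[name] = value
    spark_python = os.path.join(paths["SPARK_HOME"], "python")
    if spark_python not in sys.path:
        sys.path.insert(0, spark_python)
    return spark_python


spark_python_dir = configure_environment(ASSUMED_PATHS)
print(os.environ["SPARK_HOME"])
```

If PySpark was installed with `pip`, the `sys.path` change is usually unnecessary, because the package is already importable.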

Step 2: Import the Spark session

This step involves importing SparkSession and initializing it. You can set the application name and the master at this step. In this recipe, appName is "demo" and the master is "local".

Step 3: Create a DataFrame

Let’s demonstrate this recipe by creating a DataFrame from the "users_json.json" file. Make sure that the file is present in HDFS. Check for it using the command:

hadoop fs -ls <full path to the location of file in HDFS>

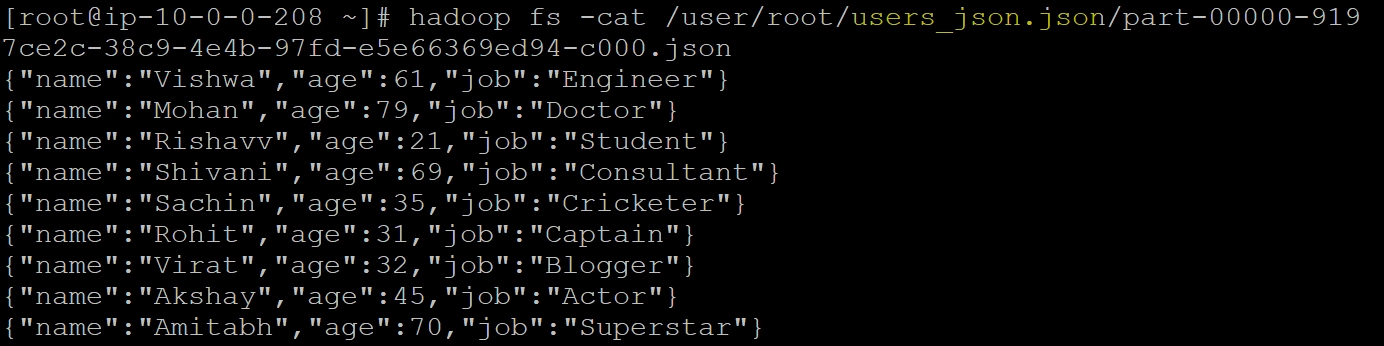

The JSON file "users_json.json" used in this recipe to create the DataFrame is as below.

Step 4: Read the JSON File

The next step involves reading the JSON file into a DataFrame (here, "df") using the code spark.read.json("users_json.json") and checking the data present in this DataFrame.


Step 5: Store the DataFrame as a CSV File

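Store this DataFrame as a CSV file using the code df.write.csv("csv_users.csv"), where "df" is our DataFrame and "csv_users.csv" is the output path. Note that Spark creates a directory with this name and writes the data inside it as one or more part files, rather than producing a single file called "csv_users.csv".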

Step 6: Check the Schema

Now check the schema and data in the DataFrame after saving it as a CSV file.

This is how a dataframe can be saved as a CSV file using PySpark.


Elevate Your PySpark Skills with ProjectPro!

Saving a PySpark DataFrame to a CSV file is a fundamental operation in data processing. By understanding the methods available and incorporating optimizations, you can streamline this process and enhance the efficiency of your PySpark workflows. However, real-world projects provide the practical experience needed to navigate the complexities of PySpark effectively. ProjectPro, with its repository of more than 270 data science and big data projects, offers a platform for learners to apply their knowledge in a hands-on, professional context. Continue your learning journey with ProjectPro to gain practical expertise, build a compelling portfolio, and stand out in the competitive landscape of big data analytics.