Hadoop Architecture Explained: The What, How and Why

Learn about Hadoop architecture and its components, including HDFS, YARN, and MapReduce. Explore its applications and potential with ProjectPro.

Understanding the Hadoop architecture now gets easier! This blog gives you an in-depth look at the architecture of Hadoop and its significant components: HDFS, YARN, and MapReduce. We will also look at how each component in the Hadoop ecosystem plays a vital role in making Hadoop efficient for big data processing.

Table of Contents

- Hadoop Architecture - The Inception

- Utilizing Hadoop Application Architecture

- Apache Hadoop Architecture Overview

- Apache Hadoop Architecture Explanation (With Diagrams)

- Hadoop Common Architecture

- Hadoop HDFS Architecture / Hadoop Master Slave Architecture

- Hadoop YARN Architecture

- Big Data Hadoop Architecture Design – Best Practices

- Latest Version of Hadoop Architecture (Version 3.3.3)

- Case Studies of Hadoop Architecture

- FAQs on Hadoop Architecture

Hadoop Architecture - The Inception

Hortonworks' founder predicted that by the end of 2020, 75% of Fortune 2000 companies would be running 1000-node Hadoop clusters in production. The tiny toy elephant in the big data room has become the most popular big data solution globally. However, implementing Hadoop in production still comes with deployment and management challenges around scalability, flexibility, and cost-effectiveness.

Many organizations that venture into enterprise adoption of Hadoop, whether through business users or an analytics group within the company, do not know what a good Hadoop physical architecture design looks like or how a Hadoop cluster works in production. This lack of knowledge leads to Hadoop clusters that are more complex than a particular big data application needs, which makes the implementation expensive. One of the main reasons behind developing Apache Hadoop was to have a low-cost, redundant data store that would allow organizations to leverage big data analytics at an economical cost and maximize the business's profitability.

A good Hadoop architectural design requires various design considerations regarding computing power, networking, and storage. This blog post explains the Hadoop architecture and the factors to consider when designing and building a Hadoop cluster for production success. But before we dive into Hadoop's architecture, let us look at what Hadoop is and the reasons behind its popularity.

Utilizing Hadoop Application Architecture

“Data, data everywhere; not a disk to save it.” This quote feels relatable to most of us today, as we often run out of space on our laptops or mobile phones. Hard disks are now becoming more popular as a solution to this problem. But what about companies like Facebook and Google, whose users constantly generate new data in the form of pictures, posts, and more, every millisecond? How do these companies handle their data so smoothly that every time we log in to our accounts on these websites, we can access all our chats, emails, and so on without any difficulty? The answer is that these tech giants use frameworks like Apache Hadoop. These frameworks make it easy to access information from large datasets using simple programming models, and they achieve this through distributed processing of the datasets across clusters of computers.

To understand this better, consider the case where we want to transfer 100 GB of data in 10 minutes. One way to achieve this is to have one colossal computer that can transmit at a lightning speed of 10 GB per minute. Another method is to store the data on 10 computers and let each computer transfer its 10 GB share at 1 GB per minute. The second, parallel approach is far easier to achieve than the first, and it is the approach that Apache Hadoop takes.
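The arithmetic behind this comparison can be checked with a few lines of Python. This is only an illustrative sketch: the function name `transfer_minutes` is hypothetical, and it assumes the data is split evenly and that all machines transfer at the same time.

```python
def transfer_minutes(total_gb: float, machines: int, gb_per_min_each: float) -> float:
    """Minutes needed when data is split evenly and every machine sends its share in parallel."""
    share_gb = total_gb / machines          # each machine only handles its own share
    return share_gb / gb_per_min_each       # all machines work at the same time

# One very fast machine versus ten ordinary machines (numbers from the example above).
single = transfer_minutes(100, machines=1, gb_per_min_each=10)
parallel = transfer_minutes(100, machines=10, gb_per_min_each=1)
print(single, parallel)
```

Both setups finish in the same time, but the second one only needs machines that are ten times slower, which is the trade-off Hadoop exploits with commodity hardware.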

Let us now take a look at how Apache Hadoop implements this parallel processing by understanding the architecture of Hadoop.

Apache Hadoop Architecture Overview

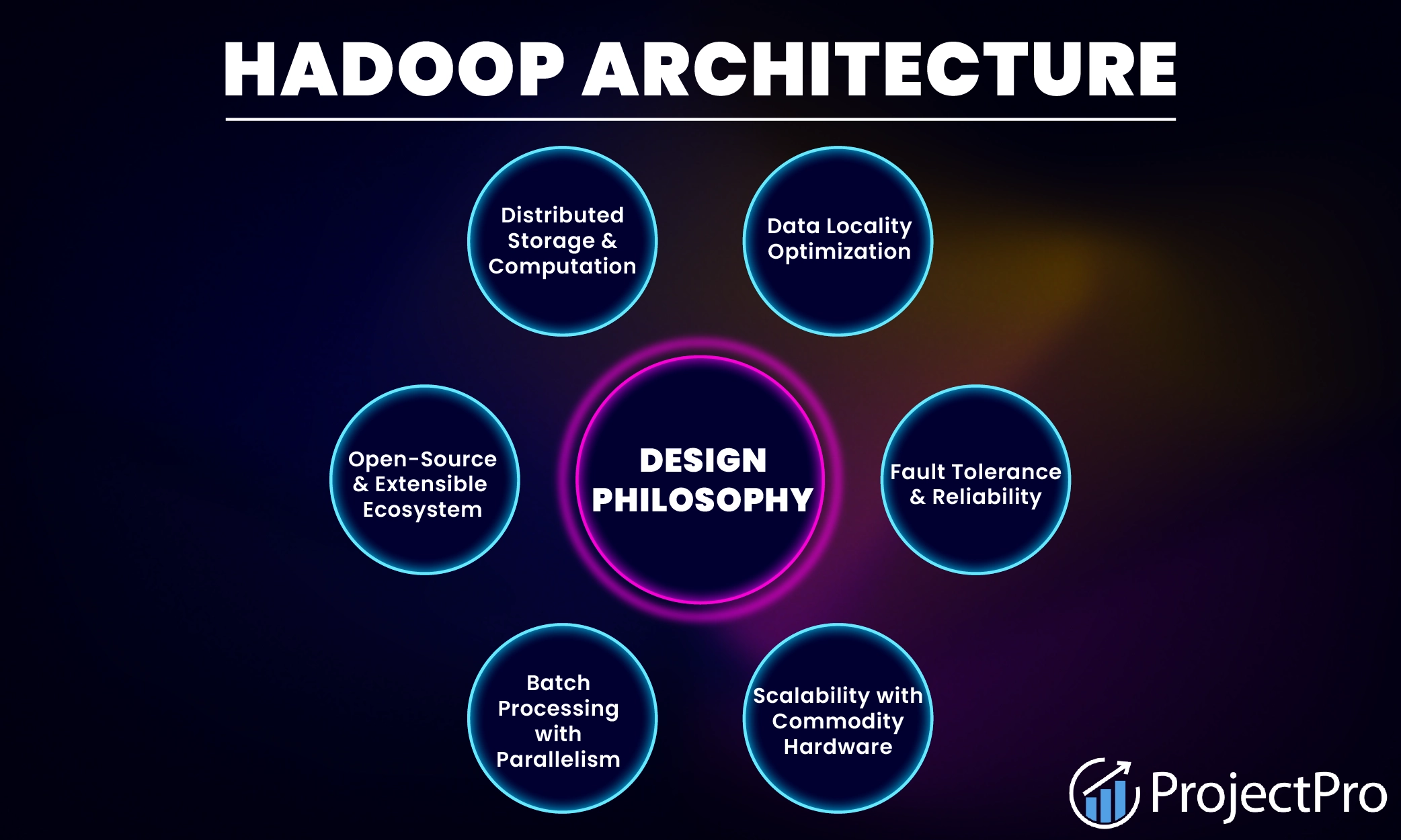

Apache Hadoop offers a scalable, flexible, and reliable distributed computing big data framework for a cluster of systems with storage capacity and local computing power leveraging commodity hardware. Hadoop follows a Master-Slave architecture to transform and analyze large datasets using the Hadoop MapReduce paradigm.

The Hadoop architecture in cloud computing typically consists of multiple components, including:

- Hadoop Common: It contains libraries and utilities that other Hadoop modules require.

- Hadoop Distributed File System (HDFS): The Hadoop distributed file system stores data on commodity machines and provides high aggregate bandwidth across the cluster. It is patterned after the UNIX file system and offers POSIX-like semantics.

- Hadoop YARN: This platform is in charge of managing computing resources in clusters and using them to schedule users' applications.

- Hadoop MapReduce: This implementation of the MapReduce programming model is useful for large-scale data processing. Both Hadoop's MapReduce and HDFS take inspiration from Google's papers on MapReduce and the Google File System.

- Hadoop Ozone: This is a scalable, redundant, and distributed object store for Hadoop. It is a new addition (introduced in 2020) to the Hadoop family, and unlike HDFS, it can handle both small and large files.


Apache Hadoop Architecture Explanation (With Diagrams)

Working with Hadoop requires thorough knowledge of every layer in the Hadoop stack: understanding the various components of the Hadoop physical architecture, designing a Hadoop cluster, tuning its performance, and setting up the tool chain responsible for data processing.

In the previous section, we talked about how one can use more machines to transfer data quickly. But there are specific problems: the more devices are in use, the higher the chances of hardware failure. Hadoop Distributed File System (HDFS) addresses this problem as it stores multiple copies of the data so one can access another copy in case of failure. Another problem with using various machines is that there has to be one concise way of combining the data using a standard programming model. Hadoop's MapReduce solves this problem. It allows reading and writing data from the machines (or disks) by performing computations over sets of keys and values. Both HDFS and MapReduce form the core of Hadoop architecture's functionalities.

Exploring Hadoop Ecosystem Architecture - HDFS, YARN, and MapReduce

Hadoop follows a master-slave architecture design for data storage and distributed processing using HDFS and MapReduce. The master node for data storage in HDFS is the NameNode, and the master node for parallel data processing using Hadoop MapReduce is the Job Tracker. (The Job Tracker and Task Tracker belong to Hadoop 1.x; from Hadoop 2.x onward, YARN's Resource Manager and Node Managers take over their resource-management role.) The slave nodes in the Hadoop physical architecture are the other machines in the Hadoop cluster that store data and perform complex computations. Every slave node runs a Task Tracker daemon and a DataNode daemon, which synchronize with the Job Tracker and the NameNode, respectively. In a Hadoop architectural implementation, you can set up the master or slave systems in the cloud or on-premise.

Below is the Hadoop Architecture Diagram-

Image Credit: OpenSource.com

The big data Hadoop architecture has four main layers. Let us understand the Hadoop architecture diagram and its layers in detail:

1. Distributed Storage Layer

A Hadoop cluster consists of several nodes, each having its own disk space, memory, bandwidth, and processing power. HDFS treats every disk drive and slave node in the cluster as unreliable, so by default it keeps three copies of each data block across the cluster as a backup. The HDFS master node (NameNode) tracks each data block and the location of its copies.
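To see why three copies protect against failures, here is a minimal simulation. It is a sketch under simplifying assumptions: replicas are placed on random distinct nodes (real HDFS placement is rack-aware), and the names `place_blocks` and `readable_blocks` are hypothetical helpers, not Hadoop APIs.

```python
import random

def place_blocks(num_blocks, nodes, replication=3, seed=0):
    """Assign each block to `replication` distinct nodes (random placement, an assumption)."""
    rng = random.Random(seed)
    return {block: set(rng.sample(nodes, replication)) for block in range(num_blocks)}

def readable_blocks(placement, failed):
    """A block stays readable as long as at least one replica sits on a healthy node."""
    return [block for block, holders in placement.items() if holders - failed]

nodes = [f"node{i}" for i in range(6)]
placement = place_blocks(8, nodes)

# Two nodes fail at once; with three replicas on distinct nodes no block can be lost.
failed = {"node0", "node1"}
survivors = readable_blocks(placement, failed)
print(f"{len(survivors)} of {len(placement)} blocks still readable")
```

With a replication factor of 3, any two simultaneous node failures still leave at least one copy of every block, which is why the loop above never loses data.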

2. Cluster Resource Management

Initially, MapReduce was responsible for both resource management and data processing in a Hadoop cluster. Hadoop 2.x introduced YARN to take over resource management and separate the two responsibilities. YARN allocates cluster resources to the various frameworks that run on Hadoop, such as Apache Pig and Hive.

3. Processing Framework Layer

This layer comprises frameworks responsible for evaluating and processing any data that enters the cluster. The incoming datasets are either structured or unstructured, and they are mapped, shuffled, sorted, merged, and reduced into smaller, more manageable chunks of data. These processing tasks are distributed over numerous nodes, as near as possible to the servers that hold the data.

4. Application Programming Interface

Adding YARN to the Hadoop physical architecture has caused several data processing frameworks and APIs to emerge, such as HBase, Hive, Apache Pig, and SQL engines. A robust Hadoop ecosystem includes programs that focus on search platforms, data streaming, user-friendly APIs, networking, security, and more.

After understanding these layers, let us look at the role each of these layers, frameworks, and tools plays. The following sections explain the Hadoop architecture in detail, covering the architecture of each component.


Hadoop Common Architecture

Hadoop Common is a collection of libraries and utilities that other Hadoop components require. Hadoop Common was formerly known as Hadoop Core, and it forms the base of the Hadoop framework. The critical responsibilities of Hadoop Common are:

a) Supplying source code and documents and a contribution section.

b) Performing basic tasks- abstracting the file system, generalizing the operating system, etc.

c) Supporting the Hadoop Framework by keeping Java Archive files (JARs) and scripts needed to initiate Hadoop.

Hadoop HDFS Architecture / Hadoop Master Slave Architecture

A file on HDFS is split into multiple blocks, and each block is replicated within the Hadoop cluster. A block on HDFS is a blob of data within the underlying local file system, with a default size of 128 MB in Hadoop 2.x and later (64 MB in Hadoop 1.x). You can change the block size, for example to 256 MB, based on your requirements.

Check out the HDFS architecture diagram below:

Image Credit: Apache.org

The Hadoop Distributed File System, also known as Apache HDFS, is a file system that divides files into blocks of a specific size and stores them across multiple machines in a cluster. HDFS distinguishes itself from other distributed file systems in several ways because of its fault-tolerant capabilities and its suitability for deployment on low-cost hardware.

NameNode and DataNode are the two critical components of the Hadoop HDFS architecture. DataNodes are the servers that store application data, and NameNodes are the servers that store file system metadata. HDFS replicates the file content on multiple DataNodes based on the replication factor. The NameNode and DataNode communicate with each other using TCP-based protocols. For the architecture to be performance efficient, HDFS must satisfy specific prerequisites:

- All the hard drives should have a high throughput.

- Good network speed to manage intermediate data transfer and block replications.

NameNode

NameNode is a single master server that exists within the HDFS cluster. All the files and directories in the Hadoop distributed file system namespace are represented on the NameNode by inodes that contain attributes like permissions, modification timestamp, disk space quota, namespace quota, and access times. The NameNode keeps the entire file system structure in memory. Two files, fsimage and edits, provide persistence across restarts.

- The fsimage file contains the inodes and the list of blocks that define the metadata. It holds a complete snapshot of the file system's metadata at a point in time.

- The edits file contains the modifications made since the fsimage snapshot was taken. Incremental changes, like renaming a file or appending data to it, are stored in the edit log to ensure durability, instead of creating a new fsimage snapshot every time the namespace changes.

When the NameNode starts, it loads the fsimage file and then applies the contents of the edits file to recover the latest state of the file system. The only problem is that the edits file keeps growing over time, consuming disk space and slowing down the restart process. If the Hadoop cluster does not restart for months, the edits file becomes very large and the next restart causes massive downtime. This is where the Secondary NameNode comes to the rescue. At regular intervals, the Secondary NameNode fetches the fsimage and edits log from the primary NameNode, loads them into main memory, and applies each operation from the edits log to the fsimage. It then copies the new fsimage file back to the primary NameNode and records the modification time in the fstime file to track when the fsimage file was last updated.
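The checkpoint process can be pictured as replaying a log over a snapshot. The sketch below is a toy model, not the real on-disk format: the namespace is a plain dictionary mapping paths to block IDs, and the operation names (`create`, `add_block`, `rename`, `delete`) are assumptions chosen for illustration.

```python
def apply_edits(fsimage: dict, edits: list) -> dict:
    """Replay every logged operation on top of a copy of the snapshot."""
    namespace = dict(fsimage)
    for op, *args in edits:
        if op == "create":
            (path,) = args
            namespace[path] = []
        elif op == "add_block":
            path, block_id = args
            namespace[path] = namespace[path] + [block_id]
        elif op == "rename":
            src, dst = args
            namespace[dst] = namespace.pop(src)
        elif op == "delete":
            (path,) = args
            namespace.pop(path, None)
    return namespace

def checkpoint(fsimage: dict, edits: list):
    """What the Secondary NameNode does: merge the log into a new snapshot, then start an empty log."""
    return apply_edits(fsimage, edits), []

fsimage = {"/logs/a.txt": ["blk_1"]}
edits = [
    ("create", "/logs/b.txt"),
    ("add_block", "/logs/b.txt", "blk_2"),
    ("rename", "/logs/a.txt", "/logs/old.txt"),
]
new_fsimage, new_edits = checkpoint(fsimage, edits)
print(new_fsimage, new_edits)
```

After a checkpoint the edit log is empty again, so a restart only has to load the fresh snapshot instead of replaying months of changes.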

DataNode

DataNode manages the state of an HDFS node and interacts with the data blocks. A DataNode can perform CPU-intensive jobs like semantic and language analysis, statistics, and machine learning tasks and I/O-intensive jobs like clustering, import, export, search, decompression, and indexing. A DataNode needs a lot of I/O for data processing and transfer.

Every DataNode connects to the NameNode and performs a handshake to verify the namespace ID and the software version of the DataNode. If either of them does not match, the DataNode shuts down automatically. A DataNode reports the block replicas it holds by sending a block report to the NameNode, and it sends the first block report when it registers. Each DataNode also sends a heartbeat to the NameNode every 3 seconds to confirm that it is operating and that its block replicas are available.
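The heartbeat mechanism above can be sketched as a simple timeout check on the NameNode side. The 3-second interval comes from the text; the 300-second recheck interval and the formula for the dead-node timeout are assumptions based on commonly cited HDFS defaults, and `dead_datanodes` is a hypothetical helper.

```python
HEARTBEAT_INTERVAL_S = 3      # from the text: one heartbeat every 3 seconds
RECHECK_INTERVAL_S = 300      # assumed default recheck interval (5 minutes)

# Assumed rule of thumb: 2 * recheck interval + 10 * heartbeat interval = 630 seconds.
DEAD_TIMEOUT_S = 2 * RECHECK_INTERVAL_S + 10 * HEARTBEAT_INTERVAL_S

def dead_datanodes(last_heartbeat: dict, now: float, timeout: float = DEAD_TIMEOUT_S) -> list:
    """Return the DataNodes whose last heartbeat is older than the timeout."""
    return sorted(dn for dn, seen in last_heartbeat.items() if now - seen > timeout)

# Timestamps in seconds; dn2 has been silent for 700 seconds.
last_seen = {"dn1": 1000.0, "dn2": 400.0, "dn3": 998.0}
print(dead_datanodes(last_seen, now=1100.0))  # ['dn2']
```

Once a DataNode is considered dead, the NameNode can schedule new copies of the blocks it held so that the replication factor is restored.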

There are a few other concepts in the HDFS master-slave architecture of Hadoop:

- File Blocks: Data in HDFS is always stored in blocks, which are the smallest unit of storage in the file system. The default block size in Hadoop is 128 MB, and it is often configured to 256 MB.

- Replication Management: HDFS uses replication to provide fault tolerance. Each data block is replicated and stored on several DataNodes, and the default replication factor is 3.
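Block size and replication factor together determine how a file is laid out and how much raw disk it consumes. The following sketch works through that arithmetic; `block_layout` is a hypothetical helper, and it assumes sizes in whole megabytes with the last block holding only the leftover data.

```python
def block_layout(file_mb: int, block_mb: int = 128, replication: int = 3) -> dict:
    """Compute how many blocks a file needs and how much raw storage its replicas use."""
    full_blocks, remainder = divmod(file_mb, block_mb)
    blocks = full_blocks + (1 if remainder else 0)
    # The final block only occupies as much space as the data it actually holds.
    last_block_mb = remainder if remainder else (block_mb if blocks else 0)
    return {
        "blocks": blocks,
        "last_block_mb": last_block_mb,
        "replicas": blocks * replication,
        "raw_mb": file_mb * replication,
    }

layout = block_layout(1000)
print(layout)
# {'blocks': 8, 'last_block_mb': 104, 'replicas': 24, 'raw_mb': 3000}
```

A 1000 MB file therefore becomes 8 blocks and 24 block replicas, and it needs roughly three times its size in raw cluster storage. This factor matters when sizing disks for a cluster.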

Hadoop YARN Architecture

YARN stands for Yet Another Resource Negotiator, and it was introduced in Hadoop 2.x. The critical function of YARN is to supply the computational resources required for applications' execution by separating resource management from job scheduling and monitoring.

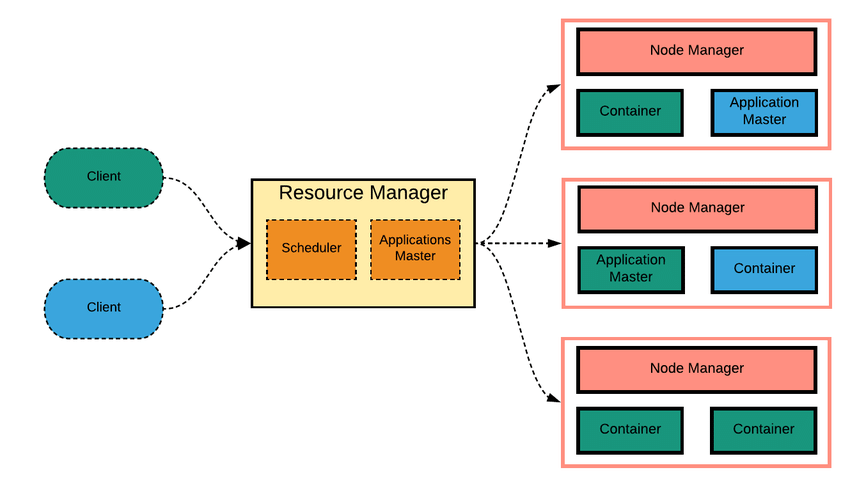

Hadoop YARN Infrastructure

The two essential components of the YARN infrastructure are the Resource Manager (RM) and the Node Manager (NM). Let us consider each of them in a bit more detail.

Source: ResearchGate

a) Resource Manager (RM):

In the two-level cluster, the Resource Manager is the master of the YARN infrastructure. It lies in the top tier (one per cluster) and is aware of where the lower levels, or in other words, slave nodes, are present. It knows how many resources the slave nodes have. The Resource Manager performs various functions, the most important being Resource Scheduling, deciding how to allocate resources.

It comprises two primary components:

- Scheduler: Allocates resources to the running applications based on their demands. It does not monitor or track application progress.

- Applications Manager: Accepts job submissions from clients, and tracks and restarts Application Masters in the event of failure.

b) Node Manager (NM):

In the two-level cluster, the Node Manager lies at the lower tier (many per cluster). It is the slave of the YARN infrastructure. When a Node Manager starts, it announces itself to the Resource Manager. Each node offers resources to the cluster, and its resource capacity is the amount of memory and other resources it can provide. During execution, the Resource Scheduler (the component that performs resource scheduling) decides how to use this capacity.
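The interaction between node capacity and the scheduler can be illustrated with a toy allocator. This is a deliberately simplified first-fit sketch, not YARN's Capacity or Fair Scheduler; the `Node` class, the `allocate` function, and the container sizes are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Node:
    """Free capacity that a Node Manager has reported to the Resource Manager."""
    name: str
    free_mb: int
    free_vcores: int

def allocate(nodes, requests):
    """First-fit placement: give each container request to the first node with enough room."""
    assignments = {}
    for req_id, (mb, vcores) in requests.items():
        for node in nodes:
            if node.free_mb >= mb and node.free_vcores >= vcores:
                node.free_mb -= mb            # reserve the memory
                node.free_vcores -= vcores    # reserve the CPU
                assignments[req_id] = node.name
                break
        else:
            assignments[req_id] = None        # no node can host this container right now
    return assignments

nodes = [Node("nm1", free_mb=4096, free_vcores=2), Node("nm2", free_mb=8192, free_vcores=4)]
requests = {"c1": (2048, 1), "c2": (4096, 2), "c3": (2048, 1), "c4": (8192, 1)}
result = allocate(nodes, requests)
print(result)
# {'c1': 'nm1', 'c2': 'nm2', 'c3': 'nm1', 'c4': None}
```

The last request stays unassigned because no single node has 8192 MB free any more, which mirrors how a real scheduler makes applications wait until capacity is released.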

Essential Functions of Hadoop YARN

1. Hadoop YARN promotes the dynamic distribution of cluster resources between various Hadoop components and frameworks like MapReduce, Impala, and Spark.

2. On the same Hadoop data collection, YARN supports a range of access engines (both open-source and private).

3. The ResourceManager component of YARN is responsible for scheduling and managing the continually growing cluster, which processes vast amounts of data.

4. At present, YARN controls memory and CPU. In the future, we expect it to be responsible for allocating additional resources like disk and network I/O.

Hadoop MapReduce Architecture

The heart of the distributed computation platform Hadoop is its Java-based programming paradigm, Hadoop MapReduce. A MapReduce job is a particular type of directed acyclic graph that one can apply to many business use cases. The map function transforms the input data into key-value pairs, the pairs are then sorted and grouped by key, and a reduce function merges the values for each key into a single output.

How Does the Hadoop MapReduce Architecture Work?

Source: eduCBA

The execution of a MapReduce job begins when the client submits the job configuration to the Job Tracker. The configuration specifies the map, combine, and reduce functions and the locations of the input and output data. On receiving the job configuration, the Job Tracker determines the number of splits based on the input path and selects Task Trackers based on their network proximity to the data sources. The Job Tracker then sends a request to the selected Task Trackers.

The Map phase begins when the Task Tracker extracts the input data from the splits. It invokes the map function for each record parsed by the “InputFormat,” which produces key-value pairs in a memory buffer. The buffered pairs are partitioned by target reducer and sorted, and the combine function is invoked on them. On completion of a map task, the Task Tracker notifies the Job Tracker. When all Task Trackers have finished their map tasks, the Job Tracker tells the selected Task Trackers to begin the reduce phase. Each Task Tracker reads the region files and sorts the key-value pairs by key. It then invokes the reduce function, which collects the aggregated values into the output file.
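The flow described above (map, combine, partition, sort, reduce) can be imitated in a single Python process with a word count. This is a teaching sketch, not Hadoop code: real jobs are usually written against the Java MapReduce API, and the function names and the byte-sum partitioner used here are assumptions made to keep the example deterministic.

```python
from collections import defaultdict

def map_fn(line):
    """Map: emit a (word, 1) pair for every word in a record."""
    for word in line.lower().split():
        yield word, 1

def combine(pairs):
    """Combine: pre-aggregate map output locally to shrink what is sent to reducers."""
    local = defaultdict(int)
    for key, value in pairs:
        local[key] += value
    return list(local.items())

def partition(key, num_reducers):
    """Partition: pick a reducer for a key (byte sum is a stand-in for a hash partitioner)."""
    return sum(key.encode()) % num_reducers

def reduce_fn(key, values):
    """Reduce: merge all values for one key into a single result."""
    return key, sum(values)

def run_job(splits, num_reducers=2):
    partitions = [defaultdict(list) for _ in range(num_reducers)]
    for split in splits:                       # one map task per input split
        mapped = [pair for line in split for pair in map_fn(line)]
        for key, value in combine(mapped):
            partitions[partition(key, num_reducers)][key].append(value)
    output = {}
    for part in partitions:                    # one reduce task per partition
        for key in sorted(part):               # keys reach the reducer in sorted order
            word, total = reduce_fn(key, part[key])
            output[word] = total
    return output

splits = [["big data big"], ["data node", "big cluster"]]
counts = run_job(splits)
print(counts)
```

Because every occurrence of a key is routed to the same partition, each reducer sees all the values for its keys, and the combiner only reduces the amount of intermediate data without changing the final counts.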

Hadoop Ozone Architecture

Hadoop Ozone extends Apache Hadoop and performs two crucial functions: object storage and semantic computing. It is a relatively new project; its early alpha releases, such as 0.3.0, arrived during the Hadoop 3.x era.

A few salient features of Ozone are:

1. It can scale to tens of billions of files and blocks. This number is likely to increase in the future.

2. By implementing protocols like Raft, Hadoop Ozone is an object store with strong consistency.

3. It can work efficiently in containerized environments like YARN and Kubernetes.

4. Hadoop Ozone supports Transparent Data Encryption (TDE) and on-wire encryption. It integrates with Kerberos infrastructure for access control.

5. It supports multiple protocols, such as the S3 API and the Hadoop File System API.

6. It is a fully replicated system that can overcome multiple failures.

Hadoop also provides various tools and frameworks for processing and analyzing big data, such as Pig, Hive, HBase, Spark, etc. These tools can be used with Hadoop to perform tasks such as data processing, querying, and analysis.

So, let us take a look at the Hadoop architecture based on these Hadoop ecosystem tools:

Apache Hive Hadoop Architecture

Apache Hive is a data warehousing solution that allows users to query and analyze large datasets stored in HDFS. The Hive architecture is built on top of Hadoop, using its HDFS for data storage and MapReduce for data processing. The components of Apache Hive's Hadoop architecture are as follows:

- Hive Server: Hive Server is the users' primary interface for submitting queries to Apache Hive.

- Metastore: The Metastore is a central repository that stores metadata about the tables, partitions, and columns in Apache Hive.

- Hive Driver: The Hive Driver is responsible for executing queries submitted by clients.

- Hive Compiler: The Hive Compiler translates HiveQL queries into MapReduce jobs that can be executed on a Hadoop cluster.

- Hive Executor: The Hive Executor executes the MapReduce jobs generated by the Hive Compiler.

Pig Hadoop Architecture

The Pig architecture in Hadoop is a powerful tool for analyzing large data sets in a distributed computing environment. It uses a scripting language called Pig Latin to transform and manipulate data. This language is then compiled into MapReduce jobs which run on a Hadoop cluster.

The components of the Pig on Hadoop architecture include:

- Pig Latin: A scripting language used for data processing in Pig.

- Pig Engine: Parses Pig Latin scripts and compiles them into a series of MapReduce jobs.

- MapReduce: MapReduce is a programming model for processing large data sets in a distributed computing environment.

- Hadoop Distributed File System (HDFS): HDFS is a distributed file system used by Hadoop to store large data sets.

- Execution Environment: The Pig Hadoop architecture can be executed on a Hadoop cluster.

Big Data Hadoop Architecture Design – Best Practices

- Use good-quality commodity servers to make it cost-efficient and easier to scale out for complex business use cases. One of the ideal configurations for Hadoop big data architecture is six-core processors, 96 GB of memory, and 104 TB of local hard drives. Although it is an exemplary configuration, it's not an absolute one.

- Move the processing close to the data instead of separating the two for faster and more efficient data processing.

- Hadoop scales and performs better with local drives, so use Just a Bunch of Disks (JBOD) with replication instead of a redundant array of independent disks (RAID).

- Design multi-tenancy architecture by sharing the compute capacity with the capacity scheduler and HDFS storage.

- Do not edit the metadata files as it can corrupt the state of the Hadoop cluster.
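The practice of moving the processing close to the data can be sketched as a placement preference: run a task on a node that already holds the block, otherwise on a node in the same rack, and only then anywhere else. The function `pick_node` and the node and rack names below are hypothetical; real schedulers apply the same idea with more bookkeeping.

```python
def pick_node(replica_nodes, free_nodes, rack_of):
    """Choose where to run a task, preferring nodes closest to the block's replicas."""
    # 1. Node-local: a free node that already stores a replica of the block.
    for node in free_nodes:
        if node in replica_nodes:
            return node, "node-local"
    # 2. Rack-local: a free node in the same rack as some replica.
    replica_racks = {rack_of[node] for node in replica_nodes}
    for node in free_nodes:
        if rack_of[node] in replica_racks:
            return node, "rack-local"
    # 3. Off-rack: any free node; the block must cross the top-level switch.
    if free_nodes:
        return free_nodes[0], "off-rack"
    return None, "none"

rack_of = {"n1": "r1", "n2": "r1", "n3": "r2", "n4": "r2"}

print(pick_node({"n1", "n2"}, ["n3", "n2"], rack_of))  # ('n2', 'node-local')
print(pick_node({"n1"}, ["n3", "n2"], rack_of))        # ('n2', 'rack-local')
print(pick_node({"n1"}, ["n4", "n3"], rack_of))        # ('n4', 'off-rack')
```

Each step down this preference list means more data has to travel over the network, which is why keeping computation next to the data is faster.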


Latest Version of Hadoop Architecture (Version 3.3.3)

The Apache Hadoop 3.3 line published its third stable release, Apache Hadoop version 3.3.3, on 17th May 2022. This Hadoop version brings several significant improvements, such as bug fixes and upgrades. Let us look at the new features and upgrades in this version of the Hadoop application architecture:

- Supports ARM architecture: ARM (Advanced RISC Machine) is a family of reduced instruction set computing (RISC) processor architectures, and Hadoop 3.3 supports 64-bit ARM platforms. Features of the ARM design include a load/store architecture, mostly single-cycle instruction execution, a uniform register file, and a link register.

- Supports Guava shaded version: Due to compatibility and vulnerability issues, relying on Guava's implementation in Hadoop has been challenging. Guava upgrades tend to break APIs, making it difficult to ensure backward compatibility between Hadoop versions and clients or downstream applications. This Hadoop version uses a shaded version of Guava from hadoop-thirdparty, preventing Guava version incompatibilities with downstream applications.

- S3 code upgrades: Hadoop's 'S3A' client provides high-speed IO against the Amazon S3 object store and similar solutions. Its features include direct reading and writing of S3 objects, interoperability with regular S3 clients, and support for per-bucket settings. There are many improvements to the S3A code in this Hadoop release, including Delegation Token support, better handling of 404 caching, S3Guard performance, and resilience improvements.

Case Studies of Hadoop Architecture

Facebook Hadoop Architecture

With 1.59 billion accounts (approximately 1/5th of the world's total population), 10+ million videos uploaded monthly, 1+ billion content pieces shared weekly, and more than 1 billion photos uploaded monthly – Facebook uses Hadoop to interact with petabytes of data. Facebook runs the world's largest Hadoop Cluster, with over 4000 machines storing hundreds of millions of gigabytes of data. The most significant Hadoop cluster at Facebook has about 2500 CPU cores and 1 PB of disk space. The big data engineers at Facebook load more than 250 GB of compressed data (greater than 2 TB of uncompressed data) into HDFS daily and hundreds of Hadoop jobs are running daily on these datasets.

Where is the data stored on Facebook?

135 TB of compressed data is scanned daily, and 4 TB of compressed information is added daily. Wondering where all this data is stored? Facebook has a Hadoop/Hive warehouse with a two-level network topology having 4800 cores and 5.5 PB storing up to 12TB per node. 7500+ Hadoop Hive jobs run in the production cluster daily with an average of 80K compute hours. Non-engineers, i.e., analysts at Facebook, use Hadoop through Hive, and approximately 200 people/month run jobs on Apache Hadoop.

The Hadoop/Hive warehouse at Facebook uses a two-level network topology:

- 4 Gbit/sec to top-level rack switch

- 1 Gbit/sec from node to rack switch

Yahoo Hadoop Architecture

Hadoop at Yahoo has 36 different Hadoop clusters spread across Apache HBase, Storm, and YARN, totaling 60,000 servers made from hundreds of different hardware configurations built up over generations. Yahoo runs the largest multi-tenant Hadoop installation globally with a broad set of use cases, and it runs 850,000 Hadoop jobs daily.

Last.FM Hadoop Architecture

Last.FM is an online community of music lovers and an internet radio website. It streams music from artists such as BTS, Taylor Swift, and Billie Eilish, and it also recommends music and events. Approximately 25 million people use Last.FM every month and generate large amounts of data.

Why does Last.FM need Hadoop?

Given that millions of people use Last.FM, many of them must be streaming a song right now, as you are reading our blog. This information is relevant to the website, which needs to store, manage, and process it. That is why Last.FM adopted Hadoop, as it solved many of these problems. Last.FM started using Apache's framework in early 2006 and put it into production a few months later. Hadoop now plays a vital role in Last.FM's infrastructure, which has two Hadoop clusters with over 50 machines, 300 cores, and 100 TB of disk space. About a hundred daily jobs, including music chart evaluation, A/B tests, and log file analysis, run on the Hadoop clusters.

Let us now explore a few advantages that the website got to enjoy after implementing Hadoop:

- Hadoop's HDFS component provided cost-effective redundant backups for the files (user streaming data, weblogs, etc.) saved on the distributed filesystem.

- As Hadoop runs on commodity hardware (affordable computer hardware), it was easy for Last.FM to achieve scalability.

- As Hadoop is freely available, it fit within the company's financial constraints. The Apache Hadoop community is highly active, its code is open source, and it was easy for Last.FM to customize the framework according to its needs.

- Hadoop offers a flexible framework for executing distributed computing algorithms, with a relatively gentle learning curve.

After reading these three case studies of Hadoop implementation, you must be curious to explore more companies that use Apache Hadoop. You can go through the following list to explore more: PoweredBy - HADOOP2 - Apache Software Foundation. The list contains institutions that use the Hadoop framework for education and production purposes. You will find several tech giants like Adobe, Amazon, Alibaba, AOL, and eBay, and even educational institutions like ETH Zurich Systems Group, Cornell University Web Lab, and ESPOL University.

To learn more about Hadoop architecture and its applications, it is recommended to explore Top Hadoop Projects for Practice with Source Code. You can also check out the ProjectPro Repository, which contains 270+ solved end-to-end industry-grade projects based on data science and big data. So, don't wait, and get started on mastering big data and Hadoop frameworks.


FAQs on Hadoop Architecture

1. What are the four main components of the Hadoop architecture?

The four main components of the Hadoop architecture are:

- Hadoop HDFS

- Hadoop YARN (Yet Another Resource Negotiator)

- Hadoop MapReduce, and

- Hadoop Common

2. Which big data architecture is Hadoop based on?

Hadoop architecture is based on a master-slave architecture: one master node and multiple slave nodes.

3. What is Hadoop used for?

Hadoop is used for faster and more efficient big data storage with support for parallel processing and easy retrieval of big data.

4. What is Hadoop cluster architecture?

The Hadoop cluster architecture typically consists of a single master node and multiple slave nodes that collaborate to manage and analyze large amounts of data in a distributed and fault-tolerant manner.

About the Author

ProjectPro

ProjectPro is the only online platform designed to help professionals gain practical, hands-on experience in big data, data engineering, data science, and machine learning related technologies. Having over 270+ reusable project templates in data science and big data with step-by-step walkthroughs,