Impala vs Hive: Difference between Sql on Hadoop components

Impala vs Hive -Apache Hive is a data warehouse infrastructure built on Hadoop whereas Cloudera Impala is open source analytic MPP database for Hadoop.

Hadoop has continued to grow and develop ever since it was introduced in the market 10 years ago. Every new release and abstraction on Hadoop is used to improve one or the other drawback in data processing, storage and analysis. Apache Hive was introduced by Facebook to manage and process the large datasets in the distributed storage in Hadoop.

Create A Data Pipeline based on Messaging Using PySpark Hive

Downloadable solution code | Explanatory videos | Tech Support

Start ProjectApache Hive is an abstraction on Hadoop MapReduce and has its own SQL like language HiveQL. Cloudera Impala was developed to resolve the limitations posed by the low interaction of Hadoop Sql. Cloudera Impala provides low latency high-performance SQL-like queries to process and analyze data with only one condition that the data be stored on Hadoop clusters.

Data explosion in the past decade has not disappointed big data enthusiasts one bit. It has thrown up a number of challenges and created new industries which require continuous improvements and innovations in the way we leverage technology.

Big Data keeps getting bigger. It continues to pressurize existing data querying, processing, and analytic platforms to improve their capabilities without compromising on the quality and speed. A number of comparisons have been drawn and they often present contrasting results. Cloudera Impala and Apache Hive are being discussed as two fierce competitors vying for acceptance in the database querying space. While Hadoop has clearly emerged as the favorite data warehousing tool, the Cloudera Impala vs Hive debate refuses to settle down.

Get FREE Access to Data Analytics Example Codes for Data Cleaning, Data Munging, and Data Visualization

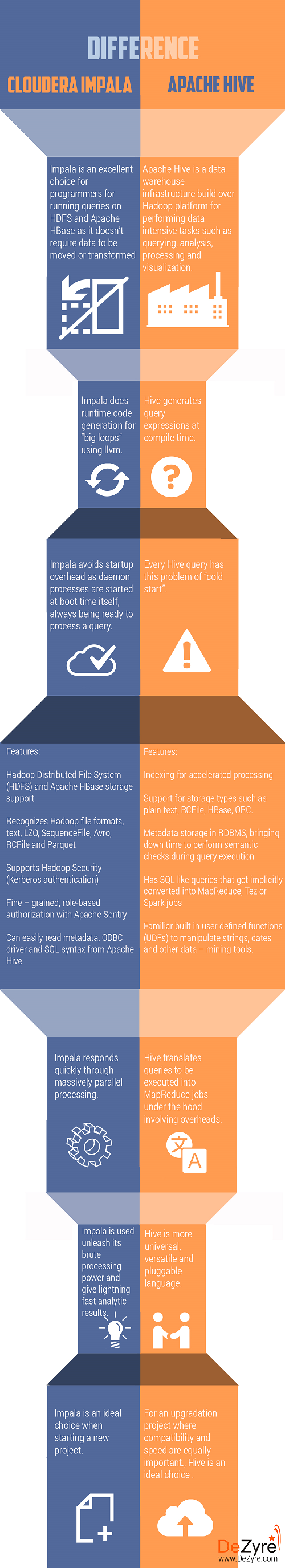

Hive vs Impala -Infographic

We try to dive deeper into the capabilities of Impala , Hive to see if there is a clear winner or are these two champions in their own rights on different turfs. We begin by prodding each of these individually before getting into a head-to-head comparison.

New Projects

What is Impala?

Step aside, the SQL engines claiming to do parallel processing! Impala’s open source Massively Parallel Processing (MPP) SQL engine is here, armed with all the power to push you aside. The only condition it needs is data be stored in a cluster of computers running Apache Hadoop, which, given Hadoop’s dominance in data warehousing, isn’t uncommon. Cloudera Impala was announced on the world stage in October 2012 and after a successful beta run, was made available to the general public in May 2013.

Cloudera Impala is an excellent choice for programmers for running queries on HDFS and Apache HBase as it doesn’t require data to be moved or transformed prior to processing. Cloudera Impala easily integrates with the Hadoop ecosystem, as its file and data formats, metadata, security and resource management frameworks are the same as those used by MapReduce, Apache Hive, Apache Pig, and other Hadoop software. It is architected specifically to assimilate the strengths of Hadoop and the familiarity of SQL support and multi-user performance of the traditional database. Its unified resource management across frameworks has made it the de facto standard for open-source interactive business intelligence tasks.

Ace Your Next Job Interview with Mock Interviews from Experts to Improve Your Skills and Boost Confidence!

Cloudera Impala has the following two technologies that give other processing languages a run for their money:

Columnar Storage

Data is stored in a columnar fashion which achieves a high compression ratio and efficient scanning.

Get More Practice, More Big Data and Analytics Projects, and More guidance.Fast-Track Your Career Transition with ProjectPro

Tree Architecture

This is fundamental to attaining a massively parallel distributed multi–level serving tree for pushing down a query to the tree and then aggregating the results from the leaves.

Image Credit:cwiki.apache.org

Impala massively improves on the performance parameters as it eliminates the need to migrate huge data sets to dedicated processing systems or convert data formats prior to analysis. Salient features of Impala include:

- Hadoop Distributed File System (HDFS) and Apache HBase storage support

- Recognizes Hadoop file formats, text, LZO, SequenceFile, Avro, RCFile, and Parquet

- Supports Hadoop Security (Kerberos authentication)

- Fine – grained, role-based authorization with Apache Sentry

- Can easily read metadata, ODBC driver, and SQL syntax from Apache Hive

Impala’s rise within a short span of a little over 2 years can be gauged from the fact that Amazon Web Services and MapR have both added support for it.

Here's what valued users are saying about ProjectPro

Ameeruddin Mohammed

ETL (Abintio) developer at IBM

Anand Kumpatla

Sr Data Scientist @ Doubleslash Software Solutions Pvt Ltd

Not sure what you are looking for?

View All ProjectsApache Hive

Initially developed by Facebook, Apache Hive is a data warehouse infrastructure build over Hadoop platform for performing data intensive tasks such as querying, analysis, processing and visualization. Apache Hive is versatile in its usage as it supports the analysis of large datasets stored in Hadoop’s HDFS and other compatible file systems such as Amazon S3. To keep the traditional database query designers interested, it provides an SQL – like language (HiveQL) with schema on read and transparently converts queries to MapReduce, Apache Tez and Spark jobs. Other features of Hive include:

- Indexing for accelerated processing

- Support for different storage types such as plain text, RCFile, HBase, ORC, and others

- Metadata storage in RDBMS, bringing downtime to perform semantic checks during query execution

- Has SQL like queries that get implicitly converted into MapReduce, Tez or Spark jobs

- Familiar built-in user-defined functions (UDFs) to manipulate strings, dates and other data – mining tools.

If you are looking for an advanced analytics language which would allow you to leverage your familiarity with SQL (without writing MapReduce jobs separately) then Apache Hive is definitely the way to go. HiveQL queries anyway get converted into a corresponding MapReduce job which executes on the cluster and gives you the final output. Hive (and its underlying SQL like language HiveQL) does have its limitations though and if you have really fine-grained, complex processing requirements at hand you would definitely want to take a look at MapReduce.

Build Professional SQL Projects for Data Analysis with ProjectPro

Difference between Hive and Impala -

Hive is written in Java. Hive provides a SQL-like interface to allow querying of data from various databases and file systems within the Hadoop ecosystem. SQL queries have to be implemented in the MapReduce Java API to allow querying of the data. Hive provides an SQL abstraction to perform SQL-like queries(known as HiveQL) in Java without having to implement queries in the low-level Java API. Impala is written in C++ and Java. Impala uses the same syntax as Hive and provides the necessary abstraction to perform SQL-like queries without worrying about the low-level APIs in Java or C++, which run the query execution.

Hive vs Impala - Performance

Hive is built over MapReduce and hence is slower than Impala for less complex queries due to many I/O operations that have to run, for single query execution. Hence, Hive is primarily used for batch processing and ETLs (extract, transform, load). Hive is better able to handle longer-running, more complex queries on much larger datasets. Since Impala is not built over the MapReduce algorithms, the latency is reduced allowing Impala to run faster than Hive. Impala supports in-memory data processing, which means that it accessed data that is stored on the Hadoop data nodes without movement of data. Impala has a very efficient run-time execution framework, inter-process communication, parallel processing and metadata caching.

Impala has been shown to have a performance lead over Hive by benchmarks of both Cloudera (Impala’s vendor) and AMPLab. Benchmarks have been observed to be notorious about biasing due to minor software tricks and hardware settings. However, it is worthwhile to take a deeper look at this constantly observed difference. The following reasons come to the fore as possible causes:

- Cloudera Impala being a native query language, avoids startup overhead which is commonly seen in MapReduce/Tez based jobs (MapReduce programs take time before all nodes are running at full capacity). In Hive, every query has this problem of “cold start” whereas Impala daemon processes are started at boot time itself, always being ready to process a query.

- Hadoop reuses JVM instances to reduce startup overhead partially but introduces another problem when large haps are in use. Cloudera benchmark have 384 GB memory which is a big challenge for the garbage collector of the reused JVM instances.

- MapReduce materializes all intermediate results, which enables better scalability and fault tolerance (while slowing down data processing). Impala streams intermediate results between executors (trading off scalability).

- Hive generates query expressions at compile time whereas Impala does runtime code generation for “big loops”.

-

Apache Hive might not be ideal for interactive computing whereas Impala is meant for interactive computing.

-

Hive is batch based Hadoop MapReduce whereas Impala is more like MPP database.

-

Hive supports complex types but Impala does not.

-

Apache Hive is fault-tolerant whereas Impala does not support fault tolerance. When a hive query is run and if the DataNode goes down while the query is being executed, the output of the query will be produced as Hive is fault tolerant. However, that is not the case with Impala. If a query execution fails in Impala it has to be started all over again.

Build an Awesome Job Winning Project Portfolio with Solved End-to-End Big Data Projects

Hive transforms SQL queries into Apache Spark or Apache Hadoop jobs making it a good choice for long-running ETL jobs for which it is desirable to have fault tolerance because developers do not want to re-run a long-running job after executing it for several hours.

Image Credit : csdn.net

The above graph demonstrates that Cloudera Impala is 6 to 69 times faster than Apache Hive. To conclude, Impala does have a number of performance-related advantages over Hive but it also depends upon the kind of task at hand. That being said, Jamie Thomson has found some really interesting results through dumb querying published on sqlblog.com, especially in terms of execution time. For all its performance related advantages Impala does have few serious issues to consider. Being written in C/C++, it will not understand every format, especially those written in java. If you are starting something fresh then Cloudera Impala would be the way to go but when you have to take up an upgradation project where compatibility becomes as important a factor as (or may be more important than) speed, Apache Hive would nudge ahead.

In practical terms, Apache Hive and Cloudera Impala need not necessarily be competitors. As both- Hive Hadoop, Impala have a MapReduce foundation for executing queries, there can be scenarios where you are able to use them together and get the best of both worlds – compatibility and performance. Hive is the more universal, versatile, and pluggable language. Once data integration and storage has been done, Cloudera Impala can be called upon to unleash its brute processing power and give lightning-fast analytic results.

Hive Vs Impala - Use cases

Apache Impala, supported by the Cloudera Enterprise, was primarily designed for speed. It is written in C++, a CPU-efficient language that allows fast query execution and metadata caching. Impala is optimized to execute queries at low latency. Impala has also been designed with a very efficient execution framework at run-time, as it makes use of code generation, inter-process communication, massive support for parallelism and performs metadata caching. This makes the query engine impala a perfect choice to work on a data mart. A data mart is a subset of a data warehouse that is focused on a specific functional area of an organization. Data warehouses span the entire enterprise, whereas data marts are usually specific to a line of business or a particular department. Due to Impala’s efficient run-time execution framework, Impala is a good choice for working with ad-hoc queries and allows exploring the data in an iterative manner. Even if the query is changed several times at a moment’s notice, Impala provides a very speedy response time.

Apache Hive was primarily built to handle sophistication. This makes it a little more difficult for beginners to get comfortable with it. However, Hive is very effective when it comes to running complex queries, possibly requiring heavy transformations and/or multiple joins. The latency in Hive is higher since queries take longer to execute since queries go through planning and ramp-up prior to execution. Hive is also very fault-tolerant. If a part of a long-running query fails, Hive will ensure that this part of the query is reassigned and tried again. Hive supports query federation, where queries are allowed to run across several components and databases. Due to the level of sophistication provided by Hive, it is a good choice for the implementation of data warehouses or for EDW (enterprise data warehouse) use cases. EDWs generally demand workloads that require taking multiple dimensions into consideration. In many cases, EDWs are required to process queries that are much more complex than those processed by data marts. These queries involve complex data types, scheduled queries, and orchestration of queries for regular data extraction and to populate data marts. Hive is better able to handle complex queries and longer-running queries on large-scale datasets. Hive’s latency is not suitable for ad-hoc queries or for interactive computing, as its latency may slow the queries down when compared to Impala.

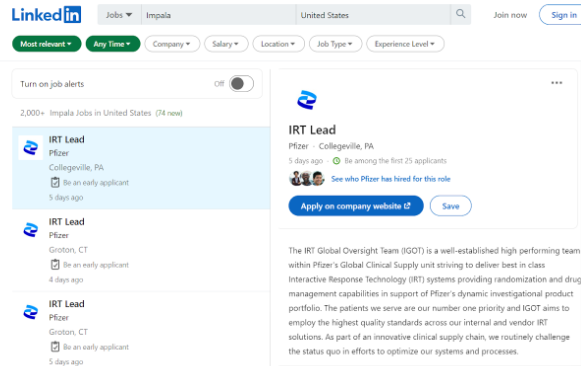

Hive vs Impala - Job Market

In the job market, Hive seems to be more popular than Impala. From the screenshots above, here is data according to LinkedIn for job openings in the United States for engineers with Hive and Impala skills. We can see that there are over 12,000 job openings for engineers with Hive skills. For Impala, we can see a little over 2000 job openings. But one has to keep in mind that these are jobs which involve a specialization in these fields.

These two screenshots are taken from Payscale, and give us an idea of the average salary one can expect for having a good skill set in Apache Hive and with Cloudera Impala. The average salary in the United States for professionals with a good understanding of Apache Hive is $101, 931 per annum and it is $91,123 per annum for professionals with a good understanding of Cloudera Impala.

Hive vs Impala - Interview Questions

If you are preparing for a job interview that requires you to be thorough with Apache Hive, here are a few questions you should be prepared to answer.

-

What is Hive and why do we need it?

-

What are the different types of tables that are available in Hive?

-

Is Hive suitable for OLTP systems? Explain your answer.

-

What are metastores in Hive?

-

What are the differences between Pig and Hive?

-

What is the difference between HBase and Hive?

-

Explain the different types of partitioning in Hive.

-

How is bucketing different from partitioning in Hive?

-

What are the components of a query processor in Hive?

-

Explain the different types of join that can be used in Hive.

-

How does data transfer happen from HDFS to Hive?

-

Mention the different components of the Hive architecture.

-

How can you optimize Hive performance?

-

What is the use of explode in Hive?

-

By default, where does the data in Hive get stored?

-

How does Hive maintain its fault tolerance?

-

What is the purpose of Hcatalog?

-

Is it possible to delete the DBPROPERTY in Hive?

-

How can you list all the databases which begin with the letter ‘k’?

-

What do you understand by the term ‘schema on read’?

You can refer to the following article for a detailed list of interview Questions and Answers for Hive.

Here is a list of questions to be prepared for if you are attending a job interview and Cloudera Impala is an important skill for that particular job.

-

What is Impala and why is it needed?

-

What are some important features of Impala?

-

What are the components of Impala?

-

How can data access be controlled in Impala?

-

What are distinct operators in Impala?

-

How can one perform troubleshooting for Impala?

-

Mention some differences between Impala and SQL.

-

What are some use cases where Impala is preferred when compared to Hive?

-

Can Impala be used for complex query processing?

-

Does Impala use caching?

-

Is MapReduce required for Impala?

-

What is Impala’s aggregation strategy?

-

Differentiate between HBase and Impala.

-

How does Impala process join queries on large tables?

-

What is the maximum number of rows that are supported in a table?

-

How does Impala handle errors?

-

Mention some use cases of Impala.

-

How does Impala manage metadata?

-

Does Impala support UPDATE statements?

-

What happens if the return value of a query data set exceeds the amount of available memory?

Hive vs. Impala vs. Spark

|

Apache Hive |

Cloudera Impala |

Apache Spark SQL |

|

|

Latency |

Hive is built on Hadoop’s MapReduce and hence has a higher latency when it comes to processing queries. Hive was built mainly for sophistication and not speed. |

Impala was built primarily for speed and hence has a very low latency. |

Spark is a very fast query execution engineer and can run queries upto 10-100 faster than MapReduce. |

|

Throughput |

Among the three, Hive has the lowest throughput. |

Among the three, Impala has the highest throughput. |

Spark has a higher throughput than Hive, but not as high as that of Impala. Impala’s throughput is almost seven times that of Spark. |

|

Use cases |

Hive is ideal for situations where multiuser support is required and complex queries which has to span multiple databases are frequently performed. |

Impala is best suited for business interactive workloads where a low latency is required, and queries have to be interactive. |

Spark SQL is suitable for building Spark pipelines. The data here is primarily used for analytics purposes. |

|

Fault tolerance |

Hive can recover from mid-query faults. |

Impala is not fault-tolerant. If query execution fails mid-query, then the query has to be executed again. |

Spark is able to recover from mid-query faults. |

|

Popularity (according to DB engine) |

As of July 2021, Hive has a total score of 82.68 and is ranked at number 14. |

As of July 2021, Impala has a total score of 19.56 and is ranked at number 36. |

As of July 2021, Spark SQL has a total score of 21.39 and is ranked at number 34. |

|

Query complexity |

Hive is built to handle long running queries which require multiple transformations and joins. |

Impala is built to handle shorter queries on large data sets, but due to its low latency, it is ideal for interactive computing. |

Spark provides support for both short and long running queries. |

Related Posts

About the Author

ProjectPro

ProjectPro is the only online platform designed to help professionals gain practical, hands-on experience in big data, data engineering, data science, and machine learning related technologies. Having over 270+ reusable project templates in data science and big data with step-by-step walkthroughs,