Python Chatbot Project - Learn to Build a Chatbot from Scratch

Python Chatbot Project Machine Learning - Explore the chatbot implementation steps in detail to learn how to build a chatbot in Python from scratch.

The chatbot market is anticipated to grow at a CAGR of 23.5%, reaching USD 10.5 billion by the end of 2026.

Facebook has over 300,000 active chatbots.

According to an Uberall report, 80% of customers have had a positive experience using a chatbot.

According to IBM, organizations spend over $1.3 trillion annually to address novel customer queries, and chatbots can help cut this cost by as much as 30%.

No doubt, chatbots are our new friends and are projected to remain a major technology trend in AI. Chatbots can be fun: when built well, they make tedious things easy and entertaining. So let's kickstart the learning journey with a hands-on Python chatbot project that will teach you, step by step, how to build a chatbot from scratch in Python.

Table of Contents

- How to build a Python Chatbot from Scratch?

- How to Build a Chatbot in Python - Concepts to Learn Before Writing Simple Chatbot Code in Python

- How to Create a Chatbot in Python from Scratch- Here’s the Recipe

- Step-1: Connecting with Google Drive Files and Folders

- Step-2: Importing Relevant Libraries

- Step-3: Reading the JSON file

- Step-4: Identifying Feature and Target for the NLP Model

- Step-5: Making the data Machine-friendly

- Step-6: Building the Neural Network Model

- Step-7: Pre-processing the User’s Input

- Step-8: Calling the Relevant Functions and interacting with the ChatBot

How to build a Python Chatbot from Scratch?

Did you recently try to ask Google Assistant (GA) what’s up? Well, I did, and here is what GA said.

Me: Hey Google! What’s up?

GA: Hey! I’ve been looking into ways to stay safe. Wearing a mask in public, washing your hands, and social distancing slows the spread of coronavirus 😷. I hope you and your loved ones are safe and healthy.

What a sweet and kind reply from GA! In case you don’t know, Google Assistant is an advanced chatbot, which the Oxford English Dictionary defines as “a computer program designed to simulate conversation with human users, especially over the internet.” Chatbots are one of the most popular applications of Natural Language Processing (NLP), the exciting subdomain of Artificial Intelligence that deals with the interaction between computers and humans using natural language. In this Python chatbot tutorial, we’ll use NLP libraries to learn how to make a chatbot from scratch in Python.

Image Credit : freepik.com

How to Build a Chatbot in Python - Concepts to Learn Before Writing Simple Chatbot Code in Python

Before we dive into technicalities, let me reassure you: building your own chatbot with Python is like cooking chickpea nuggets. You may have to work a little hard to prepare for it, but the result will definitely be worth it.

BUT…

Understanding the recipe requires you to understand a few terms in detail. Don’t worry, we’ll help you with them, but if you think you already know them, you may jump directly to the Recipe section.

So, these are the three things that you need to know beforehand to learn how to create a chatbot in Python from scratch -

1. Neural Network

2. Bag-of-Words Model

3. Lemmatization

Here's what valued users are saying about ProjectPro

Savvy Sahai

Data Science Intern, Capgemini

Ed Godalle

Director Data Analytics at EY / EY Tech

Not sure what you are looking for?

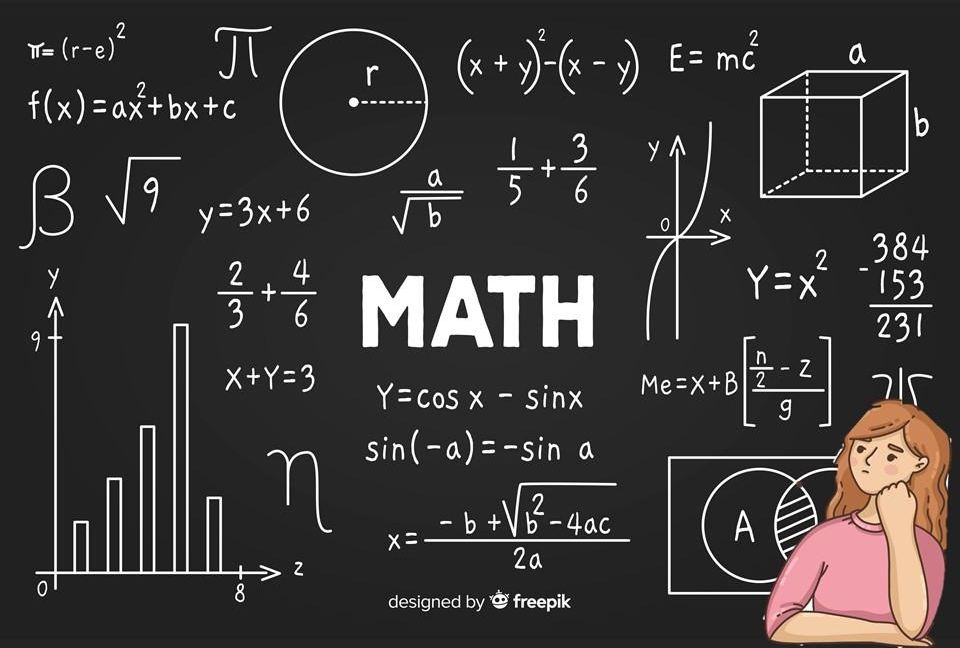

View All ProjectsNeural Network

It is a deep learning algorithm loosely inspired by the way neurons in our brain process information (hence the name). It is widely used to learn the relationship between the input features and the corresponding output in a dataset. Here is the basic neural network architecture:

Image Credit : alexlenail.me/NN-SVG/

Don’t be afraid of this complicated neural network architecture image. We’ll simplify things for you.

The purple circles represent the input vector x, whose components xᵢ, where i = 1, 2, …, D, are the features of one sample in our dataset. The blue circles are the neurons of the hidden layers. These are the layers that learn the math required to relate our input to the output. Finally, the pink circles form the output layer. The size of the output layer depends on the number of different classes that we have. For example, say we have a 5x4 dataset: 5 input vectors, each with a value for 4 features, A, B, C, and D. Assume that we want to classify each row as good or bad, using the number 0 to represent good and 1 to represent bad. Then the neural network should have 4 neurons in the input layer (one per feature) and 2 neurons in the output layer (one per class).
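The layer sizes in this example come straight from the shapes of the data. Here is a minimal NumPy sketch with made-up feature values (hypothetical, for illustration only): the 4 feature columns fix the number of input neurons, and one-hot encoding the 0/1 labels gives one column, and hence one output neuron, per class.

```python
import numpy as np

# Hypothetical 5x4 dataset: 5 rows, one value for each feature A, B, C, D
X = np.array([
    [0.2, 1.0, 3.1, 0.0],
    [1.5, 0.3, 2.2, 1.0],
    [0.9, 0.8, 0.1, 0.0],
    [2.4, 1.7, 1.3, 1.0],
    [0.0, 0.5, 2.8, 0.0],
])
# Labels: 0 = good, 1 = bad
y = np.array([0, 1, 0, 1, 0])

# One-hot encode the labels: one column (and one output neuron) per class
n_classes = 2
Y = np.eye(n_classes)[y]

n_input_neurons = X.shape[1]   # one input neuron per feature
n_output_neurons = Y.shape[1]  # one output neuron per class
print(n_input_neurons, n_output_neurons)  # 4 2
```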


Okay, so now that you have a rough idea of the deep learning algorithm, it is time that you plunge into the pool of mathematics related to this algorithm.

Image Credit: freepik.com


The Neural Network algorithm involves two steps:

- Forward Pass through a Feed-Forward Neural Network

- Backpropagation of Error to train Neural Network


1. Forward Pass through a Feed-Forward Neural Network

This step involves connecting the input layer to the output layer through a number of hidden layers. The neurons of the first layer (l=1) receive a weighted sum of the elements of the input vector (xᵢ) along with a bias term b, as shown in Fig. 2. Each neuron then transforms this weighted sum, a, using a differentiable, nonlinear activation function h(·) to give its output z.

Hidden layers of neural network architecture.

Image Credit : alexlenail.me/NN-SVG/

For a neuron in a subsequent layer, the input is a weighted sum of the outputs of all the neurons of the previous layer along with a bias term. This has been represented in Fig. 3. The neurons of the subsequent layers then transform the input they receive using their activation functions.

This process continues until the outputs of the neurons in the last layer (l=L) have been evaluated. These output-layer neurons are responsible for identifying the class the input vector belongs to: the input vector is labeled with the class whose corresponding neuron has the highest output value.
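The forward pass above can be sketched in a few lines of NumPy. The weights below are hand-picked for illustration rather than learned, the hidden layer uses ReLU, and the output layer is left linear only to keep the arithmetic easy to follow; the predicted class is simply the output neuron with the highest value.

```python
import numpy as np

def relu(a):
    return np.maximum(0.0, a)

def identity(a):
    return a

def forward(x, layers):
    """Pass one input vector through a list of (W, b, h) layers.

    W has shape (n_out, n_in), b has shape (n_out,), h is the activation.
    """
    z = x
    for W, b, h in layers:
        a = W @ z + b  # weighted sum of the previous layer's outputs plus bias
        z = h(a)       # activation gives this layer's outputs
    return z

# Hand-picked (hypothetical) weights: 4 inputs -> 3 hidden neurons -> 2 outputs
W1 = np.array([[1.0, 0.0, 0.0, 0.0],
               [0.0, 1.0, 0.0, 0.0],
               [0.0, 0.0, -1.0, 0.0]])
b1 = np.zeros(3)
W2 = np.array([[1.0, 0.0, 0.0],
               [0.0, 1.0, 0.0]])
b2 = np.array([0.5, 0.0])

x = np.array([0.2, 1.0, 3.1, 0.0])
out = forward(x, [(W1, b1, relu), (W2, b2, identity)])
predicted_class = int(np.argmax(out))  # neuron with the highest output wins
```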


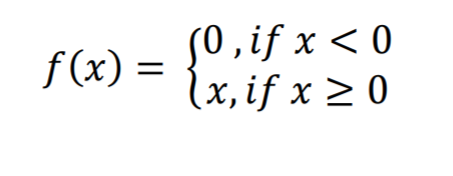

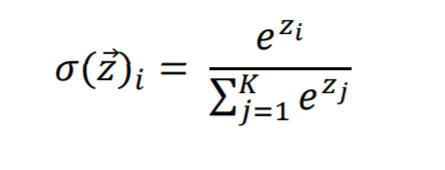

Please note that the activation functions can differ from layer to layer. The two activation functions that we will use for our ChatBot, which are also among the most commonly used, are the Rectified Linear Unit (ReLU) function and the Softmax function. The former will be used for the hidden layers and the latter for the output layer. Softmax is the usual choice at the output because its outputs are non-negative and sum to 1, so they can be read as class probabilities. The ReLU function is defined as:

$$h(a) = \max(0, a)$$

And the softmax function, for the kᵗʰ of the N_L neurons in the output layer, is defined as:

$$h(a_k) = \frac{e^{a_k}}{\sum_{j=1}^{N_L} e^{a_j}}$$
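Both activation functions are one-liners in NumPy. In this sketch, softmax subtracts the largest input before exponentiating; that common trick leaves the result unchanged (the extra factor cancels in the ratio) but avoids overflow for large inputs.

```python
import numpy as np

def relu(a):
    # max(0, a), applied element-wise
    return np.maximum(0.0, a)

def softmax(a):
    # exp(a_k) / sum_j exp(a_j); shifting by max(a) keeps exp from overflowing
    e = np.exp(a - np.max(a))
    return e / e.sum()

a = np.array([2.0, -1.0, 0.5])
print(relu(a))     # negative inputs become 0
p = softmax(a)
print(p, p.sum())  # all entries positive, summing to 1 up to rounding
```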

Now, it’s time to move on to the second step of the algorithm that is used in building this chatbot application project.

2. Backpropagation of Error to train Neural Network

This step is the most important one, because the goal of the Neural Network algorithm is to find the set of weights and biases for all the layers that leads to the correct output, and this step is all about finding them. Consider an input vector that has been passed to the network, and say we know that it belongs to class A. Assume the output layer gives the highest value for class B. There is therefore an error in our prediction. Since we can only compute errors at the output, we have to propagate this error backward through the network to learn the correct set of weights and biases.

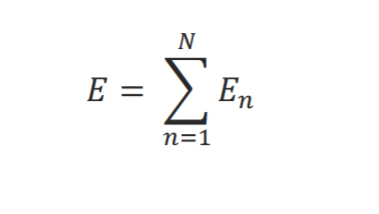

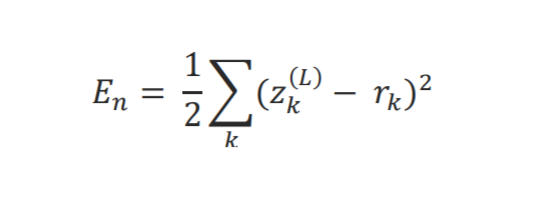

Let us define the error function for the network as the sum of the errors over all N training patterns:

$$E = \sum_{n=1}^{N} E_n$$

Here Eₙ is the error for a single pattern vector xₙ and is defined as

$$E_n = \frac{1}{2}\sum_{k=1}^{N_L} \left(z_k - r_k\right)^2$$

where k = 1, 2, 3, …, N_L; zₖ is the output value of the kᵗʰ neuron of the output layer L, and rₖ is the desired response for the kᵗʰ neuron of the output layer L.

Please note that this is not the only error (or loss) function that can be defined. There are many others, and you can read about the ones Keras provides here: keras.io/api/losses/. There is also a variety of algorithms (optimizers) for minimizing the error, which you can explore in this interesting paper: arxiv.org/1609.04747.pdf. The appropriate loss function and optimizer are chosen depending on the problem at hand.
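To make backpropagation concrete, here is a minimal NumPy sketch for one pattern and a network with one ReLU hidden layer, assuming the sum-of-squares form Eₙ = ½ Σₖ (zₖ − rₖ)² for the error of a single pattern and plain gradient descent. To keep the derivative simple, the output layer here uses a sigmoid activation rather than softmax; the layer sizes, learning rate, and data are arbitrary choices for illustration, not the Adam and cross-entropy setup used later for the chatbot.

```python
import numpy as np

def relu(a):
    return np.maximum(0.0, a)

def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))

def squared_error(z, r):
    # E_n = 1/2 * sum_k (z_k - r_k)^2 for a single pattern
    return 0.5 * np.sum((z - r) ** 2)

def train_step(x, r, W1, b1, W2, b2, lr=0.1):
    """One forward + backward pass; updates the arrays in place, returns E_n before the update."""
    # Forward pass
    a1 = W1 @ x + b1
    z1 = relu(a1)
    a2 = W2 @ z1 + b2
    z2 = sigmoid(a2)
    # Backward pass: error signal at the output, then propagated to the hidden layer
    delta2 = (z2 - r) * z2 * (1.0 - z2)  # dE/da2 (sigmoid derivative is z(1 - z))
    delta1 = (W2.T @ delta2) * (a1 > 0)  # dE/da1 (ReLU derivative is 0 or 1)
    # Gradient-descent updates of weights and biases
    W2 -= lr * np.outer(delta2, z1)
    b2 -= lr * delta2
    W1 -= lr * np.outer(delta1, x)
    b1 -= lr * delta1
    return squared_error(z2, r)

rng = np.random.default_rng(42)
W1, b1 = rng.normal(scale=0.5, size=(3, 4)), np.zeros(3)
W2, b2 = rng.normal(scale=0.5, size=(2, 3)), np.zeros(2)
x = np.array([0.2, 1.0, 3.1, 0.0])
r = np.array([1.0, 0.0])  # desired response: the input belongs to class 0

errors = [train_step(x, r, W1, b1, W2, b2) for _ in range(500)]
print(errors[0], errors[-1])  # the error shrinks as the weights are corrected
```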

Bag-of-Words (BoW) Model

Now, recall from your high school classes that a computer only understands numbers. Therefore, if we want to apply a neural network algorithm to text, we must first convert the text to numbers. One way to achieve this is the Bag-of-Words (BoW) model, one of the most common ways of representing text as numbers so that machine learning algorithms can be applied to it.

Let us try to understand this model through an example. Consider the following 5 sentences:

“Hi! I am Rakesh.”

“Hello! Kiran this side.”

“Hi! My name is Kriti.”

“Hey! I am Leena.”

“Hello everyone! Myself Srishti.”

Now, say these sentences constitute our input dataset. For the BoW model, we first need to prepare a vocabulary for our dataset, that is, we need to find the unique words in our sentences, ignoring case. In our case, the vocabulary would look like this:

hi, i, am, rakesh, hello, kiran, this, side, my, name, is, kriti, hey, leena, everyone, myself, srishti.

After this, we represent each sentence using this vocabulary. Since our vocabulary has 17 words, we will represent each sentence using 17 numbers, marking ‘1’ where the word is present in the sentence and ‘0’ where it is absent.

“Hi! I am Rakesh.”: 1 1 1 1 0 0 0 0 0 0 0 0 0 0 0 0 0

“Hello! Kiran this side.”: 0 0 0 0 1 1 1 1 0 0 0 0 0 0 0 0 0

“Hi! My name is Kriti.”: 1 0 0 0 0 0 0 0 1 1 1 1 0 0 0 0 0

“Hey! I am Leena.”: 0 1 1 0 0 0 0 0 0 0 0 0 1 1 0 0 0

“Hello everyone! Myself Srishti.”: 0 0 0 0 1 0 0 0 0 0 0 0 0 0 1 1 1
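Here is a short, dependency-free Python sketch of the same procedure. The regular-expression tokenizer below is a simple stand-in for a real tokenizer: it lowercases each sentence and keeps only runs of letters, which drops the punctuation. Because it builds the vocabulary in order of first appearance, the vectors it produces match the ones listed above.

```python
import re

sentences = [
    "Hi! I am Rakesh.",
    "Hello! Kiran this side.",
    "Hi! My name is Kriti.",
    "Hey! I am Leena.",
    "Hello everyone! Myself Srishti.",
]

def tokenize(sentence):
    # Lowercase and keep only runs of letters, which drops the punctuation
    return re.findall(r"[a-z]+", sentence.lower())

# Vocabulary: unique words in order of first appearance
vocab = []
for s in sentences:
    for w in tokenize(s):
        if w not in vocab:
            vocab.append(w)

def bag_of_words(sentence, vocab):
    # 1 where the vocabulary word occurs in the sentence, 0 where it does not
    tokens = set(tokenize(sentence))
    return [1 if w in tokens else 0 for w in vocab]

vectors = [bag_of_words(s, vocab) for s in sentences]
for s, v in zip(sentences, vectors):
    print(s, v)
```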

Now, notice that we haven’t considered punctuation while converting our text into numbers. That is because punctuation is not very significant here, especially when the dataset is large. We thus have to preprocess our text before applying the Bag-of-Words model. A few of the basic preprocessing steps are converting the whole text into lowercase, removing punctuation, correcting misspelled words, and deleting helping verbs. Another such step is lemmatization, which we’ll understand in the next section.


Lemmatization

Most of us, while searching for a word in a dictionary, would have noticed that it lists the root form of the word. For example, if we are looking for the word ‘hibernating’, we will find it in the dictionary as ‘hibernate’. Similarly, we reduce the words in our text to their root form so that different forms of the same word map to a single vocabulary entry, which keeps the vocabulary small and saves computation. This process is called lemmatization. For example,

partying 🡪 party

eats 🡪 eat

studies 🡪 study
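This project relies on NLTK's WordNetLemmatizer, which looks words up in the WordNet dictionary (for verbs you would call it as WordNetLemmatizer().lemmatize(word, pos="v")). To show the idea without downloading any data, the toy sketch below uses a tiny hypothetical lexicon and a few hypothetical suffix rules: it strips or replaces a suffix and accepts the candidate only if it is a known dictionary form.

```python
# Hypothetical mini-lexicon of root (dictionary) forms; a real lemmatizer such as
# NLTK's WordNetLemmatizer consults the full WordNet dictionary instead.
LEXICON = {"party", "eat", "study", "hibernate"}

# Hypothetical suffix rules, tried in order: (suffix to strip, replacement)
RULES = [("ies", "y"), ("ing", ""), ("ing", "e"), ("es", ""), ("s", "")]

def lemmatize(word):
    word = word.lower()
    if word in LEXICON:
        return word
    for suffix, replacement in RULES:
        if word.endswith(suffix):
            candidate = word[: -len(suffix)] + replacement
            # Accept a candidate only if it is a real dictionary form
            if candidate in LEXICON:
                return candidate
    return word  # unknown words are left unchanged

for w in ["partying", "eats", "studies", "hibernating"]:
    print(w, "->", lemmatize(w))
```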

Kudos! You have made it this far. 👏 In the next section of the Python chatbot tutorial, you will find details on how to implement the Python chatbot code. Tighten your laces and get ready for the recipe!

Image Credit : freepik.com

Just as every recipe starts with a list of ingredients, we will proceed in the same fashion. So, here are the ingredients needed for this Python chatbot tutorial.

Chatbot Python Tutorial - How to build a Chatbot from Scratch in Python

Ingredients Needed to Make a Chatbot Application Project:

1. A Google Account for using Google Colab Notebook

2. A JSON file by the name 'intents.json', which will contain all the necessary text that is required to build our chatbot.

How to Create a Chatbot in Python from Scratch- Here’s the Recipe

Let us now explore, step by step, how to create a chatbot in Python.

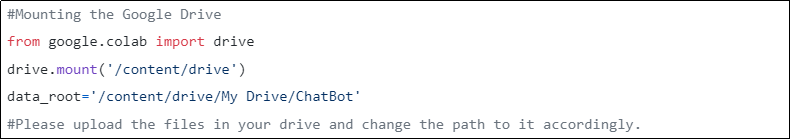

Step-1: Connecting with Google Drive Files and Folders

The first step is to create a folder named 'ChatBot' in your Google Drive. After that, upload the 'intents.json' file to this folder and write the following code in a Google Colab notebook:

This will allow us to access the files that are there in Google Drive.
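A typical way to access Drive from Colab is google.colab.drive.mount(). In the sketch below, the mount point /content/drive and the MyDrive folder name are assumptions based on Colab's usual layout, and mount_drive_if_colab is a hypothetical helper; check the actual path in your own notebook's file browser.

```python
import os

def mount_drive_if_colab(mount_point="/content/drive"):
    """Mount Google Drive when running inside Colab; return False elsewhere."""
    try:
        from google.colab import drive  # this module only exists inside Colab
    except ImportError:
        return False
    drive.mount(mount_point)  # asks you to authorize access to your Drive
    return True

# Assumed location of the uploaded file: Drive's root usually appears as "MyDrive"
INTENTS_PATH = os.path.join("/content/drive", "MyDrive", "ChatBot", "intents.json")
```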

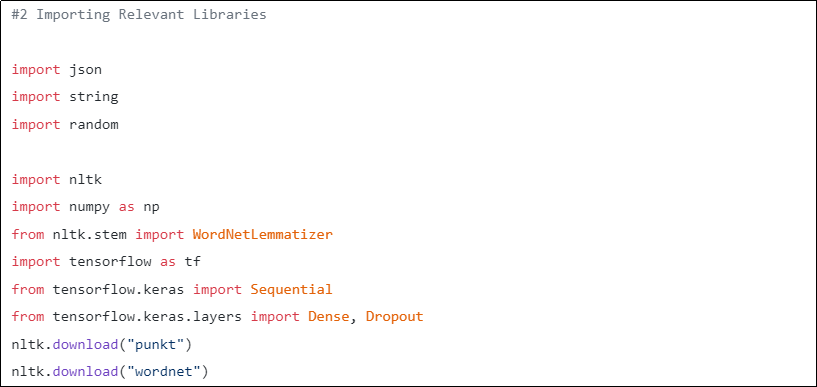

Step-2: Importing Relevant Libraries

The next step is the usual one where we will import the relevant libraries, the significance of which will become evident as we proceed.

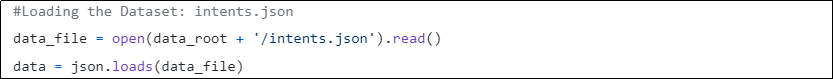

Step-3: Reading the JSON file

It's time to load our data into Python. We will read the contents of the file, parse them with json.loads(), and store the result in a variable named 'data' (type: dict). Here is the code for it:

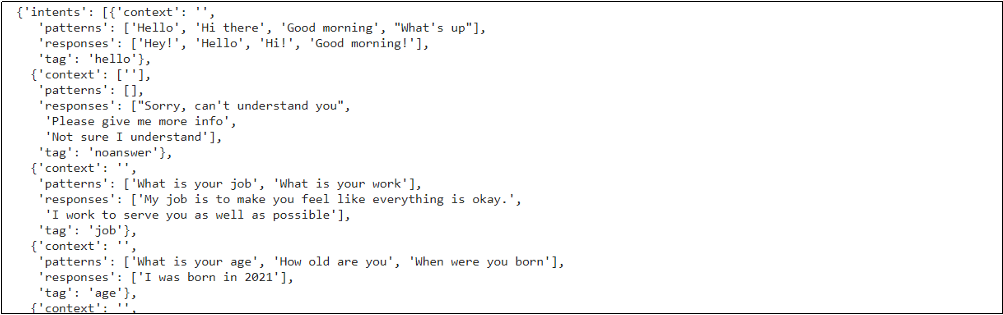

This is what the data that we have imported looks like.

As you can see, the dataset has an object called intents, which contains about 16 intents, each with its own tag, context, patterns, and responses. If you go through the dataset thoroughly, you’ll notice that patterns are examples of the statements we expect from our users, responses are the replies to those statements, and a tag is a one-word summary of the user’s query.
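As a rough illustration of that structure, here is a tiny, made-up intents file parsed with json.loads(). The two intents and their text are hypothetical (the real file has about 16 intents); the key names follow the description above.

```python
import json

# Hypothetical miniature intents.json with the structure described above
raw = """
{"intents": [
  {"tag": "greeting",
   "patterns": ["Hi", "Hello", "Hey there"],
   "responses": ["Hello!", "Hi, how can I help?"],
   "context": [""]},
  {"tag": "goodbye",
   "patterns": ["Bye", "See you later"],
   "responses": ["Goodbye!"],
   "context": [""]}
]}
"""

# With the real file you could write:
#     with open(path) as f:
#         data = json.loads(f.read())
data = json.loads(raw)

for intent in data["intents"]:
    print(intent["tag"], len(intent["patterns"]), "patterns")
```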

Now, the task at hand is to make our machine learn the mapping from patterns to tags so that when the user enters a statement, it can identify the appropriate tag and give one of that tag’s responses as output. The following steps will guide you through this task.


Step-4: Identifying Feature and Target for the NLP Model

Now, we will extract the words from the patterns along with the tag corresponding to each pattern. We do this by iterating over each pattern with a nested for loop and tokenizing it using nltk.word_tokenize. Each pattern is stored in data_X and its corresponding tag in data_Y.

We also create two more lists: words, containing all the tokens, and classes, containing all the tags. Punctuation tokens are kept out of words with a simple conditional statement, and the remaining words are converted into their root forms using NLTK's WordNetLemmatizer(). This is an important step when writing a chatbot in Python because it shrinks the vocabulary, which saves a lot of time when we feed these words to our deep learning model. Finally, duplicates are removed from both lists and the lists are sorted.
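A minimal sketch of this step is below. To keep it runnable without downloading NLTK data, it swaps in a regular-expression tokenizer for nltk.word_tokenize and uses plain lowercasing where the project uses WordNetLemmatizer(); the function name build_training_lists and the sample data are hypothetical.

```python
import re
import string

def tokenize(text):
    # Stand-in for nltk.word_tokenize: words and punctuation marks become separate tokens
    return re.findall(r"\w+|[^\w\s]", text)

def lemmatize(word):
    # Stand-in for WordNetLemmatizer().lemmatize(); here it only lowercases
    return word.lower()

def build_training_lists(data):
    words, classes, data_X, data_Y = [], [], [], []
    for intent in data["intents"]:
        for pattern in intent["patterns"]:
            words.extend(tokenize(pattern))
            data_X.append(pattern)        # feature: the pattern text
            data_Y.append(intent["tag"])  # target: its tag
        if intent["tag"] not in classes:
            classes.append(intent["tag"])
    # Drop punctuation tokens, reduce the rest to root form, then dedupe and sort
    words = sorted(set(lemmatize(w) for w in words if w not in string.punctuation))
    classes = sorted(set(classes))
    return words, classes, data_X, data_Y

sample = {"intents": [
    {"tag": "greeting", "patterns": ["Hi there!", "Hello"], "responses": ["Hi!"]},
    {"tag": "goodbye", "patterns": ["Bye", "See you later."], "responses": ["Bye!"]},
]}
words, classes, data_X, data_Y = build_training_lists(sample)
print(words)    # sorted, deduplicated vocabulary without punctuation
print(classes)  # sorted tags
```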

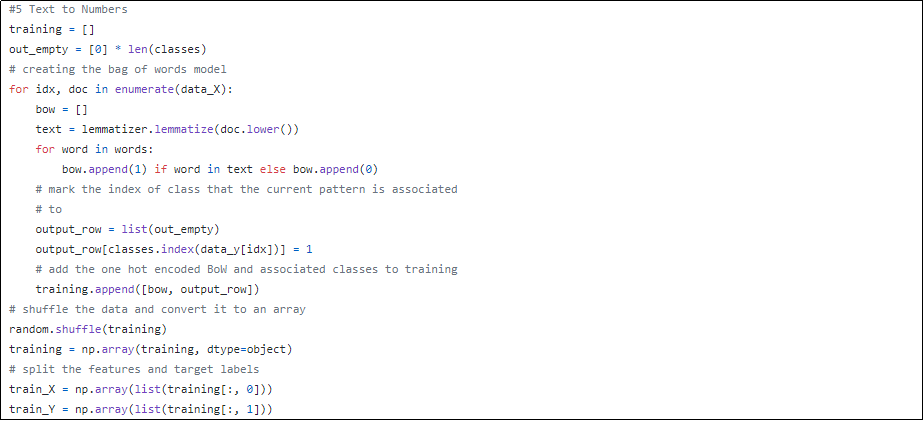

Step-5: Making the data Machine-friendly

In this step, we will convert our text into numbers using the bag-of-words (BoW) model. If you already know this model, you have probably figured out why we built the words and classes lists. And if you don’t, don’t worry, we’ve got your back. 😉

The two lists, words and classes, act as vocabularies for patterns and tags respectively. For each pattern in data_X, we create an array with the same length as words, holding 1 where a vocabulary word is present in the pattern and 0 where it is absent. Similarly, for each tag in data_Y, we create an array with the same length as classes, holding 1 at that tag’s position and 0 everywhere else.

The data has thus been converted into numbers and stored in two arrays: train_X and train_Y where the former represents features and the latter represents target variables.
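A sketch of this encoding is below, using hypothetical sample lists of the kind built in Step-4. It matches each vocabulary word against the pattern's tokens (again using a simple regular-expression tokenizer in place of NLTK) and one-hot encodes the tag. Shuffling the rows before training is a common practice and an assumption here, and the helper name encode is hypothetical.

```python
import random
import re

import numpy as np

def tokenize(text):
    # Stand-in for nltk.word_tokenize + lemmatization: lowercase word tokens only
    return [t.lower() for t in re.findall(r"\w+", text)]

def encode(words, classes, data_X, data_Y, seed=0):
    rows = []
    for pattern, tag in zip(data_X, data_Y):
        tokens = set(tokenize(pattern))
        bow = [1 if w in tokens else 0 for w in words]  # bag-of-words features
        target = [0] * len(classes)
        target[classes.index(tag)] = 1                   # one-hot target
        rows.append((bow, target))
    random.Random(seed).shuffle(rows)  # mix the tags before training
    train_X = np.array([bow for bow, _ in rows])
    train_Y = np.array([target for _, target in rows])
    return train_X, train_Y

words = ["bye", "hello", "hi", "later", "see", "there", "you"]
classes = ["goodbye", "greeting"]
data_X = ["Hi there!", "Hello", "Bye", "See you later."]
data_Y = ["greeting", "greeting", "goodbye", "goodbye"]
train_X, train_Y = encode(words, classes, data_X, data_Y)
print(train_X.shape, train_Y.shape)
```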

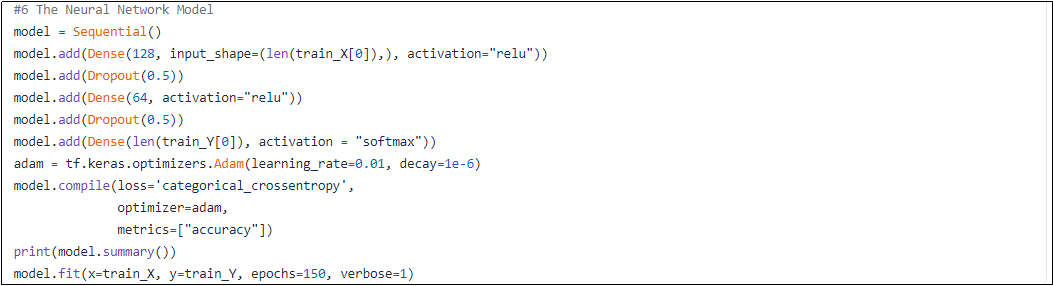

Step-6: Building the Neural Network Model

Next, we will create a neural network using Keras’ Sequential model. The input to this network will be the array train_X created in the previous step. The data then passes through three layers: the first has 128 neurons, the second has 64 neurons, and the last has as many neurons as there are tags, that is, the length of one element of train_Y (obvious, right?). To reach the correct weights, we have chosen the Adam optimizer and defined our error function using categorical cross-entropy. The metric we have chosen is accuracy; Keras offers many other metrics, which you can explore here: Keras. We’ll train the Python chatbot model for about 150 epochs (full passes over the training data) so that it reaches the desired accuracy.


Note that we have also used Dropout, which helps prevent overfitting by randomly dropping a fraction of the neurons’ outputs during training.
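A sketch of this model using the tf.keras API follows. The layer sizes, activations, optimizer, loss, metric, and 150 epochs come from the description above; the dropout rate of 0.5, the learning rate of 0.01, placing a Dropout layer after each hidden layer, and the helper name build_model are assumptions, so tune them for your data.

```python
import numpy as np
from tensorflow import keras

def build_model(n_features, n_classes):
    model = keras.Sequential([
        keras.Input(shape=(n_features,)),
        keras.layers.Dense(128, activation="relu"),
        keras.layers.Dropout(0.5),  # assumed rate: drops 50% of outputs during training
        keras.layers.Dense(64, activation="relu"),
        keras.layers.Dropout(0.5),
        keras.layers.Dense(n_classes, activation="softmax"),  # one neuron per tag
    ])
    model.compile(
        optimizer=keras.optimizers.Adam(learning_rate=0.01),  # assumed learning rate
        loss="categorical_crossentropy",
        metrics=["accuracy"],
    )
    return model

# Quick shape check with dummy data (7 vocabulary words, 2 tags):
# each prediction row is a probability distribution over the tags.
demo = build_model(7, 2)
probs = demo.predict(np.zeros((3, 7)), verbose=0)

# With the arrays from Step-5:
# model = build_model(train_X.shape[1], train_Y.shape[1])
# model.fit(train_X, train_Y, epochs=150, verbose=0)
```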

That’s it. We are now almost done with making our Chatbot. Give yourself a pat on the back because you have made it this far. 👏👏

Image Credit: freepik.com

So, now that we have taught our machine how to link the pattern in a user’s input to a relevant tag, we are all set to test it. But wait: the user will enter their input as a string, right? This means we have to preprocess that input too, because our machine only understands numbers.

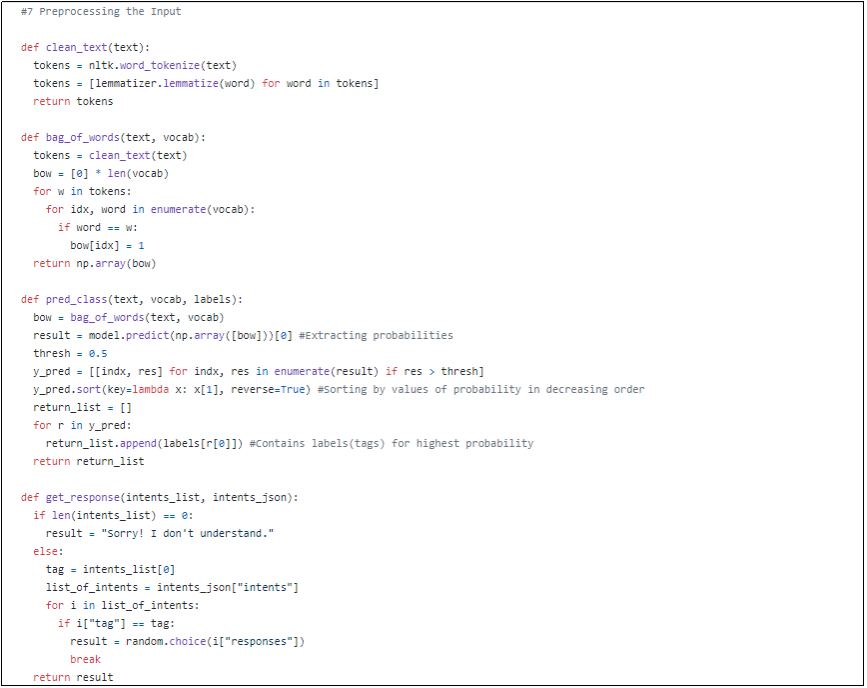

Step-7: Pre-processing the User’s Input

In this step of the tutorial on how to build a chatbot in Python, we will create a few simple functions that convert the user’s input query into an array and predict the relevant tag for it. Our Python chatbot code will then pick one of the responses corresponding to that tag and return it as output.

Let us quickly discuss these functions in more detail:

- clean_text(text): This function receives text (a string) as input and tokenizes it using nltk.word_tokenize(). Each token is then converted into its root form using a lemmatizer. The output is a list of words in their root form.

- bag_of_words(text, vocab): This function calls clean_text(), converts the resulting words into an array using the bag-of-words model with the given vocabulary, and returns that array.

- pred_class(text, vocab, labels): This function takes text, vocab, and labels as input, predicts a probability for each tag, and returns a list of the tags whose probability crosses a threshold, starting with the most probable one.

- get_response(intents_list, intents_json): This function takes the list returned by pred_class() and uses its top tag to randomly choose one of the responses for that tag in intents.json. If intents_list is empty, that is, when no probability crosses the threshold, it returns the string “Sorry! I don’t understand” as the ChatBot’s response.
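A compact sketch of these four functions follows. It departs from the description in two labeled ways: clean_text uses a regular-expression tokenizer and lowercasing instead of nltk.word_tokenize and a lemmatizer, and pred_class takes a hypothetical predict argument (for example, lambda X: model.predict(X, verbose=0)) instead of relying on a global model. The threshold of 0.5 is also an assumption.

```python
import random
import re

import numpy as np

def clean_text(text):
    # Stand-in for nltk.word_tokenize + lemmatizer: lowercase word tokens
    return [t.lower() for t in re.findall(r"\w+", text)]

def bag_of_words(text, vocab):
    tokens = set(clean_text(text))
    return np.array([1 if w in tokens else 0 for w in vocab])

def pred_class(text, vocab, labels, predict, threshold=0.5):
    # predict: maps a (1, len(vocab)) array to (1, len(labels)) probabilities
    probs = predict(np.array([bag_of_words(text, vocab)]))[0]
    ranked = sorted(
        ((p, labels[i]) for i, p in enumerate(probs) if p > threshold),
        reverse=True,  # most probable tag first
    )
    return [tag for _, tag in ranked]

def get_response(intents_list, intents_json):
    fallback = "Sorry! I don't understand"
    if not intents_list:  # no tag crossed the threshold
        return fallback
    tag = intents_list[0]
    for intent in intents_json["intents"]:
        if intent["tag"] == tag:
            return random.choice(intent["responses"])
    return fallback
```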

Finally, we have reached the last step. Excited enough?

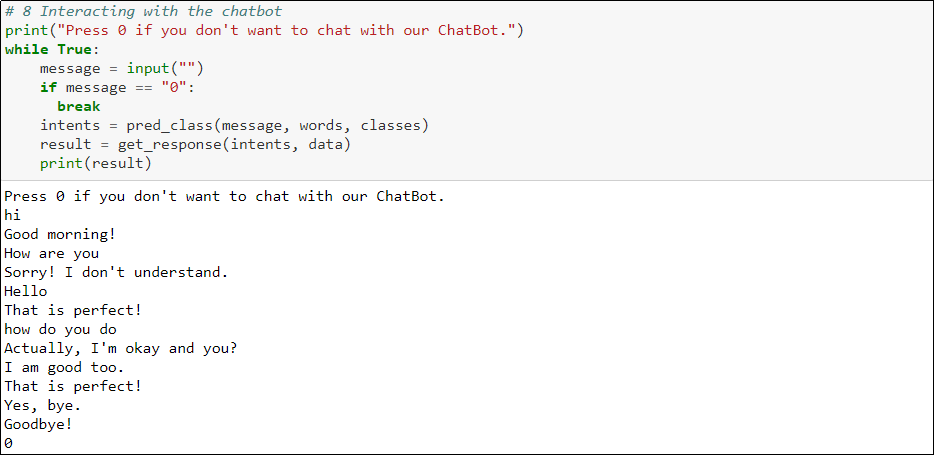

Step-8: Calling the Relevant Functions and interacting with the ChatBot

We now just have to take the input from the user and call the previously defined functions. Yes, that’s it.

Now, you can play around with your ChatBot as much as you want. To improve its responses, try editing your intents.json file and adding more intents, patterns, and responses to it.
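The interaction loop can be as simple as the sketch below. The quit word, the prompts, and the respond parameter are hypothetical; in the notebook, respond would combine the Step-7 functions, for example lambda msg: get_response(pred_class(msg, words, classes), data). Passing read and write in as parameters also makes the loop easy to test without typing.

```python
def chat(respond, read=input, write=print, quit_word="quit"):
    """Read user messages until quit_word is typed, replying to each one."""
    while True:
        message = read("You: ")
        if message.strip().lower() == quit_word:
            break
        write("Bot: " + respond(message))

# In a notebook: chat(lambda msg: get_response(pred_class(msg, words, classes), data))
```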

Have fun! By the way, here is how I did:

FAQs on Chatbot with Python Project

Can Python be used for a Chatbot?

Yes, Python is commonly used for building chatbots due to its ease of use and a wide range of libraries. Its natural language processing (NLP) capabilities and frameworks like NLTK and spaCy make it ideal for developing conversational interfaces.

What is simple chatbot in Python?

A simple chatbot in Python is a basic conversational program that responds to user inputs using predefined rules or patterns. It processes user messages, matches them with available responses, and generates relevant replies, often lacking the complexity of machine learning-based bots.

Is Python good for making bots?

Python is a popular choice for creating various types of bots due to its versatility and abundant libraries. Whether it's chatbots, web crawlers, or automation bots, Python's simplicity, extensive ecosystem, and NLP tools make it well-suited for developing effective and efficient bots.

About the Author

Manika

Manika Nagpal is a versatile professional with a strong background in both Physics and Data Science. As a Senior Analyst at ProjectPro, she leverages her expertise in data science and writing to create engaging and insightful blogs that help businesses and individuals stay up-to-date with the