Spark SQL vs. Apache Drill-War of the SQL-on-Hadoop Tools

Spark SQL vs. Apache Drill- Decide which SQL-on-Hadoop tool to use for your next big data project for interactive queries and faster data analytics on Hadoop

SQL is the largest workload, that organizations run on Hadoop clusters because a mix and match of SQL like interface with a distributed computing architecture like Hadoop, for big data processing, allows them to query data in powerful ways. SQL-on-Hadoop Engines are a class of analytical tools that combine SQL like querying with Hadoop framework elements. Hadoop has made way into Enterprise Data Warehouse Space - thanks to SQL-on-Hadoop tools which support rich SQL feature set with sub second response time for SQL queries on Hadoop.

Spark SQL - zero to hero

Downloadable solution code | Explanatory videos | Tech Support

Start ProjectWith big data analytics hitting mainstream IT and gaining a lot of exposure, the ability to deal with wide varieties and data formats, remains an ongoing effort with development of novel SQL-on-Hadoop offerings. With many different SQL-on-Hadoop engines, it is difficult to choose which SQL engine performs the best, because there is no “one size fits all” solution. Picking the right SQL-on-Hadoop tool is a challenging task. This post sheds some light on the functional and performance aspects of Spark SQL vs. Apache Drill to help decide which SQL engine should big data professionals choose, for their next project.

Within the big data landscape there are diverse approaches to access, analyse and manipulate data in Hadoop. SQL is the heart of data query languages and several tools have been built to bring SQL to Hadoop. Organizations can choose from various SQL-on-Hadoop engines, as they are not all created equal. They all look the same but are very different internally. There is no single SQL-on-Hadoop tool that performs well for all queries. Choosing the right SQL-on-Hadoop tool requires evaluating various requirements like non-traditional data, storage format, SQL dialect, User Defined Functions (UDF’s), handling multi-user workloads, data federation, etc. Apache Spark SQL and Apache Drill are two top level Apache projects that fit into some of the best SQL-on-Hadoop tools. Spark SQL is an integral part of Hadoop’s cousin Apache Spark and is supported by the Databricks community whereas Apache Drill is mainly supported by the MapR community.

Spark SQL

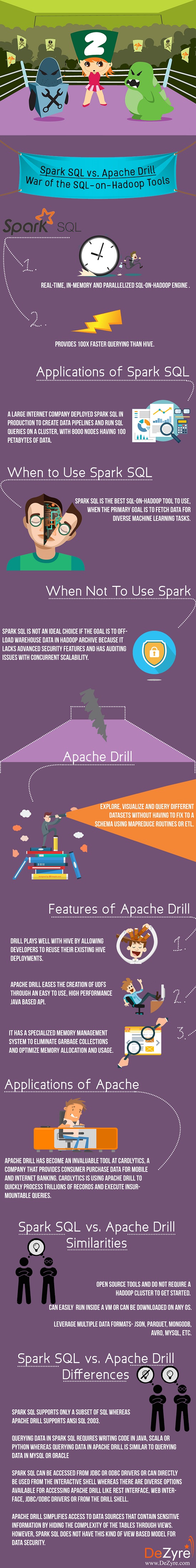

Spark SQL is used for real-time, in-memory and parallelized SQL-on-Hadoop engine that borrows some of its features from the predecessor Shark to retain Hive compatibility and provides 100X faster querying than Hive. It is used for manipulating and ingesting data in various formats like JSON, Hive, EDW’s or Parquet. Spark SQL allows users to do several advanced analytics with data from stream processing to machine learning. Spark SQL is not a general purpose SQL layer for exploratory analysis but it reuses Hive metastore and provides compatibility with existing Hive queries, data and user defined functions. Spark SQL supports only a subset of SQL functionality and users have to write code in Python, Java or Scala to execute a query.

The advantage of using Spark SQL-on-Hadoop tool over other tools, is the ease of using Machine Learning with SQL. It might not be an ideal choice for doing SQL based ETL/Batch processing as the users have to write Scala program code to perform complex joins and transformations. The best way to use Spark SQL is combine it with machine learning and use it as an interactive query tool. Spark SQL scales on 1000s of nodes and multi hour queries to provide complete mid-query fault tolerance - making it performance efficient.

New Projects

Features of SparkSQL

- Spark SQL supports various features for large scale data analysis including semi structured data, query federation and various data types for machine learning.

- Extensible Catalyst Optimizer in Spark SQL makes it easy to add various optimization rules, data types and data source using Scala programming language.

- It is easier to write data pipelines using Spark SQL that combine relational and procedural processing with substantial speedups over other SQL-on-Hadoop tools.

- It provides security through encryption using SSL for HTTP protocols and Akka.

Applications of Spark SQL

A large internet company deployed Spark SQL in production to create data pipelines and run SQL queries on a cluster, with 8000 nodes having 100 petabytes of data.

When to Use Spark SQL

Spark SQL is the best SQL-on-Hadoop tool to use, when the primary goal is to fetch data for diverse machine learning tasks.

When Not To Use Spark SQL

Spark SQL is not an ideal choice if the goal is to offload warehouse data in Hadoop archive because it lacks advanced security features and has auditing issues with concurrent scalability.

Get FREE Access to Data Analytics Example Codes for Data Cleaning, Data Munging, and Data Visualization

Apache Drill

Apache Drill (inspired by Google Dremel) allows users to explore, visualize and query different datasets without having to fix to a schema using MapReduce routines or ETL. It is the first distributed SQL query engine that has a schema-free JSON model like Elastic search or MongoDB NoSQL. Using Apache Drill, data can be queried just by mentioning the path in the SQL query to a NOSQL database, Amazon S3 bucket or a Hadoop directory. Apache Drill defines the schema on-the-go (schema discovery) so that users can directly query the data unlike traditional SQL query engines where the schema has to be taken into consideration first. When using Apache Drill, developers need not code and build applications like Hive to extract data, normal SQL queries will help the user get data from any data source and in any specific format.

Drill uses a hierarchical columnar data model for treating data like a group of tables, irrespective of how the data is actually modelled. Apache Drill is useful for professionals already working with SQL databases and BI, as it helps them move up big data workloads through the tools they are already familiar with. Drill provides industry standard API support like RESTful, ANSI SQL and JDBC/ODBC drivers which can be accessed by most of the BI tools like Pentaho, Tableau, and Qlikview.

“Apache Drill closes a gap around self-service SQL queries in Hadoop, especially on complex, dynamic NoSQL data types. Drill’s performance advantages for Hadoop data access, combined with the Qlik associative experience, enables our customers to continue discovering business value from a wide range of data.”-said Mike Foster, strategic alliances technology officer at Qlik.

Here's what valued users are saying about ProjectPro

Ameeruddin Mohammed

ETL (Abintio) developer at IBM

Abhinav Agarwal

Graduate Student at Northwestern University

Not sure what you are looking for?

View All ProjectsFeatures of Apache Drill

- Drill plays well with Hive by allowing developers to reuse their existing Hive deployments.

- Apache Drill eases the creation of UDFs through an easy to use, high performance Java based API.

- It has a specialized memory management system to eliminate garbage collections and optimize memory allocation and usage.

Applications of Apache Drill

Apache Drill has become an invaluable tool at Cardlytics, a company that provides consumer purchase data for mobile and internet banking. Cardlytics is using Apache Drill to quickly process trillions of records and execute insurmountable queries.

Spark SQL vs Apache Drill

Similarities between Spark SQL and Apache Drill

- Spark SQL and Apache Drill are both open source and do not require a Hadoop cluster to get started.

- Both the SQL-on-Hadoop tools can easily be run inside a VM or can be downloaded on any OS.

- Both Spark SQL and Apache Drill leverage multiple data formats- JSON, Parquet, MongoDB, Avro, MySQL, etc.

Get More Practice, More Big Data and Analytics Projects, and More guidance.Fast-Track Your Career Transition with ProjectPro

Differences between Spark SQL and Apache Drill

- Spark SQL supports only a subset of SQL whereas Apache Drill supports ANSI SQL 2003.

- Querying data in Spark SQL requires writing code in Java, Scala or Python whereas querying data in Apache Drill is similar to querying data in MySQL or Oracle

- Spark SQL can be accessed from JDBC or ODBC drivers or can directly be used from the interactive shell whereas there are diverse options available for accessing Apache Drill like ReST interface, Web interface, JDBC/ODBC drivers or from the Drill shell.

- Apache Drill simplifies access to data sources that contain sensitive information by hiding the complexity of the tables through views. Views and impersonation allow fine grained access to data - to restrict data access to other users and ensure privacy and data security for sensitive information. However, Spark SQL does not have this kind of view based model for data security.

Making a Choice of the Best SQL-on-Hadoop Tools

Which SQL-on-Hadoop tool will you use- Spark SQL or Apache Drill?

There is no one, SQL-on-Hadoop tool, that fits all. The biggest advantage of using Spark SQL is Spark SQL is an ideal choice when the objective is not just to query data but work with it in an algorithmic manner that requires complex math, statistics and Machine Learning whereas Apache Drill suits best when the main objective is to only query data from disparate sources.

Build an Awesome Job Winning Project Portfolio with Solved End-to-End Big Data Projects

About the Author

ProjectPro

ProjectPro is the only online platform designed to help professionals gain practical, hands-on experience in big data, data engineering, data science, and machine learning related technologies. Having over 270+ reusable project templates in data science and big data with step-by-step walkthroughs,