Build a movie recommender system on Azure using Spark SQL to analyse the MovieLens dataset. Deploy Azure Data Factory and data pipelines, and visualise the analysis.

Get started today

Request for free demo with us.

Schedule 60-minute live interactive 1-to-1 video sessions with experts.

Unlimited number of sessions with no extra charges. Yes, unlimited!

Give us 72 hours prior notice with a problem statement so we can match you to the right expert.

Schedule recurring sessions, once a week or bi-weekly, or monthly.

If you find a favorite expert, schedule all future sessions with them.

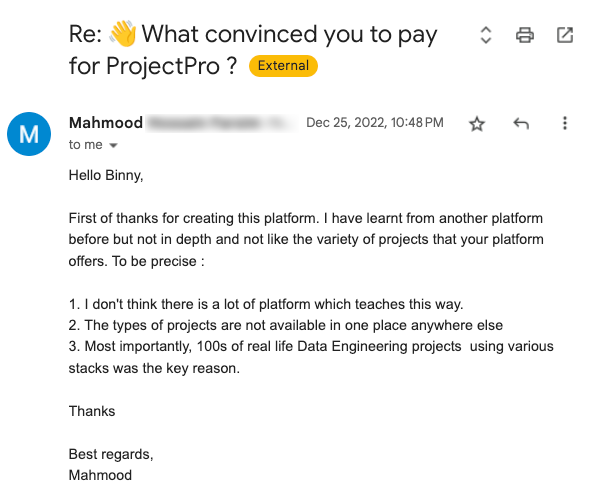

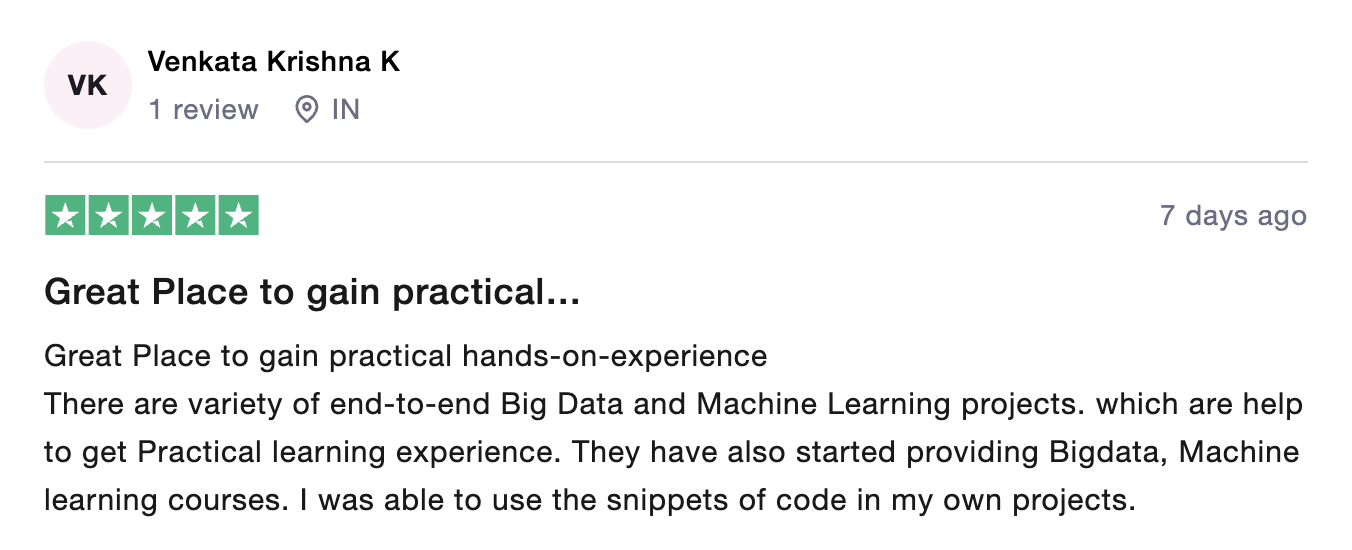

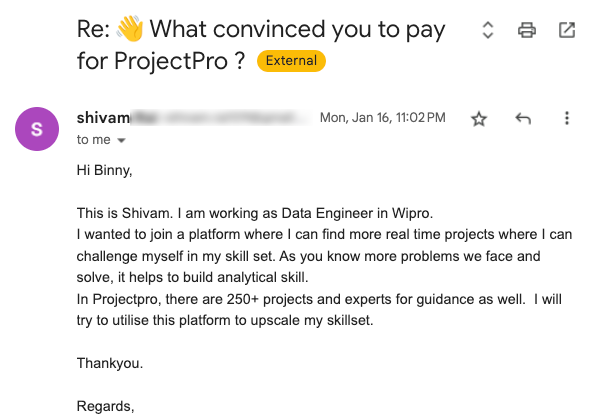

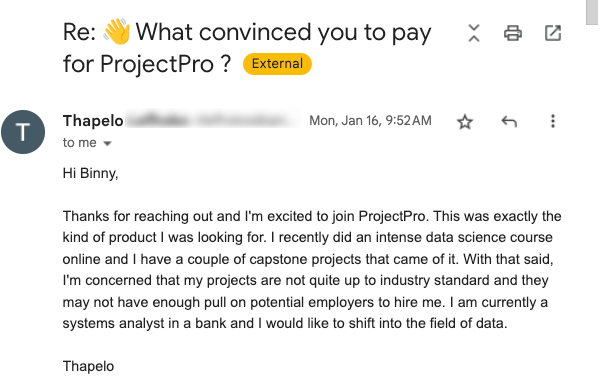

Source: ![]()

Source: ![]()

Source: ![]()

Source: ![]()

Source: ![]()

Source: ![]()

Source: ![]()

Source: ![]()

Source: ![]()

Source: ![]()

Source: ![]()

Source: ![]()

Source: ![]()

250+ end-to-end project solutions

Each project solves a real business problem from start to finish. These projects cover the domains of Data Science, Machine Learning, Data Engineering, Big Data and Cloud.

15 new projects added every month

New projects every month to help you stay updated in the latest tools and tactics.

500,000 lines of code

Each project comes with verified and tested solutions including code, queries, configuration files, and scripts. Download and reuse them.

600+ hours of videos

Each project solves a real business problem from start to finish. These projects cover the domains of Data Science, Machine Learning, Data Engineering, Big Data and Cloud.

Cloud Lab Workspace

New projects every month to help you stay updated in the latest tools and tactics.

Unlimited 1:1 sessions

Each project comes with verified and tested solutions including code, queries, configuration files, and scripts. Download and reuse them.

Technical Support

Chat with our technical experts to solve any issues you face while building your projects.

7 Days risk-free trial

We offer an unconditional 7-day money-back guarantee. Use the product for 7 days and if you don't like it we will make a 100% full refund. No terms or conditions.

Payment Options

0% interest monthly payment schemes available for all countries.

What is Dataset Analysis?

Dataset analysis is the process of manipulating or processing raw or unstructured data to draw insights and conclusions that support critical decisions and add business value. The process consists of organizing the dataset, transforming it, visualizing it, and finally modeling it to derive predictions that help solve business problems, make informed decisions, and plan effectively for the future.

Data Pipeline:

A data pipeline is a system for moving data from one system to another. It involves extracting or capturing data with various tools, storing the raw data, cleaning and validating it, transforming it into a queryable format, visualizing KPIs, and orchestrating all of these steps. The data may or may not be transformed along the way, and it may be processed in batches or in real time (streaming).
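The stages described above can be sketched as plain Python functions chained by a small orchestrator. This is a minimal, hypothetical illustration: the function names, the in-memory CSV source, and the list used as a sink are assumptions, not part of the project, where these stages run as Azure Data Factory and Databricks activities.

```python
import csv
import io

# Hypothetical stage functions illustrating a batch data pipeline.
# Source: an in-memory CSV string; sink: a Python list.


def extract(raw_csv: str) -> list[dict]:
    """Capture raw records from the source."""
    return list(csv.DictReader(io.StringIO(raw_csv)))


def validate(rows: list[dict]) -> list[dict]:
    """Keep only rows whose 'rating' field parses as a number."""
    valid = []
    for row in rows:
        try:
            float(row["rating"])
        except (KeyError, TypeError, ValueError):
            continue  # drop rows with a missing or malformed rating
        valid.append(row)
    return valid


def transform(rows: list[dict]) -> list[dict]:
    """Cast fields to typed values so they are easy to query."""
    return [
        {
            "userId": int(row["userId"]),
            "movieId": int(row["movieId"]),
            "rating": float(row["rating"]),
        }
        for row in rows
    ]


def load(records: list[dict], sink: list[dict]) -> int:
    """Write records to the destination and return how many were written."""
    sink.extend(records)
    return len(records)


def run_pipeline(raw_csv: str, sink: list[dict]) -> int:
    """Orchestrate the stages in order: extract, validate, transform, load."""
    return load(transform(validate(extract(raw_csv))), sink)
```

For example, with the made-up input `"userId,movieId,rating,timestamp\n1,1,4.0,0\n1,3,,0\n2,1,5.0,0\n"`, the row with the empty rating is dropped during validation and `run_pipeline` returns 2.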

What is the agenda of the project?

The project derives movie recommendations using Python and Spark on Microsoft Azure. We first understand the problem and download the MovieLens dataset from the GroupLens website. Then we set up a Microsoft Azure subscription and organize the required resources into a resource group. In that resource group, we create a standard storage account to store all the data required for serving movie recommendations, followed by a standard Blob storage account.

Next, we create containers in both storage accounts and upload the MovieLens zip file to the Blob storage account. We then create an Azure Data Factory, a linked service for the Blob storage account, and a Copy Data pipeline that copies the data from Azure Blob Storage to Azure Data Lake Storage.

After that, we create a Databricks workspace and cluster, access Azure Data Lake Storage from Databricks by creating mount points, and extract the zip file to get the CSV files. Finally, we upload the files into Databricks, read the datasets into Spark DataFrames, and analyze them to get the movie recommendations.

Usage of Dataset:

The MovieLens data is used in the following ways:

Extraction: The MovieLens zip file is extracted to obtain the CSV files in two ways: with the Databricks File System (DBFS) and with the Azure Data Factory (ADF) copy pipeline.
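The DBFS route can be sketched with Python's standard `zipfile` module, which also runs on a Databricks driver node. This is an illustrative sketch: the function name and paths are hypothetical, and it flattens the archive's top-level folder so the CSV files land directly in the output directory.

```python
import shutil
import zipfile
from pathlib import Path


def extract_csvs(zip_path: str, out_dir: str) -> list[str]:
    """Extract only the .csv members of a zip archive into out_dir.

    Returns the sorted list of extracted file names.
    """
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    extracted = []
    with zipfile.ZipFile(zip_path) as zf:
        for info in zf.infolist():
            # Skip directory entries and non-CSV files such as README.txt.
            if info.is_dir() or not info.filename.lower().endswith(".csv"):
                continue
            # Keep only the base name, dropping the archive's folder prefix.
            target = out / Path(info.filename).name
            with zf.open(info) as src, open(target, "wb") as dst:
                shutil.copyfileobj(src, dst)
            extracted.append(target.name)
    return sorted(extracted)
```

On Databricks, mounted storage is typically reachable from Python through the `/dbfs/` prefix, for example `extract_csvs("/dbfs/mnt/<mount>/<archive>.zip", "/dbfs/mnt/<mount>/csv")`; the mount and file names are placeholders.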

Transformation and Load: The uploaded datasets, including the tags data, are read into Spark DataFrames in Databricks, and the output is displayed as a bar chart. Finally, the dataset is analyzed with Spark in Databricks, and movies are recommended.
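Counting tags for the bar chart is a group-by-and-count. In Spark this would be something like `tags_df.groupBy("tag").count()` rendered with a Databricks bar-chart visualization (`tags_df` is a hypothetical name). The sketch below performs the same aggregation in plain Python and draws a text bar chart instead, assuming the standard MovieLens `tags.csv` columns `userId,movieId,tag,timestamp`; trimming and lower-casing the tags is an added assumption.

```python
import csv
import io
from collections import Counter


def top_tags(tags_csv: str, n: int = 5) -> list[tuple[str, int]]:
    """Count each tag (trimmed, case-insensitive) and return the n most common."""
    counts = Counter(
        row["tag"].strip().lower()
        for row in csv.DictReader(io.StringIO(tags_csv))
        if row.get("tag")
    )
    return counts.most_common(n)


def text_bar_chart(pairs: list[tuple[str, int]], width: int = 20) -> str:
    """Render (label, count) pairs as a horizontal bar chart in plain text."""
    if not pairs:
        return ""
    peak = max(count for _, count in pairs)
    label_width = max(len(label) for label, _ in pairs)
    lines = []
    for label, count in pairs:
        # Scale each bar relative to the largest count; show at least one mark.
        bar = "#" * max(1, round(width * count / peak))
        lines.append(f"{label:<{label_width}} | {bar} {count}")
    return "\n".join(lines)
```

For example, `text_bar_chart([("funny", 3), ("dark", 1)], width=6)` produces the two lines `funny | ###### 3` and `dark  | ## 1`.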

Data Analysis:

The data downloaded from the GroupLens website contains the movie titles, the ratings given to the movies, links for the movies, and the tags assigned to them.
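The four files share the `movieId` key, which is what allows ratings, tags, and links to be joined to movie titles. A quick header check after extraction catches a wrong or partial download early. The column lists below follow the MovieLens `ml-latest-small` README; the helper itself is a hypothetical sketch, not part of the project.

```python
import csv
from pathlib import Path

# Column layouts of the MovieLens CSV files (ml-latest-small release).
# movieId is the key shared by all four files.
EXPECTED_COLUMNS = {
    "movies.csv": ["movieId", "title", "genres"],
    "ratings.csv": ["userId", "movieId", "rating", "timestamp"],
    "links.csv": ["movieId", "imdbId", "tmdbId"],
    "tags.csv": ["userId", "movieId", "tag", "timestamp"],
}


def check_headers(data_dir: str) -> dict[str, bool]:
    """Report, per expected file, whether it exists and has the expected header."""
    results = {}
    for name, columns in EXPECTED_COLUMNS.items():
        path = Path(data_dir) / name
        if not path.exists():
            results[name] = False
            continue
        with open(path, newline="", encoding="utf-8") as f:
            header = next(csv.reader(f), [])
        results[name] = header == columns
    return results
```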

A resource group is created in Azure to organize the required resources, followed by a storage account.

In Azure Data Factory, a Copy Data pipeline is created to copy the data from Azure Blob Storage to Azure Data Lake Storage.

A Databricks workspace and cluster are created, and Azure Data Lake Storage is accessed from Databricks by creating mount points.
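In Databricks, a storage container is mounted with `dbutils.fs.mount(source=..., mount_point=..., extra_configs=...)`. The project text does not say how it authenticates, so the sketch below assumes Azure Data Lake Storage Gen2 accessed through a service principal with OAuth, one of the options Databricks documents. The account, container, and mount names are placeholders, and the hypothetical helper only assembles the arguments, so it runs outside Databricks too.

```python
def build_adls_mount_args(
    storage_account: str,
    container: str,
    client_id: str,
    client_secret: str,
    tenant_id: str,
    mount_name: str,
) -> dict:
    """Assemble keyword arguments for dbutils.fs.mount().

    Assumes ADLS Gen2 (abfss://) and service-principal OAuth authentication.
    """
    configs = {
        "fs.azure.account.auth.type": "OAuth",
        "fs.azure.account.oauth.provider.type":
            "org.apache.hadoop.fs.azurebfs.oauth2.ClientCredsTokenProvider",
        "fs.azure.account.oauth2.client.id": client_id,
        "fs.azure.account.oauth2.client.secret": client_secret,
        "fs.azure.account.oauth2.client.endpoint":
            f"https://login.microsoftonline.com/{tenant_id}/oauth2/token",
    }
    return {
        "source": f"abfss://{container}@{storage_account}.dfs.core.windows.net/",
        "mount_point": f"/mnt/{mount_name}",
        "extra_configs": configs,
    }
```

In a notebook, the call might look like `dbutils.fs.mount(**build_adls_mount_args("<account>", "<container>", "<client-id>", dbutils.secrets.get(scope="<scope>", key="<key>"), "<tenant-id>", "movielens"))`, after which the container's files appear under `/mnt/movielens`. Reading the secret from a secret scope keeps it out of the notebook source.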

In the extraction step, the MovieLens zip file is extracted into CSV files using the Databricks File System (DBFS) and Azure Data Factory (ADF).

In the transformation and load step, the uploaded datasets, including the tags data, are read into Spark DataFrames in Databricks.

Finally, the data is analyzed with Spark in Databricks through the mount points and visualized with bar charts.
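The source does not spell out the recommendation logic. One simple option, shown here as an assumption, is a popularity-based ranking: the highest average rating among movies that have received enough ratings. The query is standard SQL; this sketch runs it with Python's built-in `sqlite3` so it is self-contained, and the function name and toy rows are hypothetical.

```python
import sqlite3

# Popularity-based ranking (an assumed technique, not taken from the source):
# highest average rating among movies with at least `min_ratings` ratings.
TOP_MOVIES_SQL = """
SELECT m.title,
       ROUND(AVG(r.rating), 2) AS avg_rating,
       COUNT(*) AS num_ratings
FROM ratings AS r
JOIN movies AS m ON m.movieId = r.movieId
GROUP BY m.movieId, m.title
HAVING COUNT(*) >= ?
ORDER BY avg_rating DESC, num_ratings DESC
LIMIT ?
"""


def recommend_top_movies(
    movies: list[tuple[int, str]],
    ratings: list[tuple[int, int, float]],
    min_ratings: int = 2,
    limit: int = 10,
) -> list[tuple[str, float, int]]:
    """Load (movieId, title) and (userId, movieId, rating) rows, then rank movies."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute("CREATE TABLE movies (movieId INTEGER, title TEXT)")
        conn.execute("CREATE TABLE ratings (userId INTEGER, movieId INTEGER, rating REAL)")
        conn.executemany("INSERT INTO movies VALUES (?, ?)", movies)
        conn.executemany("INSERT INTO ratings VALUES (?, ?, ?)", ratings)
        return conn.execute(TOP_MOVIES_SQL, (min_ratings, limit)).fetchall()
    finally:
        conn.close()
```

With the made-up movies `[(1, "Movie A"), (2, "Movie B"), (3, "Movie C")]` and ratings `[(1, 1, 4.0), (2, 1, 5.0), (1, 2, 3.0), (2, 2, 2.0), (3, 3, 5.0)]`, the default call returns `[("Movie A", 4.5, 2), ("Movie B", 2.5, 2)]`; "Movie C" is excluded because it has only one rating. In Databricks, the same SELECT could run through `spark.sql(...)` after registering the DataFrames with `createOrReplaceTempView("movies")` and `createOrReplaceTempView("ratings")`, with the `?` placeholders replaced by literal values. The minimum-count filter keeps a single high rating from pushing an obscure movie to the top.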
