In this AWS Project, you will build an end-to-end log analytics solution to collect, ingest and process data. The processed data can be analysed to monitor the health of production systems on AWS.

The business problem we are facing and how this project solves it.

Business Problem

The big data use case we take up here is log analytics: analysing log data that arrives from various sources such as websites, mobile devices, sensors and applications. Log analytics makes it possible to track application availability, detect fraud and monitor SLAs. Automated triggers can be set up, and logs from different sources can be transformed into a common format so that they are easy to query.
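As a minimal sketch of what "transforming logs to a common format" can look like, the snippet below maps two kinds of input onto one record shape. It is an illustration only: the field names (`source`, `timestamp`, `host`, `message`, `status`), the function names and the shape of the application log are assumptions, not part of the project.

```python
import re
from datetime import datetime, timezone

# Web server "common log format", for example:
# 127.0.0.1 - frank [10/Oct/2000:13:55:36 -0700] "GET /index.html HTTP/1.0" 200 2326
COMMON_LOG = re.compile(
    r'(?P<host>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) (?:\d+|-)'
)


def normalize_web_log(line: str) -> dict:
    """Parse one common-log-format line into the shared record shape."""
    match = COMMON_LOG.match(line)
    if match is None:
        raise ValueError(f"unrecognised log line: {line!r}")
    ts = datetime.strptime(match["time"], "%d/%b/%Y:%H:%M:%S %z")
    return {
        "source": "web",
        "timestamp": ts.astimezone(timezone.utc).isoformat(),
        "host": match["host"],
        "message": f'{match["method"]} {match["path"]}',
        "status": int(match["status"]),
    }


def normalize_app_log(record: dict) -> dict:
    """Map a hypothetical application log (epoch seconds in "ts",
    text in "msg") onto the same shape as the web log records."""
    ts = datetime.fromtimestamp(record["ts"], tz=timezone.utc)
    return {
        "source": "app",
        "timestamp": ts.isoformat(),
        "host": record.get("host", "unknown"),
        "message": record["msg"],
        "status": None,  # application logs carry no HTTP status
    }
```

Because both functions return the same keys with UTC timestamps, records from either source can land in the same table and be filtered with the same query.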

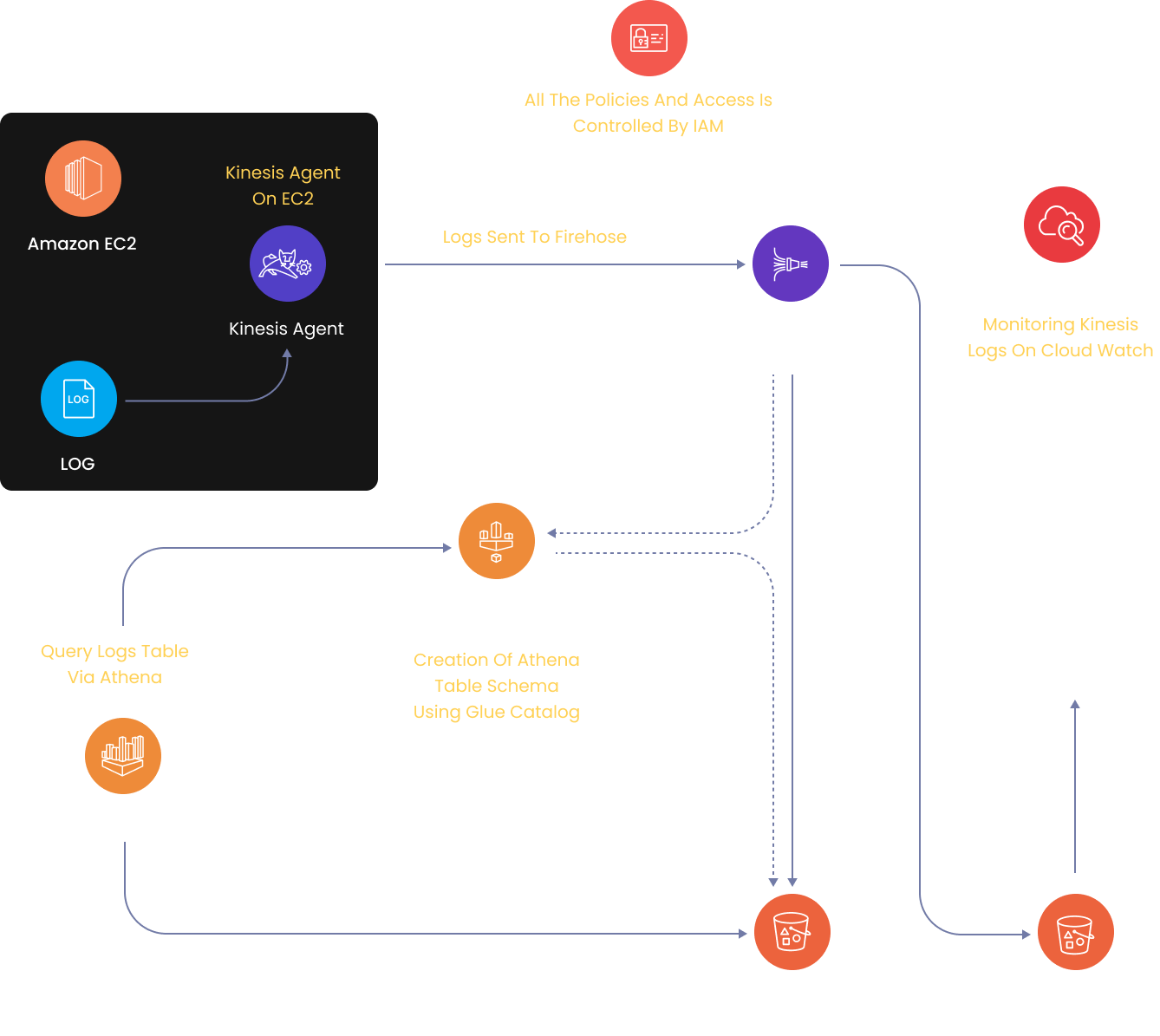

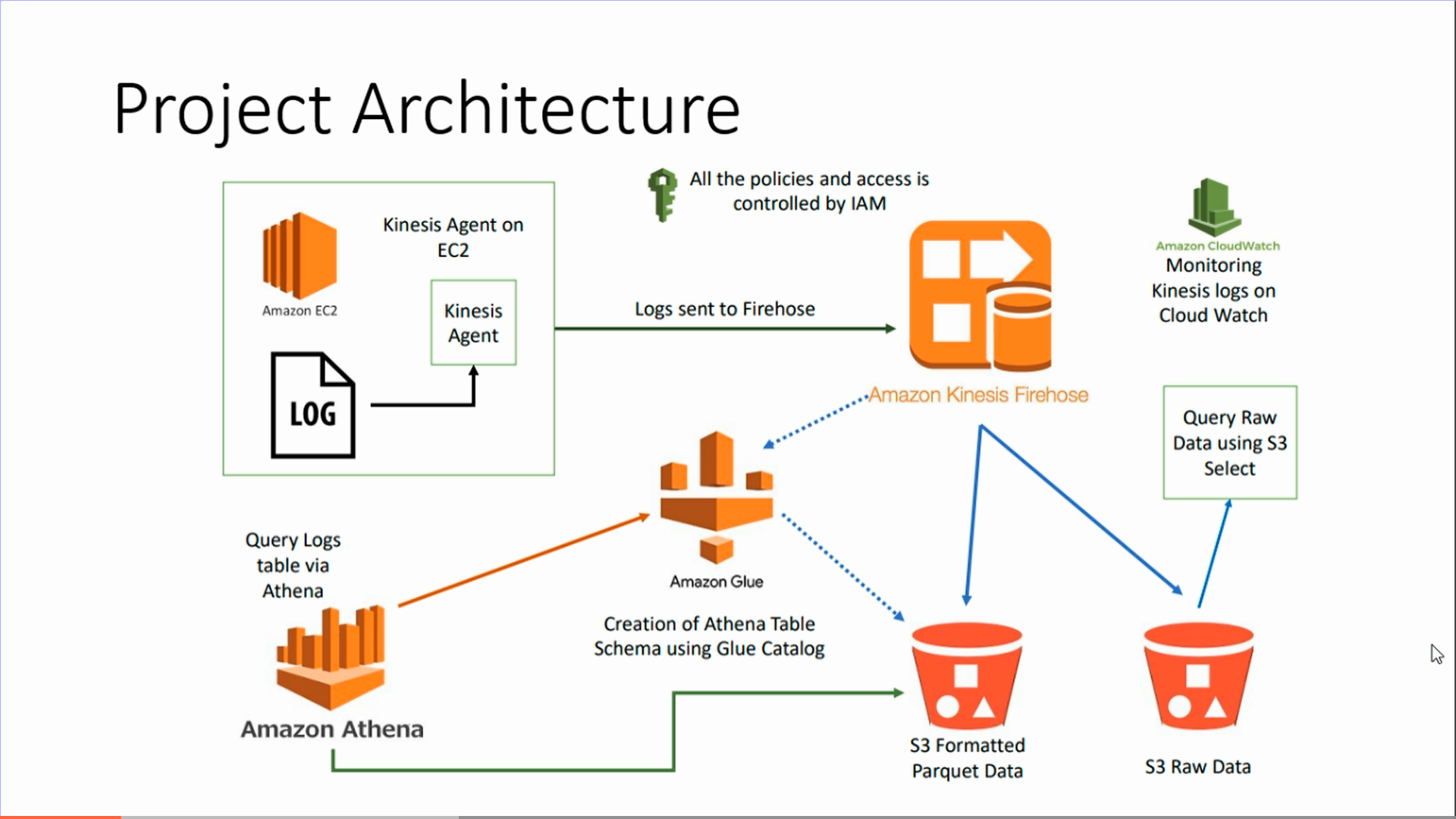

Project Architecture

Solution by using AWS Native Services:

Many applications now run in the cloud, so their logs can be parsed and stored in S3. We build an end-to-end log analytics solution that collects, ingests, processes and loads both batch and streaming data. Processed data is available to users in near real time. The solution is highly reliable and cost effective, scales automatically with varying data volumes and requires almost no IT administration.

Services that we are going to use:

Amazon S3 – An easy-to-use object storage service with a simple web services interface to store and retrieve any amount of data from anywhere on the web. It is a safe place to store files, because the data is spread across multiple devices and facilities. Files are stored as objects in buckets, a single object can be from 0 bytes to 5 TB in size, and total storage is unlimited.

AWS IAM – Identity and Access Management lets us manage access to AWS services and resources securely. We can create and manage AWS users and groups, and use permissions to allow or deny their access to AWS resources. It is a feature of AWS offered at no additional charge.
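To make "permissions to allow and deny access" concrete, here is a small sketch that builds an IAM policy document granting read and write access to a log bucket. The bucket name, prefix and function name are hypothetical. The JSON layout (`Version`, `Statement`, `Effect`, `Action`, `Resource`) follows the standard IAM policy grammar.

```python
import json


def s3_log_bucket_policy(bucket: str, prefix: str = "") -> dict:
    """Build an IAM policy document that allows listing one bucket and
    reading/writing objects under an optional key prefix."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ListLogBucket",
                "Effect": "Allow",
                "Action": ["s3:ListBucket"],
                "Resource": f"arn:aws:s3:::{bucket}",
            },
            {
                "Sid": "ReadWriteLogObjects",
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:PutObject"],
                "Resource": f"arn:aws:s3:::{bucket}/{prefix}*",
            },
        ],
    }


if __name__ == "__main__":
    # "example-log-bucket" is a placeholder, not a bucket from the project.
    print(json.dumps(s3_log_bucket_policy("example-log-bucket", "raw/"), indent=2))
```

Anything not explicitly allowed is denied by default, so a policy like this keeps a role limited to the one bucket it needs.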

Amazon EC2 – A service that provides resizable compute capacity in the cloud and is designed to make web-scale cloud computing easier. We can launch instances with a variety of operating systems.

Amazon Kinesis Data Firehose – The delivery stream is the underlying entity of Firehose. We use Firehose by creating a delivery stream to a specified destination and sending data to it. Data producers send records to the delivery stream. A record is the data of interest, and it can be as large as 1,000 KB.
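The producer side can be sketched as follows. The code groups events into batches and sends them with the `put_record_batch` call of a boto3 Firehose client. The function names and the stream name are hypothetical, and the batch limits (500 records and 4 MiB per request) are the documented quotas as best I know them, so check the current Firehose limits before relying on them.

```python
import json
from typing import Iterable, Iterator, List

MAX_RECORD_BYTES = 1000 * 1024     # per-record limit mentioned above
MAX_BATCH_RECORDS = 500            # assumed PutRecordBatch record limit
MAX_BATCH_BYTES = 4 * 1024 * 1024  # assumed PutRecordBatch size limit


def encode(event: dict) -> bytes:
    """Serialise one event as a newline-terminated JSON record."""
    return (json.dumps(event) + "\n").encode("utf-8")


def make_batches(events: Iterable[dict]) -> Iterator[List[dict]]:
    """Group events into lists that respect the record and batch limits."""
    batch: List[dict] = []
    size = 0
    for event in events:
        data = encode(event)
        if len(data) > MAX_RECORD_BYTES:
            raise ValueError("record exceeds the per-record size limit")
        full = len(batch) == MAX_BATCH_RECORDS or size + len(data) > MAX_BATCH_BYTES
        if batch and full:
            yield batch
            batch, size = [], 0
        batch.append({"Data": data})
        size += len(data)
    if batch:
        yield batch


def send_events(client, stream_name: str, events: Iterable[dict]) -> int:
    """Send events through `client` (for example boto3.client("firehose"))
    and return how many records Firehose reported as failed."""
    failed = 0
    for batch in make_batches(events):
        response = client.put_record_batch(
            DeliveryStreamName=stream_name, Records=batch
        )
        failed += response.get("FailedPutCount", 0)
    return failed
```

Passing the client in as an argument keeps the batching logic testable without AWS credentials. A production producer would also retry the individual records that the response marks as failed.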

AWS Glue – A fully managed ETL service with which we can categorise, clean and enrich our data and move it reliably between various data stores. It is simple and cost effective.

Amazon Athena – An interactive query service for S3. There is no need to load data, because it stays in S3. Athena is serverless and supports many data formats, e.g. CSV, JSON, ORC, Parquet and Avro.
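Querying the processed logs might look like the sketch below, which submits SQL through the `start_query_execution` call of a boto3 Athena client. The table name, database name, `status` column and S3 output location are placeholders chosen for illustration, not names from the project.

```python
def status_count_query(table: str) -> str:
    """Build a query counting log records per HTTP status code.
    Assumes the table has a `status` column."""
    return (
        f'SELECT status, COUNT(*) AS hits FROM "{table}" '
        "GROUP BY status ORDER BY hits DESC"
    )


def run_query(client, sql: str, database: str, output_location: str) -> str:
    """Submit `sql` through `client` (for example boto3.client("athena"))
    and return the query execution id. Results are written by Athena to
    `output_location`, an S3 URI such as s3://example-bucket/athena-results/."""
    response = client.start_query_execution(
        QueryString=sql,
        QueryExecutionContext={"Database": database},
        ResultConfiguration={"OutputLocation": output_location},
    )
    return response["QueryExecutionId"]
```

The call returns immediately. The query runs asynchronously, so a caller would poll `get_query_execution` with the returned id until the state is `SUCCEEDED` before reading the results.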

Amazon CloudWatch – Monitors our AWS resources and the applications we run on AWS in real time.

Project Execution:
