Solved end-to-end Data Science & Big Data projects

Get ready to use Data Science and Big Data projects for solving real-world business problems

Big Data Project Categories

Apache Hadoop Projects

27 Projects

Apache Hive Projects

22 Projects

Apache Kafka Projects

8 Projects

Apache Flume Projects

4 Projects

Spark MLlib Projects

4 Projects

Apache Sqoop Projects

2 Projects

Trending Big Data Projects

GCP Project to Learn using BigQuery for Exploring Data

Learn to use GCP BigQuery to explore and prepare data for analysis and to transform your datasets.

SQL Project for Data Analysis using Oracle Database-Part 6

In this SQL project, you will learn the basics of data wrangling with SQL to perform operations on missing data, unwanted features and duplicated records.

Python and MongoDB Project for Beginners with Source Code-Part 1

In this Python and MongoDB project, you will learn to perform data analysis using PyMongo on a MongoDB Atlas cluster.

Data Science Project Categories

Data Science Projects in R

22 Projects

NLP Projects

14 Projects

Neural Network Projects

11 Projects

Tensorflow Projects

4 Projects

IoT Projects

1 Project

H2O R Projects

1 Project

Trending Data Science Projects

Forecasting Business KPIs with Tensorflow and Python

In this machine learning project, you will use the video clip of an IPL match played between CSK and RCB to forecast key performance indicators like the number of appearances of a brand logo, the frames, and the shortest and longest area percentage in the video.

MLOps Project for a Mask R-CNN on GCP using uWSGI Flask

MLOps on GCP: a solved end-to-end MLOps project to deploy a Mask R-CNN model for image segmentation as a web application using uWSGI Flask, Docker, and TensorFlow.

Linear Regression Model Project in Python for Beginners Part 2

A machine learning linear regression project in Python for beginners: build a multiple linear regression model on a soccer player dataset.

Unlimited 1:1 Live Interactive Sessions

- 60-minute live session

Schedule 60-minute live interactive 1-to-1 video sessions with experts.

- No extra charges

Unlimited number of sessions with no extra charges. Yes, unlimited!

- We match you to the right expert

Give us 72 hours' notice and a problem statement so we can match you to the right expert.

- Schedule recurring sessions

Schedule recurring sessions weekly, bi-weekly, or monthly.

- Pick your favorite expert

If you find a favorite expert, schedule all future sessions with them.

Use the 1-to-1 sessions to

- Troubleshoot your projects

- Customize our templates to your use-case

- Build a project portfolio

- Brainstorm architecture design

- Bring any project, even from outside ProjectPro

- Mock interview practice

- Career guidance

- Resume review

Latest Blogs

How to Ace Databricks Certified Data Engineer Associate Exam?

Prepare effectively and maximize your chances of success with this guide to mastering the Databricks Certified Data Engineer Associate Exam.

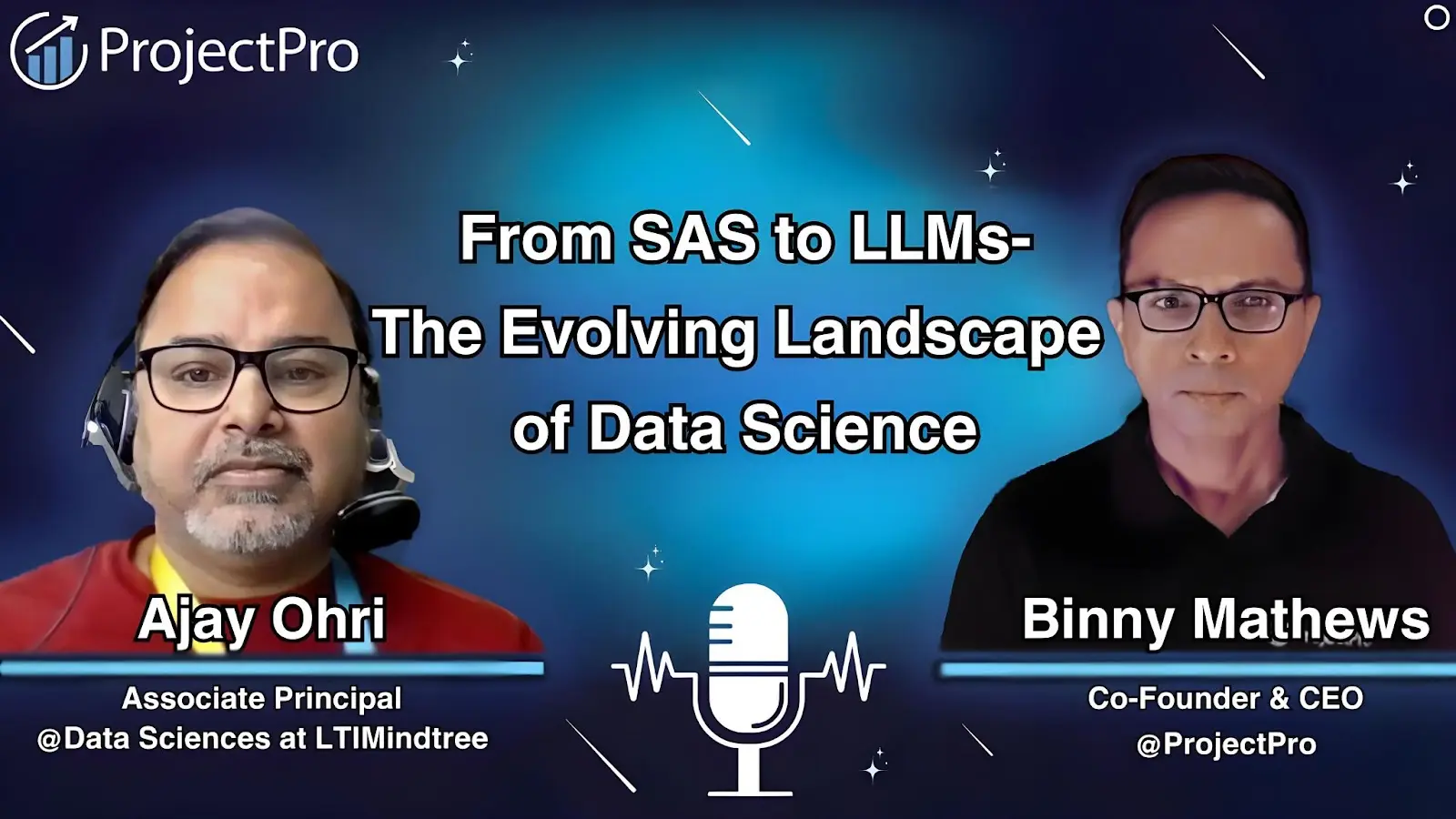

Evolution of Data Science: From SAS to LLMs

Explore the evolution of data science from early SAS to cutting-edge LLMs and discover industry-transforming use cases with insights from an industry expert.

20+ Natural Language Processing Datasets for Your Next Project

Use these 20+ Natural Language Processing Datasets for your next project and make your portfolio stand out.

We power Data Science & Data Engineering projects at

Join 115,000+ developers worldwide
