How to Create a Simulated Dataset for Cluster Analysis in Python?

This tutorial will help you understand how to simulate datasets for cluster analysis using Python, with step-by-step instructions and examples.

Cluster analysis is a powerful technique used in various fields to uncover hidden patterns within data. However, you need data to decide which clustering algorithm to use. This tutorial will help you create a simulated dataset for cluster analysis in Python so you can experiment with clustering algorithms and gain insights from your data.

Table of Contents

- Importance of Datasets for Cluster Analysis

- Where Can I Find Good Datasets for Clustering?

- Why are Clusters in Python Crucial for Data Analysis?

- How to Cluster Data in Python?

- Clustering Dataset Example

- How to Create Simulated Data for Clustering in Python?

- Become a Machine Learning Expert with ProjectPro!

Importance of Datasets for Cluster Analysis

A dataset serves as the foundation upon which clustering algorithms operate. The observations or data points will be grouped into clusters based on their similarities. A high-quality dataset ensures the accuracy and reliability of clustering results, enabling informed decision-making. With a representative dataset, clusters may accurately reflect the underlying structure of the data, leading to accurate conclusions and effective strategies. Therefore, a carefully curated dataset is essential for conducting meaningful and insightful cluster analysis, facilitating a better understanding of complex data relationships, and driving actionable outcomes.

Where Can I Find Good Datasets for Clustering?

Good datasets for clustering can be found across various online repositories and databases dedicated to machine learning datasets, such as Kaggle, ProjectPro Repository, UCI Machine Learning Repository, and OpenML. These platforms offer multiple datasets across different domains, including retail, healthcare, and finance, suitable for clustering tasks. Additionally, governmental organizations and research institutions often provide access to datasets for public use. When selecting a dataset, it's essential to consider factors like data size, quality, and relevance to your specific clustering objectives.

Why are Clusters in Python Crucial for Data Analysis?

Clustering in Python groups similar data points, enabling insight extraction, pattern recognition, and data segmentation. Libraries such as scikit-learn provide methods like K-means, hierarchical clustering, and DBSCAN, which support exploratory data analysis, anomaly detection, and classification tasks. This helps analysts understand complex datasets, identify trends, and make informed decisions.

How to Cluster Data in Python?

Python offers various libraries and methods to perform clustering, with some of the most commonly used ones being K-Means, Hierarchical Clustering, and DBSCAN. Depending on your specific dataset and requirements, you may need to experiment with different algorithms and parameters to achieve the best clustering results. Additionally, it's essential to preprocess your data appropriately and evaluate the quality of the clusters obtained.
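The workflow described above (preprocess, fit several algorithms, evaluate) can be sketched as follows. This is a minimal illustration, not a recommended configuration: the sample data, the scaling step, the parameter values (such as eps=0.3), and the choice of the silhouette score as the quality measure are all assumptions made for this example.

```python
from sklearn.cluster import KMeans, AgglomerativeClustering, DBSCAN
from sklearn.datasets import make_blobs
from sklearn.metrics import silhouette_score
from sklearn.preprocessing import StandardScaler

# Illustrative data: 300 points in 2-D around 3 centers (values assumed for this sketch).
X, _ = make_blobs(n_samples=300, n_features=2, centers=3,
                  cluster_std=0.5, random_state=42)

# Preprocess: put every feature on the same scale before computing distances.
X_scaled = StandardScaler().fit_transform(X)

# Three commonly used algorithms; the parameter values are assumptions, not tuned settings.
models = {
    "K-Means": KMeans(n_clusters=3, n_init=10, random_state=42),
    "Hierarchical": AgglomerativeClustering(n_clusters=3),
    "DBSCAN": DBSCAN(eps=0.3, min_samples=5),
}

scores = {}
for name, model in models.items():
    labels = model.fit_predict(X_scaled)
    # The silhouette score is only defined when at least two distinct labels exist.
    # DBSCAN marks noise points with the label -1, which is counted as its own group here.
    if len(set(labels)) > 1:
        scores[name] = silhouette_score(X_scaled, labels)
        print(f"{name}: silhouette = {scores[name]:.3f}")
    else:
        scores[name] = None
        print(f"{name}: found a single group, silhouette not defined")
```

A silhouette score closer to 1 suggests compact, well-separated clusters. On real data, the parameters usually need tuning before the scores are comparable.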

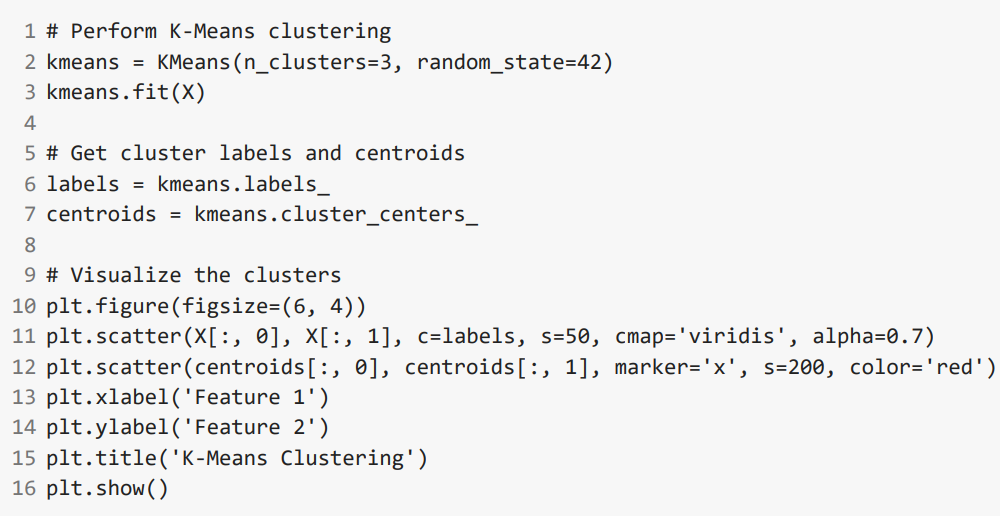

Clustering Dataset Example

Let's create a simple example dataset and see how to prepare it for clustering in Python. We'll generate synthetic data with ten features grouped around five cluster centers for this example.

How to Create Simulated Data for Clustering in Python?

Step 1 - Import the libraries

from sklearn.datasets import make_blobs
import matplotlib.pyplot as plt
import pandas as pd

Here, we have imported make_blobs from scikit-learn, pyplot from matplotlib, and pandas. We will see how to use each of these in the steps that follow.

Step 2 - Generating the data

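Here we are using make_blobs to generate cluster data. It returns two arrays: the feature matrix, which we store in features, and the cluster label of each sample, which we store in clusters. The main parameters are:

- n_samples: The number of samples (rows) we want in our dataset. By default it is set to 100.

- n_features: The number of features (columns) we want in our dataset. By default it is set to 2.

- centers: The number of cluster centers we want in the final dataset. By default, three centers are generated when n_samples is an integer.

- cluster_std: The standard deviation of the clusters. By default it is set to 1.0.

- shuffle: Whether to shuffle the samples. By default it is set to True.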

# Generate 2000 samples with 10 features, grouped around 5 cluster centers
features, clusters = make_blobs(n_samples=2000,
                                n_features=10,
                                centers=5,
                                cluster_std=0.4,
                                shuffle=True)
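To check what make_blobs returned, it can help to inspect the shapes of the two arrays and the number of samples per cluster. The sketch below repeats the call with one addition that is not in the original code: random_state=0, an assumed seed value that makes the generated data reproducible between runs.

```python
import numpy as np
from sklearn.datasets import make_blobs

# Same settings as in Step 2, plus a fixed seed (assumed value) for reproducibility.
features, clusters = make_blobs(n_samples=2000,
                                n_features=10,
                                centers=5,
                                cluster_std=0.4,
                                shuffle=True,
                                random_state=0)

print(features.shape)  # (2000, 10): one row per sample, one column per feature
print(clusters.shape)  # (2000,): one cluster label per sample

# The samples are split evenly across the centers: 400 per cluster here.
labels, counts = np.unique(clusters, return_counts=True)
print(labels, counts)

# Calling make_blobs again with the same seed reproduces the same data.
features_again, _ = make_blobs(n_samples=2000, n_features=10, centers=5,
                               cluster_std=0.4, shuffle=True, random_state=0)
print(np.array_equal(features, features_again))  # True
```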

Step 3 - Viewing the dataset

We are viewing the first five rows of the dataset.

print("Feature Matrix: ")
print(pd.DataFrame(features, columns=["Feature 1", "Feature 2", "Feature 3",
                                      "Feature 4", "Feature 5", "Feature 6", "Feature 7", "Feature 8",
                                      "Feature 9", "Feature 10"]).head())

Step 4 - Plotting the dataset

We are drawing a scatter plot of the first two features of the dataset.

plt.scatter(features[:, 0], features[:, 1])
plt.show()
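Since make_blobs also returns the cluster label of every sample, the points can be colored by cluster to make the groups easier to see. The following is one possible variation of the plot, not the original tutorial's code: the seed, colormap, marker size, and axis labels are assumptions chosen for illustration.

```python
import matplotlib.pyplot as plt
from sklearn.datasets import make_blobs

# Regenerate the data so that this example is self-contained (seed is an assumed value).
features, clusters = make_blobs(n_samples=2000, n_features=10, centers=5,
                                cluster_std=0.4, shuffle=True, random_state=0)

fig, ax = plt.subplots()

# c=clusters maps each point's cluster label to a color from the colormap.
scatter = ax.scatter(features[:, 0], features[:, 1],
                     c=clusters, cmap="viridis", s=10)

ax.set_xlabel("Feature 1")
ax.set_ylabel("Feature 2")
ax.set_title("Simulated clusters (first two features)")

# legend_elements() builds one legend entry per distinct cluster label.
ax.legend(*scatter.legend_elements(), title="Cluster")

plt.show()
```

Only two of the ten features are shown, so clusters that look close or overlapping in this view may still be well separated along the other features.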

The output looks like this. Because no random seed is set in make_blobs, the exact values will differ on each run:

Feature Matrix:
   Feature 1  Feature 2  Feature 3  Feature 4  Feature 5  Feature 6
0  -3.250833   8.562522   9.593569  -3.485778  -7.546606   5.552687
1   9.054550  -7.848605   6.113184  -1.216320   0.938390  -0.014400
2  -3.283226   8.265441   9.444884  -4.683565  -9.065774   5.621277
3   9.046466  -7.939761   5.010928  -0.324473   0.564307   0.236226
4  -5.023092   3.376868  -1.774365   0.098546  -0.511007   2.635681

   Feature 7  Feature 8  Feature 9  Feature 10
0  -2.705651  -5.992366  -1.286639    9.337890
1   4.675954   3.914470   2.751996    4.704688
2  -1.872878  -5.695557  -0.861680    9.692971
3   4.224936   4.444636   2.813714    4.280825
4  -3.561718  -4.892824   0.898923   -0.429435
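One reason to simulate data is that the true cluster labels are known, so you can check how well a clustering algorithm recovers them. The sketch below is an illustrative follow-up rather than part of the original steps: the use of K-Means, the seed values, and the adjusted Rand index as the comparison metric are all assumptions made for this example.

```python
from sklearn.cluster import KMeans
from sklearn.datasets import make_blobs
from sklearn.metrics import adjusted_rand_score

# Simulated data with known labels (same settings as above, with an assumed seed).
features, clusters = make_blobs(n_samples=2000, n_features=10, centers=5,
                                cluster_std=0.4, shuffle=True, random_state=0)

# Fit K-Means with the same number of clusters that was used to generate the data.
kmeans = KMeans(n_clusters=5, n_init=10, random_state=0)
predicted = kmeans.fit_predict(features)

# The adjusted Rand index compares two labelings while ignoring how the
# clusters are numbered; 1.0 means the groupings are identical.
ari = adjusted_rand_score(clusters, predicted)
print(f"Adjusted Rand index: {ari:.3f}")
```

With tight, well-separated blobs like these, the score should be close to 1. Raising cluster_std makes the clusters overlap more and gives the algorithm a harder problem to solve.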

Become a Machine Learning Expert with ProjectPro!

From exploring new algorithms and tuning hyperparameters to understanding the behavior of existing models, the ability to generate simulated datasets helps you advance your understanding of cluster analysis in Python. ProjectPro offers access to over 270 projects focused on data science and machine learning. These projects let individuals apply their knowledge in practical scenarios and gain hands-on experience, which is essential for becoming a machine learning expert. Each project has step-by-step video tutorials, hands-on labs, and personalized guidance to learn data science techniques in diverse contexts and gain valuable insights to drive decision-making processes.
