How To Check a Model’s Recall Score Using Cross-Validation in Python?

This recipe will show you how to check a model's recall score using cross-validation in Python.


Cross Validation Recall Score Python Code Recipe Objective

Worried about your machine learning model's accuracy? Let's put it to the test and check your model's recall score using cross-validation in Python. Follow ProjectPro’s Python Code recipe to see just how well your model is performing!


Why Check a Model’s Cross-Validated Recall Score?

Checking a model's recall score using cross-validation in Python is an important step in evaluating the performance of a machine learning model. Here are some reasons why you might want to do this:

- Imbalanced Data: If your dataset has an imbalanced class distribution, meaning one class has significantly more or fewer samples than the others, accuracy can be a misleading metric: a model that always predicts the majority class can reach high accuracy while never detecting the minority class. Recall measures the proportion of actual positives that the model correctly identifies, regardless of the number of false positives, so it exposes this failure. Evaluating recall with cross-validation therefore gives a more informative estimate of how well the model finds the positive class.

- Model Generalization: Cross-validation helps evaluate how well the model generalizes to new data. By testing the model on multiple held-out subsets of the data, you get a better sense of its ability to make accurate predictions on unseen data. Comparing recall on the training folds with recall on the held-out folds helps reveal whether the model is overfitting the training data.

- Hyperparameter Tuning: Cross-validation can be used to tune a model's hyperparameters, such as the regularization strength or the learning rate. Using recall as the cross-validation score helps identify the hyperparameter values that maximize recall on the held-out validation folds.

- Model Comparison: Cross-validation can also be used to compare multiple models on the same dataset. By evaluating each model's recall on the same cross-validation folds, you can see which one performs best on held-out data, which helps guide your choice of a model for deployment.
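To make the imbalanced-data point concrete, here is a minimal sketch on a synthetic dataset (an assumption for illustration, generated with make_classification at roughly a 95/5 class split). A DummyClassifier that always predicts the majority class gets high cross-validated accuracy but zero recall:

```python
from sklearn.datasets import make_classification
from sklearn.dummy import DummyClassifier
from sklearn.model_selection import cross_val_score

# Synthetic, imbalanced binary dataset: roughly 95% class 0, 5% class 1
X, y = make_classification(n_samples=2000, weights=[0.95, 0.05], random_state=0)

# A baseline that always predicts the majority class (0)
baseline = DummyClassifier(strategy="most_frequent")

accuracy = cross_val_score(baseline, X, y, scoring="accuracy", cv=5)
recall = cross_val_score(baseline, X, y, scoring="recall", cv=5)

print(f"Mean accuracy: {accuracy.mean():.3f}")  # high, roughly 0.95
print(f"Mean recall:   {recall.mean():.3f}")    # 0.000: no positive is ever found
```

A real model's recall is only meaningful relative to a baseline like this one; high accuracy alone says little about the minority class.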

In short, checking a model's cross-validated recall score matters most when you are dealing with imbalanced data or need to ensure that the model generalizes well to new data.
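For the hyperparameter-tuning and model-comparison uses listed above, scikit-learn's GridSearchCV and cross_val_score both accept scoring="recall". The sketch below is one possible setup, assuming the breast cancer dataset used later in this recipe; the max_depth grid and the logistic-regression pipeline are illustrative choices, not recommendations:

```python
from sklearn.datasets import load_breast_cancer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.tree import DecisionTreeClassifier

X, y = load_breast_cancer(return_X_y=True)

# Hyperparameter tuning: pick max_depth by mean 5-fold recall on the validation folds
search = GridSearchCV(
    DecisionTreeClassifier(random_state=0),
    param_grid={"max_depth": [2, 4, 6, None]},  # illustrative values only
    scoring="recall",
    cv=5,
)
search.fit(X, y)
print("Best params:", search.best_params_)
print("Best mean CV recall:", round(search.best_score_, 3))

# Model comparison: same folds (cv=5 is deterministic here) and same metric for each model
models = {
    "decision tree": DecisionTreeClassifier(random_state=0),
    "logistic regression": make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000)),
}
for name, model in models.items():
    scores = cross_val_score(model, X, y, scoring="recall", cv=5)
    print(f"{name}: mean recall {scores.mean():.3f} (std {scores.std():.3f})")
```

Because best_score_ is measured on the same folds that were used to choose max_depth, it tends to be optimistic; a separate held-out test set or nested cross-validation gives a less biased estimate.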

Steps to Check Model’s Recall Score Using Cross-validation in Python

Below are a few easy-to-follow steps to check your model’s cross-validation recall score in Python.

Step 1 - Import The Library

    from sklearn.model_selection import cross_val_score
    from sklearn.tree import DecisionTreeClassifier
    from sklearn import datasets

We import only cross_val_score, DecisionTreeClassifier, and the datasets module.

Step 2 - Setting Up The Data

We load scikit-learn's built-in breast cancer dataset to train the model, storing the features in X and the target labels in y.

    cancer = datasets.load_breast_cancer()
    X = cancer.data
    y = cancer.target

Step 3 - Model and Cross-validation

We use DecisionTreeClassifier as the model and evaluate it with cross_val_score, passing the model, the data, scoring="recall", and cv=5 (five folds). We then compute the mean and standard deviation of the five fold scores. Note that scoring="recall" reports recall for the positive label 1, which in this dataset means benign; malignant tumors are labeled 0.

    dtree = DecisionTreeClassifier()

    # Run 5-fold cross-validation once and reuse the scores, so the mean and
    # standard deviation describe the same five folds that are printed
    scores = cross_val_score(dtree, X, y, scoring="recall", cv=5)
    print(scores)

    mean_score = scores.mean()
    std_score = scores.std()
    print(mean_score)
    print(std_score)

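One run printed the following per-fold recall scores (your values may differ slightly, because DecisionTreeClassifier picks at random among equally good splits unless random_state is set):

    [0.90277778 0.93055556 0.95774648 0.95774648 0.84507042]

These five scores have a mean of about 0.919 and a standard deviation of about 0.042.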
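Two refinements to Step 3 may be worth considering. The sketch below is an illustration, not part of the original recipe: it fixes random_state on both the tree and a shuffled StratifiedKFold so repeated runs match (the seed 42 is arbitrary), measures recall for the malignant class (label 0) with make_scorer(recall_score, pos_label=0) alongside the default benign recall, and passes return_train_score=True to cross_validate so you can compare training and held-out recall as a rough overfitting check:

```python
from sklearn import datasets
from sklearn.metrics import make_scorer, recall_score
from sklearn.model_selection import StratifiedKFold, cross_validate
from sklearn.tree import DecisionTreeClassifier

cancer = datasets.load_breast_cancer()
X, y = cancer.data, cancer.target
print(cancer.target_names)  # ['malignant' 'benign']: label 0 = malignant, 1 = benign

# Fixed seeds for the tree and the shuffled folds make repeated runs reproducible
dtree = DecisionTreeClassifier(random_state=42)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=42)

scoring = {
    "recall_benign": "recall",  # built-in scorer: positive label is 1 (benign)
    "recall_malignant": make_scorer(recall_score, pos_label=0),
}
results = cross_validate(dtree, X, y, scoring=scoring, cv=cv, return_train_score=True)

for name in scoring:
    test = results[f"test_{name}"]
    train = results[f"train_{name}"]
    # A large gap between train and test recall suggests overfitting
    print(f"{name}: test {test.mean():.3f} +/- {test.std():.3f}, train {train.mean():.3f}")
```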

Cross Validation Techniques

Here are some of the cross-validation methods you can use to evaluate your machine learning models' performance:

K-fold Cross-validation

This is one of the most popular cross-validation techniques. It divides the data into k roughly equal subsets (folds), then trains and tests the model k times, each time using a different fold as the test set and the remaining k - 1 folds for training.

The splitting itself is simple enough to implement by hand, without the sklearn library.

Stratified K-fold Cross-validation

Stratified K-fold cross-validation is a variant of K-fold that ensures each fold has roughly the same class distribution as the original dataset. This is especially helpful when working with imbalanced datasets. When you pass an integer cv to cross_val_score with a classifier, as in Step 3, scikit-learn uses stratified K-fold (without shuffling) by default.

Leave-one-out Cross-validation

In this method, a single instance serves as the test set and the remaining data is used for training. The process is repeated for each instance, and the outcomes are aggregated. It is equivalent to K-fold with k equal to the number of samples, so it becomes expensive on large datasets.
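The three strategies above can be sketched as follows, assuming the breast cancer dataset and a DecisionTreeClassifier. The helper kfold_indices is hypothetical, written here only to show the K-fold splitting done by hand with NumPy; the model and the recall metric still come from scikit-learn. For leave-one-out, a test fold holding a single negative sample contains no positives, so per-fold recall is undefined (scikit-learn reports 0 with a warning); the sketch therefore pools the out-of-fold predictions with cross_val_predict and computes one recall over all of them:

```python
import numpy as np
from sklearn.datasets import load_breast_cancer
from sklearn.metrics import recall_score
from sklearn.model_selection import (
    LeaveOneOut, StratifiedKFold, cross_val_predict, cross_val_score,
)
from sklearn.tree import DecisionTreeClassifier

X, y = load_breast_cancer(return_X_y=True)
model = DecisionTreeClassifier(random_state=0)


def kfold_indices(n_samples, k, seed=0):
    """Hypothetical helper: yield (train_idx, test_idx) for each of k shuffled folds."""
    rng = np.random.default_rng(seed)
    indices = rng.permutation(n_samples)
    folds = np.array_split(indices, k)  # k nearly equal-sized folds
    for i in range(k):
        test_idx = folds[i]
        train_idx = np.concatenate([folds[j] for j in range(k) if j != i])
        yield train_idx, test_idx


# 1) K-fold with the splitting done by hand
manual_scores = []
for train_idx, test_idx in kfold_indices(len(y), k=5):
    model.fit(X[train_idx], y[train_idx])
    manual_scores.append(recall_score(y[test_idx], model.predict(X[test_idx])))
print("Manual 5-fold recall:", np.round(manual_scores, 3))

# 2) Stratified K-fold: each fold keeps roughly the original class proportions
skf = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
strat_scores = cross_val_score(model, X, y, scoring="recall", cv=skf)
print("Stratified 5-fold recall:", strat_scores.round(3))

# 3) Leave-one-out: pool the single-sample predictions, then compute recall once
loo_pred = cross_val_predict(model, X, y, cv=LeaveOneOut())
print("Leave-one-out recall:", round(recall_score(y, loo_pred), 3))
```

Leave-one-out fits the model once per sample (569 fits here), which is fine for a small tree but grows quickly with larger datasets or slower models.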


Overview of Precision-Recall Metrics

This section will help you understand the Precision and Recall metrics for assessing the performance of your machine learning models.

Can Precision and Recall Be the Same?

Precision is the proportion of positive predictions that are actually positive, TP / (TP + FP), while recall is the proportion of actual positive instances that the model correctly identifies, TP / (TP + FN). Both share the same numerator (true positives) but have different denominators, so they usually differ. They can, however, be exactly equal: this happens whenever the number of false positives equals the number of false negatives, as long as the model makes at least one positive prediction.
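A small worked check of that condition, using hypothetical hand-written labels (not data from this recipe): the first set of predictions has one false positive and one false negative, the second has one false positive and two false negatives.

```python
from sklearn.metrics import confusion_matrix, precision_score, recall_score

# Hypothetical labels: 1 = positive class, 0 = negative class
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 1, 0]

# One false positive and one false negative (FP == FN)
y_pred_equal = [1, 1, 1, 0, 1, 0, 0, 0, 1, 0]

# One false positive but two false negatives (FP != FN)
y_pred_diff = [1, 1, 0, 0, 1, 0, 0, 0, 1, 0]

for name, y_pred in [("FP == FN", y_pred_equal), ("FP != FN", y_pred_diff)]:
    tn, fp, fn, tp = confusion_matrix(y_true, y_pred).ravel()
    p = precision_score(y_true, y_pred)  # tp / (tp + fp)
    r = recall_score(y_true, y_pred)     # tp / (tp + fn)
    print(f"{name}: TP={tp}, FP={fp}, FN={fn} -> precision={p:.2f}, recall={r:.2f}")
```

The first case prints precision=0.80 and recall=0.80 (4 / 5 each); the second prints precision=0.75 (3 / 4) and recall=0.60 (3 / 5).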

Precision-Recall Calculation Using Cross-validation

Let us consider a ‘precision and recall’ simple example to show the steps for precision-recall curve calculation using cross-validation:

-

Load the data first, then preprocess it as required. Depending on your needs, you can use techniques like scaling, normalization, feature extraction, or any other type of data processing.

-

Next, specify the cross-validation method and classifier you want to use. In this case, we will use stratified k-fold cross-validation with five folds and logistic regression as the classifier.

-

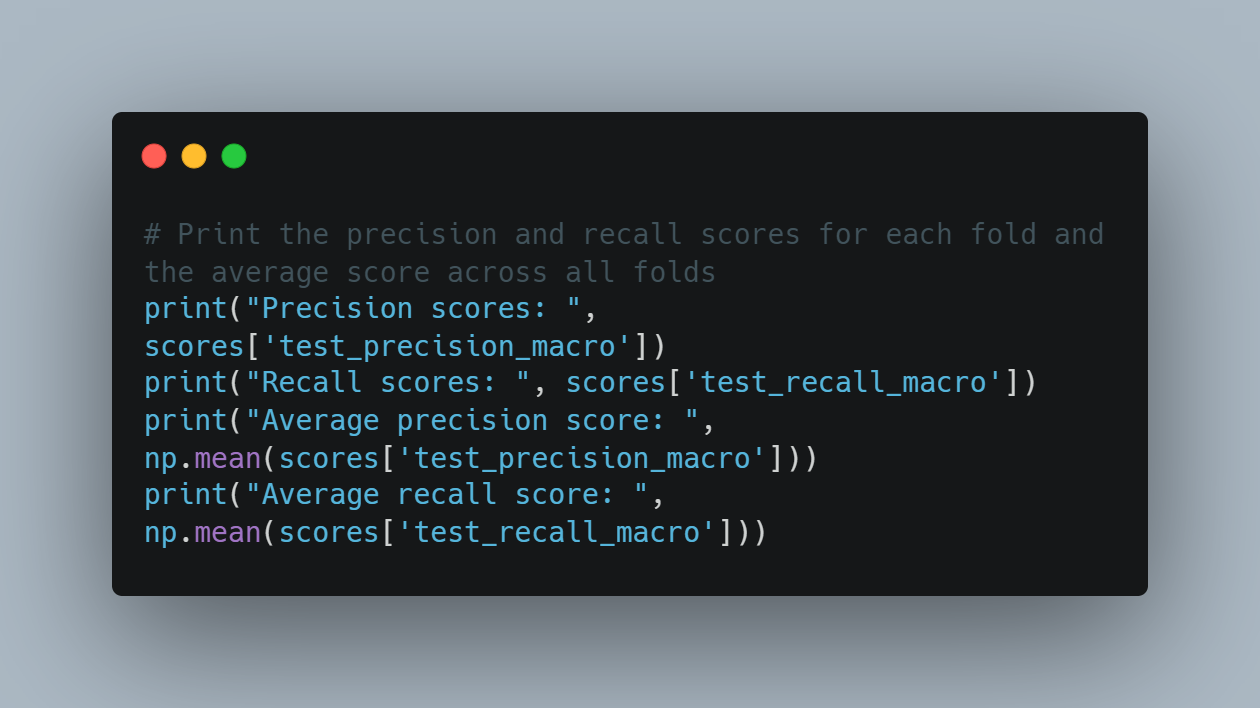

Now, use the cross_validate function to calculate the precision and recall scores.

-

Print the average scores across all folds and the precision and recall scores for each fold.
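The four steps above can be sketched as follows. This is not the code from the original image; it assumes the breast cancer dataset, and the StandardScaler pipeline, shuffle=True, random_state=0, and max_iter=1000 are choices made here for illustration. Putting the scaler inside the pipeline means each fold is scaled using only its own training portion:

```python
from sklearn.datasets import load_breast_cancer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_validate
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Step 1: load the data (scaling is handled inside the pipeline below)
X, y = load_breast_cancer(return_X_y=True)

# Step 2: stratified 5-fold cross-validation and a logistic regression classifier
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
clf = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))

# Step 3: compute precision and recall on every fold in a single call
results = cross_validate(clf, X, y, cv=cv, scoring=["precision", "recall"])

# Step 4: per-fold scores and their averages
folds = zip(results["test_precision"], results["test_recall"])
for fold, (p, r) in enumerate(folds, start=1):
    print(f"Fold {fold}: precision={p:.3f}, recall={r:.3f}")
print(f"Mean precision: {results['test_precision'].mean():.3f}")
print(f"Mean recall:    {results['test_recall'].mean():.3f}")
```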


FAQs on Cross Validation Python Code

What is the 4-fold cross-validation accuracy?

The 4-fold cross-validation accuracy is an estimate of a machine learning model's accuracy. The dataset is split into four roughly equal sections; the model is trained on three of them and evaluated on the remaining one, and this is repeated four times so that each section serves as the test set exactly once. The four accuracy scores are then averaged.

Does cross-validation improve accuracy?

Not directly. Cross-validation does not change the model itself; it provides a more reliable estimate of the model's performance than a single train/test split. By testing the model on multiple data subsets, it helps you detect overfitting and, when used for model selection or hyperparameter tuning, choose a model that performs better on unseen data.

What is the concept of cross-validation?

Cross-validation is a technique for assessing the performance of a machine learning model. The available data is split into several subsets; the model is trained on some of them and tested on the remaining ones, which estimates how well it will perform on unseen data.

What are accuracy, precision, and recall?

Accuracy, precision, and recall are evaluation metrics used to measure the performance of classification models. Accuracy is the proportion of all predictions that are correct, precision is the proportion of positive predictions that are actually positive, and recall is the proportion of actual positive instances that the model correctly identifies.
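To tie the first and last answers together, here is a minimal sketch that computes 4-fold cross-validated accuracy, precision, and recall in one call, assuming the breast cancer dataset and a DecisionTreeClassifier with an arbitrary random_state=0:

```python
from sklearn.datasets import load_breast_cancer
from sklearn.model_selection import cross_validate
from sklearn.tree import DecisionTreeClassifier

X, y = load_breast_cancer(return_X_y=True)

# cv=4: four folds; each fold is the test set once while the other three train the model
results = cross_validate(
    DecisionTreeClassifier(random_state=0), X, y,
    cv=4, scoring=["accuracy", "precision", "recall"],
)

for metric in ("accuracy", "precision", "recall"):
    scores = results[f"test_{metric}"]
    print(f"4-fold {metric}: per fold {scores.round(3)}, mean {scores.mean():.3f}")
```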

