How to Write a Hive SQL Query to Create Tables & Query Data?

Create tables & extract insights using Hive SQL. This recipe provides a practical roadmap to Hive, making big data analytics a breeze. | ProjectPro

Recipe Objective - How to Write a Hive SQL Query to Create Tables & Query Data?

Hive is a powerful data warehousing and SQL-like query language that facilitates the management and analysis of large datasets in a Hadoop ecosystem. It is widely used for querying, summarizing, and analyzing structured and semi-structured data. Check out this recipe to go through the process of creating tables and querying data in Hive using SQL queries. It will also cover the essential steps and provide examples to help you understand the concepts involved.

Understanding Hive SQL

Before diving into writing SQL queries in Hive, it's essential to understand the basics of Hive SQL and its syntax. Hive SQL is similar to traditional SQL, but it has its own set of commands and functions tailored for distributed data processing in Hadoop.

Hadoop Project to Perform Hive Analytics using SQL and Scala

Hive SQL Syntax

Hive SQL follows a syntax that's similar to other SQL dialects, but with some unique features for handling big data. A typical Hive SQL query consists of:

-

SELECT: To retrieve data from tables.

-

FROM: To specify the source table.

-

WHERE: To filter data based on conditions.

-

GROUP BY: To group data.

-

HAVING: To filter grouped data.

-

ORDER BY: To sort the result.

-

JOIN: To combine data from multiple tables.

Hive SQL Examples

Here are some Hive SQL examples for common data operations:

-

Aggregations: Compute sums, averages, or other aggregations on data.

-

Joins: Combine data from multiple tables.

-

Subqueries: Use subqueries within your SQL statements.

-

Conditional Logic: Implement conditional statements in your queries.

How to Write a Hive SQL Query to Create Tables - Step-by-Step Guide

Here is a step-by-step guide on crafting Hive SQL queries to master the art of table creation in Hive for efficient data management.

System requirements: Hive SQL Query to Create Tables

-

Install Ubuntu in the virtual machine Click Here

-

Install single node Hadoop machine Click Here

-

Install Apache Hive Click Here

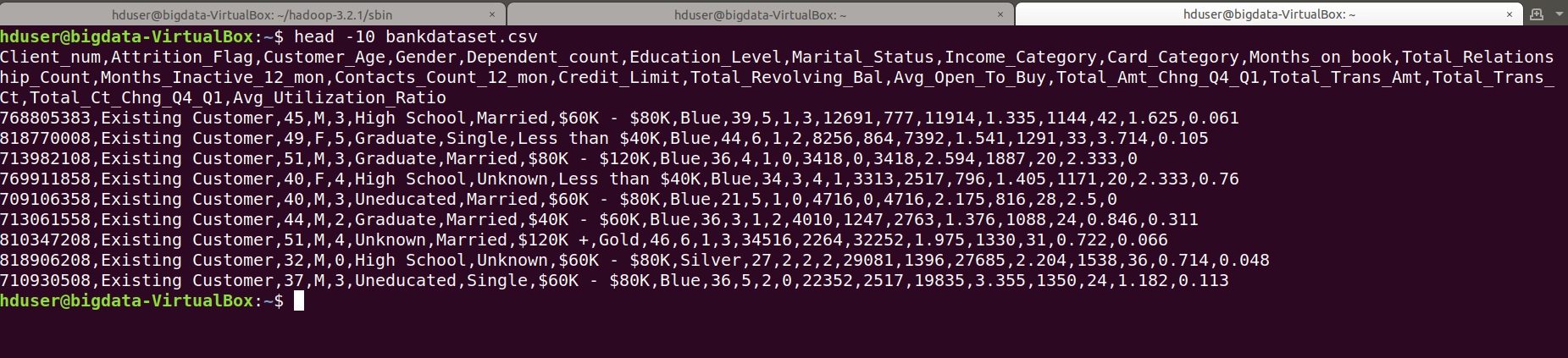

Step 1: Prepare a Dataset

Here we are using the bank related comma separated values (csv) dataset for the create hive table in local.

Use linux command to check data as follows:

head -10 listbankdataset.csv

Data is looks as follows :

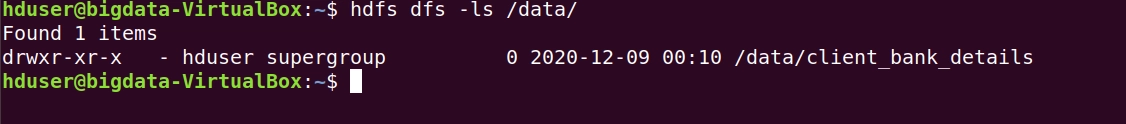

Step 2 : Copy the CSV Data to HDFS (Hadoop Distributed File System)

Here we are going to copy the csv file to HDFS. Before that we need to create a directory to store the file. To create a directory in the HDFS:

hduser@bigdata-VirtualBox:~$ hdfs dfs -mkdir /data hduser@bigdata-VirtualBox:~$ hdfs dfs -mkdir /data/client_bank_details/

above command as follows:

![]()

In the above commands we create /data folder 1st then inside the data folder created another folder client_bank_details.

To check whether a directory is created or not.

To copy the csv file use below command

hduser@bigdata-VirtualBox:~$ hdfs dfs -put /home/hduser/listbankdataset.csv /data/client_bank_details/

To verify the file is exists or not in directory path follow as below:

hduser@bigdata-VirtualBox:~$ hdfs dfs -ls /data/client_bank_details/

![]()

Note: In our scenario directory path in the hadoop is /data/client_bank_details/

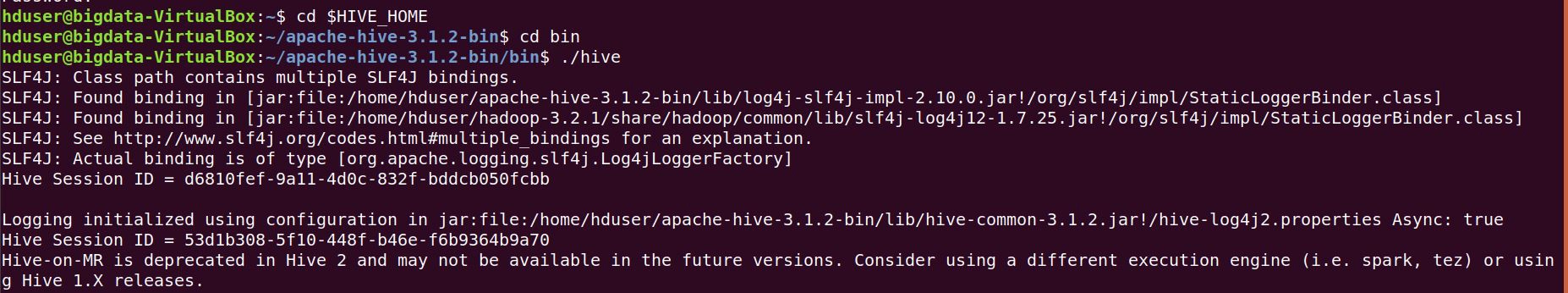

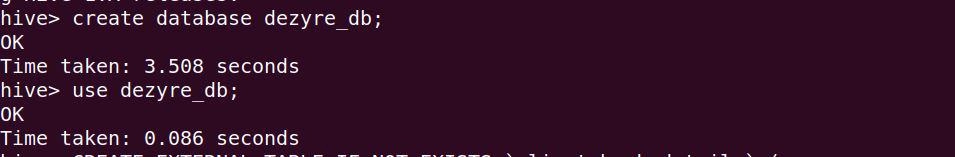

Before create an external table open the hive shell and we need to create a database as follows : Open the hive shell

To create a database :

Create database dezyre_db; use dezyre_db;

Retail Analytics Project Example using Sqoop, HDFS, and Hive

Step 3 : Create an External Hive Table and Load the Data

Here we are going create an external hive table on the top of the csv file for that use below hive script to create a table as follows :

CREATE EXTERNAL TABLE IF NOT EXISTS `client_bank_details` ( `client_num` string, `attrition_flag` string, `customer_age` string, `gender` string, `dependent_count` string, `education_level` string, `marital_atatus` string, `Income_xategory` string, `card_xategory` string, `months_on_book` string, `total_relationship_count` string, `months_inactive_12_mon` string, `contacts_count_12_mon` string, `credit_cimit` string, `total_revolving_bal` string, `avg_open_to_buy` string, `total_amt_chng_q4_q1` string, `total_trans_amt` string, `total_trans_ct` string, `total_ct_chng_q4_q1` string, `avg_utilization_ratio` string ) ROW FORMAT SERDE 'org.apache.hadoop.hive.serde2.OpenCSVSerde' WITH SERDEPROPERTIES ( "separatorChar" = ",", "quoteChar" = "\"" ) STORED AS TEXTFILE LOCATION '/data/client_bank_details' TBLPROPERTIES ("skip.header.line.count"="1");

In the above hql script we are creating an external by specifying the data format and location and also skipping the headers of the csv file.

above command looks as follows:

Airline Dataset Analysis using Hadoop, Hive, Pig and Athena

Step 4 : Query and Verify the Data

To query the data using below query in hive shell

Select * from client_bank_details limit 10;

Output of the above code:

Explore More about Hive SQL Operations with ProjectPro!

Mastering Hive SQL is a valuable skill for data professionals. The ability to create tables and query data using Hive SQL is essential in the world of data science and big data. However, the real magic happens when you apply your knowledge in real-world projects. To gain practical experience and deepen your expertise, consider exploring ProjectPro. This one-stop platform offers a repository of over 270+ projects, providing you with the hands-on experience you need to excel in the field of data science and big data. Don't just learn Hive SQL, put it to use with ProjectPro!