Top 100 AWS Interview Questions and Answers for 2024

Prepare for your cloud computing job interview with this list of top AWS Interview Questions for Freshers and Experienced and land a top gig as AWS expert.

Land your dream job with these AWS interview questions and answers suitable for multiple AWS Cloud computing roles starting from beginner to advanced levels.

“I would like to become an AWS Solution Architect. What do you think are the most commonly asked AWS Architect Interview questions that I will have to answer during my interview?”

We often get asked this question by beginners and professionals looking to land a top cloud computing gig. If you are looking for an AWS cloud computing job, you will need to prepare a list of questions that can help you excel at your next cloud computing job interview. ProjectPro brings you the list of top AWS interview questions curated in close collaboration with a panel of AWS cloud computing experts.

Table of Contents

- Top AWS Interview Questions and Answers for 2024

- AWS Basic Interview Questions

- Advanced AWS Interview Questions and Answers

- Scenario-Based AWS Interview Questions

- AWS EC2 Interview Questions and Answers

- AWS S3 Interview Questions and Answers

- AWS IAM Interview Questions and Answers

- AWS Cloud Engineer Interview Questions and Answers

- AWS Technical Interview Questions

- Terraform AWS Interview Questions and Answers

- AWS Glue Interview Questions and Answers

- AWS Lambda Interview Questions and Answers

- AWS Interview Questions and Answers for DevOps

- AWS Interview Questions and Answers for Java Developer

- AWS Interview Questions for Testers

- AWS Data Engineer Interview Questions and Answers

- Infosys AWS Interview Questions

- FAQs on AWS Interview Questions and Answers

Top AWS Interview Questions and Answers for 2024

AWS Basic Interview Questions

Below are some commonly-asked AWS Interview Questions and Answers for Freshers to gain a better understanding of the various AWS services and their implementation.

1. Differentiate between on-demand instances and spot instances.

Spot Instances are spare, unused Amazon Elastic Compute Cloud (EC2) capacity that you can bid for. Once your bid (the maximum price you are willing to pay) exceeds the current Spot price, which changes based on supply and demand, the Spot Instance is launched. If the Spot price rises above the bid price, the instance can be reclaimed at any time, with a two-minute interruption notice before it is terminated. The best way to decide on an optimal bid price is to check the Spot price history for the last 90 days, available on the AWS console. The advantage of Spot Instances is that they are cost-effective; the drawback is that they can be terminated at any time. Spot Instances are ideal to use when:

- There are optional, nice-to-have tasks.
- You have flexible workloads that can run when there is enough computing capacity.
- Tasks require extra computing capacity to improve performance.

On-demand instances are made available whenever you require them, and you need to pay for the time you use them hourly. These instances can be released when they are no longer required and do not require any upfront commitment. The availability of these instances is guaranteed by AWS, unlike spot instances.

The best practice is to launch a couple of on-demand instances which can maintain a minimum level of guaranteed compute resources for the application and add on a few spot instances whenever there is an opportunity.
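
The cost effect of such a mix can be estimated with simple arithmetic. The following is a minimal sketch only: the prices and fleet sizes are hypothetical placeholders rather than real AWS rates, and `blended_hourly_cost` is an illustrative helper name.

```python
def blended_hourly_cost(on_demand_count, spot_count, on_demand_price, spot_price):
    """Return the hourly cost of a fleet mixing On-Demand and Spot instances."""
    return on_demand_count * on_demand_price + spot_count * spot_price


# Hypothetical prices in USD per instance-hour (assumed, not real AWS prices).
ON_DEMAND_PRICE = 0.10
SPOT_PRICE = 0.03

# Six instances, all On-Demand, versus two On-Demand plus four Spot.
baseline = blended_hourly_cost(6, 0, ON_DEMAND_PRICE, SPOT_PRICE)
mixed = blended_hourly_cost(2, 4, ON_DEMAND_PRICE, SPOT_PRICE)
savings = 1 - mixed / baseline

print(f"all on-demand: ${baseline:.2f}/h, mixed: ${mixed:.2f}/h, savings: {savings:.0%}")
```

The two On-Demand instances represent the guaranteed minimum capacity; the Spot instances are the part of the fleet that may disappear on short notice.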

2. What is the boot time for an instance store-backed instance?

The boot time for an Amazon instance store-backed AMI is usually less than 5 minutes.

3. Is it possible to vertically scale on an Amazon Instance? If yes, how?

Yes. The following steps scale an Amazon EC2 instance vertically:

- Spin up a new instance that is larger than the existing one.
- Pause the new instance, detach its root EBS volume from the server, and discard that volume.
- Stop the live running instance and detach its root volume.
- Make a note of the unique device ID and attach that root volume to the new server.
- Start the new instance again.

4. Differentiate between vertical and horizontal scaling in AWS.

The main difference between vertical and horizontal scaling is how you add compute resources to your infrastructure. In vertical scaling, more power is added to the existing machine. In contrast, in horizontal scaling, additional resources are added to the system with the addition of more machines into the network so that the workload and processing are shared among multiple devices. The best way to understand the difference is to imagine retiring your Toyota and buying a Ferrari because you need more horsepower. This is vertical scaling. Another way to get that added horsepower is not to ditch the Toyota for the Ferrari but buy another car. This can be related to horizontal scaling, where you drive several cars simultaneously.

When the users are up to 100, an Amazon EC2 instance alone is enough to run the entire web application or the database until the traffic ramps up. Under such circumstances, when the traffic ramps up, it is better to scale vertically by increasing the capacity of the EC2 instance to meet the increasing demands of the application. AWS supports instances up to 128 virtual cores or 488GB RAM.

When the number of users for your application grows to 1,000 or more, vertical scaling can no longer handle the requests, and horizontal scaling is needed, which is achieved through a distributed file system, clustering, and load balancing.

5. What is the total number of buckets that can be created in AWS by default?

By default, 100 buckets can be created in each AWS account. If additional buckets are required, request a higher bucket limit by submitting a service limit increase.

6. Differentiate between Amazon RDS, Redshift, and DynamoDB.

| Features | Amazon RDS | Redshift | DynamoDB |
| --- | --- | --- | --- |
| Computing Resources | Instances with 64 vCPU and 244 GB RAM | Nodes with vCPU and 244 GB RAM | Not specified, SaaS (Software as a Service) |
| Maintenance Window | 30 minutes every week | 30 minutes every week | No impact |
| Database Engine | MySQL, Oracle DB, SQL Server, Amazon Aurora, PostgreSQL | Redshift | NoSQL |
| Primary Usage Feature | Conventional databases | Data warehouse | Database for dynamically modified data |
| Multi-AZ Replication | Additional service | Manual | In-built |

7. An organization wants to deploy a two-tier web application on AWS. The application requires complex query processing and table joins. However, the company has limited resources and requires high availability. Which is the best configuration for the company based on the requirements?

DynamoDB deals with core problems of database storage, scalability, management, reliability, and performance but does not have an RDBMS’s functionalities. DynamoDB does not support complex joins or query processing, or complex transactions. You can run a relational engine on Amazon RDS or Amazon EC2 for this kind of functionality.

8. What should be the instance’s tenancy attribute for running it on single-tenant hardware?

The instance's tenancy attribute must be set to dedicated; other values are not appropriate for running on single-tenant hardware.

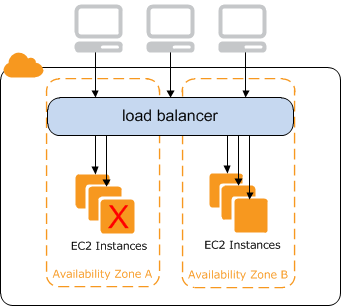

9. What are the important features of a classic load balancer in Amazon Elastic Compute Cloud (EC2)?

- The high availability feature ensures that the traffic is distributed among Amazon EC2 instances in single or multiple availability zones. This ensures a high scale of availability for incoming traffic.
- Classic load balancer can decide whether to route the traffic based on the health check’s results.
- You can implement secure load balancing within a network by creating security groups in a VPC.
- Classic load balancer supports sticky sessions, which ensures a user’s traffic is always routed to the same instance for a seamless experience.

10. What parameters will you consider when choosing the availability zone?

Performance, pricing, latency, and response time are factors to consider when selecting the availability zone.

11. Which instance will you use for deploying a 4-node Hadoop cluster in AWS?

We can use a c4.8xlarge or an i2.large instance for this, but using a c4.8xlarge will require a better configuration on the PC.

12. How will you bind the user session with a specific instance in ELB (Elastic Load Balancer)?

This can be achieved by enabling Sticky Session.
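
The idea behind session stickiness can be sketched with a toy router. This is an illustration under stated assumptions, not how ELB is implemented: the `StickyBalancer` class and the instance IDs are hypothetical, and a real load balancer tracks the binding with a cookie rather than an in-memory dictionary.

```python
import itertools


class StickyBalancer:
    """Toy load balancer: round-robin for new sessions, sticky afterwards."""

    def __init__(self, instances):
        self._next_instance = itertools.cycle(instances)
        self._session_to_instance = {}

    def route(self, session_id):
        # A session seen before goes back to the instance it was bound to.
        if session_id not in self._session_to_instance:
            self._session_to_instance[session_id] = next(self._next_instance)
        return self._session_to_instance[session_id]


# Hypothetical instance IDs used only for illustration.
balancer = StickyBalancer(["i-aaa", "i-bbb"])
first = balancer.route("session-1")
second = balancer.route("session-2")
again = balancer.route("session-1")  # same instance as `first`
```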

13. What are the possible connection issues you encounter when connecting to an Amazon EC2 instance?

- Unprotected private key file
- Server refused key
- Connection timed out
- No supported authentication method available
- Host key not found, permission denied.
- User key not recognized by the server, permission denied.

14. Can you run multiple websites on an Amazon EC2 server using a single IP address?

More than one elastic IP is required to run multiple websites on Amazon EC2.

15. What happens when you reboot an Amazon EC2 instance?

Rebooting an instance is similar to rebooting a PC. The instance does not return to the image’s original state; the hard disk contents are the same as before the reboot.

16. How is stopping an Amazon EC2 instance different from terminating it?

Stopping an Amazon EC2 instance results in a normal shutdown being performed on the instance, and the instance is moved to a stopped state. When an EC2 instance is terminated, however, it is also shut down normally but moves to a terminated state, and any attached EBS volumes that are set to delete on termination are deleted and cannot be recovered.

Advanced AWS Interview Questions and Answers

Here are a few AWS Interview Questions and Answers for experienced professionals to further strengthen their knowledge of AWS services useful in cloud computing.

17. Mention the native AWS security logging capabilities.

AWS CloudTrail:

This AWS service facilitates security analysis, compliance auditing, and resource change tracking of an AWS environment. It can also provide a history of AWS API calls for a particular account. CloudTrail is an essential AWS service for understanding AWS use and should be enabled in all AWS regions for all AWS accounts, irrespective of where the services are deployed. CloudTrail delivers log files, with optional log file integrity validation, to a designated Amazon S3 (Amazon Simple Storage Service) bucket approximately every five minutes. When new logs have been delivered, AWS CloudTrail can be configured to send notifications using Amazon Simple Notification Service (Amazon SNS). It can also integrate with Amazon CloudWatch Logs and AWS Lambda for processing purposes.

AWS Config:

AWS Config is another significant AWS service that can create an AWS resource inventory, send notifications for configuration changes and maintain relationships among AWS resources. It provides a timeline of changes in resource configuration for specific services. If multiple changes occur over a short interval, then only the cumulative changes are recorded. Snapshots of changes are stored in a configured Amazon S3 bucket and can be set to send Amazon SNS notifications when resource changes are detected in AWS. Apart from tracking resource changes, AWS Config should be enabled to troubleshoot or perform any security analysis and demonstrate compliance over time or at a specific time interval.

AWS Detailed Billing Reports:

Detailed billing reports show the cost breakdown by the hour, day, or month, by a particular product or product resource, by each account in a company, or by customer-defined tags. Billing reports indicate how AWS resources are consumed and can be used to audit a company’s consumption of AWS services. AWS publishes detailed billing reports to a specified S3 bucket in CSV format several times daily.

Amazon S3 (Simple Storage Service) Access Logs:

Amazon S3 Access logs record information about individual requests made to the Amazon S3 buckets and can be used to analyze traffic patterns, troubleshoot, and perform security and access auditing. The access logs are delivered to designated target S3 buckets on a best-effort basis. They can help users learn about the customer base, define access policies, and set lifecycle policies.

Elastic Load Balancing Access Logs:

Elastic Load Balancing access logs record the individual requests made to a particular load balancer. They can also be used to analyze traffic patterns, perform troubleshooting, and manage security and access auditing. The logs give information about the request processing durations. This data can improve user experiences by discovering user-facing errors generated by the load balancer and debugging any errors in communication between the load balancers and back-end web servers. Elastic Load Balancing access logs are delivered to a configured target S3 bucket at five- or sixty-minute intervals, based on user requirements.

Amazon CloudFront Access Logs:

Amazon CloudFront Access logs record individual requests made to CloudFront distributions. Like the previous two access logs, Amazon CloudFront Access Logs can also be used to analyze traffic patterns, perform any troubleshooting required, and for security and access auditing. Users can use these access logs to gather insight about the customer base, define access policies, and set lifecycle policies. CloudFront Access logs get delivered to a configured S3 bucket on a best-effort basis.

Amazon Redshift Logs:

Amazon Redshift logs collect and record information concerning database connections, any changes to user definitions, and activity. The logs can be used for security monitoring and troubleshooting any database-related issues. Redshift logs get delivered to a designated S3 bucket.

Amazon Relational Database Service (RDS) Logs:

RDS logs record information on database access, errors, performance, and operation. They make it possible to analyze the security, performance, and operation of AWS-managed databases. RDS logs can be viewed, watched, or downloaded using the Amazon RDS console, the Amazon RDS API, or the AWS Command Line Interface. The log files may also be queried by using DB engine-specific database tables.

Amazon VPC Flow Logs:

Amazon VPC Flow logs collect information specific to the IP traffic, incoming and outgoing from the Amazon Virtual Private Cloud (Amazon VPC) network interfaces. They can be applied, as per requirements, at the VPC, subnet, or individual Elastic Network Interface level. VPC Flow log data is stored using Amazon CloudWatch Logs. To perform any additional processing or analysis, the VPC Flow log data can be exported using Amazon CloudWatch. It is recommended to enable Amazon VPC flow logs for debugging or monitoring policies that require capturing and visualizing network flow data.
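
Once exported, flow log records can be processed with ordinary text tooling. The sketch below parses one record, assuming the default (version 2) space-separated record format; the `parse_flow_record` helper and the sample account and interface IDs are illustrative, and custom log formats would need a different field list.

```python
from collections import namedtuple

# Field order of the default (version 2) flow log record format (assumed here).
FIELDS = ("version account_id interface_id srcaddr dstaddr srcport dstport "
          "protocol packets bytes start end action log_status").split()
FlowRecord = namedtuple("FlowRecord", FIELDS)


def parse_flow_record(line):
    """Split one space-separated flow log line into named fields."""
    parts = line.split()
    if len(parts) != len(FIELDS):
        raise ValueError(f"expected {len(FIELDS)} fields, got {len(parts)}")
    return FlowRecord(*parts)


# Illustrative record with made-up account and interface IDs.
sample = ("2 123456789010 eni-1235b8ca123456789 172.31.16.139 172.31.16.21 "
          "20641 22 6 20 4249 1418530010 1418530070 ACCEPT OK")
record = parse_flow_record(sample)
print(record.srcaddr, "->", record.dstaddr, "port", record.dstport, record.action)
```

All fields are kept as strings; converting ports, byte counts, and timestamps to integers is left to the caller.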

Centralized Log Management Options:

Various options are available in AWS for centrally managing log data. Most of the AWS audit and access logs data are delivered to Amazon S3 buckets, which users can configure.

Consolidation of all the Amazon S3-based logs into a centralized, secure bucket makes it easier to organize, manage and work with the data for further analysis and processing. The Amazon CloudWatch logs provide a centralized service where log data can be aggregated.

18. What is a DDoS attack, and how can you handle it?

A Denial of Service (DoS) attack occurs when a malicious attempt affects the availability of a particular system, such as an application or a website, to the end-users. A DDoS attack or a Distributed Denial of Service attack occurs when the attacker uses multiple sources to generate the attack. DDoS attacks are generally segregated based on the layer of the Open Systems Interconnection (OSI) model that they attack. The most common DDoS attacks tend to be at the Network, Transport, Presentation, and Application layers, corresponding to layers 3, 4, 6, and 7, respectively.

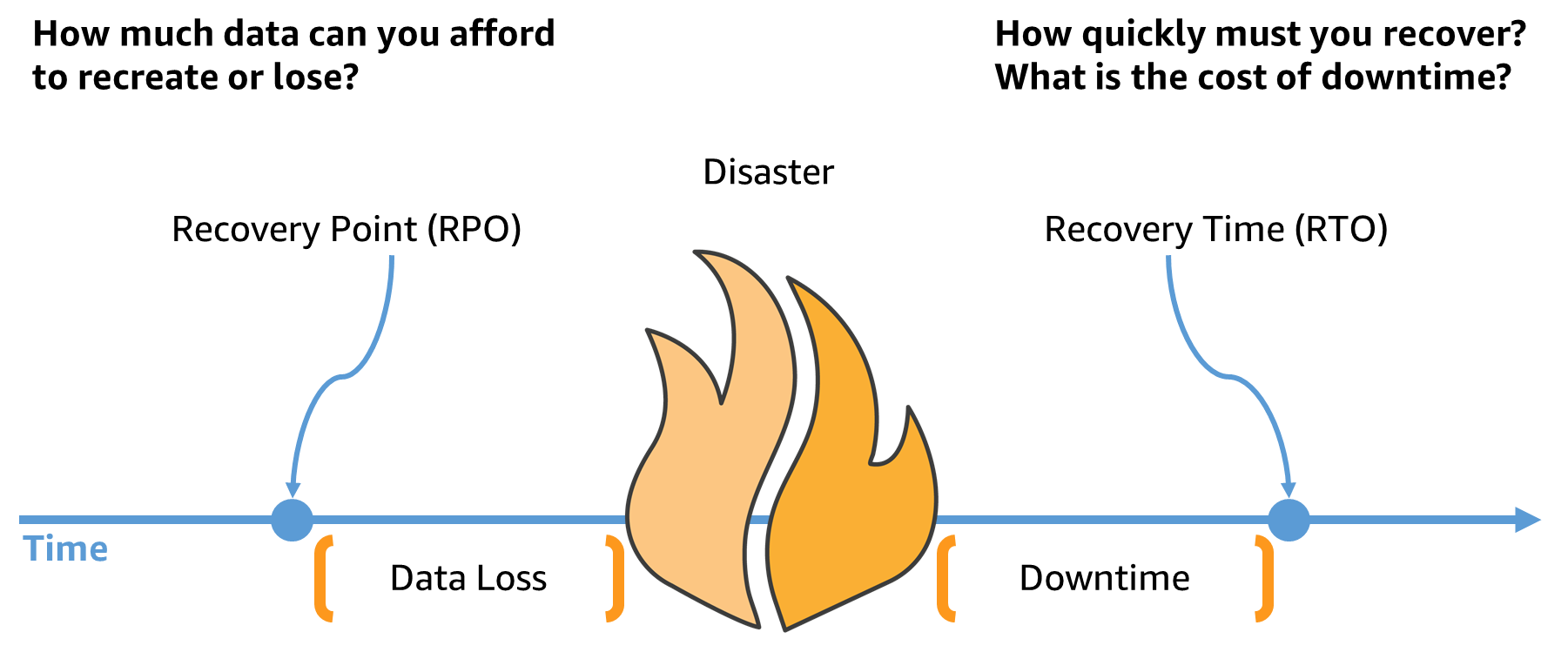

19. What are RTO and RPO in AWS?

The Disaster Recovery (DR) Strategy involves having backups for the data and redundant workload components. RTO and RPO are objectives used to restore the workload and define recovery objectives on downtime and data loss.

Recovery Time Objective or RTO is the maximum acceptable delay between the interruption of a service and its restoration. It determines an acceptable time window during which a service can remain unavailable.

Recovery Point Objective or RPO is the maximum amount of time allowed since the last data recovery point. It is used to determine what can be considered an acceptable loss of data from the last recovery point to the service interruption.

RPO and RTO are set by the organization using AWS and have to be set based on business needs. The cost of recovery and the probability of disruption can help an organization determine the RPO and RTO.
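
To make the two objectives concrete, the sketch below checks a single incident against both targets. The timeline, the targets, and the `meets_objectives` helper are hypothetical and serve only as an illustration.

```python
from datetime import datetime, timedelta


def meets_objectives(last_backup, outage_start, service_restored, rpo, rto):
    """Compare one incident against the RPO and RTO targets."""
    data_loss_window = outage_start - last_backup  # work at risk of being lost
    downtime = service_restored - outage_start     # time the service was down
    return data_loss_window <= rpo, downtime <= rto


# Hypothetical incident timeline and targets.
last_backup = datetime(2024, 1, 1, 9, 0)
outage_start = datetime(2024, 1, 1, 9, 45)
service_restored = datetime(2024, 1, 1, 12, 15)

rpo_ok, rto_ok = meets_objectives(
    last_backup, outage_start, service_restored,
    rpo=timedelta(hours=1), rto=timedelta(hours=2),
)
```

In this example the last recovery point is 45 minutes old, which is within the one-hour RPO, while the 2.5 hours of downtime exceed the two-hour RTO.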

20. How can you automate EC2 backup by using EBS?

AWS EC2 instances can be backed up by creating snapshots of EBS volumes. The snapshots are stored with the help of Amazon S3. Snapshots can capture all the data contained in EBS volumes and create exact copies of this data. The snapshots can then be copied and transferred into another AWS region, ensuring safe and reliable storage of sensitive data.

Before running AWS EC2 backup, it is recommended to stop the instance or detach the EBS volume that will be backed up. This ensures that any failures or errors that occur will not affect newly created snapshots.

The following steps must be followed to back up an Amazon EC2 instance:

- Sign in to the AWS account, and launch the AWS console.
- Launch the EC2 Management Console from the Services option.
- From the list of running instances, select the instance that has to be backed up.
- Find the Amazon EBS volumes attached locally to that particular instance.
- Create a snapshot of each volume, list the snapshots of each of the volumes, and specify a retention period for the snapshots.
- Remember to remove snapshots that are older than the retention period.
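
The retention step of the procedure above is the part that is easiest to automate. The following sketch selects the snapshots that have aged out; it assumes snapshot metadata has already been fetched, uses made-up snapshot IDs, and `expired_snapshots` is an illustrative helper rather than an AWS API.

```python
from datetime import datetime, timedelta, timezone


def expired_snapshots(snapshots, retention_days, now):
    """Return the IDs of snapshots older than the retention period."""
    cutoff = now - timedelta(days=retention_days)
    return [s["SnapshotId"] for s in snapshots if s["StartTime"] < cutoff]


# Hypothetical snapshot metadata; the keys mirror the SnapshotId and StartTime
# fields that EC2 reports for a snapshot.
now = datetime(2024, 3, 31, tzinfo=timezone.utc)
snapshots = [
    {"SnapshotId": "snap-old", "StartTime": datetime(2024, 3, 1, tzinfo=timezone.utc)},
    {"SnapshotId": "snap-new", "StartTime": datetime(2024, 3, 30, tzinfo=timezone.utc)},
]

# Snapshots started more than seven days before `now` are due for removal.
to_delete = expired_snapshots(snapshots, retention_days=7, now=now)
```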

21. Explain how one can add an existing instance to a new Auto Scaling group.

To add an existing instance to a new Auto Scaling group:

- Open the EC2 console.
- From the instances, select the instance that is to be added.
- Go to Actions -> Instance Settings -> Attach to Auto Scaling Group.
- Select a new Auto Scaling group and link this particular group to the instance.

Scenario-Based AWS Interview Questions

22. You have a web server on an EC2 instance. Your instance can get to the web but nobody can get to your web server. How will you troubleshoot this issue?

23. What steps will you perform to enable a server in a private subnet of a VPC to download updates from the web?

24. How will you build a self-healing AWS cloud architecture?

25. How will you design an Amazon Web Services cloud architecture for failure?

26. As an AWS solution architect, how will you implement disaster recovery on AWS?

27. You run a news website in the eu-west-1 region, which updates every 15 minutes. The website is accessed by audiences across the globe and uses an auto-scaling group behind an Elastic load balancer and Amazon relation database service. Static content for the application is on Amazon S3 and is distributed using CloudFront. The auto-scaling group is set to trigger a scale-up event with 60% CPU utilization. You use an extra large DB instance with 10.000 Provisioned IOPS that gives CPU Utilization of around 80% with freeable memory in the 2GB range. The web analytics report shows that the load time for the web pages is an average of 2 seconds, but the SEO consultant suggests that you bring the average load time of your pages to less than 0.5 seconds. What will you do to improve the website's page load time for your users?

28. How will you right-size a system for normal and peak traffic situations?

29. Tell us about a situation where you were given feedback that made you change your architectural design strategy.

30. What challenges are you looking forward to for the position as an AWS solutions architect?

31. Describe a successful AWS project which reflects your design and implementation experience with AWS Solutions Architecture.

32. How will you design an e-commerce application using AWS services?

33. What characteristics will you consider when designing an Amazon Cloud solution?

34. When would you prefer to use provisioned IOPS over Standard RDS storage?

35. What do you think AWS is missing from a solutions architect’s perspective?

36. What if Google decides to host YouTube.com on AWS? How will you design the solution architecture?

AWS EC2 Interview Questions and Answers

37. Is it possible to use S3 with EC2 instances? If yes, how?

Yes, it is possible to use S3 with EC2 instances whose root devices are backed by local instance storage.

38. How can you safeguard EC2 instances running on a VPC?

AWS security groups associated with EC2 instances can help you safeguard EC2 instances running in a VPC by providing security at the protocol and port access level. You can configure both inbound and outbound traffic to enable secured access for the EC2 instance. AWS security groups are very similar to a firewall: they contain a set of rules that filter the traffic coming into and out of an EC2 instance and deny any unauthorized access to EC2 instances.
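
The filtering behavior can be illustrated with a simplified rule check. This is a sketch under stated assumptions: the rule dictionaries and the `is_allowed` helper are hypothetical, and real security groups are also stateful and can reference other security groups, which this example ignores.

```python
import ipaddress


def is_allowed(rules, protocol, port, source_ip):
    """Return True if any rule permits the traffic; otherwise it is denied."""
    source = ipaddress.ip_address(source_ip)
    for rule in rules:
        if (rule["protocol"] == protocol
                and rule["from_port"] <= port <= rule["to_port"]
                and source in ipaddress.ip_network(rule["cidr"])):
            return True
    return False  # security groups contain allow rules only


# Hypothetical inbound rules: HTTPS from anywhere, SSH from one office range.
inbound_rules = [
    {"protocol": "tcp", "from_port": 443, "to_port": 443, "cidr": "0.0.0.0/0"},
    {"protocol": "tcp", "from_port": 22, "to_port": 22, "cidr": "203.0.113.0/24"},
]

https_from_internet = is_allowed(inbound_rules, "tcp", 443, "198.51.100.7")
ssh_from_internet = is_allowed(inbound_rules, "tcp", 22, "198.51.100.7")
ssh_from_office = is_allowed(inbound_rules, "tcp", 22, "203.0.113.10")
```

Traffic that matches no rule is rejected, which is why the SSH attempt from outside the office range fails.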

39. How many EC2 instances can be used in a VPC?

There is a limit of running up to a total of 20 On-Demand Instances across the instance family. You can also purchase 20 Reserved Instances and request Spot Instances up to your dynamic Spot limit per region.

40. What are some of the key best practices for security in Amazon EC2?

- Create individual AWS IAM (Identity and Access Management) users to control access to your AWS resources. Creating separate IAM users provides separate credentials for every user, making it possible to assign different permissions to each user based on the access requirements.
- Secure the AWS Root account and its access keys.
- Harden EC2 instances by disabling unnecessary services and applications and by installing only necessary software and tools on EC2 instances.
- Grant the least privileges by opening up only the permissions that are required to perform a specific task and no more than that. Additional permissions can be granted as required.
- Define and review the security group rules regularly.
- Have a well-defined, strong password policy for all users.
- Deploy anti-virus software on the AWS network to protect it from Trojans, viruses, etc.

41. A distributed application processes huge amounts of data across various EC2 instances. The application is designed to recover gracefully from EC2 instance failures. How will you accomplish this in a cost-effective manner?

An on-demand or reserved instance will not be ideal in this case, as the task here is not continuous. Moreover, launching an on-demand instance whenever work comes up makes no sense because on-demand instances are expensive. In this case, the ideal choice would be to opt for a spot instance owing to its cost-effectiveness and no long-term commitments.

AWS S3 Interview Questions and Answers

42. Will you use encryption for S3?

It is better to consider encryption for sensitive data on S3 as it is a proprietary technology.

43. How can you send a request to Amazon S3?

Using the REST API or the AWS SDK wrapper libraries, which wrap the underlying Amazon S3 REST API.

44. What is the difference between Amazon S3 and EBS?

| | Amazon S3 | EBS |
| --- | --- | --- |
| Paradigm | Object Store | Filesystem |
| Security | Private Key or Public Key | Visible only to your EC2 |
| Redundancy | Across data centers | Within the data center |
| Performance | Fast | Superfast |

45. Summarize the S3 Lifecycle Policy.

AWS provides a Lifecycle Policy in S3 as a storage cost optimizer. In fact, it enables the establishment of data retention policies for S3 objects within buckets. It is possible to manage data securely and set up rules so that it moves between different object classes on a dynamic basis and is removed when it is no longer required.
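
A lifecycle configuration is ultimately a small structured document of rules. The sketch below builds one such rule as a plain dictionary; the rule ID, prefix, and day counts are hypothetical, `lifecycle_rule` is an illustrative helper, and the key names are assumed to follow the shape used by S3 lifecycle configurations.

```python
import json


def lifecycle_rule(rule_id, prefix, transitions, expire_after_days):
    """Build one lifecycle rule in the shape S3 lifecycle configurations use."""
    return {
        "ID": rule_id,
        "Filter": {"Prefix": prefix},
        "Status": "Enabled",
        "Transitions": [
            {"Days": days, "StorageClass": storage_class}
            for days, storage_class in transitions
        ],
        "Expiration": {"Days": expire_after_days},
    }


# Hypothetical rule: move logs to cheaper classes, then delete after a year.
config = {"Rules": [lifecycle_rule(
    "archive-logs", "logs/",
    transitions=[(30, "STANDARD_IA"), (90, "GLACIER")],
    expire_after_days=365,
)]}
print(json.dumps(config, indent=2))
```

Objects under the `logs/` prefix would move to a cheaper storage class after 30 days, to archival storage after 90 days, and be removed after 365 days.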

46. What does the AWS S3 object lock feature do?

We can store objects using the WORM (write-once-read-many) approach using the S3 object lock. An S3 user can use this feature to prevent data overwriting or deletion for a set period of time or indefinitely. Several organizations typically use S3 object locks to satisfy legal requirements that require WORM storage.

AWS IAM Interview Questions and Answers

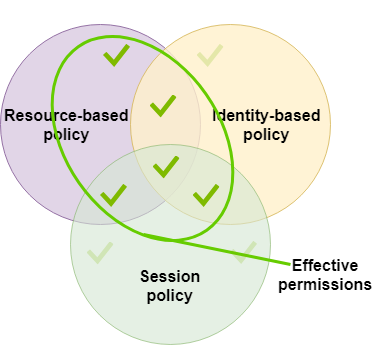

47. What do you understand by AWS policies?

In AWS, policies are objects that regulate the permissions of an entity (users, groups, or roles) or an AWS resource. Most policies are stored as JSON documents. Identity-based policies, resource-based policies, permissions boundaries, Organizations SCPs, ACLs, and session policies are the six categories of policies that AWS offers.

48. What does AWS IAM MFA support mean?

MFA refers to Multi-Factor Authentication. AWS IAM MFA adds an extra layer of security by requesting a user's username and password and a code generated by the MFA device connected to the user account for accessing the AWS management console.
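
The code shown by a virtual MFA device is typically a time-based one-time password. The following is a minimal sketch of that algorithm as specified in RFC 6238 (HMAC-SHA1 variant); the `totp` function name is illustrative, and the secret is the published RFC test-vector value, not a real device secret.

```python
import hashlib
import hmac
import struct


def totp(secret, unix_time, step=30, digits=6):
    """Compute an RFC 6238 time-based one-time password (HMAC-SHA1)."""
    # The moving factor is the number of whole time steps since the epoch.
    counter = struct.pack(">Q", int(unix_time) // step)
    digest = hmac.new(secret, counter, hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation offset
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


# Shared secret from the RFC 6238 test vectors, not a real device secret.
secret = b"12345678901234567890"
code = totp(secret, unix_time=59)
```

Because both the device and the server derive the code from the same secret and the current time, the server can verify the code without it ever being stored.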

49. How do IAM roles work?

IAM Role is an IAM Identity formed in an AWS account and granted particular authorization policies. These policies outline what each IAM (Identity and Access Management) role is allowed and prohibited to perform within the AWS account. IAM roles do not store login credentials or access keys; instead, a temporary security credential is created specifically for each role session. These are typically used to grant access to users, services, or applications that need explicit permission to use an AWS resource.

50. What happens if you have an AWS IAM statement that enables a principal to conduct an activity on a resource and another statement that restricts that same action on the same resource?

If more than one statement applies, an explicit Deny always takes precedence over an Allow.
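
This precedence rule can be shown with a deliberately simplified evaluator. It is a sketch, not the full IAM evaluation logic: the `evaluate` function and the statements are hypothetical, and wildcards, conditions, and the other policy types are left out.

```python
def evaluate(statements, action, resource):
    """Return 'Allow' or 'Deny' for a request under simplified IAM logic."""
    decision = "Deny"  # implicit deny when nothing matches
    for stmt in statements:
        if action in stmt["Action"] and resource in stmt["Resource"]:
            if stmt["Effect"] == "Deny":
                return "Deny"  # an explicit deny overrides any allow
            decision = "Allow"
    return decision


# Hypothetical statements; real policies also support wildcards and conditions.
REPORT = "arn:aws:s3:::example-bucket/report.csv"
statements = [
    {"Effect": "Allow", "Action": ["s3:GetObject", "s3:PutObject"],
     "Resource": [REPORT]},
    {"Effect": "Deny", "Action": ["s3:PutObject"], "Resource": [REPORT]},
]

read_result = evaluate(statements, "s3:GetObject", REPORT)
write_result = evaluate(statements, "s3:PutObject", REPORT)
delete_result = evaluate(statements, "s3:DeleteObject", REPORT)
```

Reading is allowed, writing is denied because the explicit Deny wins over the Allow, and deleting is denied implicitly because no statement mentions it.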

51. Which identities are available in the Principal element?

IAM users and roles from within your AWS accounts are the most important type of identity. In addition, you can specify federated users, role sessions, and an entire AWS account. AWS services such as EC2, CloudTrail, or DynamoDB rank as the second most significant type of principal.

AWS Cloud Engineer Interview Questions and Answers

52. If you have half of the workload on the public cloud while the other half is on local storage, what architecture will you use for this?

Hybrid Cloud Architecture.

53. What does an AWS Availability Zone mean?

AWS resources are accessed through Availability Zones. Designing applications to run across multiple Availability Zones makes them fault tolerant. Availability Zones have low-latency communication with one another to support fault tolerance efficiently.

54. What does "data center" mean for Amazon Web Services (AWS)?

In Amazon Web Services terms, a data center consists of the physical servers that power the AWS resources on offer. Each availability zone includes one or more AWS data centers to give Amazon Web Services customers the necessary assistance and support.

55. How does an API gateway (REST APIs) track user requests?

As user queries move via the Amazon API Gateway REST APIs to the underlying services, we can track and examine them using AWS X-Ray.

56. What distinguishes an EMR task node from a core node?

A core node comprises software components that execute operations and store data in the Hadoop Distributed File System (HDFS). There is always at least one core node in multi-node clusters. Task nodes contain software components that exclusively execute tasks. Task nodes are optional and do not store data in HDFS.

AWS Technical Interview Questions

57. A content management system running on an EC2 instance is approaching 100% CPU utilization. How will you reduce the load on the EC2 instance?

This can be done by attaching a load balancer to an auto scaling group to efficiently distribute load among multiple instances.

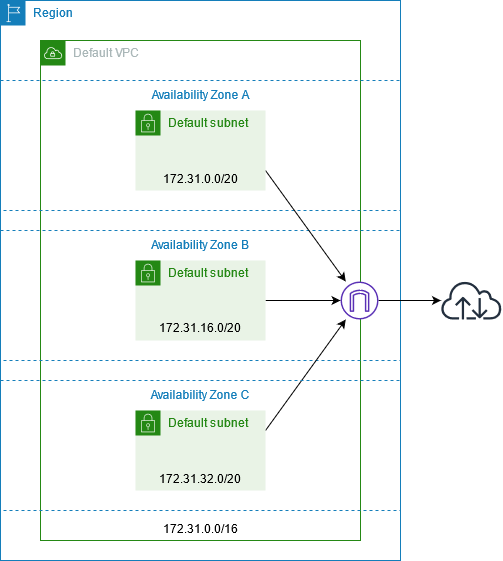

58. What happens when you launch instances in Amazon VPC?

Each instance has a default IP address when launched in Amazon VPC. This approach is considered ideal when connecting cloud resources with data centers.

59. Can you modify the private IP address of an EC2 instance while it is running in a VPC?

It is not possible to change the primary private IP addresses. However, secondary IP addresses can be assigned, unassigned, or moved between instances at any given point.

60. You are launching an instance under the free usage tier from AMI, having a snapshot size of 50GB. How will you launch the instance under the free usage tier?

It is not possible to launch this instance under the free usage tier.

61. Which load balancer will you use to make routing decisions at the application or transport layer that supports VPC or EC2?

Classic Load Balancer.

Terraform AWS Interview Questions and Answers

62. What is the Terraform provider?

Terraform is a platform for managing and configuring infrastructure resources, including computer systems, virtual machines (VMs), network switches, containers, etc. A provider is responsible for understanding API interactions and exposing resources. Terraform works with a wide range of cloud service providers.

63. Can we develop on-premise infrastructure using Terraform?

It is possible to build on-premise infrastructure using Terraform. We can choose from a wide range of options to determine which vendor best satisfies our needs.

64. Can you set up several providers using Terraform?

Terraform enables multi-provider deployments, including SDN management and on-premise applications like OpenStack and VMware.

65. How can a duplicate resource error be ignored during terraform apply?

Here are some of the possible options:

- Rebuild the resources using Terraform after deleting them from the cloud provider's API.
- Eliminate some resources from the code to prevent Terraform from managing them.
- Import resources into Terraform, then remove any code that attempts to copy them.

66. What is a Resource Graph in Terraform?

A resource graph represents the resources and the dependencies between them. It lets Terraform create and modify independent resources at the same time. Terraform uses the graph to develop a plan for changing the configuration, and building the graph up front provides a structure that helps identify problems early.
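
The role of a dependency graph can be sketched with a topological sort. This mirrors the general idea only, not Terraform's own implementation: the resource names and their dependencies are hypothetical, and the example relies on `graphlib` from the Python standard library (Python 3.9 or later).

```python
from graphlib import TopologicalSorter

# Hypothetical resources mapped to the resources they depend on.
dependencies = {
    "aws_vpc.main": set(),
    "aws_subnet.app": {"aws_vpc.main"},
    "aws_security_group.web": {"aws_vpc.main"},
    "aws_instance.web": {"aws_subnet.app", "aws_security_group.web"},
}

sorter = TopologicalSorter(dependencies)
sorter.prepare()  # also raises CycleError if the graph has a cycle

batches = []
while sorter.is_active():
    ready = sorted(sorter.get_ready())  # resources with no unmet dependencies
    batches.append(ready)               # each batch could be built in parallel
    sorter.done(*ready)

for number, batch in enumerate(batches, start=1):
    print(f"batch {number}: {', '.join(batch)}")
```

The subnet and the security group land in the same batch because neither depends on the other, which is the kind of parallelism a dependency graph makes visible.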

AWS Glue Interview Questions and Answers

67. How does AWS Glue Data Catalog work?

AWS Glue Data Catalog is a managed AWS service that enables you to store, annotate, and exchange metadata in the AWS Cloud. Each AWS account has one AWS Glue Data Catalog per region. It establishes a single location where several systems can store and obtain metadata to keep track of data in data silos and to query and modify the data using that metadata. AWS Identity and Access Management (IAM) policies restrict access to the data sources managed by the AWS Glue Data Catalog.

68. What exactly does the AWS Glue Schema Registry do?

The AWS Glue Schema Registry lets you validate and control the evolution of streaming data using registered Apache Avro schemas. It integrates with Apache Kafka, AWS Lambda, Amazon Managed Streaming for Apache Kafka (MSK), Amazon Kinesis Data Streams, Apache Flink, and Amazon Kinesis Data Analytics for Apache Flink.


69. What relationship exists between AWS Glue and AWS Lake Formation?

AWS Lake Formation builds on the shared infrastructure of AWS Glue, which provides the serverless architecture, console controls, ETL code development, and job monitoring.

70. How can AWS Glue Schema Registry keep applications highly available?

The Schema Registry storage and control plane is backed by the AWS Glue SLA, and the serializers and deserializers use best-practice caching techniques to maximize schema availability within clients.

71. What do you understand by the AWS Glue database?

The AWS Glue Data Catalog database is a container for storing tables. You create a database when you launch a crawler or manually add a table. The database list in the AWS Glue console contains a list of all of your databases.

AWS Lambda Interview Questions and Answers

72. What are the best security techniques in Lambda?

Lambda offers several good options for security. Use AWS Identity and Access Management (IAM) to limit access to resources. Grant each function only the least privilege it needs instead of extending its permissions broadly. Restrict access from untrusted or unauthorized hosts. Review security group rules regularly so that they stay up to date.
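
Limiting access with IAM usually comes down to a policy document that allows only the actions a function needs. The sketch below builds one such document in Python; the bucket name and the choice of `s3:GetObject` are assumptions made purely for illustration.

```python
import json


def read_only_bucket_policy(bucket_name):
    """Build an IAM policy document that only allows reading objects in one bucket."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ["s3:GetObject"],
                "Resource": f"arn:aws:s3:::{bucket_name}/*",
            }
        ],
    }


# "example-bucket" is a placeholder name, not a real bucket.
policy_json = json.dumps(read_only_bucket_policy("example-bucket"), indent=2)
```

A policy like this could be attached to the function's execution role, so the function cannot write or delete objects even if its code is compromised.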

73. What does Amazon Elastic Block Store mean?

Amazon Elastic Block Store (EBS) works like a virtual storage area network on which workloads can run. It is fault tolerant, so users do not need to worry about data loss even if a disk in the underlying RAID is damaged. EBS lets you provision and allocate storage, and it can also be managed through the API if necessary.

74. How much time can an AWS Lambda function run for?

Each invocation of an AWS Lambda function must finish within 900 seconds (15 minutes). The timeout is set to 3 seconds by default, and you can change it to any value between 1 and 900 seconds.
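
Whatever timeout is configured, a handler can check how much time it has left and stop cleanly before Lambda cuts it off. The following is a minimal sketch: `get_remaining_time_in_millis()` is a method of the Lambda context object, while the event shape, the 2-second safety margin, and the `FakeContext` helper are assumptions for illustration and local testing.

```python
def handler(event, context):
    """Process items until the function gets close to its configured timeout."""
    safety_margin_ms = 2_000  # assumption: stop 2 seconds before the timeout
    items = event.get("items", [])
    processed = []
    for item in items:
        if context.get_remaining_time_in_millis() < safety_margin_ms:
            break  # leave the remaining items for a later invocation
        processed.append(item)
    return {"processed": processed, "remaining": items[len(processed):]}


class FakeContext:
    """Local stand-in for the Lambda context object, for testing only."""

    def __init__(self, remaining_ms):
        self._remaining_ms = remaining_ms

    def get_remaining_time_in_millis(self):
        return self._remaining_ms
```

Returning the unprocessed items lets the caller decide how to retry them, for example by invoking the function again.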

75. Is the infrastructure that supports AWS Lambda accessible?

No. Because AWS Lambda manages the compute infrastructure on the user's behalf, the infrastructure on which it runs is not accessible. This lets Lambda carry out health checks, deploy security patches, and perform other routine maintenance.

76. Do the AWS Lambda-based functions remain operational if the code or configuration changes?

Yes. When a Lambda function is updated, there is a brief window, typically less than a minute, during which both the old and new versions of the function can handle requests.

AWS Interview Questions and Answers for DevOps

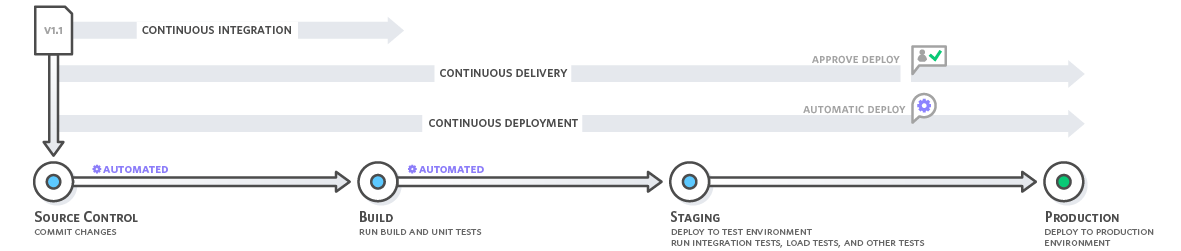

77. What does AWS DevOps' CodePipeline mean?

AWS CodePipeline is a service that provides continuous integration and continuous delivery features, and it also supports infrastructure updates. Based on the release model you define, it makes it very simple to build, test, and deploy after every code change.

78. How can AWS DevOps manage continuous integration and deployment?

The source code for an application must be stored and versioned using AWS Developer tools. The application is then built, tested, and deployed automatically using the services to an AWS instance or a local environment. When implementing continuous integration and deployment services, it is better to start with CodePipeline and use CodeBuild and CodeDeploy as necessary.

79. What role does CodeBuild play in the release process automation?

Setting up CodeBuild first, then connecting it directly with the AWS CodePipeline, makes it simple to set up and configure the release process. This makes it possible to add build steps continually, and as a result, AWS handles the processes for continuous integration and continuous deployment.

80. Is it possible to use Jenkins and AWS CodeBuild together with AWS DevOps?

It is simple to combine AWS CodeBuild with Jenkins to perform and execute jobs in Jenkins. Creating and manually controlling each worker node in Jenkins is no longer necessary because build jobs are pushed to CodeBuild and then executed there.

81. How does CloudFormation differ from AWS Elastic Beanstalk?

AWS Elastic Beanstalk and CloudFormation are two core AWS services, and their architecture makes it simple for them to work together. Elastic Beanstalk provides an environment in which applications can be deployed in the cloud. It is integrated with CloudFormation's tools to manage the lifecycle of the applications, which makes using several AWS resources quite simple. This gives great scalability across a variety of applications, from legacy applications to container-based solutions.


AWS Interview Questions and Answers for Java Developer

82. Is one Elastic IP enough for all the instances you have been running?

Every instance has both a private and a public address. The private address stays associated with the EC2 instance until it is terminated, while the public address is released when the instance is stopped or terminated. Elastic IP addresses can be used in place of the public address, and they remain with the instance until the user explicitly disassociates them. You will need more than one Elastic IP if multiple websites are hosted on one EC2 server.

83. What networking performance can you expect when launching instances in a cluster placement group?

The following factors affect network performance:

- Type of instance

- Network performance specification of the instance

When instances are launched in a cluster placement group, you can expect the following:

- Up to 10 Gbps for a single flow.

- Up to 20 Gbps full duplex.

- Network traffic that leaves the placement group is limited to 5 Gbps.

84. What can you do to increase data transfer rates in Snowball?

The following techniques can speed up data transfer to a Snowball:

- Run multiple copy operations simultaneously.

- Copy data to a single Snowball from several workstations.

- Transfer large files, or batch small files together into larger archives, to reduce the per-file encryption overhead.

- Remove any unnecessary hops between the data source and the Snowball.
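
Batching small files before a copy can be done with a plain tar archive. The sketch below uses only the Python standard library; the function name and the 1 MB size threshold are assumptions for illustration, not Snowball settings.

```python
import tarfile
from pathlib import Path


def batch_small_files(source_dir, archive_path, max_size_bytes=1_000_000):
    """Pack files of at most max_size_bytes into one uncompressed tar archive.

    Returns the names of the files that were added, in sorted order.
    """
    archive_file = Path(archive_path).resolve()
    added = []
    with tarfile.open(archive_file, "w") as archive:
        for path in sorted(Path(source_dir).iterdir()):
            if path.resolve() == archive_file:
                continue  # never pack the archive into itself
            if path.is_file() and path.stat().st_size <= max_size_bytes:
                archive.add(path, arcname=path.name)
                added.append(path.name)
    return added
```

The resulting archive is copied as a single large file, so the per-file overhead is paid once instead of once for every small file.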

85. Consider a scenario where you want to transfer your current domain name registration to Amazon Route 53 without affecting your web traffic. How can you do it?

Below are the steps to transfer your domain name registration to Amazon Route 53:

- Obtain a list of the DNS records related to your domain name.

- Create a hosted zone using the Route 53 Management Console, which will store your domain's DNS records, and launch the transfer operation from there.

- Get in touch with the registrar you used to register the domain name and review its transfer process.

- Once the registrar propagates the new name server delegations, DNS queries for your domain will be answered by Route 53.

86. How do you send a request to Amazon S3?

There are different options for submitting requests to Amazon S3:

- Use the REST API.

- Use the AWS SDK wrapper libraries.
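
For the REST option, each object is addressed by a URL. As a small sketch, the helper below builds the virtual-hosted-style URL for an object; the function name, bucket, key, and Region are hypothetical.

```python
from urllib.parse import quote


def s3_object_url(bucket, key, region):
    """Build the virtual-hosted-style REST URL for an S3 object."""
    # Keep "/" unescaped so key prefixes stay readable in the path.
    return f"https://{bucket}.s3.{region}.amazonaws.com/{quote(key, safe='/')}"
```

A plain unsigned `GET` on such a URL only works for publicly readable objects. Other requests must be signed, which is the main work the SDK wrapper libraries take care of.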

AWS Interview Questions for Testers

87. Mention the default tables you receive while establishing an AWS VPC.

When building an AWS VPC, we get three default resources: a network ACL, a security group, and a route table.

88. How do you ensure the security of your VPC?

To regulate the security of your VPC, use security groups, network access control lists (ACLs), and flow logs.

89. What does security group mean?

In AWS, security groups, which are essentially virtual firewalls, are used to regulate the inbound and outbound traffic to instances. You can manage traffic depending on different criteria, such as protocol, port, and source and destination.
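
To make the matching on protocol, port, and source concrete, here is a simplified sketch of how inbound allow rules could be evaluated. The `InboundRule` class and `is_allowed` function are a hypothetical model for illustration, not an AWS API, and they ignore details such as stateful return traffic and references to other security groups.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class InboundRule:
    protocol: str   # "tcp", "udp", or "-1" for all protocols
    from_port: int
    to_port: int
    cidr: str       # allowed source range, for example "203.0.113.0/24"


def is_allowed(rules, protocol, port, source_ip):
    """Return True if any rule allows the traffic; otherwise it is denied."""
    source = ipaddress.ip_address(source_ip)
    for rule in rules:
        protocol_ok = rule.protocol in ("-1", protocol)
        port_ok = rule.protocol == "-1" or rule.from_port <= port <= rule.to_port
        source_ok = source in ipaddress.ip_network(rule.cidr)
        if protocol_ok and port_ok and source_ok:
            return True
    return False  # security groups contain allow rules only: no match means deny
```

For example, with one rule that opens TCP port 443 to `0.0.0.0/0` and another that opens TCP port 22 to `203.0.113.0/24`, an SSH connection from `198.51.100.7` is rejected while HTTPS from the same address is accepted.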

90. What purpose does the ELB gateway load balancer endpoint serve?

ELB Gateway Load Balancer endpoints provide private connectivity between application servers in the service consumer virtual private cloud (VPC) and virtual appliances in the service provider VPC.

91. How is a VPC protected by the AWS Network Firewall?

The stateful firewall of AWS Network Firewall protects against unauthorized access to your Virtual Private Cloud (VPC) by tracking connections and identifying protocols. The service's intrusion prevention system uses active flow inspection with signature-based detection to identify and block attempts to exploit vulnerabilities. It also uses web filtering to block known malicious URLs.

AWS Data Engineer Interview Questions and Answers

92. What type of performance can you expect from Elastic Block Storage service? How do you back it up and enhance the performance?

The performance of Elastic Block Store varies: it can rise above the SLA performance level and also drop below it. The SLA specifies an average disk I/O rate, which can frustrate performance experts who want reliable and consistent disk throughput on a server. Virtual AWS instances do not behave this way. You can back up EBS volumes through a graphical user interface such as ElasticFox or through the snapshot facility with an API call. Performance can also be improved by using Linux software RAID and striping across four volumes.
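
Striping improves throughput because consecutive chunks of data land on different volumes, so reads and writes are spread across all of them. The mapping can be sketched as below; this models simple round-robin (RAID 0 style) striping and is an illustration, not the Linux software RAID implementation.

```python
def locate_chunk(chunk_index, volume_count=4):
    """Map a logical chunk number to (volume index, chunk offset on that volume)."""
    return chunk_index % volume_count, chunk_index // volume_count
```

With four volumes, chunks 0 to 3 go to volumes 0 to 3, and chunk 4 wraps around to volume 0 at the next offset.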

93. Imagine that you have an AWS application that requires 24x7 availability and can be down only for a maximum of 15 minutes. How will you ensure that the database hosted on your EBS volume is backed up?

Automated backups are the key, as they work in the background without requiring manual intervention. The AWS API and AWS CLI play a vital role in automating the backup process through scripts. The best approach is to schedule regular snapshots of the EBS volume attached to the EC2 instance. EBS snapshots are stored on Amazon S3 (Amazon Simple Storage Service) and can be used to recover the database in case of any failure or downtime.
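
A backup script built on the AWS API can be very small. The sketch below assumes a boto3 EC2 client is passed in (for example, one created with `boto3.client("ec2")`); the function name is hypothetical, and scheduling the call is left to whatever scheduler you already use.

```python
def snapshot_volume(ec2_client, volume_id, description):
    """Request a point-in-time snapshot of an EBS volume and return its ID."""
    response = ec2_client.create_snapshot(
        VolumeId=volume_id,
        Description=description,
    )
    return response["SnapshotId"]
```

Passing the client in as an argument keeps the function easy to test with a stand-in object and leaves credentials and Region configuration outside the script.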

94. You create a Route 53 latency record set from your domain to a system in Singapore and a similar record to a machine in Oregon. When a user in India visits your domain, to which location will they be routed?

Assume that the application is hosted on Amazon EC2 and that multiple instances of the application are deployed in different EC2 Regions. The request is most likely to go to Singapore, because Route 53 latency-based routing sends each request to the location that is likely to give the fastest response.

95. How will you access the data on EBS in AWS?

Elastic block storage, as the name indicates, provides persistent, highly available, and high-performance block-level storage that can be attached to a running EC2 instance. The storage can be formatted and mounted as a file system, or the raw storage can be accessed directly.

96. How will you configure an instance with the application and its dependencies and make it ready to serve traffic?

You can achieve this with lifecycle hooks. They are powerful because they let you pause the creation or termination of an instance so that you can step in and perform custom actions, such as configuring the instance, downloading the required files, and completing any other steps needed to make the instance ready. Every Auto Scaling group can have multiple lifecycle hooks.
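
Once the custom actions have finished, the paused instance has to be released. As a hedged sketch, the helper below assumes a boto3 Auto Scaling client is passed in (for example, `boto3.client("autoscaling")`); the function name and its `ready` flag are hypothetical.

```python
def finish_launch_hook(autoscaling_client, group_name, hook_name, instance_id, ready):
    """Tell Auto Scaling whether a paused instance may continue into service."""
    result = "CONTINUE" if ready else "ABANDON"
    autoscaling_client.complete_lifecycle_action(
        AutoScalingGroupName=group_name,
        LifecycleHookName=hook_name,
        InstanceId=instance_id,
        LifecycleActionResult=result,
    )
    return result
```

Sending `CONTINUE` lets the instance proceed, while `ABANDON` signals that configuration failed so the instance should not serve traffic.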

Infosys AWS Interview Questions

97. What do AWS export and import mean?

AWS Import/Export enables you to move data into and out of AWS (Amazon S3 buckets, Amazon EBS snapshots, or Amazon Glacier vaults) using portable storage devices.

98. What do you understand by AMI?

AMI stands for Amazon Machine Image. It is a template that includes the details (an operating system, an application server, and applications) necessary to start an instance. An instance is a copy of the AMI running as a virtual server in the cloud.

99. Define the relationship between an instance and AMI.

You can launch multiple instances, of different types, from a single AMI. An instance type specifies the hardware of the host computer that runs your instance. Each instance type offers different compute and memory resources. Once an instance has been launched, it behaves like a standard host and can be used in the same way as any other computer.

100. Compare AWS with OpenStack.

| Services | AWS | OpenStack |
| --- | --- | --- |
| User Interface | GUI: Console; API: EC2 API; CLI: Available | GUI: Console; API: EC2 API; CLI: Available |
| Computation | EC2 | Nova |
| File Storage | S3 | Swift |
| Block Storage | EBS | Cinder |
| Networking | IP addressing, egress, load balancing, firewall (DNS), VPC | IP addressing, load balancing, firewall (DNS) |
| Big Data | Elastic MapReduce | - |

If you are willing to leverage various AWS resources and want to push forward on AWS certifications, these AWS interview questions will help you get through the door. However, you will also need hands-on and real-life exposure to AWS projects to successfully work on this cloud computing platform. Check out the ProjectPro repository to get your hands on some industry-level Data Science and Big Data projects to learn how to leverage AWS services efficiently.


FAQs on AWS Interview Questions and Answers

- Is an AWS interview difficult?

An AWS interview is slightly tricky, but with the right amount of preparation and AWS training, you can crack it.

- How do I prepare for an Amazon AWS interview?

You can prepare for the Amazon AWS interview by learning the fundamentals of AWS services and gaining hands-on experience by working on real-world AWS projects on GitHub and ProjectPro. Another important step is to review Amazon AWS interview questions to understand the topics you need to know for the interview.

- Is AWS good for a career?

Yes, AWS is good for your career because it offers higher salaries and a wide range of job opportunities, including roles for cloud architects, cloud developers, cloud engineers, cloud network engineers, and more.

About the Author

ProjectPro

ProjectPro is the only online platform designed to help professionals gain practical, hands-on experience in big data, data engineering, data science, and machine learning related technologies. Having over 270+ reusable project templates in data science and big data with step-by-step walkthroughs,