25 Data Science Project Ideas for Beginners with Source Code

Data Science Projects for Beginners: apply your data science skills to interesting project ideas and solve real-world data science problems.

You’ve got your eyes on a rewarding job in data science with your name written all over the data scientist job title. You know you have the data science skills required for the job. The problem is, you don’t have anything that proves you have a versatile data science skillset. Anyone can claim on their resume that they’re a skilled data scientist, but hiring managers will want you to back it up with solid examples; otherwise, be ready to get dropped like a bad AOL connection. So how can you stand out like bug-free, production-quality code and show hiring managers that you’re worth your salt? Easy: data science projects.

The most effective way to do it is to do it!

Well, we believe in this. This blog is a great way for beginners to get their hands dirty with data science while working on some cool and interesting project ideas. We encourage you to try them and have fun exploring diverse data science and machine learning projects.

Table of Contents

- Data Science Projects – 5 Reasons They Are Important for A Successful Data Science Career

- Data Science Project Ideas for Beginners Getting Started With Data Science in 2023

- Top 25 Data Science Projects for Beginners with Source Code in 2023

- Easy Data Science Projects for Absolute Beginners

- Basic Data Science Projects for Beginners on Kaggle

- Finance Data Science Projects for Beginners

- Python Data Science Projects for Beginners

- Data Science Projects in R for Beginners

- Elevate your Data Science Skills with ProjectPro!

- FAQs

Data Science Projects – 5 Reasons They Are Important for A Successful Data Science Career

With IBM predicting 700,000 data science job openings by the end of 2020, data science remains one of the hottest career choices, and demand for data specialists keeps growing as the market expands. It takes an average of 60 days to fill an open data science position and 70 days to fill a senior data scientist position. CEOs and hiring managers at top tech companies tell us that they are looking for professionals who can solve real-world data science problems and link their work to business value. Because there is no common language for gleaning meaningful insights, and different data science problems require knowledge of different tools and technologies, attracting skilled data science talent is difficult and requires a different approach. Today, companies hire professionals based on their ability to perform applied data science rather than on theoretical skills alone. The best way to learn data science and acquire a practical skillset is to start working on data science projects.

A few years ago most of the data science job openings requested a Masters or a Ph.D. in Mathematics, Statistics, or any of the STEM subjects as a must-have. However, over the last few years, things have changed.

The huge data science skills gap and the evolution of data science job roles have compelled employers to hire people who can deliver value to a business in the fastest possible time. Only by working with popular data science tools and practicing on a variety of interesting data science projects can you understand how data infrastructures work in reality.

Also, as an increasing number of organizations migrate their machine learning solutions and data to the cloud, it is necessary for data scientists to have an understanding of diverse tools and technologies related to this to stay up-to-date.

With the advent of various machine learning frameworks and libraries that abstract away the complexity behind machine learning algorithms, employers have realized that applying data science in practice requires diverse skills that cannot be acquired through academic learning alone.

A data scientist needs to be a jack of all trades but master of some. Unless you work for a tech giant like Google or Facebook, you will not work solely on modeling data that data engineers have pulled for you. Many companies lack resources in their data science teams, so to deliver maximum benefit to the business you will have to work across the complete end-to-end data science product development life cycle. Working on end-to-end solved data science projects prepares you for this situation.

Plus, data science beginners can add these data science mini projects to their data science portfolio, making it easier to land a data science job or find lucrative career opportunities and even negotiate a higher salary based on their exposure to a variety of interesting data science projects.

To build a successful career as a data scientist, it is a must for data specialists to work with diverse projects on data science to boost their confidence about the skills they have learned or would like to master.

Data Science Project Ideas for Beginners Getting Started With Data Science in 2023

For those of you already working in the data science industry or looking to break into it with your first data science job, the number of processes, machine learning algorithms, knowledge extraction systems, data science tools, and technologies that you are expected to know can be overwhelming. Python, R, NLTK, TensorFlow, Keras, Tableau, Jupyter, IPython Notebook, Matplotlib: the list goes on. But never fear! We have collated 10+ data science projects for beginners to get you started and point you to the appropriate resources on ProjectPro for further understanding.

Top 25 Data Science Projects for Beginners with Source Code in 2023

1) Churn Prediction in Telecom

2) Sentiment Analysis of Product Reviews

3) Price Recommendation using Machine Learning

4) PUBG Finish Placement Prediction

5) Walmart Store Sales Forecasting

6) Building a Recommender System

7) Employee Access-Challenge as a Classification Problem

8) Survival Prediction using Machine Learning

9) Personalized Medicine Recommending System

10) Image Masking

11) Loan Default Prediction

12) Fraud Detection as a Classification Problem

13) Macro-economic Trends Prediction

14) Credit Analysis

15) Model Insurance Claim Severity

16) Build a Chatbot from Scratch in Python using NLTK

17) Market Basket Analysis using Apriori

18) Build a Resume Parser using NLP - spaCy

19) Build an Image Classifier Using TensorFlow

20) House Price Prediction using Machine Learning

21) Recommendation System for Retail Stores

23) Human Activity Recognition

24) Stock Market Prediction

25) Wine Quality Prediction

Let’s dive in.

Easy Data Science Projects for Absolute Beginners

In this section, we will discuss a few simple and easy data science projects for beginners that can be implemented in no time.

1) Churn Prediction in Telecom Industry using Logistic Regression

According to The European Business Review, telecommunication providers lose close to $65 million a month from customer churn. Isn’t that expensive? With many emerging telecom giants, competition in the telecom sector is increasing and the chances of customers discontinuing a service are high. This is often referred to as customer churn in telecom. Telecommunication providers that focus on quality service, lower-cost subscription plans, and availability of content and features, while creating positive customer service experiences, have a high chance of retaining customers. The good news is that all these factors can be measured with different layers of data about billing history, subscription plans, cost of content, network/bandwidth utilization, and more to get a 360-degree view of the customer. This 360-degree view of customer data can be leveraged for predictive analytics to identify the patterns and trends that influence customer satisfaction and help reduce churn in telecom.

Considering that customer churn in telecom is expensive and inevitable, leveraging analytics to understand the factors that influence customer attrition, identifying the customers most likely to churn, and offering them discounts can be a great way to reduce it. In this data science project, you will build a logistic regression machine learning model to understand the correlation between the different variables in the dataset and customer churn. This end-to-end churn prediction model, built in R, tackles the problem of unsatisfied customers and helps keep revenue flowing for the telecom company.
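
To make the idea concrete, here is a minimal sketch of logistic regression for churn. It is written in plain Python rather than R, fits the model with a hand-rolled gradient descent, and uses a tiny made-up dataset; the two feature names (a scaled monthly charge and a scaled count of support calls) are assumptions for illustration and are not taken from the project's dataset.

```python
import math

# Toy, made-up customers: (monthly_charge_scaled, support_calls_scaled) -> churned (1) or stayed (0).
# Feature names and values are illustrative assumptions only.
customers = [
    ((0.2, 0.0), 0),
    ((0.3, 0.1), 0),
    ((0.4, 0.2), 0),
    ((0.5, 0.1), 0),
    ((0.6, 0.7), 1),
    ((0.7, 0.8), 1),
    ((0.8, 0.6), 1),
    ((0.9, 0.9), 1),
]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def fit_logistic(rows, lr=0.5, epochs=2000):
    """Fit weights and bias by batch gradient descent on the log loss."""
    n_features = len(rows[0][0])
    weights = [0.0] * n_features
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for features, label in rows:
            score = sum(w * x for w, x in zip(weights, features)) + bias
            error = sigmoid(score) - label
            for j, x in enumerate(features):
                grad_w[j] += error * x
            grad_b += error
        weights = [w - lr * g / len(rows) for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / len(rows)
    return weights, bias

def churn_probability(features, weights, bias):
    """Predicted probability that a customer with these features churns."""
    return sigmoid(sum(w * x for w, x in zip(weights, features)) + bias)

weights, bias = fit_logistic(customers)
print("weights:", weights, "bias:", bias)
print("P(churn), high charge and many support calls:",
      churn_probability((0.85, 0.8), weights, bias))
```

A positive weight means the feature pushes the predicted churn probability up, which is the kind of relationship between variables and churn that the project sets out to understand.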

2) Pairwise Reviews Ranking - Sentiment Analysis of Product Reviews

Product reviews from users are key for businesses making strategic decisions, as they give an in-depth understanding of what users actually want for a better experience. Today, almost all businesses have a reviews and ratings section on their website to understand whether a user’s experience has been positive, negative, or neutral. With an overload of reviews and feedback on a product, it is not possible to read each one manually. On top of this, feedback often contains shorthand words and spelling mistakes that can be difficult to decipher. This is where sentiment analysis comes to the rescue.

In this data science project, you will use a natural language processing technique to pre-process and extract relevant features from the reviews and rating dataset. Use semi-supervised learning methodology to apply the pairwise ranking approach to rank reviews and also further segregate them to perform sentiment analysis. The developed model will help businesses maximize user satisfaction efficiently by prioritizing product updates that are likely to have the most positive impact.

Access the end-to-end Data Science Project Solution for Pairwise Ranking of Product Reviews

3) Price Recommendation for Online Sellers

E-commerce platforms today are extensively driven by machine learning algorithms: everything from quality checking and inventory management to sales demographics and product recommendations uses machine learning. One more interesting business use case that e-commerce apps and websites are trying to solve is eliminating human intervention in providing price suggestions to sellers on their marketplace, to improve the efficiency of the shopping website or app. That’s where price recommendation using machine learning comes into play.

In this data science project, you will build a machine learning model that automatically suggests the right product prices to online sellers as accurately as possible. This is a challenging data science problem, since similar products with very slight differences, such as additional specifications, different brand names, or different demand, can have different prices. Price prediction modeling becomes even more challenging when there are hundreds of thousands of products, which is the case with most e-commerce platforms.

Build a Price Recommendation Model using Machine Learning Regression

Basic Data Science Projects for Beginners on Kaggle

In this section, you will explore simple data science projects for beginners in the field that have the dataset available on Kaggle.

4) PUBG Finish Placement Prediction

With millions of active players and over 50 million copies sold, PlayerUnknown’s Battlegrounds (PUBG) enjoys huge popularity across the globe and is among the top five best-selling games of all time. PUBG is a game where many players compete using many different strategies, so predicting the finish placement is definitely a challenging task.

In this data science project, you will develop a winning formula, i.e., build a model that predicts a player’s finishing placement without the player having to play the game.

Let’s Play and Build PUBG Finish Placement Prediction Model

Download the PUBG Finish Placement Prediction Kaggle Dataset

5) Walmart Store’s Sales Forecasting

E-commerce and retail companies use big data and data science to optimize business processes and make profitable decisions. Tasks like predicting sales, offering product recommendations to customers, and managing inventory are handled elegantly with data science techniques. Walmart has used data science techniques to make precise forecasts across its 11,500 stores, generating revenue of $482.13 billion in 2016. As the name of this data science project makes clear, you will work on the Walmart store dataset, which consists of 143 weeks of sales transaction records across 45 Walmart stores and their 99 departments.

Problem Statement

This is an interesting data science problem that involves forecasting future sales across various departments within different Walmart outlets. The challenging aspect of this data science project is to forecast the sales on 4 major holidays – Labor Day, Christmas, Thanksgiving and Super Bowl. The selected holiday markdown events are the ones when Walmart makes highest sales and by forecasting sales for these events they want to ensure that there is sufficient product supply to meet the demand. The dataset contains various details like markdown discounts, consumer price index, whether the week was a holiday, temperature, store size, store type and unemployment rate.

Objectives of the Data Science Project Using Walmart Dataset

Forecast Walmart store sales across various departments using the historical Walmart dataset.

Predict which departments are affected by the holiday markdown events and the extent of the impact.
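
As a first descriptive step toward the second objective, one could compare average sales in holiday weeks with average sales in regular weeks for each department. The sketch below is not a forecasting model, and the departments and sales figures are made up for illustration; the real dataset has its own columns and values.

```python
from collections import defaultdict
from statistics import mean

# Made-up weekly records: (department, is_holiday_week, weekly_sales).
weekly_sales = [
    ("toys", False, 100.0),
    ("toys", False, 110.0),
    ("toys", True, 210.0),
    ("grocery", False, 300.0),
    ("grocery", False, 310.0),
    ("grocery", True, 320.0),
]

def holiday_uplift(records):
    """Return {department: holiday mean / regular mean} as a crude impact measure."""
    holiday = defaultdict(list)
    regular = defaultdict(list)
    for dept, is_holiday, sales in records:
        (holiday if is_holiday else regular)[dept].append(sales)
    # Only departments observed in both kinds of week can be compared.
    return {
        dept: mean(holiday[dept]) / mean(regular[dept])
        for dept in holiday
        if dept in regular
    }

uplift = holiday_uplift(weekly_sales)
for dept, ratio in sorted(uplift.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{dept}: holiday weeks sell {ratio:.2f}x a regular week")
```

Departments with a ratio well above 1 are the ones where holiday weeks matter most, and therefore where a forecasting model needs holiday features the most.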

What will you learn from this data science project?

Learn about the various data types, control structures and looping concepts in R programming language.

Learn to explore and manipulate data with R language

Learn about popular R packages – forecast, plyr, reshape.

Learn about Time Series analysis.

Access the Solution to Kaggle Data Science Challenge -Walmart Store Sales Forecasting

Recommended Reading –

- Top 10 Machine Learning Projects for Beginners

- Top 20 IoT Project Ideas for Beginners

- 8 Machine Learning Projects to Practice for August

- 15 Machine Learning Projects GitHub for Beginners

- 20 Machine Learning Projects That Will Get You Hired

- 15 Data Visualization Projects for Beginners with Source Code

- 10 MLOps Projects Ideas for Beginners to Practice

- 15 Object Detection Project Ideas with Source Code for Practice

- Is Data Science Hard to learn? (Answer: NO!)

- Access Job Recommendation System Project with Source Code

6) Building a Recommender System -Expedia Hotel Recommendations

Everybody wants products to be personalized and to behave the way they want. A recommender system aims to model a particular user's preference for a product. This data science project studies the Expedia online hotel booking system by recommending hotels to users based on their preferences. The Expedia dataset was made available as a data science challenge on Kaggle to contextualize customer data and predict how likely a customer is to stay at each of 100 different hotel groups.

Problem Statement

The Expedia dataset consists of 37,670,293 entries in the training set and 2,528,243 entries in the test set. The Expedia Hotel Recommendations dataset uses data from 2013 to 2014 as the training set and data from 2015 as the test set. The dataset contains details about check-in and check-out dates, user location, destination details, origin-destination distance, and the actual bookings made. It also has 149 latent features extracted from hotel reviews provided by travelers, which depend on hotel services like proximity to tourist attractions, cleanliness, laundry service, etc. All the user IDs that are present in the test set are also present in the training set.

Objectives of the Data Science Project Using Expedia Dataset

Predict the likelihood a user will stay at 100 different hotel groups.

Rank the predictions and return the top 5 most likely hotel clusters for each user's search query in the test set.
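
The ranking step in the second objective can be sketched as follows, assuming a model has already produced one probability per hotel cluster for a search. The cluster ids and probabilities here are hypothetical, and only 8 of the 100 clusters are shown to keep the example short.

```python
def top_k_clusters(cluster_probabilities, k=5):
    """Return the k cluster ids with the highest predicted probability, best first."""
    ranked = sorted(cluster_probabilities.items(), key=lambda item: item[1], reverse=True)
    return [cluster_id for cluster_id, _ in ranked[:k]]

# Hypothetical model output for one search: cluster id -> predicted booking probability.
predicted = {91: 0.21, 41: 0.18, 48: 0.15, 64: 0.12, 5: 0.09, 65: 0.08, 70: 0.07, 2: 0.10}

print(top_k_clusters(predicted))  # [91, 41, 48, 64, 2]
```

Because only the order of the top five matters, the model's probabilities do not need to be perfectly calibrated; they only need to rank the right clusters near the top.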

What will you learn from this data science project?

Learn to explore the data with Python Pandas library

Learn to implement a multi-class classification problem

Learn to build a Recommendation System

Tackle various challenges posed by the Expedia Dataset – Curse of Dimensionality, Ranking Requirement, and Missing Data.

Access the Solution to Kaggle Data Science Challenge - Expedia Hotel Recommendations

7) Amazon- Employee Access Data Science Challenge

Employees may have to apply for access to various resources during their career at a company. Determining resource access privileges for employees is a popular real-world data science challenge for many giant companies like Google and Amazon. At companies like Amazon, with their highly complicated employee and resource situations, this was previously done by human resource administrators. Amazon was interested in automating the process of granting its employees access to various computer resources to save money and time.

Problem Statement

The Amazon Employee Access Data Science Challenge dataset consists of historical data from 2010-2011 recorded by human resource administrators at Amazon Inc. The training set consists of 32,769 samples and the test set consists of 58,922 samples. Every sample has eight features that indicate a different role or group of an Amazon employee.

The objective of the Amazon-Employee Access Data Science Challenge

Build an employee access control system that will automatically approve or reject employee resource applications.

What will you learn from this data science project?

Learn to work with a highly imbalanced dataset.

Build a random forest model for automatically determining resource access privileges of employees.

Learn data exploration with Python Pandas library.

Explore the usage of Python data science libraries – scikit-learn and NumPy

Access the Solution to Kaggle Data Science Challenge - Amazon-Employee Access Challenge

8) Predict the Survival of Titanic Passengers – Would you survive the Titanic?

This is one of the popular projects related to data science in the global community for data science beginners because the solution to this data science problem provides a clear understanding of what a typical data science project consists of.

Problem Statement

This data science problem involves predicting the fate of passengers aboard the RMS Titanic, which famously sank in the Atlantic Ocean after colliding with an iceberg during its voyage from the UK to New York. The aim of this data science project is to predict which passengers would have survived on the Titanic based on personal characteristics like age, sex, class of ticket, etc.

Access Data Science and Machine Learning Code Examples for FREE

Objectives of the Data Science Project Using RMS Titanic Dataset

Find out what kind of people were likely to survive.

Predict which passengers survived the disaster.

What will you learn from this data science project?

Learn about the various data types, control structures and looping concepts in Python.

You will learn to apply machine learning libraries in Python to a binary classification problem.

Usage of Python NumPy Library

Usage of Python Pandas Library

Usage of Python Matplotlib Library

Access the Solution to Kaggle Data Science Challenge - Predict the Survival of Titanic Passengers

9) Personalized Medicine Recommending System

The recent talk of the town among cancer researchers is how treating diseases like cancer using genetic testing will revolutionize cancer research. This revolution has been partially realized thanks to the significant efforts of clinical pathologists. A pathologist first sequences a cancer tumour gene and then interprets the genetic mutations manually. This is a tedious, time-consuming process, as the pathologist has to look for evidence in clinical literature to derive interpretations. However, the process can be made smoother by applying machine learning algorithms.

If you want to explore the field that integrates both Medicine and Artificial Intelligence, this project will be a good start in that direction.

Problem Statement

Automate the task of classifying every single genetic mutation of the cancer tumour using the dataset prepared by Memorial Sloan Kettering Cancer Center (MSKCC). The dataset contains mutations labelled as tumour growth (drivers) and neutral mutations (passengers). The dataset has been annotated by world-renowned researchers and oncologists manually.

The objective of the Personalized Medicine Recommending System Data Science Project

To design an automated system that can classify genetic mutations in the cancer tumour into classes of drivers and passengers using the MSKCC dataset.

What will you learn from this data science project?

Understanding the implementation of Natural Language Processing techniques.

Merging two dataframes together

Utilizing the word_cloud library

Difference between Stemming and Lemmatization

Implementing the TF-IDF Vectorizer

Applying Long Short Term Memory (LSTM) Deep Learning model
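
To illustrate what a TF-IDF vectorizer computes, here is a minimal sketch of the textbook formula in plain Python. The three snippets are made-up stand-ins for clinical text, and the formula (term frequency times log of inverse document frequency) is the unsmoothed variant; libraries such as scikit-learn's `TfidfVectorizer` apply smoothing and normalization by default, so their numbers will differ.

```python
import math
from collections import Counter

# Tiny made-up "clinical text" snippets; real inputs would be far longer.
documents = [
    "mutation activates kinase signalling",
    "mutation has no functional effect",
    "kinase domain mutation drives tumour growth",
]

def tf_idf(docs):
    """Textbook TF-IDF: tf = count / doc length, idf = log(N / document frequency)."""
    tokenized = [doc.lower().split() for doc in docs]
    # Document frequency: in how many documents each term appears at least once.
    doc_freq = Counter(term for tokens in tokenized for term in set(tokens))
    n_docs = len(tokenized)
    scores = []
    for tokens in tokenized:
        counts = Counter(tokens)
        scores.append({
            term: (count / len(tokens)) * math.log(n_docs / doc_freq[term])
            for term, count in counts.items()
        })
    return scores

scores = tf_idf(documents)
print(scores[0])
```

A word such as "mutation" that appears in every snippet gets a score of zero, while a word unique to one snippet scores highest there, which is why TF-IDF features help a classifier focus on the distinguishing vocabulary.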

Access the full solution to this real-world Data Science Project: Keras Deep Learning Projects-Personalized Cancer Treatment Kaggle

10) Image Masking

Often we come across images from which we wish to remove the background so that we can use them for specific purposes. Carvana, an online start-up, has attempted to build an automated photo studio that takes 16 photographs of each vehicle in its inventory. Carvana captures these photographs in high resolution, with bright reflections. However, cars in the background sometimes make it difficult for customers to look closely at their chosen vehicle. An automated tool that removes background noise from the captured images and highlights only the image’s subject would work like magic for the start-up and save its photo editors many hours. You can also implement such an image masking system that automatically removes the background noise.

Problem Statement

Using the Carvana Dataset, implement a neural network algorithm to design an Image Masking system that removes photo studio background. This implementation will make it easy to prepare images containing backgrounds that bring the car features into the limelight.

The objective of the Image Masking Data Science Project

Develop an automated Image Masking System that can draw a boundary around the subject of interest in the image.

What will you learn from this data science project?

Nuts and bolts of Image Masking

Working with Tensorflow and Keras Framework

Using Data Augmentation Techniques for improving training performance of the algorithm

Changing various features of the image such as brightness, contrast, etc.

Training a neural network algorithm

Presenting Masked Images as the output

Access the full solution to this real-world Data Science Project: Kaggle Carvana Image Masking Challenge Solution with Keras

Finance Data Science Projects for Beginners

In this section, you will find a list of project ideas from the finance industry for beginners in data science.

11) Loan Default Prediction Project using Gradient Booster

Loans are the core revenue generators for banks as a major part of the profit for banks comes directly from the interest of these loans. However, the loan approval process is intensive with so much validation and verification based on multiple factors. And even after so much verification, banks still are not assured if a person will be able to repay the loan without any difficulties. Today, almost all banks use machine learning to automate the loan eligibility process in real-time based on various factors like Credit Score, Marital and Job Status, Gender, Existing Loans, Total Number of Dependents, Income, and Expenses, and others.

This is an interesting data science project in the financial domain where you will build a predictive model to automate the process of targeting the right applicants for loans. It is a classification problem in which you use information about a loan applicant to predict whether they will be able to repay the loan. You will begin with exploratory data analysis, followed by pre-processing, and finally test the developed model. On completion of this project, you will have a solid understanding of solving classification problems using machine learning.

Build a Loan Default Prediction Model Now

12) Credit Card Fraud Detection as a Classification Problem

This is an interesting data science problem for data scientists who want to get out of their comfort zone by tackling classification problems with a large imbalance in the size of the target groups. Credit card fraud detection is usually viewed as a classification problem with the objective of classifying the transactions made on a particular credit card as fraudulent or legitimate. There are not enough credit card transaction datasets available for practice, as banks do not want to reveal their customer data due to privacy concerns.

Problem Statement

This data science project aims to help data scientists develop an intelligent credit card fraud detection model for identifying fraudulent credit card transactions from highly imbalanced and anonymous credit card transactional datasets. To solve this data science project, you will use the popular Kaggle dataset containing credit card transactions made in September 2013 by European cardholders. This dataset consists of 284,807 transactions, of which 492 (0.172%) were fraudulent. It is a highly unbalanced dataset, as the positive class, i.e., the frauds, accounts for only 0.172% of all transactions. There are 28 anonymized features in the dataset that were obtained through a principal component analysis (PCA) transformation. Two additional features have not been anonymized: the time when the transaction was made and the amount in dollars. These will help estimate the overall cost of fraud.

Objectives of the Data Science Project Using Credit Card Dataset

Identify the number of fraudulent transactions in the dataset.

Evaluate the accuracy of the developed model.

What will you learn from this data science project?

Learn to handle imbalanced data.

Implement a classifier model using Python or R programming language.

Compare the accuracy of the model.
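
With a class imbalance this extreme, plain accuracy is a misleading yardstick, which the arithmetic below illustrates using the two counts quoted in the problem statement. The `precision_recall` helper is a hypothetical name introduced for this sketch, not part of the project's solution code.

```python
TOTAL_TRANSACTIONS = 284_807
FRAUD_TRANSACTIONS = 492

fraud_rate = FRAUD_TRANSACTIONS / TOTAL_TRANSACTIONS
# A "model" that labels every transaction as legitimate is right on every non-fraud row.
always_legit_accuracy = 1 - fraud_rate

def precision_recall(true_positives, false_positives, false_negatives):
    """Precision and recall for the fraud class; 0.0 when a denominator is zero."""
    predicted_fraud = true_positives + false_positives
    actual_fraud = true_positives + false_negatives
    precision = true_positives / predicted_fraud if predicted_fraud else 0.0
    recall = true_positives / actual_fraud if actual_fraud else 0.0
    return precision, recall

print(f"fraud rate: {fraud_rate:.3%}")
print(f"accuracy of always predicting 'legitimate': {always_legit_accuracy:.3%}")
print("its precision and recall on fraud:", precision_recall(0, 0, FRAUD_TRANSACTIONS))
```

A classifier that never flags fraud scores above 99.8% accuracy yet catches nothing, so when comparing models on this dataset it is worth looking at precision and recall for the fraud class alongside accuracy.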

Access the Solution to Kaggle Data Science Challenge - Credit Card Fraud Detection

13) Macro-economic Trends Prediction

We often hear on news channels that country XYZ is going to be one of the biggest economies in the world by 2030. If you have ever wondered what the basis for such statements is, here is the answer. These news channels rely on statisticians and data scientists to come up with such predictions. These data scientists analyze financial datasets from various countries and then submit their conclusions, which then make the headlines. If you are interested in a project that revolves around this area, you are on the right page.

Problem Statement

Design a macro-economic trends predictor using Machine Learning algorithms on a Financial Dataset from Kaggle.

The objective of the Macro-economics Trends Data Science Project

To build a system that can forecast financial trends.

What will you learn from this data science project?

- Handling null values in a Dataset.

- Implementing Exploratory Data Analysis Techniques

- Understanding Linear Regression, Ridge Regression, XGBoost, and Elastic Net models.

- Analyzing which model works best by plotting relevant graphs
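
To show how ridge regression differs from ordinary linear regression, the sketch below uses the closed-form slope for a single feature with an unpenalized intercept: the L2 penalty is simply added to the denominator. The data points are made up and purely illustrative; `ridge_slope` is a hypothetical helper, not a library function.

```python
from statistics import mean

def ridge_slope(xs, ys, penalty=0.0):
    """Slope of a one-feature linear fit with an L2 penalty on the slope only.

    penalty=0.0 gives ordinary least squares; larger values shrink the slope toward 0.
    """
    x_bar, y_bar = mean(xs), mean(ys)
    covariance = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    variance = sum((x - x_bar) ** 2 for x in xs)
    return covariance / (variance + penalty)

# Made-up indicator values, purely illustrative.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.0, 4.0, 6.0, 8.0, 10.0]

for penalty in (0.0, 1.0, 10.0):
    print(f"penalty={penalty}: slope={ridge_slope(xs, ys, penalty):.3f}")
```

With no penalty the fit recovers the exact slope of 2; a penalty of 10 halves it to 1. That shrinkage trades a little bias for lower variance, which tends to help on noisy financial data with many correlated features.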

Access the full solution to this real-world Data Science Project: Machine Learning Project-Two Sigma Financial Modeling Challenge.

14) Credit Analysis

Many multinational companies of the Banking sector have now started relying on Artificial intelligence techniques that allow them to classify loan applications. They request their customers to submit specific details about themselves.

They then utilize these details and implement machine learning algorithms on the collected data to understand the ability of their customers to repay the loan they have applied for. You can also attempt to build a project around this using the German Credit Dataset.

Problem Statement

Use the German Credit Dataset to classify loan applications. The dataset contains information about 1,000 loan applicants, with 20 feature variables for each applicant. Of these 20 attributes, three take continuous values and the remaining seventeen take discrete values.

The objective of the Credit Analysis Data Science Project

The aim is to extract essential features from the dataset and use those features for classification.

What will you learn from this data science project?

Implementing Logistic Regression algorithm and Extracting important features

Improving results of Logistic Regression using Random Forest

Training a Neural Network Algorithm

Using famous metrics in Machine Learning to analyze which algorithm is better

Access the full solution to this real-world Data Science Project: Data Science Project-Classification of German Credit Dataset

15) Modelling Insurance Claim Severity

Filing insurance claims and dealing with all the paperwork with an insurance broker or agent is something nobody wants to spend time and energy on. To make the claims process hassle-free, insurance companies across the globe are leveraging data science and machine learning. This beginner-level data science project is about how insurance companies are using predictive machine learning models to enhance customer service and make the claims process smoother and faster.

Whenever a person files an insurance claim, an insurance agent reviews all the paperwork thoroughly and then decides on the claim amount to be sanctioned. This entire paperwork process to predict the cost and severity of the claim is time-consuming. In this project, you will build a machine learning model to predict the claim severity based on the input data.

This project will make use of the Allstate Claims dataset that consists of 116 categorical variables and 14 continuous features, with over 300,000 rows of masked and anonymous data where each row represents an insurance claim.

Access the End-To-End Solution for this beginner Data Science Project on Predicting Insurance Claim Severity

View Dataset Used for this Beginner Level Project – Allstate Claims Severity Kaggle Dataset

Python Data Science Projects for Beginners

In this section, you will come across data science projects for beginners in Python, with source code for most of the project ideas.

16) Building a Chatbot with Python

Do you remember the last time you spoke to a customer service associate on call or via chat for an incorrect item delivered to you from Amazon, Flipkart, or Walmart? Most likely you would have had a conversation with a chatbot instead of a customer service agent. Gartner estimates that 85% of customer interactions will be handled by chatbots by 2022. So what exactly is a chatbot? How can you build an intelligent chatbot using Python?

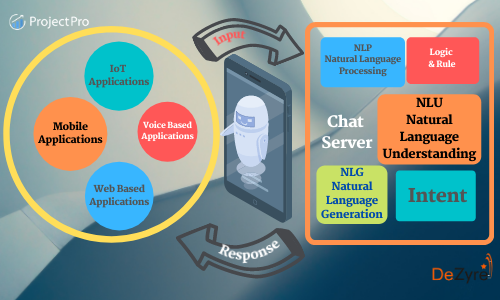

What is a Chatbot?

A chatbot is an AI-based digital assistant that can understand human language and simulate human conversations to give users prompt answers to their questions, just like a real human would. Chatbots help businesses increase their operational efficiency by automating customer requests.

How does a Chatbot work?

The most important task of a chatbot is to analyze a customer request, understand its intent, and extract the relevant entities. The bot then delivers an appropriate response to the user based on the analysis. Natural language processing plays a vital role here, making the interaction between the computer and the human feel like a real human conversation. Chatbots typically rely on the following three methods:

Pattern Matching – Makes use of pattern matches to group the text and produce a response

Natural Language Understanding (NLU) – The process of converting textual information into a structured data format that a machine can understand.

Natural Language Generation (NLG) – The process of transforming the structured data into text.


How to build your own chatbot?

In this data science project, you will use a leading and powerful Python library NLTK (Natural Language Toolkit) to work with text data.

Import the required data science libraries and load the data.

Use various pre-processing techniques like Tokenization and Lemmatization to pre-process the textual data.

Create training and test data.

Create a simple set of rules to train the chatbot.

Yay! It’s time to interact with your chatbot.
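The steps above can be sketched in a few lines. This is a minimal rule-based sketch that uses only the standard library, not the project's actual solution: the `INTENTS` table, the `respond` function, and the crude `normalize` helper are all hypothetical, and `normalize` merely stands in for the proper lemmatization that NLTK would provide.

```python
import re

# Hypothetical intents: each maps to trigger keywords and a canned response.
INTENTS = {
    "greeting": ({"hello", "hi", "hey"}, "Hello! How can I help you today?"),
    "wrong_item": ({"wrong", "incorrect", "item", "delivered"},
                   "Sorry about that. I can arrange a replacement or a refund."),
    "goodbye": ({"bye", "thank"}, "Happy to help. Goodbye!"),
}
FALLBACK = "Sorry, I did not understand that. Could you rephrase?"


def tokenize(text):
    """Lowercase the text and split it into alphabetic word tokens."""
    return re.findall(r"[a-z]+", text.lower())


def normalize(token):
    """Crude plural stripping; an assumed stand-in for real lemmatization."""
    return token[:-1] if token.endswith("s") and len(token) > 3 else token


def respond(message):
    """Return the response of the intent sharing the most keywords with the message."""
    tokens = {normalize(t) for t in tokenize(message)}
    best_intent, best_score = None, 0
    for name, (keywords, _) in INTENTS.items():
        score = len(tokens & keywords)
        if score > best_score:
            best_intent, best_score = name, score
    return INTENTS[best_intent][1] if best_intent else FALLBACK
```

Calling `respond("I got the wrong items delivered")` matches the `wrong_item` intent, while a message with no known keywords falls back to the default reply. A real chatbot would replace the keyword overlap with a trained intent classifier.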

Are you excited to build a chatbot of your own? Build a conversational chatbot using Python from Scratch that understands what a customer is talking about and responds appropriately.

17) Market Basket Analysis in Python using Apriori Algorithm

Whenever you visit a retail supermarket, you will find that baby diapers and wipes, bread and butter, pizza base and cheese, and beer and chips are positioned together in the store. This is what market basket analysis is all about – analyzing the association among products bought together by customers. Market basket analysis is a versatile use case in the retail industry that helps cross-sell products in a physical outlet and also helps e-commerce businesses recommend products to customers based on product associations. Apriori and FP Growth are the most popular algorithms used for association rule learning in market basket analysis.

In this beginner-level data science project, you will perform Market Basket Analysis in Python using the Apriori and FP Growth algorithms, which are based on association rules, to discover hidden insights on how to improve product recommendations for customers. You will learn to apply metrics like Support, Confidence, and Lift to evaluate the association rules.
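To make the three metrics concrete, here is a small sketch on toy data using only the standard library. The transactions are made up for illustration, and `frequent_pairs` covers only the pair-level counting step, not the full Apriori algorithm.

```python
from itertools import combinations

# Toy transactions (hypothetical data, for illustration only).
transactions = [
    {"bread", "butter", "milk"},
    {"bread", "butter"},
    {"beer", "chips"},
    {"bread", "milk"},
    {"beer", "chips", "bread"},
]


def support(itemset):
    """Fraction of transactions that contain every item in the itemset."""
    itemset = set(itemset)
    return sum(itemset <= t for t in transactions) / len(transactions)


def confidence(antecedent, consequent):
    """Estimated P(consequent | antecedent) for the rule antecedent -> consequent."""
    return support(set(antecedent) | set(consequent)) / support(antecedent)


def lift(antecedent, consequent):
    """Confidence divided by the baseline support of the consequent."""
    return confidence(antecedent, consequent) / support(consequent)


def frequent_pairs(min_support):
    """All item pairs whose support reaches min_support."""
    items = sorted(set().union(*transactions))
    return [pair for pair in combinations(items, 2) if support(pair) >= min_support]
```

For the rule beer -> chips, the support is 0.4 and the confidence is 1.0. The lift is well above 1, meaning the two items appear together more often than they would if bought independently. In practice, a library such as mlxtend handles itemset generation at scale.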

Learn how to anticipate customer behavior in the real-world – Access the Complete Solution to Python Data Science Project on Market Basket Analysis using Apriori and FP Growth.

18) Building a Resume Parser Using NLP(Spacy) and Machine Learning

Gone are the days when recruiters had to spend hours manually screening resumes. Sifting through thousands of candidates’ resumes for a job is no longer a challenging task, thanks to resume parsers. Resume parsers use machine learning technology to help recruiters search thousands of resumes in an intelligent manner so they can shortlist the right candidates for a job interview.

What is a Resume Parser?

A resume parser or a CV parser is a program that analyses and extracts CV/resume data according to the job description and returns machine-readable output that is suitable for storage, manipulation, and reporting by a computer. A resume parser stores the extracted information for each resume as a unique entry, thereby helping recruiters get a list of relevant candidates for a specific search of keywords and phrases (skills). Resume parsers help recruiters set specific criteria for a job, and candidate resumes that do not match the set criteria are filtered out automatically.

In this data science project, you will build an NLP algorithm that parses a resume and looks for the words (skills) mentioned in the job description. You will use the PhraseMatcher feature of the NLP library spaCy, which does “word/phrase” matching for the resume documents. The resume parser then counts the occurrences of words (skills) under various categories for each resume, which helps recruiters screen ideal candidates for a job.
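The counting idea can be illustrated without any NLP library. The sketch below uses regular expressions as a simplified stand-in for spaCy's phrase matching. The `SKILLS` categories and the `count_skills` function are hypothetical, and in the project the phrases would come from the job description.

```python
import re
from collections import Counter

# Hypothetical skill categories and phrases, assumed for illustration.
SKILLS = {
    "machine learning": ["machine learning", "random forest", "regression"],
    "nlp": ["natural language processing", "spacy", "nltk"],
    "programming": ["python", "sql"],
}


def count_skills(resume_text):
    """Count whole-phrase, case-insensitive skill matches per category."""
    text = resume_text.lower()
    counts = Counter()
    for category, phrases in SKILLS.items():
        for phrase in phrases:
            # \b anchors keep "sql" from matching inside a longer word.
            pattern = r"\b" + re.escape(phrase) + r"\b"
            counts[category] += len(re.findall(pattern, text))
    return dict(counts)
```

Running `count_skills` over each resume gives one row of category counts per candidate, which can then be ranked or filtered. A real phrase matcher works on tokens rather than raw text, so it is more robust to punctuation and spacing.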

Build a Resume Parser using NLP (Spacy)

19) Plant Identification using TensorFlow (Image Classifier)

Image classification is a fantastic application of deep learning where the objective is to assign an image to one of a set of defined classes. Plant image identification using deep learning is one of the most promising solutions towards bridging the gap between computer vision and botanical taxonomy. If you want to take your first step into the amazing world of computer vision, then this is definitely an interesting data science project idea to get started.

Build an Image Classifier for Plant Species Identification

20) House Price Prediction using Machine Learning

If you think real estate is an industry that has been left untouched by machine learning, then we’d like to inform you that it is not the case. The industry has been using machine learning algorithms for a long time, and a popular example of this is the website Zillow. Zillow has a tool called Zestimate that estimates the price of a house on the basis of public data. If you’re a beginner, it’d be a good idea to include this project in your list of data science projects.

Problem Statement

In this data science project, the task is to implement a regression machine-learning algorithm for predicting the price of a house by using the Zillow Dataset. The dataset contains about 60 features and consists of 2 files, ‘train_2016’ and ‘properties_2016’. The files are linked to each other through a feature called ‘parcelid’.
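Linking the two files on ‘parcelid’ amounts to a left join. Here is a minimal sketch on toy rows using plain dictionaries. Every column name other than `parcelid` (`target`, `bedrooms`, `area_sqft`) is an assumption made for illustration, as is the `join_on_parcelid` helper.

```python
# Toy rows standing in for the two files; column names other than
# 'parcelid' are assumptions for illustration.
train_rows = [
    {"parcelid": 101, "target": 0.03},
    {"parcelid": 102, "target": -0.01},
    {"parcelid": 999, "target": 0.10},  # no matching property record
]
property_rows = [
    {"parcelid": 101, "bedrooms": 3, "area_sqft": 1500},
    {"parcelid": 102, "bedrooms": 2, "area_sqft": 900},
    {"parcelid": 103, "bedrooms": 4, "area_sqft": 2200},
]


def join_on_parcelid(train, properties):
    """Left-join each training row to its property features by 'parcelid'."""
    by_id = {row["parcelid"]: row for row in properties}
    merged = []
    for row in train:
        features = by_id.get(row["parcelid"], {})
        # Training columns win if both rows share a key.
        merged.append({**features, **row})
    return merged
```

Training rows without a matching property record keep only their own columns, which is where missing-value handling comes in. With pandas, the same join would typically be written as `train.merge(properties, on="parcelid", how="left")`.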

The objective of the House Price Prediction Data Science Project

To implement a machine learning model that can predict the future sale prices of houses.

What will you learn from this data science project?

Cleaning the dataset and techniques for replacing missing data values.

Using exciting data visualization libraries in Python: Matplotlib, seaborn.

Understanding the dataset using statistical methods.

How to know what features are relevant for training a machine learning algorithm

Training different machine learning algorithms for regression problems.

Access the full solution to this real-world Data Science Project: ML Project on Predicting House Prices with Zillow Data

21) Recommendation System for Retail Stores

In case you have tried shopping online, you must have seen the website trying to recommend you a few products. Have you ever wondered how such websites come up with products that you are highly likely to display interest in? Well, that’s because machine learning-based algorithms are running in the background, and this project is all about it.

The objective of the Recommendation System Data Science Project:

Work on the dataset of retail stores, build an efficient recommendation system for them and perform Market Basket Analysis.

What will you learn from this data science project?

This project will help you draw customer insights by performing Exploratory Data Analysis on the given dataset. You will learn about date-time and free items analysis, evaluating deals of the day, and selecting trending items by analysing the dataset at the item level. Additionally, you will explore the Apriori algorithm and association rules.

Tech Stack: Language: Python

Libraries: pandas, NumPy, seaborn, matplotlib, collections, mlxtend, wordcloud, networkx

Access the full solution to this real-world Data Science Project: Recommender System Machine Learning Project for Beginners-2

22) Fake News Detection

Fake news is spreading rapidly these days through social media, messaging apps, and other digital platforms. It is often created and circulated with the intent of misleading or manipulating people, and can have serious consequences, from influencing public opinion to impacting political outcomes and public health. With AI-based tools, this kind of news can be detected and tagged with a disclaimer.

What will you learn from this data science project?

The project will guide you on how to use NLP and deep learning models to build a system that can detect fake news. You will learn how to work on a sequence problem in NLP and use models like RNN, GRU, and LSTM to solve such problems. You will also learn how to implement text cleaning and text preprocessing methods like stopword removal, stemming, tokenization, padding, etc. Besides that, you will also get to explore text vectorization and word embedding models.
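The preprocessing steps named above (tokenization, stopword removal, vectorization, and padding) can be sketched with the standard library alone. The tiny `STOPWORDS` set and the helper names are assumptions for illustration. A real pipeline would use the fuller tools from nltk and Keras.

```python
import re

# A tiny illustrative stopword list; real projects use a fuller one.
STOPWORDS = {"the", "a", "an", "is", "are", "of", "to", "in", "and"}


def preprocess(text):
    """Lowercase, tokenize on alphanumerics, and drop stopwords."""
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    return [t for t in tokens if t not in STOPWORDS]


def build_vocab(token_lists):
    """Map each distinct token to an integer id; 0 is reserved for padding."""
    vocab = {}
    for tokens in token_lists:
        for token in tokens:
            vocab.setdefault(token, len(vocab) + 1)
    return vocab


def encode_and_pad(tokens, vocab, max_len):
    """Convert tokens to ids, truncate to max_len, and right-pad with zeros."""
    ids = [vocab[t] for t in tokens if t in vocab][:max_len]
    return ids + [0] * (max_len - len(ids))
```

Padding gives every headline the same length, which sequence models such as an LSTM need in order to batch their inputs. The integer ids would then be fed to an embedding layer, optionally initialized from pretrained vectors.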

Tech Stack: Language: Python

Libraries: scikit-learn, TensorFlow, Keras, GloVe, Flask, nltk, pandas, NumPy

Access the full solution to this real-world Data Science Project: NLP and Deep Learning For Fake News Classification in Python

23) Human Activity Recognition

Gone are the days when we would use an analogue watch to check the time. With exciting watches being designed by multiple international brands, people are now gradually switching to smartwatches. Smartwatches are the cool watches of the 21st century and have made their way into almost every household. The prime reason for this is the attractive features that they offer. From heart-rate and ECG monitoring to workout tracking, they can do almost anything.

If you have used one such watch, you can recall that it often tells you how well you slept. So, how come a device that never sleeps can guide you about your sleep? To find an answer to this, you can do a simple data science project that associates a dataset of a few people’s daily activities with the data collected by various sensors attached to those people.

Problem Statement

In this data science project, you are expected to use machine-learning algorithms to assign each record in the Human Activity Recognition Dataset to one of six classes: WALKING, WALKING_UPSTAIRS, WALKING_DOWNSTAIRS, SITTING, STANDING, LAYING.

The objective of the Human Activity Recognition Data Science Project

To build a system that can classify human activities by taking specific features into account.

What will you learn from this data science project?

Implementing Exploratory Data Analysis Techniques

Using Amazon AWS to import datasets

Exploring Data Visualization Libraries to plot insightful graphs

Understanding Principal Component Analysis to shortlist relevant features

Cleaning Dataset before applying an algorithm to it

Using different machine learning algorithms like Logistic Regression, SVMs, Random Forest, Neural Networks, etc. for classification tasks

Selecting the best model using statistical metrics
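For the last point in the list above, two common metrics for a multiclass problem like this one are overall accuracy and per-class recall. The sketch below computes both in plain Python. The function names are hypothetical, and scikit-learn offers ready-made equivalents.

```python
from collections import defaultdict


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def per_class_recall(y_true, y_pred):
    """For each true class, the fraction of its samples predicted correctly."""
    total = defaultdict(int)
    correct = defaultdict(int)
    for t, p in zip(y_true, y_pred):
        total[t] += 1
        correct[t] += int(t == p)
    return {label: correct[label] / total[label] for label in total}
```

Per-class recall matters here because a model can score high accuracy overall while still confusing similar activities such as SITTING and STANDING. Comparing candidate models on both numbers gives a fuller picture than accuracy alone.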

Access the full solution to this real-world Data Science Project: Multiclass classification machine learning project in python analyses human activity recognition

Data Science Projects in R for Beginners

If you are specifically looking for data science projects for beginners in the R programming language then you must check out this section.

24) Stock Market Prediction

“Our favorite holding period is forever.”

Warren Buffett

For most stock investors, the favorite question is “How long should we hold a stock for?”. Every investor wants to know how to avoid being too fearful or too greedy. And not all of them have Warren Buffett to guide them at every step. We’d suggest that you stop looking for him. Rather, build your own stock market predictor with artificial intelligence tools like machine learning. And the approach to this is so simple that you can consider adding this to your Data Science Projects list.

Problem Statement

Build a Stock Market Forecasting system by implementing Machine learning algorithms on the EuroStockMarket Dataset. The dataset has closing prices of major European Stock Indices: Germany DAX (Ibis), Switzerland SMI, France CAC, and UK FTSE, for all business days.

The objective of the Stock Market Prediction Data Science Project

To predict the price of the stock using the mentioned dataset.

What will you learn from this data science project?

Handling a time series dataset in R programming language

Analyzing the trends in a time series dataset

Visualizing the time series data

Techniques like Holt Exponential Smoothing, FBProphet, LSTM, etc.

Understanding correlation and autocorrelation, SARIMA, and Neural Networks

Access the full solution to this real-world Data Science Project: Time Series Analysis Project in R on Stock Market forecasting
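Holt exponential smoothing, one of the techniques listed above, tracks a level and a trend and extrapolates both. The sketch below is written in Python rather than R, purely for illustration. The `holt_forecast` function, the initialization choice, and the smoothing parameters `alpha` and `beta` are assumptions, not the project's implementation.

```python
def holt_forecast(series, alpha, beta, horizon):
    """Holt's linear-trend exponential smoothing.

    Initializes the level to the first value and the trend to the first
    difference (one common choice), then returns `horizon` forecasts.
    """
    if len(series) < 2:
        raise ValueError("need at least two observations")
    level = series[0]
    trend = series[1] - series[0]
    for value in series[1:]:
        previous_level = level
        # Blend the new observation with the previous one-step forecast.
        level = alpha * value + (1 - alpha) * (level + trend)
        # Blend the latest change in level with the previous trend.
        trend = beta * (level - previous_level) + (1 - beta) * trend
    return [level + step * trend for step in range(1, horizon + 1)]
```

On a perfectly linear series such as 10, 12, 14, 16, the method recovers the slope and forecasts 18 and 20 for the next two steps. Real index prices are far noisier, so `alpha` and `beta` are usually tuned by minimizing forecast error on held-out data.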

25) Wine Quality Prediction

On the weekend, most of us prefer having a fancy dinner with our loved ones. While the kids define a fancy dinner as one that has pasta, adults like to add a cherry on top by having a classic glass of red wine along with the Italian dish. But when it comes to shopping for that wine bottle, some of us get confused about which is the best one to buy. Some believe that the longer it has been fermented, the better it’ll taste. Others suggest that relatively sweeter wines are good quality wines. To get a precise answer, you can try building your own Wine Quality Predictor.

Problem Statement

Use the red wine dataset to build a Wine Quality Predictor System.

The objective of the Wine Quality Prediction Data Science Project

Analyze which chemical properties of a red wine influence its quality using a red wine dataset from Kaggle.

What will you learn from this data science project?

- Applying Data Analysis Techniques

- Implementing Machine Learning Regression Models

- Asking relevant questions and visualizing the answers to them by plotting certain graphs

Access the full solution to this real-world Data Science Project: Wine Quality Prediction in R using Kaggle Wine Dataset

Recommended Reading: Machine Learning NLP Interview Questions with Answers

Elevate your Data Science Skills with ProjectPro!

So, there you have it: some interesting data science project ideas to start working your way into data science. Whichever data science project you choose to begin with, you are sure to open up countless possibilities for developing your data science skills. Reading data science books and tutorials is definitely a great way of learning data science, but there’s no substitute for actually building end-to-end solutions for challenging data science problems. Working on diverse, interesting data science projects is the perfect way to improve your data science skills and progress towards mastering them. Your hiring manager will be more impressed with your data science projects on GitHub or in your data science portfolio than with a list of books that you’ve read.

ProjectPro offers data science projects with source code that give you a taste of diverse data science problems from different business domains. Each of these data science projects is designed to develop knowledge of the most popular data science tools and the in-demand data science skills that employers are looking for. Professionals build end-to-end solutions for real-world data science problems and model the solutions as per their needs. Some of these data science projects are in Python and some in R. Some are simple and some are hard. Either way, these data science projects are great for resumes, especially before important whiteboard data science interviews. Nobody wants to be a starving data scientist anymore, and the best way to learn data science is to do data science. Look for as many data science projects online as you can get involved in. Each data science project you work on will become a building block towards mastering data science, leading to bigger and better data scientist job opportunities. The world needs better data scientists. This is the best time to learn data science by working on interesting data science projects.

Get Access to 50+ solved data science and machine learning projects that have been designed to provide data science enthusiasts with experiential learning experiences. Join the Data Science Game by working on some cool and interesting Data Science Projects!!!

FAQs

1. How do you find data for your data science project?

You can find data for your projects on Google Dataset Search, UCI Machine Learning Repository, Kaggle, GitHub, Data.gov, and other major dataset search engines and paid data repositories.

2. What projects can I do with data science?

- Wine Quality Prediction: When it comes to purchasing a wine bottle, many of us wonder which is the most acceptable option. The Wine Quality Prediction Data Science Project uses a Kaggle red wine dataset to investigate which chemical features of a red wine determine its quality.

- Walmart Store Sales Forecasting: This fascinating data science project entails estimating future sales across several departments within various Walmart locations. Walmart's chosen holiday markdown events are when they generate the most sales. By projecting sales for these occasions, they can ensure enough product availability to match demand.

- Stock Market Prediction: This project entails using Machine Learning algorithms on the EuroStockMarket Dataset to create a Stock Market Forecasting system. The dataset includes closing prices for all business days for the following major European stock indices: Germany DAX (Ibis), Switzerland SMI, France CAC, and the United Kingdom FTSE.

3. How do you select the appropriate machine-learning model for a given problem?

To select the appropriate machine learning model for a given problem, first, the problem should be clearly defined, along with the type of data available. Then, based on the problem type and data characteristics, one should consider various factors such as the size of the dataset, the complexity of the model, the interpretability of the model, and the expected accuracy. The selection process usually involves experimenting with multiple models and selecting the one that provides the best results.

4. How do you handle overfitting or underfitting in your models?

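To avoid overfitting or underfitting in machine learning models, one can use techniques such as regularization, early stopping, data augmentation, and hyperparameter tuning. Regularization penalizes model complexity, early stopping halts training before the model starts memorizing the training data, data augmentation effectively enlarges the training dataset, and hyperparameter tuning finds settings that balance the two failure modes. Underfitting is usually addressed in the opposite direction, for example by using a more expressive model, adding informative features, or training for longer.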
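Early stopping, for instance, can be reduced to a simple rule over the validation loss recorded after each epoch. The following is a minimal sketch. The `early_stopping_epoch` function and its `patience` parameter are hypothetical names, though deep learning libraries expose similar settings.

```python
def early_stopping_epoch(val_losses, patience=2):
    """Return the index of the best epoch seen before training would stop.

    Training stops once the validation loss has failed to improve for
    `patience` consecutive epochs.
    """
    best_loss = float("inf")
    best_epoch = -1
    waited = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss, best_epoch, waited = loss, epoch, 0
        else:
            waited += 1
            if waited >= patience:
                break  # validation loss has stalled; stop training here
    return best_epoch
```

With losses of 0.9, 0.7, 0.6, 0.65, 0.7, 0.5 and a patience of 2, training stops after the fifth epoch and the weights from index 2 are kept. A larger patience would have waited long enough to reach the later improvement, which is the trade-off this setting controls.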

About the Author

ProjectPro

ProjectPro is the only online platform designed to help professionals gain practical, hands-on experience in big data, data engineering, data science, and machine learning related technologies. Having over 270+ reusable project templates in data science and big data with step-by-step walkthroughs,