AWS Kafka: Your Go-to Solution for Real-Time Data Streaming


Explore the full potential of AWS Kafka with this ultimate guide. Discover its key features, pricing details, architectural insights, step-by-step tutorials, and real-world use cases. Elevate your data processing skills with Amazon Managed Streaming for Apache Kafka, making real-time data streaming a breeze.


According to IDC, the worldwide market for event-streaming software, such as Kafka, is likely to reach $5.3 billion in 2023 at a CAGR of 26.9%.

Modern applications and systems thrive on the ability to process, analyze, and respond to data streams as they flow in, enabling businesses to make informed decisions, provide seamless user experiences, and stay ahead of the curve. For instance, Airbnb utilizes AWS Kafka to handle data from diverse sources such as property listings, user searches, and bookings, enabling it to adjust pricing dynamically and maximize revenue. Similarly, Netflix relies on AWS Kafka to manage and process massive data streams and keep streaming uninterrupted worldwide. These real-world applications show how AWS Kafka helps businesses harness real-time insights. So, let's dive straight in and understand the fundamentals of AWS Kafka.

Table of Contents

- Understanding AWS Kafka Fundamentals

- AWS Kafka - Key Features

- How does Kafka Work?

- Deep Dive into Apache Kafka Architecture

- AWS Kafka Pricing

- AWS Kafka Tutorial: Getting Started with Amazon MSK

- AWS Kafka Use Cases

- AWS Kafka vs Kinesis

- Best Practices for Running Apache Kafka on AWS

- Apache Kafka Project Ideas with Source Code

- Become a Kafka Pro with ProjectPro’s Guided Projects

- FAQs on AWS Kafka

Understanding AWS Kafka Fundamentals

Amazon Managed Streaming for Apache Kafka (Amazon MSK) is a fully managed service that simplifies the use of Apache Kafka for real-time data streaming. Kafka, a distributed event streaming platform, facilitates event publishing, subscription, and processing. Amazon MSK manages Kafka cluster provisioning, scaling, and management, freeing users to concentrate on app development.

For example, imagine a retail company that wants to analyze real-time customer purchase data from multiple online stores. They can use Amazon MSK to create and manage Kafka clusters, which act as the central hub for data streams. Online store applications can then publish purchase events to these Kafka topics, and analytical applications can subscribe to these topics to process and analyze the data in real time, enabling the company to make timely business decisions based on the incoming stream of events.

Why Kafka on AWS?

Amazon Web Services recognized the growing demand for real-time data streaming and analytics and introduced Amazon Managed Streaming for Apache Kafka (Amazon MSK) in response. The service simplifies deploying, operating, and scaling Apache Kafka clusters, so businesses can focus on extracting insights from their data rather than managing the underlying infrastructure.

The quest for instant insights is a hard problem: a large influx of real-time data has to be handled efficiently without sacrificing reliability, scalability, or performance. AWS Kafka addresses this by taking over the operational aspects of real-time data streaming, whether the objective is to enhance supply chain efficiency, identify anomalies in financial transactions, or enable personalized recommendations.


AWS Kafka - Key Features

Apache Kafka offers robust features, making it a cornerstone for building efficient, real-time data streaming applications. Let's delve into the essential elements that make AWS Kafka a preferred choice for processing and streaming data.

- No Servers to Manage: AWS Kafka takes the hassle out of infrastructure management by handling server provisioning, maintenance, and scaling. This allows developers to focus on building applications rather than dealing with the complexities of server administration.

- Highly Available: Ensure uninterrupted data availability with AWS Kafka's high availability capabilities. It replicates data across multiple Availability Zones, offering fault tolerance and minimizing the risk of data loss or downtime.

- Highly Secure: Security is a top priority, and AWS Kafka provides robust encryption, authentication, and authorization mechanisms to safeguard your data streams. You can control access at various levels, ensuring data privacy and compliance with industry standards.

- Deeply Integrated: Seamlessly integrate AWS Kafka with various AWS services, including analytics, storage, and machine learning offerings. This integration enhances the versatility of your data processing workflows and enables you to extract valuable insights from your data streams.

- Open Source: Built on open-source Apache Kafka, AWS Kafka stays compatible with the Kafka APIs, including for workloads such as populating data lakes, while adding AWS-specific optimizations. This provides a familiar interface for Kafka users while leveraging the benefits of AWS infrastructure.

- Lowest Cost: AWS Kafka offers a cost-effective solution with pay-as-you-go pricing. You only pay for the resources you consume, optimizing your budget and resource allocation.

- Scalable: AWS Kafka enables horizontal scaling, allowing you to expand your data processing capabilities as your requirements grow.

- Configurable: Tailor AWS Kafka to meet your specific use cases through comprehensive configuration options. Fine-tune parameters such as retention policies, partitioning, and replication factors to optimize performance.

- Visible: Gain insights into your data streams and processing metrics through detailed monitoring and logging features. This transparency empowers you to troubleshoot issues, optimize performance, and ensure data quality.

- Tiered Storage: AWS Kafka provides tiered storage options, allowing you to optimize costs by moving data to different storage layers based on usage patterns. This feature is particularly valuable for managing historical or less frequently accessed data.


How does Kafka Work?

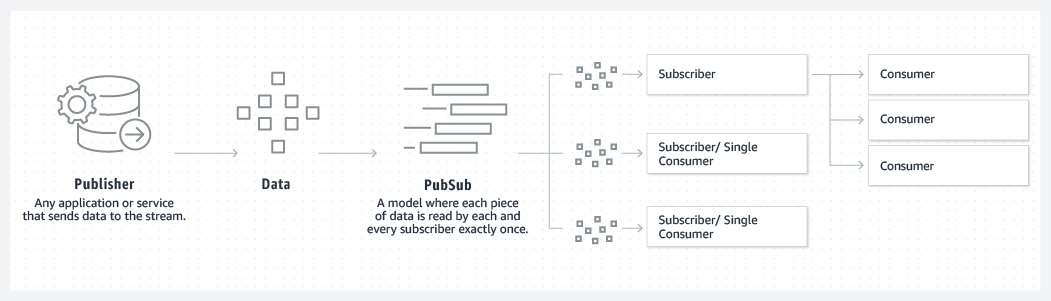

Kafka works by combining the queuing and publish-subscribe messaging models to offer the advantages of both. It utilizes a partitioned log model, where data is organized into ordered sequences of records called logs. These logs are divided into segments or partitions that correspond to various subscribers. This design permits multiple subscribers to the same topic while distributing data processing across numerous consumer instances, ensuring high scalability. Kafka's approach ensures replayability, enabling independent applications to read from data streams at their own pace.

Queuing

Source: https://aws.amazon.com/msk/what-is-kafka/

Publish-Subscribe

Source: https://aws.amazon.com/msk/what-is-kafka/
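To make the partitioned log model more concrete, here is a minimal in-memory sketch. It is a toy model, not Kafka's implementation: the `PartitionedLog` class and the group names `analytics` and `billing` are hypothetical, chosen only to show how several subscribers can read the same records at their own pace and replay them.

```python
from collections import defaultdict


class PartitionedLog:
    """Toy in-memory model of one topic: a fixed set of append-only partitions."""

    def __init__(self, num_partitions):
        self.partitions = [[] for _ in range(num_partitions)]
        # Each consumer group tracks its own next offset per partition.
        self.offsets = defaultdict(lambda: [0] * num_partitions)

    def append(self, partition, record):
        """Append a record and return its offset within the partition."""
        self.partitions[partition].append(record)
        return len(self.partitions[partition]) - 1

    def poll(self, group, partition, max_records=10):
        """Return unread records for a group and advance only that group's offset."""
        start = self.offsets[group][partition]
        batch = self.partitions[partition][start:start + max_records]
        self.offsets[group][partition] = start + len(batch)
        return batch

    def seek(self, group, partition, offset):
        """Move a group's offset so it can replay records still in the log."""
        self.offsets[group][partition] = offset


log = PartitionedLog(num_partitions=2)
for i in range(3):
    log.append(0, f"event-{i}")

first = log.poll("analytics", 0, max_records=2)  # analytics reads two records
second = log.poll("billing", 0)                  # billing reads independently
log.seek("analytics", 0, 0)                      # analytics rewinds to the start
replayed = log.poll("analytics", 0)
```

Because reading never removes records from the log, the `billing` group sees all three events even though `analytics` has already consumed two of them, and `analytics` can rewind and read them again.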


Deep Dive into Apache Kafka Architecture

Kafka's architecture organizes records into separate topics. Each topic has a partitioned log, a structured commit log that continuously appends new records while preserving their order within each partition. These partitions are distributed and replicated across multiple servers, which provides scalability, fault tolerance, and parallelism. To support multiple subscribers without compromising data order, Kafka assigns specific partitions to consumers within topics. This fusion of messaging models delivers speed, scalability, and durability, and also makes Kafka a robust storage system.
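The idea of mapping records to partitions and partitions to consumers can be sketched in a few lines. This is an illustration under stated assumptions: CRC32 stands in for the hash Kafka's default partitioner uses, the round-robin assignment is a simplification of Kafka's pluggable assignment strategies, and the consumer names are hypothetical.

```python
import zlib


def partition_for(key: bytes, num_partitions: int) -> int:
    """Map a record key to a partition; CRC32 is a stand-in for Kafka's own hash."""
    return zlib.crc32(key) % num_partitions


def assign_partitions(num_partitions, consumers):
    """Give each partition to exactly one consumer in the group, round-robin."""
    ordered = sorted(consumers)
    assignment = {consumer: [] for consumer in ordered}
    for partition in range(num_partitions):
        assignment[ordered[partition % len(ordered)]].append(partition)
    return assignment


# Hypothetical consumer names, for illustration only.
assignment = assign_partitions(
    6, ["consumer-a", "consumer-b", "consumer-c", "consumer-d"]
)
stable = partition_for(b"listing-42", 6) == partition_for(b"listing-42", 6)
```

Records with the same key always land in the same partition, and each partition is read by only one consumer in the group, which is how ordering per key is preserved while work is spread across consumers.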


The architecture is underpinned by four APIs:

- Producer API: Facilitating the publication of records to Kafka topics.

- Consumer API: Enabling subscription to topics and seamless processing of record streams.

- Streams API: Empowering applications to operate as stream processors, transforming input streams from topics into distinct output streams for varied topics.

- Connector API: Streamlining the integration of external applications or data systems into the existing Kafka topics, further enhancing its flexibility and utility.

AWS Kafka Pricing

Amazon Managed Streaming for Apache Kafka (MSK) offers flexible pricing with no minimum fees or upfront commitments. You are not charged for Apache ZooKeeper nodes provisioned by MSK or data transfers within your clusters. Pricing is determined by the type of cluster you create: MSK clusters and MSK Serverless clusters. MSK clusters allow customizable capacity scaling, while Serverless clusters automatically handle capacity. MSK Connect enables Kafka Connect connectors. Private connectivity (AWS PrivateLink) is available at an hourly rate per cluster and authentication scheme, plus data processing charges.
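As a rough illustration of how a provisioned MSK cluster bill adds up, the sketch below assumes the two main components are broker instance hours and provisioned storage per GB-month. The rates in the example are placeholders, not real AWS prices, so take actual figures from the official pricing page.

```python
def estimate_provisioned_monthly_cost(brokers, broker_hourly_rate,
                                      storage_gb, storage_gb_month_rate,
                                      hours_per_month=730):
    """Estimate broker-hours plus storage for one month.

    ZooKeeper nodes and data transfer within the cluster are left out
    because they are not charged.
    """
    broker_cost = brokers * broker_hourly_rate * hours_per_month
    storage_cost = storage_gb * storage_gb_month_rate
    return round(broker_cost + storage_cost, 2)


# Placeholder rates for illustration only, NOT real AWS prices.
cost = estimate_provisioned_monthly_cost(
    brokers=3,
    broker_hourly_rate=0.20,
    storage_gb=3000,
    storage_gb_month_rate=0.10,
)
```

With these placeholder inputs, three brokers running for 730 hours contribute 438 and 3,000 GB of storage contributes 300 to the monthly estimate.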

For detailed pricing and examples, refer to the official documentation on AWS Kafka Pricing.

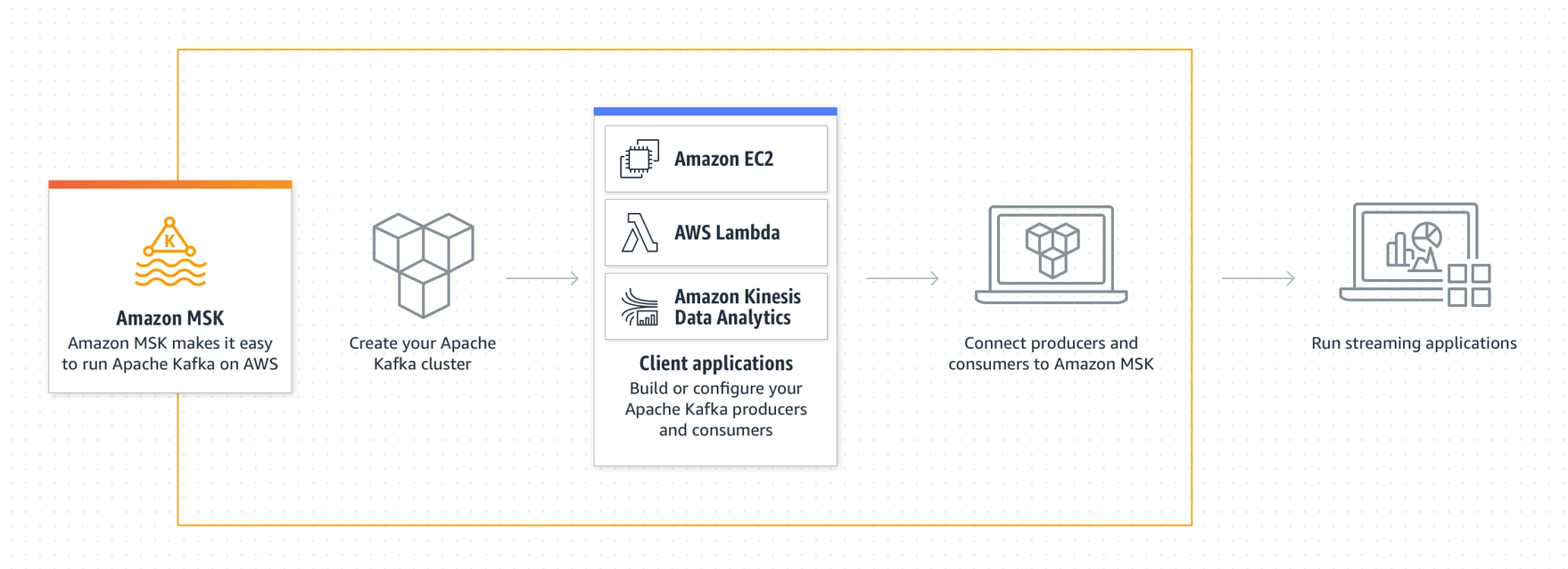

AWS Kafka Tutorial: Getting Started with Amazon MSK

This section provides you with a step-by-step guide on how to get started with Amazon MSK, from setting up a cluster to producing and consuming data, and monitoring the health of your cluster using metrics.

Step 1: Create an Amazon MSK Cluster

Learn how to create your own Amazon MSK cluster, which serves as the backbone of your Kafka-based data streaming infrastructure.
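If you prefer scripting over the console, a cluster can also be requested through the boto3 `kafka` client. The following is a minimal sketch, not the official tutorial code: the cluster name, subnet and security group IDs, Kafka version, and instance type are all placeholder assumptions that you would replace with values valid for your account.

```python
def build_create_cluster_request(name, subnets, security_groups,
                                 kafka_version="3.5.1", brokers=3,
                                 instance_type="kafka.m5.large", volume_gb=100):
    """Assemble keyword arguments for the boto3 `kafka` client's create_cluster call."""
    if brokers % len(subnets) != 0:
        raise ValueError("broker count must be a multiple of the number of subnets")
    return {
        "ClusterName": name,
        "KafkaVersion": kafka_version,  # assumed version; check what MSK supports
        "NumberOfBrokerNodes": brokers,
        "BrokerNodeGroupInfo": {
            "InstanceType": instance_type,
            "ClientSubnets": list(subnets),
            "SecurityGroups": list(security_groups),
            "StorageInfo": {"EbsStorageInfo": {"VolumeSize": volume_gb}},
        },
    }


def create_cluster(request, region="us-east-1"):
    """Submit the request; needs boto3 installed and AWS credentials configured."""
    import boto3  # imported lazily so the builder above works without boto3
    client = boto3.client("kafka", region_name=region)
    return client.create_cluster(**request)["ClusterArn"]


request = build_create_cluster_request(
    "MSKTutorialCluster",                                   # hypothetical name
    subnets=["subnet-aaaa", "subnet-bbbb", "subnet-cccc"],  # placeholder IDs
    security_groups=["sg-xxxx"],                            # placeholder ID
)
```

Calling `create_cluster(request)` would start provisioning and return the cluster ARN. Keeping the request builder separate makes it easy to review the configuration before any billable resource is created.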

Step 2: Create an IAM Role

Set up an IAM role to ensure secure communication and interaction between your resources and the Amazon MSK cluster.
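When the cluster uses IAM access control, the role's policy typically grants `kafka-cluster:*` actions on the cluster, its topics, and its consumer groups. The sketch below builds such a policy document. The region, account ID, and cluster name are placeholders, and the exact set of actions is an assumption that you should narrow to what your clients need.

```python
import json


def build_msk_client_policy(region, account_id, cluster_name):
    """Build an IAM policy document that lets a client use topics on one cluster."""
    base = f"arn:aws:kafka:{region}:{account_id}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ["kafka-cluster:Connect", "kafka-cluster:DescribeCluster"],
                "Resource": [f"{base}:cluster/{cluster_name}/*"],
            },
            {
                "Effect": "Allow",
                "Action": [
                    "kafka-cluster:CreateTopic",
                    "kafka-cluster:DescribeTopic",
                    "kafka-cluster:WriteData",
                    "kafka-cluster:ReadData",
                ],
                "Resource": [f"{base}:topic/{cluster_name}/*"],
            },
            {
                "Effect": "Allow",
                "Action": ["kafka-cluster:AlterGroup", "kafka-cluster:DescribeGroup"],
                "Resource": [f"{base}:group/{cluster_name}/*"],
            },
        ],
    }


# Placeholder region, account ID, and cluster name for illustration.
policy = build_msk_client_policy("us-east-1", "111122223333", "MSKTutorialCluster")
policy_json = json.dumps(policy, indent=2)
```

The resulting JSON can be pasted into the IAM console or attached to a role with your usual tooling.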

Step 3: Create a Client Machine

Discover how to set up a client machine to interact with your Amazon MSK cluster, enabling you to produce and consume data seamlessly.

Step 4: Create a Topic

Learn how to create a Kafka topic, the basic organizational unit for streaming and processing data.
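On the client machine, topic creation usually comes down to a client configuration file plus one call to the `kafka-topics.sh` tool that ships with Apache Kafka. The sketch below assumes IAM authentication with the AWS MSK IAM auth library on the client's classpath. The bootstrap address, topic name, and file paths are placeholders.

```python
# Client settings for IAM authentication (assumes the aws-msk-iam-auth jar is installed).
IAM_CLIENT_PROPERTIES = {
    "security.protocol": "SASL_SSL",
    "sasl.mechanism": "AWS_MSK_IAM",
    "sasl.jaas.config": "software.amazon.msk.auth.iam.IAMLoginModule required;",
    "sasl.client.callback.handler.class":
        "software.amazon.msk.auth.iam.IAMClientCallbackHandler",
}


def render_properties(props):
    """Render a dict as the text of a Java-style .properties file."""
    return "\n".join(f"{key}={value}" for key, value in props.items()) + "\n"


def create_topic_command(bootstrap_servers, topic, partitions=1,
                         replication_factor=3, kafka_home=".",
                         config_path="client.properties"):
    """Build the kafka-topics.sh argument list, ready for subprocess.run."""
    return [
        f"{kafka_home}/bin/kafka-topics.sh", "--create",
        "--bootstrap-server", bootstrap_servers,
        "--command-config", config_path,
        "--replication-factor", str(replication_factor),
        "--partitions", str(partitions),
        "--topic", topic,
    ]


properties_text = render_properties(IAM_CLIENT_PROPERTIES)
# Placeholder broker address and topic name.
command = create_topic_command("b-1.example.amazonaws.com:9098", "MSKTutorialTopic")
```

Writing `properties_text` to `client.properties` and passing `command` to `subprocess.run` on a machine with Kafka installed would create the topic.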

Step 5: Produce and Consume Data

Dive into the process of producing and consuming data using your Amazon MSK cluster, a fundamental capability for any data streaming application.
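As one possible way to do this from application code, the sketch below uses the third-party `kafka-python` package. It assumes the cluster exposes a TLS listener that does not require IAM authentication, because IAM auth needs extra client configuration not shown here. The topic, group, and event fields are hypothetical.

```python
import json


def encode_event(event: dict) -> bytes:
    """Serialize an event as UTF-8 JSON for the record value."""
    return json.dumps(event, sort_keys=True).encode("utf-8")


def decode_event(raw: bytes) -> dict:
    """Inverse of encode_event."""
    return json.loads(raw.decode("utf-8"))


def produce(bootstrap_servers, topic, events):
    """Send events keyed by store so each store's events stay in order."""
    from kafka import KafkaProducer  # kafka-python, imported lazily
    producer = KafkaProducer(bootstrap_servers=bootstrap_servers,
                             security_protocol="SSL",
                             value_serializer=encode_event)
    for event in events:
        producer.send(topic, key=event["store_id"].encode("utf-8"), value=event)
    producer.flush()
    producer.close()


def consume(bootstrap_servers, topic, group_id, limit=10):
    """Read up to `limit` events, starting from the earliest retained offset."""
    from kafka import KafkaConsumer  # kafka-python, imported lazily
    consumer = KafkaConsumer(topic,
                             bootstrap_servers=bootstrap_servers,
                             security_protocol="SSL",
                             group_id=group_id,
                             auto_offset_reset="earliest",
                             value_deserializer=decode_event,
                             consumer_timeout_ms=5000)
    events = []
    for message in consumer:
        events.append(message.value)
        if len(events) >= limit:
            break
    consumer.close()
    return events


# Hypothetical purchase event, for illustration only.
sample = {"store_id": "store-7", "order_id": 1001, "amount": 59.90}
round_trip = decode_event(encode_event(sample))
```

With a reachable cluster, `produce(servers, "purchases", [sample])` followed by `consume(servers, "purchases", "analytics")` would publish the event and read it back.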

Step 6: Use Amazon CloudWatch for Metrics

Explore how to monitor the health and performance of your Amazon MSK cluster using Amazon CloudWatch metrics, ensuring smooth operation.
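Metrics can also be pulled programmatically. The sketch below builds a `get_metric_statistics` request for the `AWS/Kafka` namespace. The choice of the `CpuUser` metric, the five-minute period, and the cluster name are assumptions for illustration.

```python
from datetime import datetime, timedelta, timezone


def build_metric_query(cluster_name, broker_id, metric="CpuUser",
                       minutes=60, now=None):
    """Assemble kwargs for CloudWatch get_metric_statistics on one broker."""
    end = now or datetime.now(timezone.utc)
    return {
        "Namespace": "AWS/Kafka",
        "MetricName": metric,
        "Dimensions": [
            {"Name": "Cluster Name", "Value": cluster_name},
            {"Name": "Broker ID", "Value": str(broker_id)},
        ],
        "StartTime": end - timedelta(minutes=minutes),
        "EndTime": end,
        "Period": 300,  # seconds per datapoint
        "Statistics": ["Average"],
    }


def fetch_datapoints(query, region="us-east-1"):
    """Run the query; needs boto3 installed and AWS credentials configured."""
    import boto3  # imported lazily so the builder above works without boto3
    client = boto3.client("cloudwatch", region_name=region)
    return client.get_metric_statistics(**query)["Datapoints"]


fixed_now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
# Hypothetical cluster name; broker IDs are numbered from 1.
query = build_metric_query("MSKTutorialCluster", 1, now=fixed_now)
```

Passing `query` to `fetch_datapoints` would return the average user CPU per five-minute window for broker 1 over the last hour.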

Step 7: Cleanup - Delete AWS Resources

Finish the tutorial by learning how to properly delete the AWS resources you've created during this tutorial, ensuring that you're only billed for what you actually use.

Please note that this is a high-level overview of the steps. Each step may involve multiple detailed sub-steps and commands, and you should refer to the official AWS documentation for comprehensive instructions and command examples.

AWS Kafka Use Cases

Kafka is primarily used in building real-time streaming data pipelines and applications. Acting as the backbone of such pipelines, Kafka ensures the reliable processing and movement of data between different systems. It ingests and stores streaming data, enabling the creation of dynamic applications that consume these data streams. Beyond user activity tracking, Kafka is a versatile solution across various industries, from healthcare to technology giants. It is also used to record and track database changes, capturing every create, update, and delete operation with minimal latency. Its other use cases include:

- Log and Event Stream Processing: Leverage Amazon MSK to ingest and process log and event streams. Utilize Apache Zeppelin notebooks to express stream processing logic, enabling real-time insights from data streams.

- Centralized Data Buses: Create real-time, centralized, and private data buses using Amazon MSK and Apache Kafka's log structure. This architecture facilitates efficient data sharing and communication between different components of your application.

- Event-Driven Systems: Build event-driven systems that instantly ingest and respond to digital changes in your applications and business infrastructure. Amazon MSK enables you to handle real-time events and updates efficiently.

AWS Kafka vs Kinesis

AWS Kafka and Kinesis are both prominent real-time data streaming platforms, each with its distinct features. Kafka, an open-source solution, offers high throughput and fault-tolerant data streaming, making it suitable for complex data pipelines. On the other hand, Kinesis is a managed service that simplifies setup and maintenance, making it ideal for users seeking ease of use and integration with other AWS services. Kafka provides fine-grained control over data retention and processing, while Kinesis excels in auto-scaling and seamless integration with the AWS ecosystem.

Check out ProjectPro’s tool analyzer for more detailed information on the comparison of AWS Kafka and Kinesis.

Best Practices for Running Apache Kafka on AWS

Here are the essential best practices for running Apache Kafka on AWS. They help ensure seamless and efficient operation, so you can harness Kafka's full potential for data streaming and processing in the AWS environment.

-

Deployment Considerations and Patterns: Choose appropriate deployment patterns, such as multi-broker clusters across availability zones for fault tolerance. Leverage managed Kafka services like Amazon MSK for simplified management.

-

Storage Options: Utilize Amazon EBS or EFS for broker storage, considering throughput and durability requirements. Segment log data onto different storage volumes for better performance.

-

Instance Types: Select EC2 instance types based on workload requirements. Utilize instances with high network bandwidth and CPU for optimal Kafka performance.

-

Networking: Configure VPC, subnets, and security groups for isolation and control communication. Use PrivateLink for secure communication within a VPC.

-

Upgrades: Plan and test Kafka version upgrades in a non-production environment. Use rolling upgrades to ensure minimal downtime during updates.

-

Performance Tuning: Adjust Kafka parameters (e.g., num.io.threads, num.network.threads) based on workload characteristics. Optimize OS-level settings and JVM parameters for improved throughput.

-

Monitoring: Implement comprehensive monitoring using CloudWatch, Amazon Managed Grafana or third-party tools. Monitor broker metrics, topic activity, consumer lag, and resource utilization.

-

Security: Enable encryption in transit and at rest. Use AWS Identity and Access Management (IAM) roles for fine-grained access control. Implement VPC network isolation, and consider using Amazon VPC peering or PrivateLink.

-

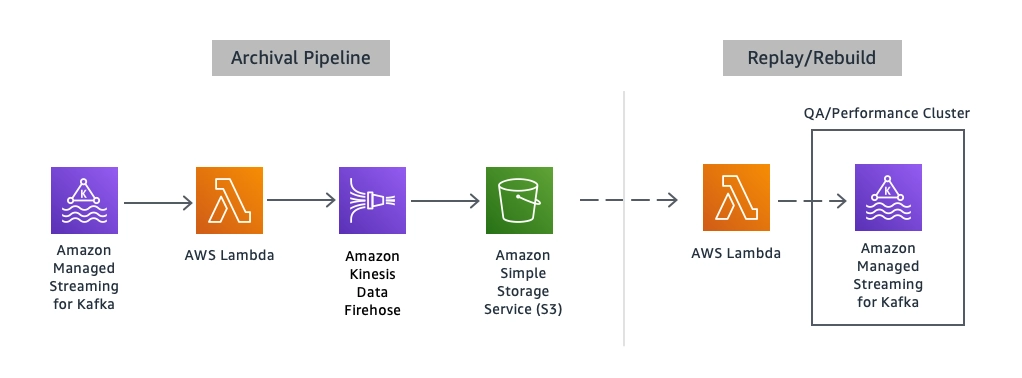

Backup and Restore: Regularly back up Kafka data and configurations. Utilize Amazon S3 for data backups—practice disaster recovery scenarios to ensure timely data restoration.
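Consumer lag, one of the monitoring signals listed above, is simply the gap between the newest offset in a partition and the offset a consumer group has committed. The sketch below shows the arithmetic with made-up offsets. In practice the two inputs would come from your Kafka client or monitoring tool.

```python
def consumer_lag(end_offsets, committed_offsets):
    """Per-partition lag: latest log offset minus the group's committed offset."""
    return {
        partition: end - committed_offsets.get(partition, 0)
        for partition, end in end_offsets.items()
    }


def lagging_partitions(lag, threshold):
    """Partitions whose lag exceeds the alert threshold, in ascending order."""
    return sorted(p for p, behind in lag.items() if behind > threshold)


# Made-up offsets for three partitions of one topic.
lag = consumer_lag(
    end_offsets={0: 1200, 1: 950, 2: 400},
    committed_offsets={0: 1180, 1: 300, 2: 400},
)
alerts = lagging_partitions(lag, threshold=100)
```

Here partition 1 is 650 records behind and would trigger an alert, while partitions 0 and 2 are keeping up.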


Apache Kafka Project Ideas with Source Code

Check out the following top three project ideas to understand the practical implementation of Kafka in real-world scenarios:

1. PySpark Project - Build a Data Pipeline

This PySpark ETL project focuses on building a streamlined data pipeline using PySpark, Confluent Kafka, and Amazon Redshift. Working on this project will help you learn how to build a PySpark data pipeline integrating Confluent Kafka for real-time streaming and Amazon Redshift for robust data warehousing. You will also learn how to perform ETL/ELT operations seamlessly, harnessing Kafka's distributed data storage and Redshift's managed petabyte-scale cloud data warehouse.

Source Code: PySpark Project-Build a Data Pipeline using Kafka and Redshift

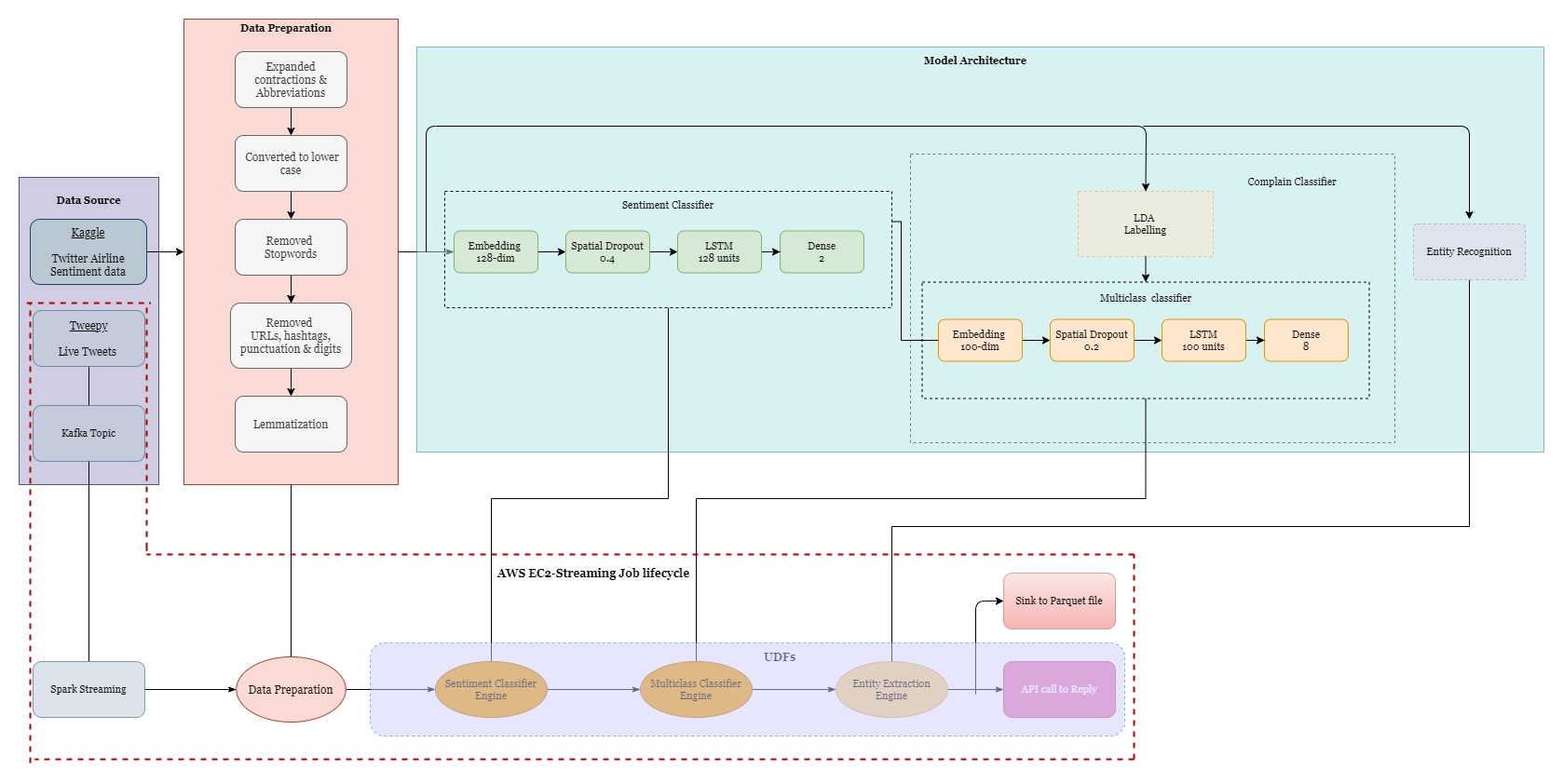

2. Deploy auto-reply Twitter Handle

This project focuses on deploying an auto-reply Twitter handle using Kafka, Spark, and LSTM for enhanced customer support. The system ingests and processes live tweets via Kafka and Spark, utilizing NLP pipelines for sentiment analysis, topic classification, and named entity recognition. Deep learning LSTM models predict query categories, enabling automated responses with custom ticket IDs. Implemented in Python with Flask and various other libraries, the project leverages AWS for final deployment, offering efficient real-time engagement and insights extraction from social media data.

Source Code: Deploying auto-reply Twitter handle with Kafka, Spark, and LSTM

3. Log Analytics Project

This Spark project utilizes NASA Kennedy Space Center's production logs for real-time log analytics. It employs Apache Spark, Python, and Kafka to process and analyze web server logs, yielding insights for network troubleshooting, security, customer service, and compliance. By utilizing Kafka Streaming, Spark, and Cassandra, it conducts real-time analysis of log data, supported by visualization through web apps like Dash and Plotly.

Source Code: Log Analytics Project with Spark Streaming and Kafka

Become a Kafka Pro with ProjectPro’s Guided Projects

Acquiring theoretical knowledge about AWS Kafka lays a solid foundation, but true mastery comes from turning that theory into practical expertise. If you're looking for a way to do that, consider exploring ProjectPro. With its solved and guided project solutions, you'll unravel Kafka's complexities and develop the skills needed to become a Kafka expert. Start your Kafka learning adventure with ProjectPro today and build the expertise to drive innovation and success in the ever-evolving data technology landscape.


FAQs on AWS Kafka

1. What is AWS Kafka used for?

AWS Kafka is used for real-time data streaming and event processing, enabling applications to communicate and share data efficiently and reliably.

2. What is the Kafka equivalent in AWS?

The equivalent service to Kafka in AWS is Amazon Managed Streaming for Apache Kafka (Amazon MSK).

3. Can Kafka be used in AWS?

Yes, Kafka can be used in AWS through the Amazon Managed Streaming for Apache Kafka (Amazon MSK) service.

4. What is a Kafka-managed service?

A Kafka-managed service, such as Amazon MSK, is a fully managed platform provided by cloud providers (like AWS) that handles the deployment, configuration, maintenance, and scaling of Apache Kafka clusters.

About the Author

Nishtha

Nishtha is a professional Technical Content Analyst at ProjectPro with over three years of experience in creating high-quality content for various industries. She holds a bachelor's degree in Electronics and Communication Engineering and is an expert in creating SEO-friendly blogs, website copies,