Deep Learning vs Machine Learning – What's the Difference?

Machine Learning vs Deep Learning – Understand how the two differ in terms of data, hardware, and performance.

“Machine Learning” and “Deep Learning” are two of the most frequently confused terms in the AI world, and they are often used interchangeably. What is not in doubt is that both fields are growing rapidly. According to Forbes, the global machine learning market will be worth $30.6 billion by 2024 and the deep learning market is expected to reach $10.2 billion by 2025, expanding at CAGRs of 42.8% and 52.1% respectively.

Artificial Intelligence, deep learning, machine learning — whatever you’re doing if you don’t understand it — learn it. Because otherwise, you’re going to be a dinosaur within 3 years. -Mark Cuban

“Machine learning and deep learning will create a new set of hot jobs in the next 5 years.” ~Dave Waters.

These quotes from industry experts suggest that we can expect a surge in demand for deep learning and machine learning engineers in the next decade. Project Advisors at ProjectPro are often asked about this by aspiring data science professionals who want to build a career in “Data Science” or “Artificial Intelligence” but are not sure how the frequently paired terms “Machine Learning” and “Deep Learning” differ. Before making a career-defining choice in these fields, it is important to understand the differences and similarities between the two. Viewed from the surface, machine learning and deep learning often seem to describe the same thing, because deep learning is a subset of machine learning. However, the two are not exactly the same, even though they share several characteristics. Fear not! What follows is a straightforward and easy-to-understand primer on “Deep Learning” vs “Machine Learning”.

Table of Contents

- Deep Learning vs Machine Learning – Understanding the Differences

- Machine Learning vs Deep Learning – The Definition

- Deep Learning vs Machine Learning – Which one to choose based on data?

- Deep Learning vs Machine Learning – Which one is performant?

- Machine Learning vs Deep Learning – Which one to choose based on the problem?

- Machine Learning vs Deep Learning – What specialized hardware and computing power are needed?

- Choosing Between Machine Learning and Deep Learning

Deep Learning vs Machine Learning – Understanding the Differences

So, let’s dive in.

Machine Learning vs Deep Learning – The Definition

What is Machine Learning?

In this article, “machine learning” refers to traditional machine learning and excludes deep learning, even though deep learning is a subset of machine learning. Machine learning uses a set of learning algorithms (supervised or unsupervised) to analyze data, interpret it, learn from it, and support the best possible business decisions based on what was learned. Machine learning engineers use statistical techniques and computer science fundamentals to make machines learn from existing data and improve with experience, without every rule having to be programmed explicitly. Common machine learning algorithms include SVM, K-means clustering, Naïve Bayes, Linear Regression, Logistic Regression, Neural Networks, Ensemble Methods, and Decision Trees.

Let’s understand this with an example in which you want a machine learning algorithm to sort a collection of images into two categories of fruit: apples and oranges. How will the ML algorithm know which image shows an apple and which shows an orange? You label the images and describe each one with specific features of the fruit, such as color or shape. The algorithm learns from these labeled examples and can then classify other images of apples and oranges based on the same features. In short, traditional machine learning requires you to manually select features from the data, train the model to recognize patterns in those features, and then use it to make predictions on new data that arrives in the system.
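The manual-feature workflow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the two features (redness and roundness), their values, and the nearest-centroid classifier are all hypothetical choices made for this example, not a method prescribed by the article.

```python
# Hypothetical hand-picked features for each fruit image:
# (average redness 0-1, roundness 0-1). The values are made up for illustration.
training_data = [
    ((0.90, 0.80), "apple"),
    ((0.85, 0.75), "apple"),
    ((0.80, 0.85), "apple"),
    ((0.55, 0.95), "orange"),
    ((0.60, 0.98), "orange"),
    ((0.50, 0.92), "orange"),
]


def fit_centroids(samples):
    """Average the feature vectors of each label (the 'training' step)."""
    sums, counts = {}, {}
    for features, label in samples:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {
        label: tuple(total / counts[label] for total in acc)
        for label, acc in sums.items()
    }


def predict(centroids, features):
    """Return the label whose centroid is closest to the given features."""
    def squared_distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(centroid, features))

    return min(centroids, key=lambda label: squared_distance(centroids[label]))


centroids = fit_centroids(training_data)
print(predict(centroids, (0.88, 0.78)))  # a red, slightly less round fruit
print(predict(centroids, (0.52, 0.96)))  # a less red, very round fruit
```

Note that the model only ever sees the two numbers a human chose to extract. If those features fail to separate the classes, the fix is more feature engineering, not more training.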

What is Deep Learning?

Deep learning is a subset of machine learning, inspired by the structure of the human brain, that teaches machines to do what comes naturally to humans: learn by example. Deep learning models work in a way loosely similar to how humans pass a query through a hierarchy of concepts to answer a question. From self-driving cars to chatbots and personal assistants like Siri and Alexa, deep learning has garnered a lot of attention lately, and for good reasons. Deep learning models are popular because they can achieve state-of-the-art accuracy, at times outperforming humans. The word “deep” refers to the number of hidden layers in a neural network. Traditional neural networks typically have only 2 or 3 hidden layers, while a deep neural network can have as many as 150, hence the name “Deep Learning”. Common deep learning algorithms include deep Q-networks, recurrent neural networks, and convolutional neural networks. Deep learning models usually perform classification tasks directly on sound, text, or images (unstructured data).
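To make the idea of depth concrete, here is a minimal sketch of a fully connected network in plain Python, where the only difference between the shallow and the deep variant is the number of hidden layers. The layer sizes, the random untrained weights, and the helper names (`build_network`, `forward`, `hidden_layer_count`) are assumptions made for illustration only.

```python
import math
import random


def build_network(layer_sizes, seed=0):
    """Create one (weights, biases) pair per layer transition, randomly initialized."""
    rng = random.Random(seed)
    layers = []
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        weights = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]
        biases = [0.0] * n_out
        layers.append((weights, biases))
    return layers


def forward(layers, inputs):
    """Pass the inputs through every layer, applying tanh after each one."""
    activations = inputs
    for weights, biases in layers:
        activations = [
            math.tanh(sum(w * a for w, a in zip(row, activations)) + b)
            for row, b in zip(weights, biases)
        ]
    return activations


def hidden_layer_count(layers):
    """Every layer except the final output layer is a hidden layer."""
    return len(layers) - 1


shallow = build_network([4, 8, 2])         # input 4 -> one hidden layer -> output 2
deep = build_network([4, 8, 8, 8, 8, 2])   # input 4 -> four hidden layers -> output 2

print(hidden_layer_count(shallow), hidden_layer_count(deep))
print(forward(deep, [0.1, 0.2, 0.3, 0.4]))  # untrained, so the values are arbitrary
```

The networks here are untrained, so their outputs carry no meaning. The sketch only shows that going deeper means stacking more hidden layers between the same input and output.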

Let’s consider the same example of classifying apples and oranges. To solve this problem, a deep learning model takes a different approach. Deep learning models don’t require structured data or hand-defined features, so there is no need to describe what distinguishes the two fruits. The artificial neural network (ANN) passes the collection of images through its layers, and each layer hierarchically defines features of the images. Having processed the data through these layers, the system finds the right features for telling apples and oranges apart from the images themselves. Now, what if you add images of bananas to your data? A traditional machine learning model would struggle, because it performs well only on a predetermined set of features and becomes inaccurate when new features are introduced into the system. This is where deep learning proves efficient and works best.

Another simple real-life example of deep learning is an on-demand video streaming service like Netflix, Hotstar, or Amazon Prime. For these video streaming services to decide on which new movies or genres to recommend to a viewer, the models behind the scenes associate the preferences of a viewer with other users of the service who have a similar taste. The key behind Netflix’s recommender systems is that they don’t need the user to interact with every movie in the list of recommended movies. Instead, even if the user interacts with at least one movie on the list, the recommendations are said to have worked. This process of personalized recommendation is often touted as artificial intelligence and is used widely across many services today.

From these definitions it is clear that traditional machine learning models do get better at whatever they are trained to do, but they still need some human intervention. For example, if a traditional ML algorithm returns inaccurate predictions, a machine learning engineer has to step in and adjust the features and other variables to improve accuracy. A deep learning model, by contrast, uses the error in its predictions during training to adjust the weights of its neural network on its own, so it needs far less manual tuning of features.

Deep Learning vs Machine Learning – Which one to choose based on data?

For any given task, an in-depth understanding of the dataset helps identify whether to use deep learning or machine learning. Data is the deciding factor when choosing between the two. Traditional machine learning algorithms work well with structured data, that is, numeric or categorical data in tabular format organized into rows and columns. When the dataset contains non-tabular data such as text, sensor data, signal data, videos, or images, deep learning works best. Though it is possible to apply machine learning to non-tabular data, it requires data manipulation. For example, if you are working with sensor data, then to apply traditional machine learning algorithms the sensor data needs to be converted by extracting windowed features using statistical metrics like skewness, mean, median, etc.
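The windowed feature extraction mentioned above could look roughly like the following sketch. It assumes a one-dimensional signal, non-overlapping windows, and population skewness. The function names and the sample readings are hypothetical.

```python
import statistics


def skewness(values):
    """Population skewness: the mean of the cubed standardized values."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)
    if std == 0:
        return 0.0  # a constant window has no asymmetry
    return sum(((v - mean) / std) ** 3 for v in values) / len(values)


def windowed_features(signal, window_size):
    """Split the signal into non-overlapping windows and summarize each one."""
    rows = []
    for start in range(0, len(signal) - window_size + 1, window_size):
        window = signal[start:start + window_size]
        rows.append({
            "mean": statistics.fmean(window),
            "median": statistics.median(window),
            "skewness": skewness(window),
        })
    return rows


# Made-up sensor readings: a steady ramp followed by a window with one spike.
readings = [1.0, 2.0, 3.0, 4.0, 2.0, 2.0, 2.0, 10.0]
rows = windowed_features(readings, window_size=4)
for row in rows:
    print(row)
```

Each row is now an ordinary tabular record, so it can be fed to any traditional machine learning algorithm. The spike in the second window shows up as a mean well above the median and a positive skewness.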

Apart from the type of data you are working with, the amount of data also plays a vital role in deciding whether to use machine learning or deep learning. Deep learning is gaining popularity because of its ability to handle complex data and extract complex patterns and relationships from it. To do so, deep learning models require far more training data than traditional machine learning models: say, millions of quality labeled data points, even for a simple classification task.

“The analogy to deep learning is that the rocket engine is the deep learning models and the fuel is the huge amounts of data we can feed to these algorithms.” - Andrew Ng, the chief scientist of China’s major search engine Baidu and one of the leaders of the Google Brain Project

To apply deep learning to a given dataset, you need very large amounts of training data to make sure the model does not overfit. A good example is Tesla’s autonomous driving software, which requires millions of hours of video and images to function efficiently. In contrast, machine learning works well even with a smaller and simpler training dataset, say just a few thousand data points.

Deep Learning vs Machine Learning – Which one is performant?

Our brain needs rest and fuel to recharge; otherwise it gets tired and is prone to careless mistakes. That is not the case with deep learning models. Deep learning is said to have outperformed humans in a few image classification and recognition tasks. If a deep learning model is trained well, it can perform hundreds of thousands of repetitive tasks in less time than a human brain would.

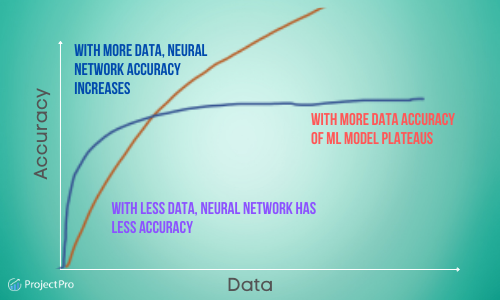

As the amount of training data increases, the accuracy of deep learning models generally increases as well. However, common machine learning models like Naïve Bayes and SVM stop showing improvement after a certain saturation level. The quality and performance of a deep learning model tend not to diminish as more data is added, unless the training data is not relevant to the business problem being solved. This implies that a deep learning model often keeps improving, while a traditional machine learning model plateaus at some point regardless of how much more training data is fed to it.

That said, although deep learning models are often more accurate than traditional machine learning models, they are almost impossible to interpret because they model extremely complex non-linear problems. Choosing between machine learning and deep learning therefore involves a trade-off between the accuracy and the interpretability of the model. Traditional machine learning models are easy to understand and interpret because of direct feature engineering. In deep learning models, feature engineering is done by the machine, so it is very difficult for humans to understand and interpret the model. Though deep learning minimizes human intervention through automatic feature engineering, model interpretability is one of its biggest challenges.

Machine Learning vs Deep Learning – Which one to choose based on the problem?

Traditional machine learning algorithms produce a good enough model for many problems, but for tasks like NLP, traditional machine learning has historically not worked well and deep learning is a better choice. Deep learning models work best on problems that are not linearly separable, because their hidden layers can transform the inputs into a representation in which the classes become linearly separable. Deep learning requires neither feature engineering nor structured data, so it is a good choice for NLP tasks, image classification and recognition, complex predictive systems, and adaptive imitation applications. Deep learning models also require minimal human intervention, which reduces the likelihood of human bias and makes them a good choice for these tasks.
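The classic XOR problem is a small illustration of turning a non-separable problem into a linearly separable one. No single straight line separates the XOR classes in the input space, but one hidden layer can remap the inputs so that a single linear threshold suffices. In the sketch below the weights are picked by hand rather than learned, and the function names are hypothetical.

```python
def step(z):
    """Threshold activation: fire (1) when the weighted sum is positive."""
    return 1 if z > 0 else 0


def hidden(x1, x2):
    """Hidden layer with two units: h1 acts like OR, h2 acts like AND."""
    h1 = step(x1 + x2 - 0.5)
    h2 = step(x1 + x2 - 1.5)
    return h1, h2


def xor_network(x1, x2):
    """Output unit: a single linear threshold applied to the hidden representation."""
    h1, h2 = hidden(x1, x2)
    return step(h1 - h2 - 0.5)


for x1, x2 in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print((x1, x2), "-> hidden", hidden(x1, x2), "-> output", xor_network(x1, x2))
```

In the hidden space the four inputs collapse to three points, (0, 0), (1, 0) and (1, 1), and the line h1 - h2 = 0.5 separates the positive class from the negative one. A trained deep network finds such intermediate representations from data instead of having them set by hand.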

Traditional machine learning models should be preferred for problems where you need quicker results, because they train faster and need less computing power. The training time of a traditional machine learning model grows with the number of features and observations. However, machine learning engineers need to spend a good amount of time on feature engineering to improve the accuracy of the model.

Machine Learning vs Deep Learning – What specialized hardware and computing power are needed?

The speed of a machine learning or deep learning algorithm depends on the dataset size, the complexity of the data, the nature of the algorithm, and the hardware on which the model is trained or deployed in production. The choice between machine learning and deep learning for a given problem therefore also depends on the hardware available. Traditional machine learning models train faster and require less computing power than deep learning models, which might take a few weeks to train depending on the computing power and hardware available. Publicly available datasets and pre-trained networks have reduced the time needed to train deep learning models through transfer learning, but it is still easy to underestimate the real-world practicalities of deploying a deep network in production.

Deep learning models perform complex operations like convolutions and therefore need specialized hardware, unlike machine learning models, which can be trained on normal desktop CPUs. Though a deep learning model can be trained on a CPU, it will take a lot more time, so given the high computational requirements it is advisable to choose deep learning if GPUs are available.

Choosing Between Machine Learning and Deep Learning

Deep learning and machine learning are both integral parts of artificial intelligence. Both processes begin with training a model on training data and evaluating it on test data, and both go through an optimization phase to identify the weights that make the model best fit the data. Both work well for regression and classification problems; however, there are a few applications, like language translation and object recognition, where deep learning models are a better fit than traditional machine learning models. The task at hand determines whether a data science project is more suited to machine learning or deep learning. Common tasks where either can be applied include identifying and classifying images, enhancing signals, predicting an output, responding to text or speech, moving in simulation or physically, and uncovering trends. While tasks like uncovering trends or predicting an output might be a good fit for machine learning, an end-to-end AI system implementation would consist of several steps which, when considered together, might be a great fit for deep learning.
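The optimization phase that both approaches share, searching for the weights that best fit the training data, can be sketched with plain gradient descent on a one-variable linear model. The data, learning rate, and number of epochs below are assumptions chosen so that the toy example converges. Real projects would normally rely on a library optimizer.

```python
# Toy training data generated from y = 2x + 1 (an assumption for this example).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]


def train(xs, ys, learning_rate=0.05, epochs=2000):
    """Fit y = w * x + b by gradient descent on the mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        # Step against the gradient to reduce the error.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


w, b = train(xs, ys)
print(f"w = {w:.4f}, b = {b:.4f}")
```

A deep network is trained with the same basic loop, only with many more weights and with gradients computed layer by layer through backpropagation.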

About the Author

ProjectPro
