MapReduce vs. Pig vs. Hive



MapReduce vs. Pig vs. Hive - Comparison between the key tools of Hadoop

Eric Schmidt, then CEO of Google, said: “There were 5 exabytes of information created by the entire world between the dawn of civilization and 2003. Now that same amount is created every two days.”

What is most impressive is that this enormous volume of data can be stored, processed and analysed in a reasonable time using open source frameworks like Hadoop.

Once big data is loaded into Hadoop, what is the best way to use it? This question puzzles most Hadoop developers. Collecting huge amounts of unstructured data does not help unless there is an effective way to draw meaningful insights from it, so Hadoop developers have to filter and aggregate the data before it can be used for business analytics. As with any big data problem, choosing the right tool gets the job done faster and better. There are several coding approaches to choose from: writing Hadoop MapReduce directly, or using alternative components like Apache Pig and Hive. Each approach has its pros and cons, and it is up to Hadoop developers to evaluate which one best fits their business requirements and skills.


For programmers who are not familiar with Hadoop MapReduce, here is a brief explanation. It is a framework and programming model in the Hadoop ecosystem for processing large, often unstructured, data sets in a distributed manner across a large number of nodes. Pig and Hive are components that sit on top of the Hadoop framework and process large data sets without users having to write Java-based MapReduce code.

Pig and Hive, the open source alternatives to hand-written Hadoop MapReduce, were built so that Hadoop developers could express what would otherwise be Java MapReduce jobs in a less verbose way, with fewer lines of code that are easier to understand. Pig, Hive and MapReduce are complementary components of the Hadoop stack. A Hadoop developer who knows Pig and/or Hive does not have to learn Java; however, certain jobs can be executed more effectively with the Hadoop MapReduce coding approach than with Pig or Hive scripts, and vice versa.

Coding Approach Using Hadoop MapReduce

MapReduce is a powerful programming model for parallelism based on a rigid procedural structure. Hadoop MapReduce allows programmers to filter and aggregate data from HDFS to gain meaningful insights from big data. The Map and Reduce functions are usually written in Java, but they can also be implemented in languages such as C or Python. The main drawback of the Hadoop MapReduce coding approach is that Hadoop developers need to write many lines of low-level Java code, which requires extra effort and time for code review and QA. To simplify this, Apache offers higher-level options such as Pig Latin and HiveQL, which make it easier to construct MapReduce programs.
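The rigid map, shuffle and reduce structure described above can be mimicked in a few lines of plain Java. The following is only an in-memory sketch of the programming model, not Hadoop code: it uses no Hadoop classes, runs on a single machine, and the class and method names (`MapReduceModelSketch`, `map`, `shuffle`, `reduce`, `run`) are hypothetical names chosen for illustration.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Plain-Java illustration of the map -> shuffle -> reduce structure.
// No Hadoop classes are used; every name here is hypothetical.
final class MapReduceModelSketch {

    // Map phase: turn one input line into (word, 1) pairs.
    static List<Map.Entry<String, Integer>> map(String line) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        for (String token : line.trim().split("\\s+")) {
            if (!token.isEmpty()) {
                pairs.add(Map.entry(token, 1));
            }
        }
        return pairs;
    }

    // Shuffle phase: group all emitted values by key, sorted by key.
    static Map<String, List<Integer>> shuffle(List<Map.Entry<String, Integer>> pairs) {
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (Map.Entry<String, Integer> pair : pairs) {
            grouped.computeIfAbsent(pair.getKey(), k -> new ArrayList<>()).add(pair.getValue());
        }
        return grouped;
    }

    // Reduce phase: collapse all values of one key into a single result.
    static int reduce(String key, List<Integer> values) {
        int sum = 0;
        for (int value : values) {
            sum += value;
        }
        return sum;
    }

    // Runs the three phases in sequence over a small in-memory input.
    static Map<String, Integer> run(List<String> lines) {
        List<Map.Entry<String, Integer>> emitted = new ArrayList<>();
        for (String line : lines) {
            emitted.addAll(map(line));
        }
        Map<String, Integer> result = new TreeMap<>();
        shuffle(emitted).forEach((key, values) -> result.put(key, reduce(key, values)));
        return result;
    }

    public static void main(String[] args) {
        // prints {hive=2, mapreduce=1, pig=3}
        System.out.println(run(List.of("pig hive pig", "hive mapreduce pig")));
    }
}
```

In a real Hadoop job the framework performs the shuffle step itself and distributes the map and reduce calls across the cluster; the developer supplies only the map and reduce logic plus a driver program.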


With Hadoop MapReduce as the coding approach, join functionality is hard to achieve, which makes complex business logic difficult and time consuming to implement. A lot of development effort goes into deciding how the different map-side and reduce-side joins will take place, and there is a chance that Hadoop developers will not be able to map the data into the required schema format. The advantage, however, is that MapReduce provides more control for writing complex business logic than Pig and Hive do. At times a job might require several Hive queries, for instance with 12 levels of nested FROM clauses; such a job becomes difficult for Hadoop developers to write using the MapReduce coding approach.

Most jobs can be run using Pig and Hive, but to make use of the advanced application programming interfaces, Hadoop developers must use the MapReduce coding approach. If there are large data sets that Pig and Hive cannot handle, for instance because of the way keys are distributed, Hadoop MapReduce comes to the rescue. Hadoop developers can choose Hadoop MapReduce over Pig and Hive in the following circumstances:

- When Hadoop developers need fine-grained control over the driver program, they should use Hadoop MapReduce instead of Pig and Hive.

- Whenever the job requires a custom partitioner, Hadoop developers can choose MapReduce over Pig and Hive.

- If a pre-defined library of Java Mappers or Reducers already exists for a job, it is a wise decision to use Hadoop MapReduce instead of Pig and Hive.

- If Hadoop developers require a good amount of testability when combining lots of large data sets, they should use MapReduce instead of Pig and Hive.

- If the application has legacy code requirements that dictate a particular physical structure, Hadoop MapReduce is the better option.

- If the job requires optimization at a particular stage of processing, using tricks like in-mapper combining, Hadoop MapReduce can prove to be a better coding approach than Pig and Hive.

- If the job makes tricky use of the distributed cache (replicated join), cross products, groupings or joins, Hadoop MapReduce is a better programming approach than Pig and Hive.
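To make one of the tricks in the list above concrete, here is a minimal plain-Java sketch of in-mapper combining for word count. It imitates the shape of a Hadoop mapper rather than using the Hadoop API: `Emitter` is a hypothetical stand-in for Hadoop's `Context`, and `CombiningMapper`, `naiveEmitCount` and `combinedEmitCount` are illustrative names. The idea is that the mapper buffers partial counts in memory across `map` calls and emits each distinct word once in `cleanup`, instead of emitting one pair per token.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Plain-Java sketch of in-mapper combining. The Hadoop Mapper API is
// imitated, not used; every name here is hypothetical.
final class InMapperCombiningSketch {

    // Stand-in for Hadoop's Context: records every emitted (key, value) pair.
    static final class Emitter {
        final List<String> emitted = new ArrayList<>();

        void write(String key, int value) {
            emitted.add(key + "\t" + value);
        }
    }

    // Naive mapper: emits one (word, 1) pair per token.
    static void naiveMap(String line, Emitter out) {
        for (String token : line.trim().split("\\s+")) {
            if (!token.isEmpty()) {
                out.write(token, 1);
            }
        }
    }

    // In-mapper combining: keep partial counts in a field across map()
    // calls and emit once per distinct word when the task finishes.
    static final class CombiningMapper {
        private final Map<String, Integer> partialCounts = new HashMap<>();

        void map(String line) {
            for (String token : line.trim().split("\\s+")) {
                if (!token.isEmpty()) {
                    partialCounts.merge(token, 1, Integer::sum);
                }
            }
        }

        void cleanup(Emitter out) {
            partialCounts.forEach((word, count) -> out.write(word, count));
            partialCounts.clear();
        }
    }

    // Number of pairs the naive mapper sends to the shuffle.
    static int naiveEmitCount(List<String> lines) {
        Emitter out = new Emitter();
        for (String line : lines) {
            naiveMap(line, out);
        }
        return out.emitted.size();
    }

    // Number of pairs the combining mapper sends to the shuffle.
    static int combinedEmitCount(List<String> lines) {
        Emitter out = new Emitter();
        CombiningMapper mapper = new CombiningMapper();
        for (String line : lines) {
            mapper.map(line);
        }
        mapper.cleanup(out);
        return out.emitted.size();
    }

    public static void main(String[] args) {
        List<String> lines = List.of("pig hive pig", "hive mapreduce pig");
        System.out.println("naive pairs:    " + naiveEmitCount(lines));    // 6
        System.out.println("combined pairs: " + combinedEmitCount(lines)); // 3
    }
}
```

The trade-off is memory: the buffer grows with the number of distinct keys a mapper sees, so a real implementation would typically flush it once it reaches some size limit. This kind of stage-level tuning is hard to express directly in a Pig or Hive script.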


However, the above choices also depend on various non-technical constraints such as design, budget, coupling decisions, time and expertise. Hadoop MapReduce generally delivers the best raw performance, but Pig and Hive are steadily covering more of the cases listed above as their feature sets grow.

Coding Approach Using Pig and Hive

As Donald Miner, a member of the NYC Pig User Group, rightly said: “If you can do it with Pig, save yourself from the pain because developer time is always worth more than the machine time.”

Pig’s programming language, referred to as Pig Latin, provides a high degree of abstraction over MapReduce programming, but it is procedural rather than declarative. Pig Latin code can be extended through user defined functions written in Python, Java, Groovy, JavaScript or Ruby. Pig has tools for data storage, data execution and data manipulation. Pig Latin is heavily promoted by Yahoo, whose data engineers use Pig to process data on some of the biggest Hadoop clusters in the world.

Hive was started by Facebook to provide Hadoop developers with more of a traditional data warehouse interface for MapReduce programming. Apache Hive is similar to an SQL engine: it keeps table metadata in its own metastore (backed by a relational database), stores the table data on HDFS, and lets the tables be queried through a SQL-like query language known as HiveQL or HQL (Hive Query Language). In the background the Hive compiler converts Hive queries into MapReduce programs, so that the jobs are executed in parallel across the Hadoop cluster. This helps Hadoop developers focus on the business problem rather than on complex programming language logic.

For Hadoop developers who know SQL, Hive is a companion that makes them feel at home, with all the familiar SQL clauses like FROM, WHERE, GROUP BY and ORDER BY. The drawback of Hive is that Hadoop developers give up some control over query optimization: performance depends on the Hive optimizer, and developers may have to guide that optimizer toward efficient plans. Hive is generally used for processing structured data in the form of tables. Hive eliminates the tricky coding and the boilerplate that would otherwise be an overhead with the MapReduce coding approach.

By contrast, Pig Latin has most of the general processing concepts of SQL, like selecting, filtering, grouping and ordering, but its syntax is somewhat different from SQL, so SQL users have to make some conceptual adjustments to learn Pig. Apache Pig requires more verbose coding than Apache Hive, although it is still a fraction of what Java MapReduce programs require. Apache Pig offers more optimization and control over the data flow than Hive.

Consider a simple word count example, first in Java MapReduce:

    package org.myorg;

    import java.io.IOException;
    import java.util.*;

    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.conf.*;
    import org.apache.hadoop.io.*;
    import org.apache.hadoop.mapreduce.*;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
    import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

    public class WordCount {

        // Mapper: emits (word, 1) for every token in each input line.
        public static class Map extends Mapper<LongWritable, Text, Text, IntWritable> {
            private final static IntWritable one = new IntWritable(1);
            private Text word = new Text();

            @Override
            public void map(LongWritable key, Text value, Context context)
                    throws IOException, InterruptedException {
                String line = value.toString();
                StringTokenizer tokenizer = new StringTokenizer(line);
                while (tokenizer.hasMoreTokens()) {
                    word.set(tokenizer.nextToken());
                    context.write(word, one);
                }
            }
        }

        // Reducer: sums the counts emitted for each word.
        public static class Reduce extends Reducer<Text, IntWritable, Text, IntWritable> {
            @Override
            public void reduce(Text key, Iterable<IntWritable> values, Context context)
                    throws IOException, InterruptedException {
                int sum = 0;
                for (IntWritable val : values) {
                    sum += val.get();
                }
                context.write(key, new IntWritable(sum));
            }
        }

        // Driver: args[0] is the input path, args[1] is the output path.
        public static void main(String[] args) throws Exception {
            Configuration conf = new Configuration();
            Job job = Job.getInstance(conf, "wordcount");
            job.setJarByClass(WordCount.class);
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(IntWritable.class);
            job.setMapperClass(Map.class);
            job.setReducerClass(Reduce.class);
            job.setInputFormatClass(TextInputFormat.class);
            job.setOutputFormatClass(TextOutputFormat.class);
            FileInputFormat.addInputPath(job, new Path(args[0]));
            FileOutputFormat.setOutputPath(job, new Path(args[1]));
            job.waitForCompletion(true);
        }
    }

Script Credit - wiki

The Java MapReduce word count program runs to roughly 50 lines of Java code, and it begins with ten import statements for the libraries it depends on.

Compare this with the equivalent Pig Latin script shown below, which has only 7 lines of code, making it faster for Hadoop developers to write in Pig Latin than with the Hadoop MapReduce programming approach.

    input_lines = LOAD '/tmp/word.txt' AS (line:chararray);
    words = FOREACH input_lines GENERATE FLATTEN(TOKENIZE(line)) AS word;
    filtered_words = FILTER words BY word MATCHES '\\w+';
    word_groups = GROUP filtered_words BY word;
    word_count = FOREACH word_groups GENERATE COUNT(filtered_words) AS count, group AS word;
    ordered_word_count = ORDER word_count BY count DESC;
    STORE ordered_word_count INTO '/tmp/results.txt';

Pig Latin Script Credit : pig.apache.org

There is no need to import any additional libraries, and anyone with a basic knowledge of SQL can easily understand it, even without a Java background. The learning curve for Java MapReduce is steep compared with that of Pig Latin or HiveQL. Thus, by using higher level languages like Pig Latin or Hive Query Language, Hadoop developers and analysts can write Hadoop MapReduce jobs with less development effort. The rule of thumb is that writing a Pig Latin script takes about 5% of the development effort of writing the equivalent Hadoop MapReduce program, while runtime performance drops by only about 50%. Although the Pig and Hive coding approaches are not as fast as Hadoop MapReduce, they are considered superior when it comes to the productivity of data analysts and engineers.


In theory, any Pig Latin job or Hive query can be rewritten as hand-tuned MapReduce code, so a Hadoop MapReduce implementation can always be made at least as fast as the other two. In practice, the performance penalty of Pig and Hive can be overcome by adding some extra machines. The development cost of the Hadoop MapReduce coding approach is usually much greater than the combined cost of those extra machines, the system administrator's time and the additional power needed to run Pig and Hive.

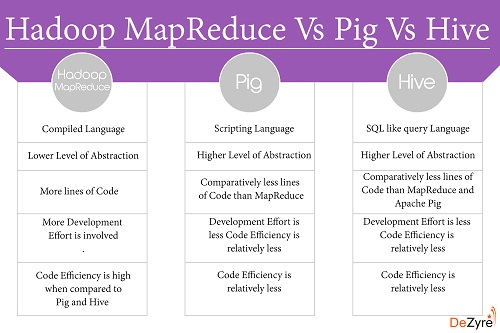

In Conclusion – MapReduce vs. Pig vs. Hive

There are many ways to run jobs on Hadoop. The three programming approaches considered above, MapReduce, Pig and Hive, each have their own pros and cons. The discussion is summarized below:

- Hadoop MapReduce programs are written in a compiled language (typically Java), whereas Apache Pig uses a scripting language and Hive uses a SQL-like query language.

- Pig and Hive provide a higher level of abstraction, whereas Hadoop MapReduce provides a low level of abstraction.

- Hadoop MapReduce requires more lines of code than Pig and Hive. Hive requires the fewest lines of code of the three because of its resemblance to SQL.

- Hadoop MapReduce requires more development effort than Pig and Hive.

- Pig and Hive coding approaches are slower than a fully tuned Hadoop MapReduce program.

- When using Pig and Hive for executing jobs, Hadoop developers need not worry about any version mismatch.

- There is very limited scope for the developer to introduce Java-level bugs when coding in Pig or Hive.

- Pig has problems in dealing with unstructured data like images, videos, audio, text that is ambiguously delimited, log data, etc.

- Pig copes poorly with badly designed XML or JSON and with flexible schemas.

If you have a different opinion or have any comments on the above then please feel free to share in the comments section below!


About the Author

ProjectPro
