A Data Engineer’s Guide To Real-time Data Ingestion


Navigating the complexities of data engineering can be daunting, often leaving data engineers grappling with real-time data ingestion challenges. But fear not! Our comprehensive guide will explore the real-time data ingestion process, enabling you to overcome these hurdles and transform your data into actionable insights.

“I kind of have to be a master of cleaning, extracting, and trusting my data before I do anything with it.” — Scott Nicholson

Absolutely right! For data engineers, or anyone passionate about data, mastering data ingestion is the key to extracting and using the valuable information hidden in vast amounts of data. A good data ingestion strategy isn't just about collecting data; it is also about transforming, cleaning, and organizing it. But how can a data engineer acquire such knowledge? The answer is simple: by gaining a solid grasp of the real-time data ingestion process. This comprehensive guide covers the core concepts of real-time data ingestion, including its patterns, steps, architecture, tools, and services, along with some real-world examples to solidify your understanding. Let us take you on an exciting journey where you will master the art of real-time data ingestion.

Table of Contents

- What is Real-Time Data Ingestion?

- Benefits of Real-Time Data Ingestion

- Data Ingestion Patterns

- Steps Involved In The Real-time Data Ingestion Process

- Data Collection

- Data Processing

- Data Storage

- Real-time Data Ingestion Architecture

- Real-Time Data Ingestion Tools

- Real-Time Data Ingestion Services

- Real-Time Data Ingestion Examples For Practice

- Learn Real-Time Data Ingestion with Azure Purview

- Build A Streaming Data Pipeline Using GCP DataFlow and Pub/Sub

- Build A Data Pipeline in AWS using NiFi, Spark, and ELK Stack

- Master The Art Of Real-time Data Ingestion With ProjectPro

- FAQs on Real-Time Data Ingestion

What is Real-Time Data Ingestion?

Einav Baraban, Data Team Lead at Fabric, shares the following data ingestion definition in one of her articles-

Saikat Dutta, Senior Azure Data Engineer at LTIMindtree, defines what is real-time ingestion of data in one of his articles-

Real-time data ingestion refers to the continuous and immediate process of collecting, processing and analyzing data as it is generated. It involves ingesting data in near real-time, enabling faster insights and actions based on current information.

Imagine a ride-sharing app that collects and processes location data, passenger details, and trip status instantly as rides occur. Through real-time data ingestion, the app processes this incoming data as it arrives, which lets it dynamically update ride availability, optimize driver assignments, and provide accurate arrival times to users. This immediate data ingestion and processing allows for real-time monitoring and decision-making, enhancing user experience and operational efficiency in the ride-sharing service.
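The ride-sharing scenario can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the event fields (`driver_id`, `lat`, `lon`, `available`) and both function names are hypothetical, and distance is a rough straight-line measure in degrees rather than a real routing calculation.

```python
import math

# Latest known state per driver, updated as location events arrive.
drivers = {}

def ingest_location(event):
    """Apply one location event to the in-memory driver state."""
    drivers[event["driver_id"]] = {
        "lat": event["lat"],
        "lon": event["lon"],
        "available": event["available"],
    }

def nearest_available_driver(lat, lon):
    """Return the id of the closest available driver, or None."""
    candidates = [
        (math.hypot(d["lat"] - lat, d["lon"] - lon), driver_id)
        for driver_id, d in drivers.items()
        if d["available"]
    ]
    return min(candidates)[1] if candidates else None

# Hypothetical event stream; the third event moves driver d1.
events = [
    {"driver_id": "d1", "lat": 40.75, "lon": -73.99, "available": True},
    {"driver_id": "d2", "lat": 40.70, "lon": -74.01, "available": True},
    {"driver_id": "d1", "lat": 40.71, "lon": -74.00, "available": True},
]
for event in events:
    ingest_location(event)

print(nearest_available_driver(40.71, -74.00))
```

Because each event updates the state immediately, the assignment decision always uses the most recent position instead of waiting for a scheduled load.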

Benefits of Real-Time Data Ingestion

Vineeth Rajan, Global Master Data Solutions Lead at Julphar, shares some key business benefits of data ingestion in one of his articles-

Data Ingestion Patterns

Data ingestion patterns are the different methods of collecting data from source systems and moving it into storage or processing systems. Each pattern suits different data volume, velocity, and processing requirements. Some common ingestion patterns include-

-

Batch Ingestion

Batch data ingestion involves gathering and processing data in predefined intervals or batches. Data is gathered, processed, and loaded into the system at scheduled intervals. Batch ingestion is ideal for scenarios where real-time processing is unnecessary, such as historical analysis.

-

Real-Time or Stream Ingestion

This process ingests streaming data continuously and immediately as it is generated. Streaming data ingestion involves processing and loading data in near real-time, making it ideal for scenarios requiring immediate data availability and processing, like financial transactions or IoT sensor data streams.

-

Change Data Capture (CDC)

CDC captures only the changes (inserts, updates, and deletes) made to a database since the last sync, rather than copying whole tables. It minimizes processing load and keeps downstream copies consistent with the source by identifying and replicating these changes in near real-time.
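To make the CDC idea concrete, here is a minimal sketch that derives change events by comparing two snapshots of a table keyed by primary key. The function name and the sample rows are hypothetical. Production CDC tools usually read the database's transaction log instead of diffing snapshots, so treat this only as an illustration of what the resulting change stream looks like.

```python
def capture_changes(previous, current):
    """Compare two snapshots keyed by primary key and return change events."""
    changes = []
    for key, row in current.items():
        if key not in previous:
            changes.append({"op": "insert", "key": key, "row": row})
        elif previous[key] != row:
            changes.append({"op": "update", "key": key, "row": row})
    for key in previous:
        if key not in current:
            changes.append({"op": "delete", "key": key, "row": None})
    return changes

# Hypothetical snapshots of a customers table.
before = {1: {"name": "Ann", "city": "Pune"}, 2: {"name": "Raj", "city": "Delhi"}}
after = {1: {"name": "Ann", "city": "Mumbai"}, 3: {"name": "Lee", "city": "Goa"}}

changes = capture_changes(before, after)
for change in changes:
    print(change)
```

Only three small events are produced here, which is the point of CDC: downstream systems replicate the changes rather than reloading every row.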

Steps Involved In The Real-time Data Ingestion Process

The real-time data ingestion process transforms and combines streaming data from multiple sources into a unified and consistent format for further analysis. Let us understand the key steps involved in real-time data ingestion into HDFS with the help of a real-world use case where a retail company collects real-time customer purchase data from point-of-sale systems and e-commerce platforms.

-

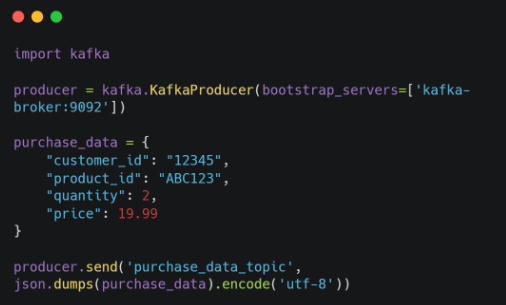

Data Collection

The first step is to collect real-time data (purchase_data) from various sources, such as sensors, IoT devices, and web applications, using data collectors or agents. These collectors send the data to a central location, typically a message broker like Kafka.
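The collection step might look like the sketch below. To keep it runnable without a cluster, a `queue.Queue` stands in for the Kafka topic; a real collector would publish through a Kafka producer client instead. The function name `collect_purchase` and the event fields are assumptions made for illustration.

```python
import json
import queue
import time

# Stand-in for a Kafka topic; a real pipeline would use a Kafka producer client.
purchase_topic = queue.Queue()

def collect_purchase(source, order_id, customer_id, product_id, amount):
    """Serialize one purchase event and publish it to the topic."""
    event = {
        "source": source,
        "order_id": order_id,
        "customer_id": customer_id,
        "product_id": product_id,
        "amount": amount,
        "collected_at": time.time(),
    }
    purchase_topic.put(json.dumps(event).encode("utf-8"))

# One event from a point-of-sale system and one from the e-commerce site.
collect_purchase("pos", "o-1001", "C42", "P7", 19.99)
collect_purchase("web", "o-1002", "c-17", "p-3", 5.50)

# A downstream consumer reads events in arrival order.
first = json.loads(purchase_topic.get())
print(first["source"], first["order_id"])
```

Serializing every event to the same JSON shape at collection time means downstream steps do not need to know which source produced it.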

-

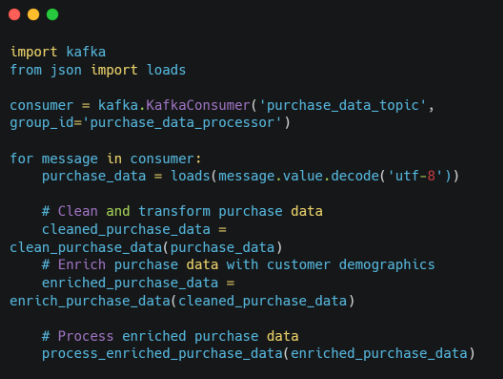

Data Processing

In this step, the collected data is processed in real-time to clean, transform, and enhance it. This may involve filtering out irrelevant data, converting data formats, and adding additional information from external sources.

For this example, the retail company cleans the purchase data to remove duplicate entries and standardize product and customer IDs. It also enriches the data with customer demographics and product information from its databases.
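A minimal sketch of this processing step follows. The ID convention (`C17`, `P3`), the lookup tables, and the function names are hypothetical. The sketch assumes every ID contains at least one digit and that duplicates share an `order_id`.

```python
def standardize_id(raw, prefix):
    """Normalize IDs such as 'c-17' or 'C017' to 'C17' (assumes digits exist)."""
    digits = "".join(ch for ch in raw if ch.isdigit())
    return f"{prefix}{int(digits)}"

def process(events, customers, products):
    """Drop duplicate orders, standardize IDs, and enrich each event."""
    seen_orders = set()
    for event in events:
        if event["order_id"] in seen_orders:
            continue  # duplicate delivery of the same order
        seen_orders.add(event["order_id"])
        customer_id = standardize_id(event["customer_id"], "C")
        product_id = standardize_id(event["product_id"], "P")
        yield {
            **event,
            "customer_id": customer_id,
            "product_id": product_id,
            "customer_segment": customers.get(customer_id, {}).get("segment"),
            "product_category": products.get(product_id, {}).get("category"),
        }

# Hypothetical reference data that would come from the company's databases.
customers = {"C17": {"segment": "loyalty"}}
products = {"P3": {"category": "grocery"}}
events = [
    {"order_id": "o-1", "customer_id": "c-17", "product_id": "p-3", "amount": 5.5},
    {"order_id": "o-1", "customer_id": "c-17", "product_id": "p-3", "amount": 5.5},
    {"order_id": "o-2", "customer_id": "C017", "product_id": "P3", "amount": 2.0},
]
cleaned = list(process(events, customers, products))
print(len(cleaned), cleaned[0]["customer_segment"])
```

In a long-running stream the `seen_orders` set would grow without bound, so a real job would typically limit deduplication to a time window.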

-

Data Storage

Next, the processed data is stored in a permanent data store, such as the Hadoop Distributed File System (HDFS), for further analysis and reporting. You can use tools like Apache Flume or a Kafka Connect HDFS sink to transfer the data from Kafka to HDFS. Apache Sqoop, by contrast, is a batch tool for moving data between relational databases and Hadoop, so it does not read from Kafka.

In this example, the processed purchase data is loaded from Kafka into HDFS for historical analysis and trend identification.
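Whichever tool moves the data, stored events are commonly organized into time-based partitions so that historical queries can skip irrelevant files. The sketch below illustrates that layout on the local file system. A temporary directory stands in for an HDFS path, and the `dt=`/`hour=` naming, the file name, and the function names are assumptions rather than a required convention.

```python
import json
import tempfile
from datetime import datetime, timezone
from pathlib import Path

def partition_path(base_dir, event):
    """Build a date/hour partition directory from the event timestamp."""
    ts = datetime.fromtimestamp(event["collected_at"], tz=timezone.utc)
    return Path(base_dir) / f"dt={ts:%Y-%m-%d}" / f"hour={ts:%H}"

def store(base_dir, events):
    """Append each event as one JSON line to its partition file."""
    written = []
    for event in events:
        directory = partition_path(base_dir, event)
        directory.mkdir(parents=True, exist_ok=True)
        target = directory / "purchases.jsonl"
        with target.open("a", encoding="utf-8") as handle:
            handle.write(json.dumps(event) + "\n")
        written.append(target)
    return written

base = tempfile.mkdtemp()  # stand-in for an HDFS directory
events = [
    {"order_id": "o-1", "amount": 5.5, "collected_at": 1700000000},
    {"order_id": "o-2", "amount": 2.0, "collected_at": 1700003600},
]
paths = store(base, events)
for path in paths:
    print(path.relative_to(base))
```

The two events are one hour apart, so they land in different `hour=` directories under the same date.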

This real-time data ingestion process enables the retail company to continuously collect, process, and store customer purchase data, providing valuable insights into customer behavior, product trends, and sales performance.

Real-time Data Ingestion Architecture

A real-time data ingestion architecture, or a real-time data ingestion framework, is designed to facilitate the seamless collection, processing, and analysis of data as it streams in real-time from various sources. This architecture typically consists of several layers, each serving a specific purpose in handling and processing data instantaneously-

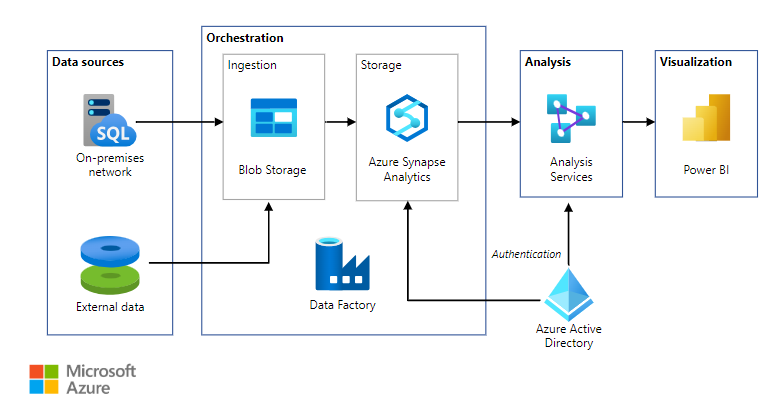

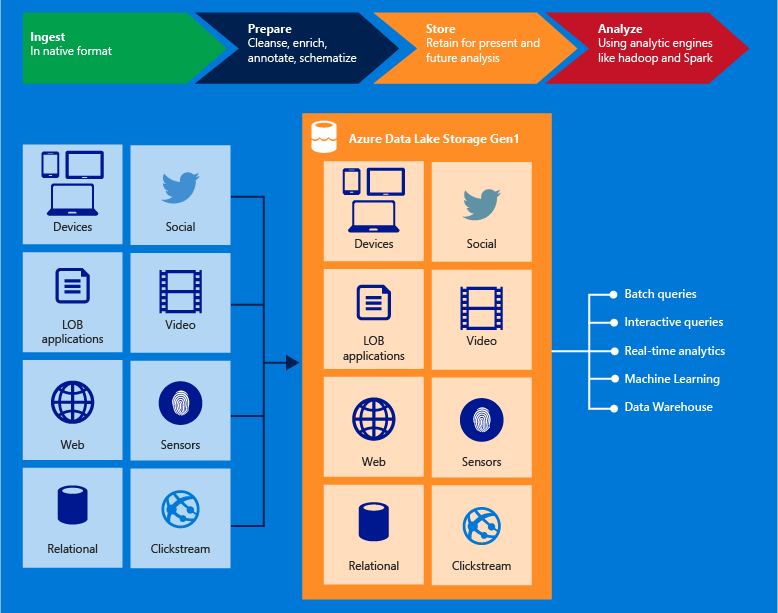

Source- Microsoft Azure Official Documentation

-

Data Ingestion Layer

At the forefront of the architecture, this layer is responsible for the initial acquisition and ingestion of data streams from diverse sources. It involves connectors or agents that capture data in real-time from sources like IoT devices, social media feeds, sensors, or transactional systems using popular ingestion tools like Azure Synapse Analytics, Azure Event Hubs, Apache Kafka, or AWS Kinesis.

-

Streaming Processing Layer

This layer processes the ingested data streams in real-time using tools like Apache Flink, Apache Spark Streaming, or AWS Kinesis Data Analytics for data transformations, aggregations, filtering, and enhancements. The data is continually processed while it moves through the pipeline.

-

Storage And Persistence Layer

Once processed, the data is stored in this layer. Stream processing engines often have in-memory storage for temporary data, while durable storage solutions like Apache Hadoop, Amazon S3, or Google Cloud Storage serve as repositories for long-term storage of processed data.

-

Event Processing And Analytics Layer

This layer focuses on performing real-time analytics and deriving insights from the processed data. Complex event processing (CEP) engines and analytics platforms like Apache Druid, Apache Pinot, or Elasticsearch enable real-time querying, pattern detection, and analytics on streaming data.

-

Data Visualization Layer

The final layer involves visualizing insights derived from real-time data for decision-making or triggering immediate actions. Tools like Tableau, Grafana, or custom dashboards present real-time analytics and alerts, allowing stakeholders to make timely decisions based on the streaming data.

Throughout the real-time data ingestion architecture, every layer is essential to capturing, processing, storing, analyzing, and presenting data in real-time. This allows organizations to leverage instantaneous data insights for predictive analysis, operational efficiency, and real-time decision-making.
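As an illustration of what the streaming processing layer does, the sketch below computes a tumbling-window aggregation, one of the most common streaming transformations. It is plain Python over a finite list, not the API of Flink or Spark. The field names `ts` and `amount` are assumptions, and real engines also handle late and out-of-order events, which this sketch ignores.

```python
from collections import defaultdict

def tumbling_window_totals(events, window_seconds):
    """Sum event amounts per fixed, non-overlapping time window."""
    totals = defaultdict(float)
    for event in events:
        # Align each timestamp to the start of the window that contains it.
        window_start = event["ts"] - (event["ts"] % window_seconds)
        totals[window_start] += event["amount"]
    return dict(totals)

# Hypothetical events with timestamps in seconds.
events = [
    {"ts": 0, "amount": 10.0},
    {"ts": 30, "amount": 5.0},
    {"ts": 61, "amount": 2.5},
    {"ts": 119, "amount": 2.5},
    {"ts": 120, "amount": 1.0},
]
print(tumbling_window_totals(events, 60))
# {0: 15.0, 60: 5.0, 120: 1.0}
```

Each key is the start of a 60-second window, so the analytics and visualization layers can query per-minute totals instead of raw events.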

Real-Time Data Ingestion Tools

Data ingestion tools are crucial in collecting and transferring data from various sources into a centralized location for further processing or analysis. These tools have become essential for organizations that must manage and analyze large amounts of data from various sources. Here's a detailed overview of five popular real-time data ingestion tools-

-

Apache Kafka

With over 1000 use cases, Apache Kafka is a leading distributed streaming platform enabling high-throughput, low-latency real-time data feed handling. Nearly 80% of Fortune 100 companies use Kafka to build real-time data pipelines and applications that require handling large volumes of data with minimal delay.

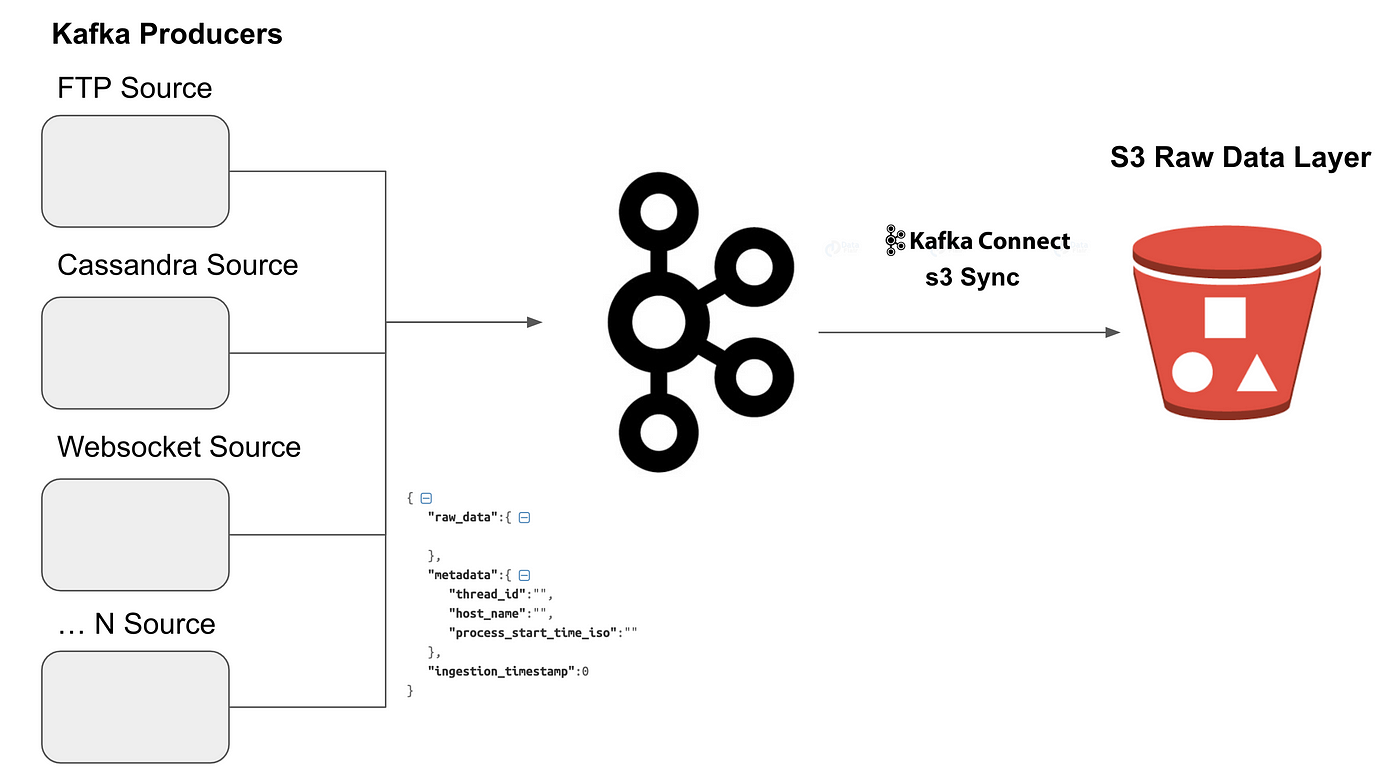

Source- Medium

Apache Kafka Use Cases

Data scientists and engineers commonly use Kafka for the following scenarios-

-

Processing real-time user activity data for web analytics,

-

Handling high-volume IoT sensor data for real-time monitoring,

-

Enabling real-time fraud detection in financial transactions.

Real-Time Data Ingestion Using Apache Kafka

This exciting real-time big data ingestion project combines PySpark's capabilities with Confluent Kafka and Amazon Redshift for data processing. You will dive into ETL and ELT operations, learning to extract, transform, and load data seamlessly. Your journey involves streaming data ingestion into Kafka, extracting required data from Kafka, and using PySpark to transform and load this data back into Kafka.

Source- PySpark Project- Build a Data Pipeline using Kafka and Redshift

You can also explore this Real-time Data Ingestion Project using Hadoop and Kafka, which analyzes publicly available COVID-19 datasets.

-

Apache NiFi

With over 4.1k GitHub stars, Apache NiFi is another leading data flow management platform that offers a drag-and-drop interface for building and managing data pipelines. It is popular for its versatility and ease of use, making it suitable for batch and streaming data ingestion scenarios.

Apache NiFi Use Cases

-

Ingesting and transforming data from various sources for data warehouses or data lakes,

-

Building data integration pipelines for enterprise applications,

-

Combining and processing data from IoT devices, sensors, and other endpoints.

Learn more about how NiFi helps ingest real-time data efficiently by working on this Real-Time Streaming of Twitter Sentiments AWS EC2 NiFi Project.

-

Apache Spark

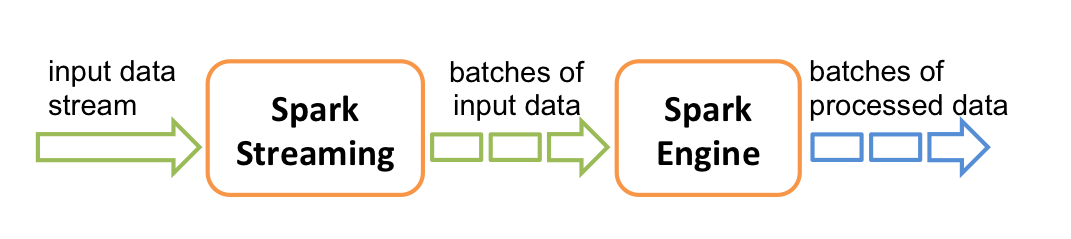

Apache Spark is a robust distributed computing framework that supports in-memory data processing. It offers libraries for batch processing, streaming, machine learning, and graph processing. More than 2,000 individuals from industry and academia contribute to Apache Spark, and this open-source tool is leveraged by thousands of organizations, including 80% of the Fortune 500. Spark Streaming allows real-time data ingestion and processing from various sources, making it well suited to a wide range of data processing tasks.

Source- Spark.Apache.org

Apache Spark Use Cases

-

Analyzing large datasets for business intelligence and reporting,

-

Building real-time machine learning models for fraud detection or anomaly detection,

-

Processing and analyzing real-time data streams from social media or IoT devices.

Apache Spark Real-time Data Ingestion Projects

Here are two innovative real-time data ingestion projects using Apache Spark-

-

Real-time Data Ingestion Using Spark, HBase, And Phoenix

This innovative project aims to build a real-time streaming data pipeline for an application that monitors oil wells using Apache Spark, HBase, and Apache Phoenix. Sensors in oil rigs generate streaming data processed by Spark and stored in HBase for use by various analytical and reporting tools. Working on this real-time data ingestion project will show you how to read data from HDFS storage and write it into the HBase table using Spark.

Source- Streaming Data Pipeline using Spark, HBase, and Phoenix Project

-

Real-time Data Ingestion Example Using Flume And Spark

You should also check out this real-time Twitter data analysis project using Flume, Kafka, and Spark Streaming. Flume connects to Twitter and fetches continuous streaming data, which it forwards to Kafka for buffering. Spark Streaming consumes this data from Kafka and processes it before forwarding the results to a Flask server. The Flask server then renders dashboards showing the analyzed Twitter data.

Source- Real-time Twitter Data Analytics Project Using Flume

-

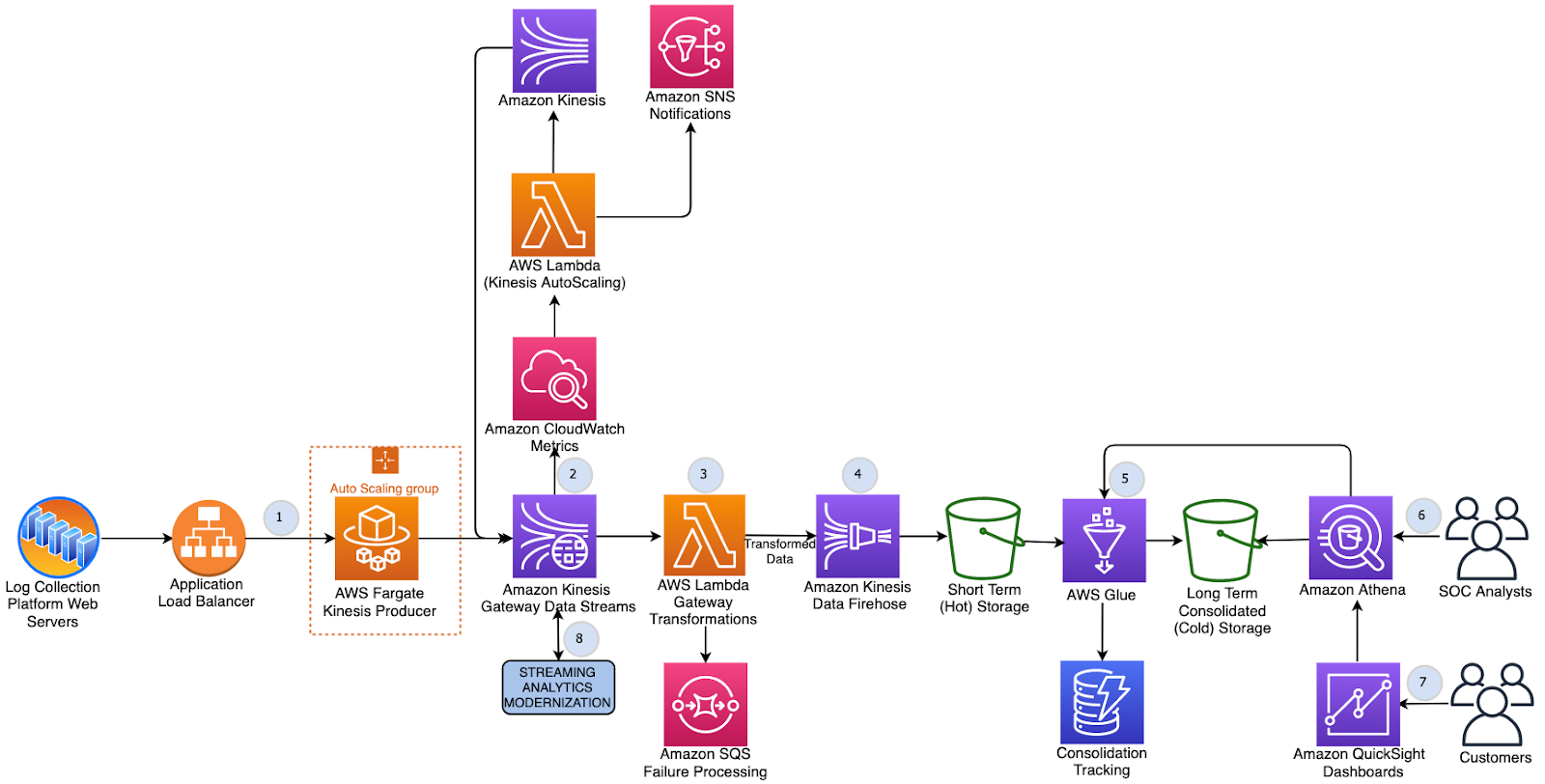

AWS Kinesis

Amazon Kinesis is a managed streaming service on Amazon Web Services (AWS) designed for handling real-time data at scale. It enables ingestion, processing, and analysis of streaming data in real-time. Kinesis Data Streams facilitates the ingestion of large volumes of data, while Kinesis Data Firehose simplifies data loading into other AWS services for analytics and storage.

Source- Amazon Kinesis

AWS Kinesis Use Cases

-

Processing real-time stock market data for financial applications,

-

Analyzing real-time traffic data for traffic management systems,

-

Collecting and processing data from IoT devices for smart city applications.

Check out this Build a Real-time Streaming Data Pipeline using Flink and Kinesis Project to understand how Kinesis helps ingest streaming data.
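Delivery services such as Kinesis Data Firehose buffer incoming records and write them to the destination in batches, based on a buffer size or a time interval. The sketch below illustrates that buffering idea in plain Python. It is not the Kinesis API: the class name, the parameters, and the explicit `now` argument (used instead of a real clock to keep the example deterministic) are all assumptions.

```python
class BufferedDelivery:
    """Collect records and flush them as one batch by count or age."""

    def __init__(self, deliver, max_records, max_age_seconds):
        self.deliver = deliver
        self.max_records = max_records
        self.max_age_seconds = max_age_seconds
        self.buffer = []
        self.first_record_at = None

    def put(self, record, now):
        """Buffer one record and flush if a limit has been reached."""
        if not self.buffer:
            self.first_record_at = now
        self.buffer.append(record)
        too_many = len(self.buffer) >= self.max_records
        too_old = now - self.first_record_at >= self.max_age_seconds
        if too_many or too_old:
            self.flush()

    def flush(self):
        """Deliver whatever is buffered as a single batch."""
        if self.buffer:
            self.deliver(list(self.buffer))
            self.buffer.clear()

batches = []
sink = BufferedDelivery(batches.append, max_records=3, max_age_seconds=60)
for second, record in [(0, "a"), (1, "b"), (2, "c"), (10, "d"), (75, "e")]:
    sink.put(record, now=second)
sink.flush()
print(batches)
# [['a', 'b', 'c'], ['d', 'e']]
```

The first batch is flushed by the record limit and the second by age. This sketch only checks age when a new record arrives, whereas a managed service flushes on a timer.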

-

Google Cloud DataFlow

With a 4.6 rating on Gartner and over 6K followers on GitHub, DataFlow is a popular fully managed service on Google Cloud Platform (GCP) that provides a serverless approach to handle batch and stream data processing. It allows for parallel execution of data pipelines using Apache Beam, supporting batch and real-time data ingestion, transformation, and analysis.

GCP DataFlow Use Cases

-

Ingesting and processing large datasets from cloud storage services like Google Cloud Storage,

-

Building data pipelines for ETL (Extract, Transform, Load) and ELT (Extract, Load, Transform) processes,

-

Analyzing real-time data streams for fraud detection or anomaly detection.

You can learn more about how to ingest real-time data using DataFlow by working on this Build a Scalable Event Based GCP Data Pipeline using DataFlow Project.

Let us move on to the various services that help you with efficient and seamless data ingestion.

Real-Time Data Ingestion Services

This section discusses the real-time data ingestion services offered by AWS, Azure, GCP, and Snowflake and lists a few relevant projects to help you understand how to perform real-time data ingestion using these services.

-

AWS Real-Time Data Ingestion Services

The AWS Cloud enables customers to easily connect to and extract data from both streaming and batch sources. AWS supports two types of data ingestion-

-

Batch Ingestion- This method involves collecting and processing data in batches, typically at regular intervals. This approach is suitable for large volumes of data that do not require real-time processing.

-

Streaming Ingestion- This method involves processing data as it is generated, giving real-time insights and enabling immediate actions. This approach is ideal for applications that require low latency and continuous data analysis.

The key AWS real-time data ingestion services include-

-

Kinesis

Source- Amazon

Amazon Kinesis is a fully managed real-time data ingestion platform that enables real-time data collection, processing, and analysis from various sources, including web clicks, social media feeds, and IoT devices.

-

S3

Amazon S3 is a popular cloud object storage service that offers a secure, scalable, and cost-effective repository for storing vast amounts of unstructured data, such as images, videos, and log files.

-

Data Pipeline

AWS Data Pipeline is a managed service that simplifies building and managing data pipelines for moving and transforming data between AWS services.

-

DynamoDB

Amazon DynamoDB is a fully managed NoSQL database service offering high-throughput, low-latency data access, making it ideal for real-time applications and large-scale data storage.

-

Amazon Managed Streaming for Apache Kafka

Amazon MSK is a fully managed Apache Kafka service that provides a scalable and reliable platform for streaming data processing and analysis.

AWS Real-Time Data Ingestion Projects For Practice

Here are a few AWS projects worth exploring-

-

AWS Snowflake Data Pipeline Example using Kinesis and Airflow

-

To learn how to perform real-time data ingestion using Redshift, you must explore this project that builds a near real-time logistics dashboard using Amazon Redshift and Amazon Managed Grafana.

-

Azure Real-time Data Ingestion Services

Microsoft Azure offers robust services and tools to facilitate efficient real-time data ingestion, catering to different data sources, formats, and processing needs.

-

Azure Data Factory

Source- Microsoft

Azure Data Factory is a cloud-based data integration service that simplifies the process of building and managing data pipelines for moving and transforming data between Azure services and on-premises data sources.

-

Azure Data Lake Storage

Source- Microsoft

Azure Data Lake Storage is a scalable and secure cloud data lake platform that allows you to store and analyze vast amounts of raw data in its native format flexibly and cost-effectively.

-

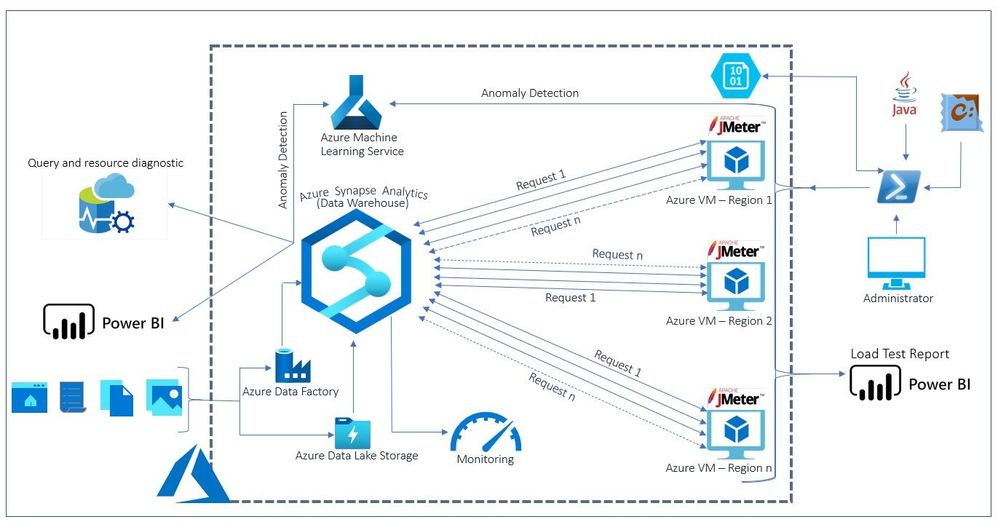

Azure Synapse Analytics

Source- Techcommunity.microsoft.com

Azure Synapse Analytics is a cloud-based solution that combines data warehousing, big data processing, and business intelligence capabilities, enabling organizations to perform large-scale data analysis and generate insights.

Azure Real-Time Data Ingestion Projects For Practice

Here are a few Azure projects worth exploring-

-

GCP Real-time Data Ingestion Services

Google Cloud Platform (GCP) offers robust services and tools to facilitate efficient and scalable real-time data ingestion processes that support various data sources, formats, and processing requirements.

-

Cloud Dataflow

GCP DataFlow is a fully managed cloud service for building and running data pipelines. It enables users to process and transform data from various sources, including streaming, batch, and data stored in data lakes and warehouses.

-

Cloud Pub/Sub

Source- Cloud.google.com

GCP Pub/Sub is a fully managed real-time messaging service that enables high-throughput, low-latency message publishing and subscribing. It is ideal for building real-time data pipelines and applications that require low-latency data processing.

-

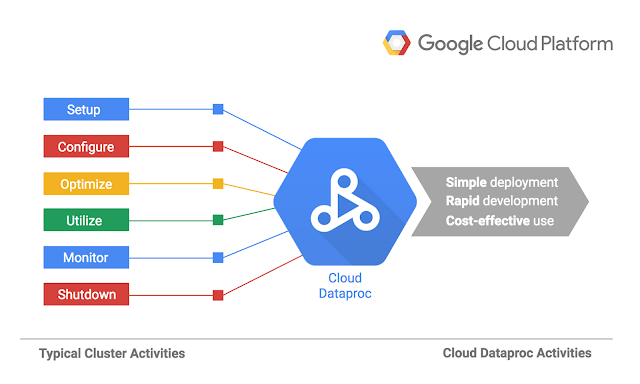

Cloud Dataproc

Source- Cloudplatform.googleblog.com

GCP DataProc is a fully managed cloud service for Apache Spark and Hadoop workloads. It offers a scalable and cost-effective platform for processing and analyzing large datasets.

-

Cloud BigQuery

Source- Cloud.google.com


Google BigQuery is a fully managed cloud data warehouse that enables large-scale data analysis and business intelligence. It offers high performance, low latency, and cost-effective data querying and analysis capabilities.

GCP Real-Time Data Ingestion Projects For Practice

Here are a few GCP projects worth exploring-

-

Snowflake Real-time Data Ingestion

Snowflake, the cloud-based data warehouse, offers a powerful suite of data ingestion tools to streamline the process of collecting, processing, and storing data from diverse sources. Whether you are dealing with structured or semi-structured data, real-time or batch loads, Snowflake supports the following tools to bring in data from diverse sources and formats-

-

Snowflake Data Loading

Snowflake supports loading data from different sources, including files (CSV, JSON, Parquet), streaming services, and cloud storage solutions. Files are loaded into Snowflake tables from a stage, which is either an internal stage managed by Snowflake or an external stage that references cloud storage such as Amazon S3.

-

Snowpipe

Source- Docs.snowflake.com

Snowpipe is a built-in, continuous data ingestion service that loads data into Snowflake in small batches as files arrive. It enables near real-time data ingestion by automatically detecting new data files in a designated stage and loading them into Snowflake tables without manual intervention.

-

Snowflake Connectors

Snowflake provides connectors for various data sources and integration tools, facilitating easy ingestion from on-premises databases, cloud-based applications, and popular ETL (Extract, Transform, Load) tools. These connectors simplify data movement and integration with Snowflake.
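The Snowpipe behaviour of loading each newly staged file exactly once can be illustrated with a small local sketch. This is not Snowflake code: a temporary directory stands in for the stage, a Python list stands in for the table, and the function name is hypothetical. Snowpipe itself is normally triggered by cloud storage event notifications or REST calls rather than by scanning a directory.

```python
import tempfile
from pathlib import Path

def load_new_files(stage_dir, already_loaded, table):
    """Load each staged file exactly once, tracking what was already loaded."""
    for path in sorted(Path(stage_dir).glob("*.csv")):
        if path.name in already_loaded:
            continue  # skip files handled by an earlier run
        rows = path.read_text(encoding="utf-8").splitlines()
        table.extend(row.split(",") for row in rows if row)
        already_loaded.add(path.name)

stage = Path(tempfile.mkdtemp())  # stand-in for a cloud storage stage
table, loaded = [], set()

(stage / "orders_001.csv").write_text("o-1,5.5\n", encoding="utf-8")
load_new_files(stage, loaded, table)

(stage / "orders_002.csv").write_text("o-2,2.0\n", encoding="utf-8")
load_new_files(stage, loaded, table)  # only the new file is loaded

print(table)
# [['o-1', '5.5'], ['o-2', '2.0']]
```

Tracking loaded file names is what keeps the second run from inserting the first file's rows again.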

Snowflake Real-time Data Ingestion Projects For Practice

Here are a few Snowflake projects worth exploring

Real-Time Data Ingestion Examples For Practice

Here are some real-time data ingestion examples you must explore to gain hands-on experience in performing real-time data ingestion using various big data tools and technologies.

-

Learn Real-Time Data Ingestion with Azure Purview

This innovative Azure project aims to prepare and ingest data into Azure Purview, a data governance service. It involves various stages, starting with an introduction to Azure Purview and the project architecture. This project uses Azure Logic Apps to orchestrate data flows, an Azure Storage Account to store raw and processed data, and Azure Data Factory (ADF) for data movement and transformation. Working on this project will also teach you how to create an Azure SQL Database to store structured data and integrate it with ADF for seamless data processing. Additionally, this project solution explores Purview workflows for data discovery and cataloging and helps you understand ADF concepts and triggers, which are crucial for automating data ingestion and processing tasks.

Source- Learn Real-Time Data Ingestion with Azure Purview

-

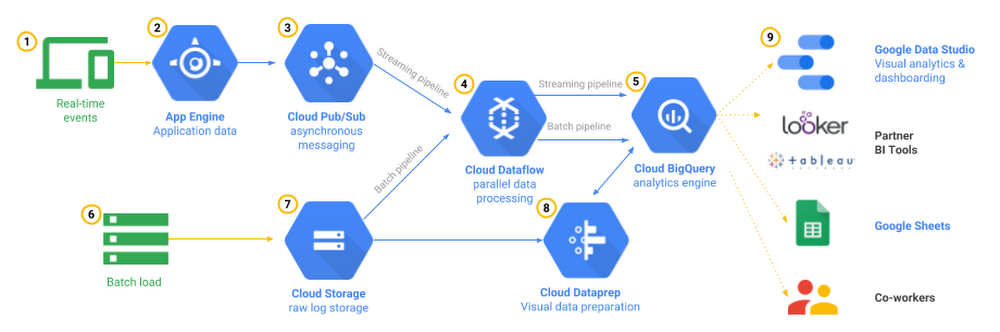

Build A Streaming Data Pipeline Using GCP DataFlow and Pub/Sub

In this GCP project, you will manage a fleet of NYC taxi cabs and track real-time performance using a streaming data pipeline. You will create a dynamic management dashboard by gathering taxi-related data like revenue, passenger count, and ride status from the NYC Taxi & Limousine Commission’s open dataset. The first step involves setting up a BigQuery dataset to store the data aggregated from Pub/Sub messages. Then, using Cloud DataFlow, you will set up a streaming data pipeline to read the taxi ride messages from Pub/Sub, compute aggregates within specific time windows, and store this information in BigQuery. This setup allows for continuous analysis of the streaming taxi data using BigQuery, enabling further insights through data aggregations for detailed reporting and real-time monitoring of your taxi business.

Source- Build A Streaming Data Pipeline Using GCP DataFlow and Pub/Sub

-

Build A Data Pipeline in AWS using NiFi, Spark, and ELK Stack

In this AWS project, you will learn to create a robust data pipeline using several powerful tools and services. Firstly, Apache NiFi will help you fetch data from an API, transform it, and store it securely in an AWS S3 bucket. Then, using Logstash, you will seamlessly ingest this data from the S3 bucket into Amazon OpenSearch for efficient indexing and searching. Next, you will leverage the capabilities of Apache Spark (PySpark) for detailed data analysis. Moreover, setting up an Amazon EMR cluster will enhance data processing and computation capabilities. Finally, leveraging Kibana, you will visualize and explore the stored data in OpenSearch, allowing for data visualization and analysis. This hands-on project will provide a comprehensive understanding of how to build a complete data pipeline using various AWS services and Apache tools, enabling you to manage, process, analyze, and visualize data effectively.

Source- Build A Data Pipeline in AWS using NiFi, Spark, and ELK Stack

Master The Art Of Real-time Data Ingestion With ProjectPro

Now, the question is, do you want to be the data engineer who can confidently handle any real-time data ingestion challenge or just another data expert who struggles with the nitty-gritty of real-time data ingestion? The answer is clear. Explore the power of real-time data ingestion and become the data engineering expert you aspire to be. ProjectPro's end-to-end solved big data projects offer the perfect platform to get your hands dirty and learn from real-world scenarios. Working on these projects from the ProjectPro repository will help you grasp the entire real-time data ingestion process and give you insights into building effective big data solutions that drive business value. Let ProjectPro become your guiding light and transform you into a data engineering expert!

FAQs on Real-Time Data Ingestion

What are the common challenges in real-time data ingestion?

Some common challenges in real-time data ingestion include dealing with large volumes of data, ensuring data quality and integrity, handling diverse data formats and sources, addressing real-time data processing requirements, and ensuring secure and compliant data transfer and storage.
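One common way to address the data quality challenge is to validate each message on arrival and set aside the ones that fail, instead of letting them break downstream jobs. The sketch below shows the idea. The required fields, the rejection reasons, and the function name are assumptions chosen for illustration.

```python
import json

REQUIRED_FIELDS = {"order_id", "customer_id", "amount"}

def validate(raw_message):
    """Return (record, None) if the message is usable, else (None, reason)."""
    try:
        record = json.loads(raw_message)
    except json.JSONDecodeError:
        return None, "malformed JSON"
    if not isinstance(record, dict):
        return None, "not a JSON object"
    missing = REQUIRED_FIELDS - record.keys()
    if missing:
        return None, f"missing fields: {sorted(missing)}"
    if not isinstance(record["amount"], (int, float)) or record["amount"] < 0:
        return None, "invalid amount"
    return record, None

# Hypothetical incoming messages: one valid, two faulty.
messages = [
    '{"order_id": "o-1", "customer_id": "C17", "amount": 5.5}',
    '{"order_id": "o-2", "amount": 2.0}',
    'not json',
]
accepted, rejected = [], []
for message in messages:
    record, reason = validate(message)
    if record is not None:
        accepted.append(record)
    else:
        rejected.append((message, reason))

print(len(accepted), [reason for _, reason in rejected])
```

Keeping the rejected messages together with a reason makes it possible to inspect and replay them later without stopping the pipeline.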

How does real-time ingestion differ from batch ingestion?

Real-time data ingestion involves immediate processing and analysis of data as it arrives, which is suitable for time-sensitive applications like IoT or financial transactions. In contrast, batch ingestion involves collecting and processing data in predefined intervals or batches, more suitable for historical analysis or periodic updates.
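The difference can be seen in a small sketch that computes the same running total both ways. The function names and sample amounts are hypothetical. The batch version produces a result only when a batch completes, while the streaming version produces one after every event.

```python
def batch_totals(events, batch_size):
    """Process events in fixed-size batches; results appear once per batch."""
    results, total = [], 0.0
    for start in range(0, len(events), batch_size):
        batch = events[start:start + batch_size]
        total += sum(batch)
        results.append(total)
    return results

def streaming_totals(events):
    """Process each event as it arrives; results appear after every event."""
    total = 0.0
    for amount in events:
        total += amount
        yield total

amounts = [10.0, 5.0, 2.5, 2.5, 1.0]
print(batch_totals(amounts, batch_size=2))
# [15.0, 20.0, 21.0]
print(list(streaming_totals(amounts)))
# [10.0, 15.0, 17.5, 20.0, 21.0]
```

Both approaches end at the same total. What differs is how soon each intermediate value becomes available.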

About the Author

Daivi

Daivi is a highly skilled Technical Content Analyst with over a year of experience at ProjectPro. She is passionate about exploring various technology domains and enjoys staying up-to-date with industry trends and developments. Daivi is known for her excellent research skills and ability to distill