The A-Z Guide to Gradient Descent Algorithm and Its Variants

An A-Z guide to understanding how the gradient descent optimization algorithm works, its types, and the importance of the learning rate in gradient descent.

If you have heard of Machine Learning and Deep Learning, you must have also heard about cost (error or loss) functions. But, even if you haven't, fret not! The cost function, in simple words, is a way of measuring the performance of a Machine Learning model by attributing a cost to every 'mistake' or wrong prediction that the model makes.

But as we know from personal experience, we gain little by simply knowing that a mistake has occurred, or even how many mistakes we have made, if we have no clue how to fix them. Advice, instructions, and gentle nudges in the right direction can help in such situations.

Similarly, in machine learning models (which are trying to learn, just like us), poorly estimated or untrained parameters and weights cause mistakes and flawed predictions. Thus, while the cost function gives a quantitative measure of performance, what these models need is a systematic way to adjust their parameter values to reduce these errors and, therefore, the cost. Gradient Descent is one such way.

In this article, we will methodically go over what gradient descent is and its types, and demonstrate how it works with a simple follow-along implementation in Python.


What is Gradient Descent?

Gradient descent is a first-order iterative optimization algorithm for finding a local minimum of a differentiable function; when the function is convex, that local minimum is also the global minimum. It estimates the values of parameters or coefficients that minimize a cost function.

The gradient descent method has proved especially useful because it can be applied in spaces with any number of dimensions. It can be used when the parameters cannot be calculated analytically, which makes it a good choice whenever the cost function is differentiable.
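As a minimal sketch of the idea, the Python snippet below applies gradient descent to a one-parameter cost. The cost $J(w) = (w - 3)^2$, the starting point, the learning rate, and the iteration count are hypothetical choices for illustration; because this cost is convex with its minimum at $w = 3$, it is easy to check where the iterates end up.

```python
def cost(w):
    """Hypothetical convex cost J(w) = (w - 3)^2, minimized at w = 3."""
    return (w - 3.0) ** 2


def cost_gradient(w):
    """Derivative dJ/dw = 2(w - 3)."""
    return 2.0 * (w - 3.0)


def gradient_descent_1d(w0, learning_rate=0.1, n_iterations=100):
    """Repeatedly step against the gradient, starting from w0."""
    w = w0
    for _ in range(n_iterations):
        w = w - learning_rate * cost_gradient(w)
    return w


w_final = gradient_descent_1d(w0=0.0)
print(f"w = {w_final:.6f}, J(w) = {cost(w_final):.2e}")
```

Each step here multiplies the distance to the minimum by $1 - 2\eta = 0.8$, so the iterates close in on $w = 3$ geometrically.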

How does Gradient Descent work?

Image Credit: Neural Networks and Deep Learning

To get an intuitive idea of how Gradient Descent works, let us consider the entire range of values the parameters can take. Here the axes w and b represent the range of values the parameters w and b can take, respectively. In this case, these parameters define a simple linear unit. Hence, the curved surface shown represents how the cost function J(w, b) varies for different values of w and b. Not all cost function surfaces are this smooth in appearance; many have multiple local maxima and minima. Still, the figure suggests a natural generalization: minimizing the cost function amounts to finding the global minimum of the cost surface.

In practice, Gradient Descent does precisely this: at each step, it alters the parameters (weights) in the direction in which the surface descends most steeply, as indicated by the red arrows.
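The claim that the negative gradient gives the direction of steepest descent can be checked numerically. This sketch uses a hypothetical bowl-shaped surface $J(w, b) = w^2 + 3b^2$ (not the surface from the figure), tries a tiny step in 360 evenly spaced directions from the point $(1, 1)$, and compares the direction that lowers the cost the most with the direction of $-\nabla J$.

```python
import math


def cost(w, b):
    """Hypothetical bowl-shaped cost surface J(w, b) = w^2 + 3 b^2."""
    return w * w + 3.0 * b * b


def gradient(w, b):
    """Analytic gradient (dJ/dw, dJ/db)."""
    return 2.0 * w, 6.0 * b


def best_sampled_direction(w, b, step=1e-4, n_angles=360):
    """Try unit directions at 1-degree spacing and return the angle (radians)
    whose small step lowers the cost the most."""
    best_angle, best_drop = None, -math.inf
    for k in range(n_angles):
        angle = 2.0 * math.pi * k / n_angles
        dw, db = math.cos(angle), math.sin(angle)
        drop = cost(w, b) - cost(w + step * dw, b + step * db)
        if drop > best_drop:
            best_angle, best_drop = angle, drop
    return best_angle


w, b = 1.0, 1.0
gw, gb = gradient(w, b)
# Direction of the negative gradient, expressed as an angle in [0, 2*pi).
neg_grad_angle = math.atan2(-gb, -gw) % (2.0 * math.pi)
sampled_angle = best_sampled_direction(w, b)
print(f"negative gradient direction: {math.degrees(neg_grad_angle):.1f} deg")
print(f"best sampled direction:      {math.degrees(sampled_angle):.1f} deg")
```

The two angles should agree to within the 1-degree sampling resolution, as the first-order approximation $J(\mathbf{p} + \epsilon\mathbf{u}) \approx J(\mathbf{p}) + \epsilon\,\nabla J \cdot \mathbf{u}$ predicts: the drop is largest when the unit vector $\mathbf{u}$ points opposite the gradient.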


Gradient Descent Formulae

While we have understood how gradient descent works in a general sense, we cannot ignore the fact that machines work with numbers. Thus, we now need a quantitative expression for what we have been calling the 'steepest descent.'

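For this, let us consider a generalized cost function $J(\mathbf{w})$, where $\mathbf{w}$ is a weight vector. We can then calculate the partial derivative of $J$ with respect to each component of $\mathbf{w}$ to arrive at the gradient vector $\nabla J(\mathbf{w})$, which points in the direction of steepest increase of $J$.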

To achieve gradient descent, we alter the weights by updating each component weight $w_i$ in $\mathbf{w}$ as,

$$w_i \leftarrow w_i + \Delta w_i$$

where

$$\Delta w_i = -\eta \frac{\partial J}{\partial w_i}$$

The above gradient descent formula is the weight update rule of gradient descent written in component form.


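Here $\eta$ is a positive constant called the learning rate, which determines the step size in gradient descent. The negative sign appears because the gradient points in the direction of steepest increase of $J$; since we want to minimize $J$, we step in the opposite direction.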

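However, to implement this on a machine, we need to go one step further and obtain an explicit, iterative update. We can do this by deriving $\partial J / \partial w_i$ and then substituting it into the weight update rule of gradient descent. For a linear unit with output $o_d = \mathbf{w} \cdot \mathbf{x}_d$ and the squared-error cost $J(\mathbf{w}) = \frac{1}{2}\sum_{d \in D}(t_d - o_d)^2$, this derivative is $\partial J / \partial w_i = -\sum_{d \in D}(t_d - o_d)\,x_{id}$.

Therefore, we arrive at

$$\Delta w_i = \eta \sum_{d \in D} (t_d - o_d)\, x_{id}$$

where $D$ is the set of training examples, $t_d$ is the target value, $o_d$ is the output value, and $x_{id}$ is the $i$-th input component of the $d$-th training example.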
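For readers who want the intermediate steps, here is a step-by-step derivation of the weight update, assuming a linear unit $o_d = \mathbf{w} \cdot \mathbf{x}_d$ and the squared-error cost $J(\mathbf{w}) = \frac{1}{2}\sum_{d \in D}(t_d - o_d)^2$. The factor $\frac{1}{2}$ is a convention that cancels the 2 produced by differentiating the square.

```latex
\begin{aligned}
\frac{\partial J}{\partial w_i}
  &= \frac{\partial}{\partial w_i} \frac{1}{2} \sum_{d \in D} (t_d - o_d)^2 \\
  &= \frac{1}{2} \sum_{d \in D} 2\,(t_d - o_d) \frac{\partial}{\partial w_i} (t_d - o_d) \\
  &= \sum_{d \in D} (t_d - o_d) \frac{\partial}{\partial w_i} \left(t_d - \mathbf{w} \cdot \mathbf{x}_d\right) \\
  &= -\sum_{d \in D} (t_d - o_d)\, x_{id} \\[4pt]
\Delta w_i = -\eta \frac{\partial J}{\partial w_i}
  &= \eta \sum_{d \in D} (t_d - o_d)\, x_{id}
\end{aligned}
```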

Gradient Descent Method

Therefore we can achieve gradient descent as follows:

- Initialize the weight vector $\mathbf{w}$.

- Until the termination condition is met (e.g., a sufficiently small $J$ value or, more commonly, a fixed number of iterations), do:

  - Compute each $\Delta w_i$ using the weight update rule, summing the contributions of all training examples in $D$.

  - Update each $w_i$ in $\mathbf{w}$ as $w_i \leftarrow w_i + \Delta w_i$ to obtain the new weight vector.
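The steps above can be sketched in plain Python as follows. This is a minimal illustration assuming a linear unit $o = \mathbf{w} \cdot \mathbf{x}$ with the squared-error cost, a bias handled by prepending a constant input $x_0 = 1$, a fixed iteration count as the termination condition, and a small hypothetical noise-free data set generated from $t = 1 + 2x$. The function name and parameter values are illustrative, not taken from the article.

```python
def batch_gradient_descent(examples, learning_rate=0.05, n_iterations=1000):
    """Batch gradient descent for a linear unit o = w . x (squared-error cost).

    `examples` is a list of (x, t) pairs, where x is a list of inputs whose
    first entry is the constant 1 (so w[0] acts as the bias) and t is the target.
    """
    n_weights = len(examples[0][0])
    w = [0.0] * n_weights                      # initialize the weight vector
    for _ in range(n_iterations):              # termination: fixed iteration count
        delta_w = [0.0] * n_weights
        for x, t in examples:                  # accumulate over every example in D
            o = sum(w_i * x_i for w_i, x_i in zip(w, x))
            for i in range(n_weights):
                delta_w[i] += learning_rate * (t - o) * x[i]
        w = [w_i + dw_i for w_i, dw_i in zip(w, delta_w)]   # one update per pass
    return w


# Hypothetical noise-free data generated from t = 1 + 2x.
data = [([1.0, x], 1.0 + 2.0 * x) for x in [0.0, 0.5, 1.0, 1.5, 2.0]]
print(batch_gradient_descent(data))
```

Each pass accumulates $\Delta w_i$ over all of $D$ before touching the weights, which is exactly what makes this the batch variant.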

Now that we have understood and summarized the gradient descent method, let us test how it works and convince ourselves with a simple linear regression example.

Say we have a data set with the input and output as follows,

Using the above explanation, we can derive the various gradient descent equations and implement the algorithm as,

Observe that we have begun with slope and intercept values of 0. In this case, these are the 'weights' (or parameters) we are trying to estimate, and they are updated at every epoch of the gradient descent method.
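A minimal sketch of such an implementation is shown below, using a small hypothetical dataset generated from $y = 2x + 1$ (not the article's data). It assumes the mean squared error as the cost, starts the slope `m` and intercept `b` at 0, and records the cost at every epoch; the learning rate and epoch count are illustrative choices.

```python
def fit_line(xs, ys, learning_rate=0.05, epochs=1000):
    """Fit y = m*x + b by batch gradient descent on the mean squared error."""
    n = len(xs)
    m, b = 0.0, 0.0                # start slope and intercept at 0
    cost_history = []
    for _ in range(epochs):
        errors = [y - (m * x + b) for x, y in zip(xs, ys)]
        cost_history.append(sum(e * e for e in errors) / n)
        # Partial derivatives of the MSE with respect to m and b.
        dm = -2.0 / n * sum(x * e for x, e in zip(xs, errors))
        db = -2.0 / n * sum(errors)
        m -= learning_rate * dm
        b -= learning_rate * db
    return m, b, cost_history


xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2.0 * x + 1.0 for x in xs]   # hypothetical data: y = 2x + 1
m, b, costs = fit_line(xs, ys)
print(f"slope = {m:.4f}, intercept = {b:.4f}")
print(f"cost: first epoch = {costs[0]:.2f}, last epoch = {costs[-1]:.2e}")
```

Because the data lie exactly on a line, the cost can be driven essentially to zero; with real, noisy data, it would level off at some positive value instead.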


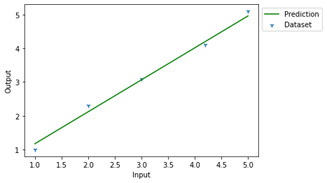

The gradient descent method iteratively reduces the cost function, bringing the parameter estimates close to the values that minimize it. The results can be observed by plotting the resulting predicted line as follows.

While we have successfully demonstrated the working of the gradient descent method with this exercise, we must remember that not all data sets are this simple; in real-life scenarios, very few are.

For instance, with linear units and a squared-error cost, the cost surface is a parabolic bowl with a single global minimum. However, other kinds of models, such as multilayer neural networks, can have cost surfaces with multiple local minima, in which case our gradient descent method may converge to one of the local minima rather than the global minimum.
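To see the local-minimum problem in action, this sketch runs plain gradient descent on a hypothetical one-dimensional non-convex cost, $J(w) = w^4 - 3w^2 + w$, which has a global minimum near $w \approx -1.30$ and a shallower local minimum near $w \approx 1.13$. The learning rate and starting points are illustrative choices.

```python
def cost(w):
    """Hypothetical non-convex cost with two local minima."""
    return w ** 4 - 3.0 * w ** 2 + w


def cost_gradient(w):
    """Derivative dJ/dw = 4w^3 - 6w + 1."""
    return 4.0 * w ** 3 - 6.0 * w + 1.0


def gradient_descent_1d(w0, learning_rate=0.01, n_iterations=1000):
    """Plain gradient descent from the starting point w0."""
    w = w0
    for _ in range(n_iterations):
        w -= learning_rate * cost_gradient(w)
    return w


for start in (-2.0, 2.0):
    w = gradient_descent_1d(start)
    print(f"start = {start:+.1f} -> w = {w:+.4f}, J(w) = {cost(w):.4f}")
```

Starting from $w = 2$, the iterates settle in the shallower local minimum, while starting from $w = -2$ reaches the global one: the outcome depends on where the descent begins.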

We can mitigate such drawbacks and improve the performance of gradient descent with a few clever modifications, which brings us to the different types of gradient descent.

Types of Gradient Descent

1. Batch Gradient Descent

Batch Gradient Descent is essentially another name for vanilla gradient descent. This simple approach earns its place among the 'types' because, despite all its drawbacks, it is still quite popular.

What drawbacks, you ask?

As mentioned earlier, there is little this approach can do to avoid falling into a local minimum. Additionally, as we have observed, this method processes all the training examples for every single update. While this is perfectly manageable for, say, 1,000 training examples, what happens when we deal with a million or a billion?

The memory required to hold the entire training set, and the sheer computational expense of every update, should have you looking for alternatives (which is exactly what people did!). This is what brings us to stochastic gradient descent and mini-batch gradient descent.

2. Stochastic Gradient Descent

With batch gradient descent, converging to a local minimum can be relatively slow, and there is no guarantee that the global minimum will be found when there are multiple local minima. Stochastic (or incremental) gradient descent is used to alleviate these issues, as well as the memory and computation problems mentioned above.

In stochastic gradient descent, the weights are updated after each individual training example rather than after summing the error over the entire training set.

The flow of the stochastic gradient descent process is roughly as follows:

- Initialize the weight vector $\mathbf{w}$.

- Until the termination condition is met (e.g., a sufficiently small $J$ value or, more commonly, a fixed number of iterations), do:

  - Randomly shuffle the samples in the training data set.

  - For each training example in turn, update each $w_i$ as $w_i \leftarrow w_i + \eta (t - o)\, x_i$.
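A minimal sketch of the stochastic update in plain Python follows. It assumes a linear unit whose inputs start with a constant $x_0 = 1$ for the bias, a fixed number of passes as the termination condition, and hypothetical noise-free data generated from $t = 1 + 2x$; the seeded `random.Random` instance is only there to make runs reproducible, and the function name and values are illustrative.

```python
import random


def stochastic_gradient_descent(examples, learning_rate=0.1, n_epochs=2000, seed=0):
    """Stochastic gradient descent for a linear unit o = w . x.

    Each x starts with the constant 1 so that w[0] acts as the bias.
    """
    rng = random.Random(seed)           # seeded so runs are reproducible
    examples = list(examples)           # copy so shuffling doesn't mutate the input
    w = [0.0] * len(examples[0][0])
    for _ in range(n_epochs):
        rng.shuffle(examples)           # randomize the order on every pass
        for x, t in examples:           # update after every single example
            o = sum(w_i * x_i for w_i, x_i in zip(w, x))
            w = [w_i + learning_rate * (t - o) * x_i for w_i, x_i in zip(w, x)]
    return w


# Hypothetical noise-free data generated from t = 1 + 2x.
data = [([1.0, x], 1.0 + 2.0 * x) for x in [0.0, 0.5, 1.0, 1.5, 2.0]]
print(stochastic_gradient_descent(data))
```

Compared with the batch version, the only structural changes are the shuffle and moving the weight update inside the loop over examples.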

From the above, we can observe that the stochastic process is almost identical to batch gradient descent, with a few modifications that make all the difference. One such change is shuffling the training data at the start of each pass, which mixes up the order in which the examples update the coefficients.

Consequently, the descent of the cost function will not be smooth but rather erratic and jumpy. However, this noisy, 'random walk'-like behavior can help the algorithm escape shallow local minima, making it more likely to move towards the global minimum. One apparent disadvantage of this method is that the cost is not minimized as precisely as with batch gradient descent, because the parameters keep oscillating around the optimal values. In practice, though, the close approximations it produces are often good enough.

As the cherry on top, learning can be much faster with stochastic gradient descent, especially on large data sets, often requiring only a few passes over the data to arrive at a good enough set of parameters for your model.
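Because the stochastic cost curve is jumpy, its overall trend can be hard to judge by eye. One simple option, offered here as an assumption rather than a step the method requires, is to smooth the recorded costs with a moving average. The sketch below uses a made-up stand-in for a jumpy cost curve (a decaying trend plus an alternating spike), not costs measured from a real run.

```python
def moving_average(values, window):
    """Average each run of `window` consecutive values (a simple smoothing)."""
    return [sum(values[i:i + window]) / window
            for i in range(len(values) - window + 1)]


# Stand-in for a jumpy stochastic cost curve: a decaying trend plus an
# alternating +/-0.05 "spike" (hypothetical numbers, not measured costs).
raw_costs = [1.0 / (k + 1) + 0.05 * (-1) ** k for k in range(20)]
smoothed = moving_average(raw_costs, window=4)

raw_is_monotone = all(a > b for a, b in zip(raw_costs, raw_costs[1:]))
smooth_is_monotone = all(a > b for a, b in zip(smoothed, smoothed[1:]))
print(f"raw curve strictly decreasing?      {raw_is_monotone}")
print(f"smoothed curve strictly decreasing? {smooth_is_monotone}")
```

The window length is a trade-off: a longer window hides more noise but also reacts more slowly to genuine changes in the trend.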


3. Mini-Batch Gradient Descent

Mini-Batch gradient descent is a hybrid of the previous two variants, designed to harness the best of both worlds, which makes it the usual go-to method in practice.

In this method, the training data set is split into small batches, and the weight update rule is applied once per batch. This compromise allows mini-batch gradient descent to retain both the robustness of stochastic gradient descent and the stability of batch gradient descent. The cost curve, in turn, will be jumpy as with stochastic gradient descent, but it will also show the overall downward trend seen in batch gradient descent.
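The mini-batch procedure can be sketched with a single function whose `batch_size` parameter selects the variant. This is an illustration under stated assumptions: a linear unit with a constant input $x_0 = 1$ for the bias, hypothetical noise-free data from $t = 1 + 2x$, and an update that sums $(t - o)\,x_i$ over each mini-batch, so that `batch_size=len(data)` reproduces the batch rule and `batch_size=1` the stochastic rule. For readability, the per-batch sums are written as plain loops; in practice, each batch would be processed with vectorized array operations.

```python
import random


def minibatch_gradient_descent(examples, batch_size, learning_rate=0.05,
                               n_epochs=2000, seed=0):
    """Mini-batch gradient descent for a linear unit o = w . x.

    The update sums (t - o) * x over each mini-batch, so batch_size=len(examples)
    gives the batch rule and batch_size=1 gives the stochastic rule.
    """
    rng = random.Random(seed)
    examples = list(examples)
    w = [0.0] * len(examples[0][0])
    for _ in range(n_epochs):
        rng.shuffle(examples)
        for start in range(0, len(examples), batch_size):
            batch = examples[start:start + batch_size]
            delta_w = [0.0] * len(w)
            for x, t in batch:          # all outputs use the same w within a batch
                o = sum(w_i * x_i for w_i, x_i in zip(w, x))
                for i, x_i in enumerate(x):
                    delta_w[i] += learning_rate * (t - o) * x_i
            w = [w_i + dw_i for w_i, dw_i in zip(w, delta_w)]   # one update per batch
    return w


# Hypothetical noise-free data generated from t = 1 + 2x.
data = [([1.0, x], 1.0 + 2.0 * x) for x in [0.0, 0.5, 1.0, 1.5, 2.0]]
for size in (1, 2, len(data)):
    w = minibatch_gradient_descent(data, batch_size=size)
    print(f"batch_size = {size}: w = [{w[0]:.4f}, {w[1]:.4f}]")
```

A common alternative is to average rather than sum over each batch; that only rescales the effective learning rate by `1 / batch_size`.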

You can appreciate how similar the three variants are by observing that if all the training examples in D are placed in a single mini-batch, we are essentially performing batch gradient descent. Conversely, with a mini-batch size of one, we are performing stochastic gradient descent. Therefore, to gain the benefits of both methods, we choose a mini-batch size somewhere between these two extremes. Another advantage of mini-batch gradient descent is that, unlike stochastic gradient descent, it can use vectorization within each batch, while still not requiring the memory that vectorizing over the whole training set would demand in batch gradient descent.
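To illustrate what vectorization buys within a mini-batch, the sketch below computes the gradient of the squared-error cost $\frac{1}{2}\sum_d (t_d - o_d)^2$ for a linear unit over one batch twice: once with explicit Python loops and once with NumPy array operations, $-X^\top(\mathbf{t} - X\mathbf{w})$. It assumes NumPy is installed and uses a randomly generated, hypothetical batch; both versions should give the same gradient, but the vectorized one avoids the Python-level loops entirely.

```python
import numpy as np


def batch_gradient_loop(X_batch, t_batch, w):
    """Gradient of 1/2 * sum_d (t_d - o_d)^2 over one batch, using Python loops."""
    grad = np.zeros_like(w)
    for x_d, t_d in zip(X_batch, t_batch):
        o_d = 0.0
        for x_id, w_i in zip(x_d, w):      # o_d = w . x_d, one term at a time
            o_d += x_id * w_i
        for i in range(len(w)):
            grad[i] -= (t_d - o_d) * x_d[i]
    return grad


def batch_gradient_vectorized(X_batch, t_batch, w):
    """The same gradient as whole-array operations: -X^T (t - X w)."""
    return -X_batch.T @ (t_batch - X_batch @ w)


rng = np.random.default_rng(seed=0)
X_batch = rng.normal(size=(32, 5))   # hypothetical mini-batch: 32 examples, 5 inputs
t_batch = rng.normal(size=32)        # hypothetical targets
w = rng.normal(size=5)

loop_grad = batch_gradient_loop(X_batch, t_batch, w)
vec_grad = batch_gradient_vectorized(X_batch, t_batch, w)
print(np.allclose(loop_grad, vec_grad))
```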

Now that we have explored the variants of the gradient descent method and understood their advantages and disadvantages, it's time to look into the one parameter that can make or break even the most well-modified variant: the Learning Rate.

Importance of Learning Rate in Gradient Descent

To understand the significance of choosing the correct learning rate when estimating your parameters with gradient descent, consider a scenario where you are blindfolded in an unfamiliar room and asked to find the exit. Disregarding why you might find yourself in such a situation, I think you'd agree that your immediate reaction would be to gingerly walk to the closest wall you can find and then follow it along until you manage to find the way out.

Now, if you take the overconfident approach, you'd probably take five or ten steps before touching the wall to check for an exit. This way, you may miss the exit despite walking around the room a couple of times. On the other hand, if you take a more cautious approach and make sure to feel the wall at every little step, you'd probably take longer to go around the room, but you'd more likely than not find the exit.

It is a similar case with gradient descent, except that the algorithm is trying to find the minimum of the cost function surface, which is usually shaped like a bowl, rather than the exit of a room. With a larger learning rate (which corresponds to your level of confidence, or overconfidence, and not to how much you are learning), you are willing to leave a larger range of parameter values unexplored in the hope of reaching the minimum faster. Just like in the room, by stepping over larger unexplored regions you could overshoot the minimum and hence miss the optimal set of parameters. If you still can't quite visualize how that would occur, the following image should help.

At the other extreme, you might choose a very small learning rate, altering the parameters so minutely at each step that you use up the allotted iterations before you even reach the minimum. You might then be forced to increase the number of epochs, which in turn increases the time required to find the optimal parameters. Another drawback of very low learning rates is that you might fall into a small local minimum and not find your way out.
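The effect of the learning rate is easy to see on the simplest bowl, the hypothetical cost $J(w) = w^2$ with its minimum at $w = 0$. Each update is $w \leftarrow w - \eta \cdot 2w = (1 - 2\eta)\,w$, so the sketch below (learning rates chosen purely for illustration) shows a too-large, a suitable, and a too-small rate from the same starting point.

```python
def run(learning_rate, w0=1.0, n_iterations=50):
    """Gradient descent on the hypothetical bowl J(w) = w^2, whose minimum is w = 0."""
    w = w0
    for _ in range(n_iterations):
        w -= learning_rate * 2.0 * w      # dJ/dw = 2w
    return w


for lr in (1.1, 0.1, 0.001):
    print(f"learning rate {lr}: w after 50 steps = {run(lr):.6g}")
```

With $\eta = 1.1$, the factor $1 - 2\eta = -1.2$ makes each step overshoot the minimum by more than the last, so the iterates diverge; with $\eta = 0.001$, the factor $0.998$ barely moves $w$ in 50 steps; with $\eta = 0.1$, the factor $0.8$ converges quickly.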

Therefore, it is necessary to strike a good balance so that the learning rate is neither too high nor too low. This is where plotting the cost curve, along with a few other practical techniques, can help us choose a suitable learning rate and get the most out of gradient descent.
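One practical way to look for that balance is a small sweep: run gradient descent for a fixed number of epochs with several candidate learning rates and compare the final costs, treating a blown-up cost as divergence. The sketch below assumes a linear-regression model $y = mx + b$ with mean squared error, a hypothetical data set generated from $y = 2x + 1$, and illustrative candidate values; in a real project, you would also inspect the full cost curves rather than only the last value.

```python
import math


def final_cost(learning_rate, xs, ys, epochs=100):
    """Run batch gradient descent on y = m*x + b (MSE cost) and return the last cost."""
    n = len(xs)
    m, b = 0.0, 0.0
    for _ in range(epochs):
        errors = [y - (m * x + b) for x, y in zip(xs, ys)]
        m += learning_rate * 2.0 / n * sum(x * e for x, e in zip(xs, errors))
        b += learning_rate * 2.0 / n * sum(errors)
    errors = [y - (m * x + b) for x, y in zip(xs, ys)]
    cost = sum(e * e for e in errors) / n
    return cost if math.isfinite(cost) else math.inf   # treat divergence as infinite cost


xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2.0 * x + 1.0 for x in xs]      # hypothetical data: y = 2x + 1
candidates = [1.0, 0.1, 0.01, 0.001]
results = {lr: final_cost(lr, xs, ys) for lr in candidates}
best_lr = min(results, key=results.get)
for lr, cost in results.items():
    print(f"learning rate {lr:<6}: final cost {cost:.3e}")
print("best learning rate:", best_lr)
```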

How to get the most out of Gradient Descent?

Here are some suggestions and tips to improve your chances of obtaining good results while using the gradient descent algorithm:

1. Plotting the Cost curve: Plotting the cost curve is a tried-and-true method to ensure that your algorithm is performing as expected. Here we plot the value of the cost function after each iteration and check for a decreasing trend. As shown below, the shape of this trend can also hint that the learning rate is inappropriate and must be changed.

Remember that this trend will not be smooth in stochastic and mini-batch gradient descent; however, an overall trend with small spikes and drops can be observed.

2. Setting the Learning Rate: There is no hard and fast rule for choosing the exact value of the learning rate. It is common practice (based on prior observations of values that perform well) to start with a small learning rate, such as 0.01 or even 0.001. You can then tweak this value based on the resulting cost curve.

3. Vectorization: Vectorization involves representing your data and computations as vectors and matrices, so that whole-array operations replace explicit for loops, which can drastically reduce execution time.

4. Normalizing: If the features are not normalized, features with larger scales dominate the gradient, and the algorithm is more likely to take a zigzag path, so it takes much longer to reach the minimum. With normalization, the cost surface becomes less skewed and distorted, enabling a smoother, more direct descent towards the minimum.
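A common way to normalize is z-score standardization, which rescales each feature to zero mean and unit standard deviation (min-max scaling to a fixed range is another option). The sketch below standardizes two hypothetical features on very different scales using Python's `statistics` module; the feature names and values are illustrative only.

```python
import statistics


def standardize(columns):
    """Rescale each feature column to zero mean and unit (population) std."""
    scaled = []
    for col in columns:
        mu = statistics.fmean(col)
        sigma = statistics.pstdev(col)
        scaled.append([(v - mu) / sigma for v in col])
    return scaled


# Hypothetical features on very different scales (e.g. rooms vs. area in sq ft).
rooms = [1.0, 2.0, 3.0, 4.0, 5.0]
area = [500.0, 900.0, 1200.0, 1800.0, 2100.0]
rooms_s, area_s = standardize([rooms, area])
print([round(v, 3) for v in rooms_s])
print([round(v, 3) for v in area_s])
```

When you later apply the trained model to new data, reuse the mean and standard deviation computed on the training set rather than recomputing them on the new data.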

Conclusion

Now you know all the fundamentals you need to get started with gradient descent. Give yourself a pat on the back for having made it here, because starting is indeed the hardest part. All the other pesky little nuances will come in time as you implement it, so don't be afraid to go ahead and get your hands dirty. Hopefully, the follow-along exercise will give you a little jump start on that!

About the Author

ProjectPro

ProjectPro is the only online platform designed to help professionals gain practical, hands-on experience in big data, data engineering, data science, and machine learning related technologies. Having over 270+ reusable project templates in data science and big data with step-by-step walkthroughs,