Why learn Scala Programming for Apache Spark

Apache Spark supports several programming languages, including Java, Python, Scala, and R, but Scala is the most natural fit for working with Apache Spark.

One of the most difficult decisions for big data developers today is choosing a programming language for big data applications. Python and R are the languages of choice among data scientists for building machine learning models, whilst Java remains the go-to language for developing Hadoop applications. With the advent of big data frameworks like Apache Kafka and Apache Spark, Scala has gained prominence among big data developers.

With support for multiple programming languages like Java, Python, R, and Scala in Spark, it often becomes difficult for developers to decide which language to choose when working on a Spark project. A common question that industry experts at ProjectPro are asked is: “What language should I choose for my next Apache Spark project?”

The answer varies, as it depends on the programming expertise of the developers, but Scala has become the preferred language for working with big data frameworks like Apache Spark and Kafka.

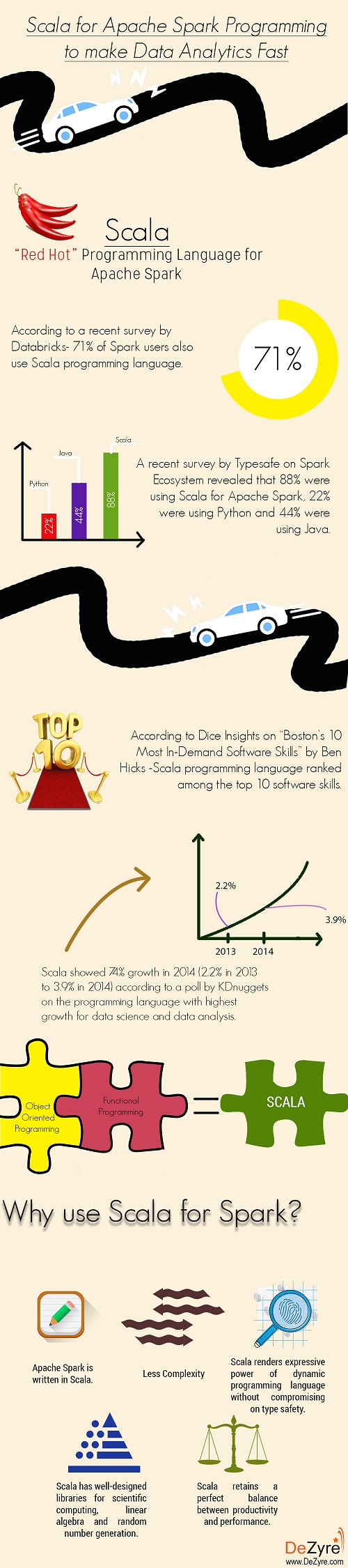

According to a recent survey by Databricks, 71% of Spark users also use Scala, making it the de facto language for Spark programming.

A recent survey by Typesafe on the Spark ecosystem revealed that 88% of respondents were using Scala for Apache Spark, 22% were using Python, and 44% were using Java. (The survey questions allowed more than one answer, so the percentages total more than 100.)

Scala showed 74% growth in 2014 (from 2.2% in 2013 to 3.9% in 2014) according to a KDnuggets poll on “the programming language with the highest growth for data science and data analysis”.

According to the Dice Insights article “Boston’s 10 Most In-Demand Software Skills” by Ben Hicks, Scala ranked among the top 10 software skills and was predicted to be a skill with growing demand.

Taken together, these statistics show how Scala is becoming the language of choice for Apache Spark to make data analytics faster.

Scala Programming = Object Oriented Programming + Functional Programming

Scala (short for Scalable Language) is an open-source language created by Professor Martin Odersky, the founder of Typesafe, a company that promotes and provides commercial support for Scala. It is a multi-paradigm language that supports both the functional and the object-oriented paradigms. From the functional perspective, every function in Scala is a value; from the object-oriented perspective, every value in Scala is an object.
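To make the "functions are values, values are objects" idea concrete, here is a minimal sketch using only the Scala standard library. The names `FunctionsAndObjects`, `doubleIt`, and `applyTwice` are made up for illustration and do not come from Spark or any other library.

```scala
object FunctionsAndObjects {
  // A function is a value: it can be stored in a val and passed around.
  val doubleIt: Int => Int = x => x * 2

  // A higher-order function takes another function as an argument.
  def applyTwice(f: Int => Int, x: Int): Int = f(f(x))

  def main(args: Array[String]): Unit = {
    println(applyTwice(doubleIt, 5)) // 20

    // Every value is an object: even an Int literal has methods.
    println(3.max(7)) // 7

    // The function value is itself an object with methods such as andThen.
    val doubleThenIncrement = doubleIt.andThen(_ + 1)
    println(doubleThenIncrement(5)) // 11
  }
}
```

The same style carries over to Spark, where lambdas like `x => x * 2` are typically passed to operations such as `map` and `filter`.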

Scala is a JVM-based, statically typed language that is safe and expressive. Because extensions can be easily integrated into the language, Scala is considered a language of choice for achieving extensibility.

Scala is in use at some of the best-known tech companies, including LinkedIn, Twitter, and Foursquare. Scala’s performance has also ignited interest among financial institutions, for example for derivative pricing at EDF Trading. Some of the biggest names in the digital economy are investing in Scala for big data processing: Kafka was created at LinkedIn and Scalding at Twitter. With powerful monoids, combinators, pattern matching, support for creating DSLs, and more, Scala is a strong choice as a tool for big data processing on Apache Spark.
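For readers new to two of the features named above, the following is a small, hedged sketch of pattern matching and a monoid in plain Scala. The `Event` types, the `Monoid` trait, and `combineAll` are hypothetical illustrations written for this article, not APIs from Spark, Scalding, or any library.

```scala
object PatternMatchingSketch {
  // A small closed hierarchy of events (hypothetical example data).
  sealed trait Event
  final case class Click(userId: String, url: String) extends Event
  final case class Purchase(userId: String, amount: Double) extends Event

  // Pattern matching destructures case classes and supports guards.
  def describe(event: Event): String = event match {
    case Click(user, url)                          => s"$user clicked $url"
    case Purchase(user, amount) if amount >= 100.0 => s"$user made a large purchase"
    case Purchase(user, _)                         => s"$user made a purchase"
  }

  // A monoid: an associative combine operation with an identity element.
  trait Monoid[A] {
    def empty: A
    def combine(x: A, y: A): A
  }

  val intSum: Monoid[Int] = new Monoid[Int] {
    val empty: Int = 0
    def combine(x: Int, y: Int): Int = x + y
  }

  // Because combine is associative, partial results can be merged in any grouping,
  // which is what makes monoids convenient for distributed aggregation.
  def combineAll[A](values: Seq[A], monoid: Monoid[A]): A =
    values.foldLeft(monoid.empty)(monoid.combine)

  def main(args: Array[String]): Unit = {
    println(describe(Purchase("ann", 250.0))) // ann made a large purchase
    println(combineAll(Seq(1, 2, 3), intSum)) // 6
  }
}
```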

Why should you learn Scala for Apache Spark?

“Being successful in any Spark project, whether it’s architecture, code reviews, best practices or production support, calls for the best expertise in the world,” said Jamie Allen, senior director of global services at Typesafe.

The Scala language, developed by the founder of Typesafe, gives developers the confidence to design, develop, code, and deploy things the right way by making the best use of the capabilities provided by Spark and other big data technologies.

There is a best tool for every task. When it comes to processing big data and machine learning, Scala has dominated the big data world, and here’s why:

1) Apache Spark is written in Scala, and because of its scalability on the JVM, Scala is the programming language most prominently used by big data developers working on Spark projects. Developers state that using Scala helps them dig deep into Spark’s source code so that they can easily access and implement Spark’s newest features. Scala’s interoperability with Java is its biggest attraction, as Java developers can get on the learning path easily by grasping the object-oriented concepts quickly.

2) Scala strikes a good balance between productivity and performance. Most big data developers come from a Python or R background. Scala’s syntax is less intimidating than that of Java or C++. A new Spark developer with no prior experience only needs to know the basic syntax, collections, and lambdas to become productive in big data processing with Apache Spark. Also, the performance achieved using Scala is better than that of many traditional data analysis tools like R or Python. Over time, as a developer’s skills grow, it becomes easy to transition from imperative code to more elegant functional code to improve performance.

3) Organizations want to enjoy the expressive power of a dynamic programming language without losing type safety. Scala has this potential, as can be judged from its increasing adoption rates in the enterprise.

4) Scala is designed with parallelism and concurrency in mind for big data applications. It has excellent built-in concurrency support, and libraries like Akka make it easy for developers to build truly scalable applications.

5) Scala fits well within the MapReduce big data model because of its functional paradigm. Many Scala data frameworks follow similar abstract data types that are consistent with Scala’s collection APIs. Developers just need to learn the standard collections, and it becomes easy to work with other libraries.

6) Scala provides the best path for building scalable big data applications in terms of data size and program complexity. With support for immutable data structures, for-comprehensions, and immutable named values, Scala provides remarkable support for functional programming.

7) Scala is comparatively less complex than Java. A single complex line of Scala code can replace 20 to 25 lines of complex Java code, making it a preferable choice for big data processing on Apache Spark.

8) Scala has well-designed libraries for scientific computing, linear algebra, and random number generation. The standard scientific library Breeze contains non-uniform random generation, numerical algebra, and other special functions. Saddle is a data library for Scala that provides a solid foundation for data manipulation through 2D data structures, robustness to missing values, array-backed support, and automatic data alignment.

9) Efficiency and speed play a vital role regardless of increasing processor speeds. Scala is fast and efficient, making it an ideal choice of language for computationally intensive algorithms. Compute cycles and memory efficiency are also well tuned when using Scala for Spark programming.

10) Other programming languages like Python or Java lag in Spark API coverage. Scala has bridged this API coverage gap and is gaining traction in the Spark community. The rule of thumb is that developers can write the most concise code using Scala or Python, and achieve the best runtime performance using Java or Scala. The best trade-off is to use Scala for Spark, as it makes use of all the mainstream features without developers having to master the advanced constructs.
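Several of the points above (basic collections and lambdas in point 2, the MapReduce-style fit in point 5, and immutable values and for-comprehensions in point 6) can be illustrated with a word count written against the standard Scala collections. This is only a sketch under stated assumptions: it uses no Spark APIs, and the object and method names (`WordCountSketch`, `wordCount`, `words`) as well as the sample input are invented for illustration.

```scala
object WordCountSketch {
  // Immutable sample input (made-up data); a Spark job would typically read a distributed dataset instead.
  val lines: List[String] =
    List("spark is fast", "scala is concise", "spark uses scala")

  // "Map" step: split lines into words. "Reduce" step: count occurrences per word.
  def wordCount(input: Seq[String]): Map[String, Int] =
    input
      .flatMap(_.split("\\s+").toSeq)
      .filter(_.nonEmpty)
      .groupBy(identity)
      .map { case (word, occurrences) => word -> occurrences.size }

  // The same splitting step expressed as a for-comprehension.
  def words(input: Seq[String]): Seq[String] =
    for {
      line <- input
      word <- line.split("\\s+").toSeq
      if word.nonEmpty
    } yield word

  def main(args: Array[String]): Unit = {
    val counts = wordCount(lines)
    println(counts("spark"))   // 2
    println(counts("fast"))    // 1
    println(words(lines).size) // 9
  }
}
```

The chain of `flatMap`, `filter`, and `groupBy` here mirrors the shape of the transformations a developer would write against a distributed collection, which is the sense in which learning the standard collections transfers to other libraries.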

With the growing realization among the developer community that Scala not only gives traditional agile languages a close run but also helps companies take their products to the next level with ease, 2016 is the best time to learn Scala for Spark programming and adapt to the changing technology needs of big data processing. Scala might be a difficult language to master for Apache Spark, but the time spent learning it is worth the investment. Its winning combination of object-oriented and functional programming paradigms might surprise beginners, and they might take some time to pick up the new syntax. Hands-on experience with Scala on Spark projects is an added advantage for developers who want to enjoy programming in Apache Spark in a hassle-free way.

2016 projects an increasing number of lucrative career opportunities for Scala and Spark developers in the big data world. Developers should stop asking “Why use Scala for Spark programming?” and start learning Spark and Scala now, to see how far Scala can take them in the big data world!

About the Author

ProjectPro

ProjectPro is the only online platform designed to help professionals gain practical, hands-on experience in big data, data engineering, data science, and machine learning related technologies. Having over 270+ reusable project templates in data science and big data with step-by-step walkthroughs,