Working with Spark RDD for Fast Data Processing

Understand the advantages of using Spark's fundamental data structure, Resilient Distributed Datasets (RDDs), for batch analytics.

Hadoop MapReduce supported users' batch processing needs well, but the demand for more flexible big data tools capable of real-time processing gave birth to the big data darling Apache Spark. Spark is setting the big data world on fire with its power and fast data processing speed. According to a survey by Typesafe, 71% of respondents have research experience with Spark and 35% are using it. The survey reveals hockey-stick growth in Apache Spark awareness and adoption in the enterprise. Spark has overtaken Hadoop in the big data world when it comes to fast processing of iterative machine learning algorithms and interactive data mining algorithms.

The centre of attraction for Apache Spark is its fast data processing speed and its ability to handle event streaming. These features have made the open-source superstar Apache Spark the sweetheart of the big data world, and much of the credit goes to its main abstraction, Resilient Distributed Datasets, which have made Spark the big data darling of many enterprises. Spark RDDs are the bread and butter of the Apache Spark ecosystem, so mastering RDD concepts is essential for anyone learning Spark. In this post we will learn what makes Resilient Distributed Datasets the soul of the Apache Spark framework and an efficient programming model for batch analytics.

What are Resilient Distributed Datasets (RDDs)?

According to the original paper, "Resilient Distributed Datasets: A Fault-Tolerant Abstraction for In-Memory Cluster Computing", RDDs in Spark can be defined as follows:

Resilient Distributed Datasets (RDDs) are a distributed memory abstraction that lets programmers perform in-memory computations on large clusters in a fault-tolerant manner.

According to the scaladoc of org.apache.spark.rdd.RDD:

A Resilient Distributed Dataset (RDD), the basic abstraction in Spark, represents an immutable, partitioned collection of elements that can be operated on in parallel.

Let’s understand RDDs in Spark through their full form, Resilient Distributed Datasets:

Resilient

Resilient means that RDDs provide fault tolerance through a lineage graph. The lineage graph keeps track of the transformations used to build an RDD; these transformations are executed only when an action is called. If partitions are lost or damaged because of node failures, the lineage graph lets Spark recompute them.
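To make the idea of a lineage graph concrete, here is a toy, pure-Python model. It is not Spark's API: the class `ToyRDD` and its `lineage()` and `compute()` methods are hypothetical names chosen for illustration, and the "graph" here is a simple linear chain. In real PySpark, `rdd.toDebugString()` returns a description of an RDD's lineage.

```python
from typing import Callable, List, Optional


class ToyRDD:
    """Hypothetical, simplified stand-in for an RDD: it records its parent and
    the transformation that produced it instead of storing computed data."""

    def __init__(self, name: str, parent: Optional["ToyRDD"] = None,
                 fn: Optional[Callable[[list], list]] = None,
                 source: Optional[list] = None):
        self.name = name
        self.parent = parent
        self.fn = fn
        self.source = source

    def map(self, f):
        return ToyRDD("map", self, lambda rows: [f(r) for r in rows])

    def filter(self, p):
        return ToyRDD("filter", self, lambda rows: [r for r in rows if p(r)])

    def lineage(self) -> List[str]:
        # Walk back through the parents; this chain plays the role of the lineage graph.
        node, steps = self, []
        while node is not None:
            steps.append(node.name)
            node = node.parent
        return list(reversed(steps))

    def compute(self) -> list:
        # Re-derive the data from the source by replaying the recorded steps.
        if self.parent is None:
            return list(self.source)
        return self.fn(self.parent.compute())


base = ToyRDD("source", source=[1, 2, 3, 4, 5])
evens_squared = base.filter(lambda x: x % 2 == 0).map(lambda x: x * x)
print(evens_squared.lineage())  # ['source', 'filter', 'map']
print(evens_squared.compute())  # [4, 16]
```

Because only the recipe is stored, any result can be rebuilt from the source at any time, which is the property the lineage graph gives Spark.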

Distributed

RDDs are distributed - meaning the data is present on multiple nodes in a cluster.

Datasets

A collection of partitioned data records, such as primitive values, tuples or other objects.

Apache Spark lets users treat input files like any other variable, which is not possible in Hadoop MapReduce.

Features of Spark RDDs

Immutable

RDDs are a read-only abstraction and cannot be changed once created. However, one RDD can be transformed into another RDD using transformations such as map, filter, join and cogroup. The immutable nature of Spark RDDs helps keep computations consistent.
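A minimal sketch of the immutability idea, assuming a hypothetical `ImmutableDataset` class (not part of Spark): the elements are held in a tuple, attribute assignment is blocked, and every transformation returns a new object while the original stays untouched.

```python
from typing import Callable


class ImmutableDataset:
    """Toy, hypothetical stand-in for an RDD: elements live in a tuple and
    transformations return a new dataset rather than modifying this one."""

    __slots__ = ("_items",)

    def __init__(self, items):
        # Bypass our own __setattr__ exactly once, during construction.
        object.__setattr__(self, "_items", tuple(items))

    def __setattr__(self, name, value):
        raise AttributeError("ImmutableDataset is read-only")

    def map(self, f: Callable) -> "ImmutableDataset":
        return ImmutableDataset(f(x) for x in self._items)

    def filter(self, p: Callable) -> "ImmutableDataset":
        return ImmutableDataset(x for x in self._items if p(x))

    def collect(self) -> list:
        return list(self._items)


words = ImmutableDataset(["spark", "rdd", "hadoop"])
upper = words.map(str.upper)
print(words.collect())  # ['spark', 'rdd', 'hadoop'] (unchanged)
print(upper.collect())  # ['SPARK', 'RDD', 'HADOOP']
```

Since no dataset is ever modified in place, a recorded transformation always produces the same result from the same input, which is what makes replaying lineage safe.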

Partitioned

An RDD in Spark is a collection of records divided into small logical chunks of data known as partitions. When an action is executed, one task is launched per partition, so partitions are the basic unit of parallelism in an RDD. Spark automatically decides how many partitions an RDD is divided into, but the number of partitions can also be specified when the RDD is created. The partitions of an RDD are distributed across the nodes of the cluster.
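The sketch below models partitioning in plain Python. The helper `split_into_partitions` is hypothetical and only approximates how Spark slices a local collection; in PySpark the partition count is set with `sc.parallelize(data, numSlices)` and inspected with `rdd.getNumPartitions()`. Here a thread pool stands in for the cluster, running one "task" per partition.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import List, TypeVar

T = TypeVar("T")


def split_into_partitions(data: List[T], num_partitions: int) -> List[List[T]]:
    """Split data into num_partitions contiguous chunks whose sizes differ by at
    most one. (Hypothetical helper; Spark's own slicing may differ in detail.)"""
    if num_partitions < 1:
        raise ValueError("num_partitions must be >= 1")
    n = len(data)
    return [data[i * n // num_partitions:(i + 1) * n // num_partitions]
            for i in range(num_partitions)]


def run_action(partitions: List[List[int]]) -> int:
    # One "task" per partition: each task sums its own chunk,
    # then the partial results are combined on the "driver".
    with ThreadPoolExecutor() as pool:
        partial_sums = list(pool.map(sum, partitions))
    return sum(partial_sums)


parts = split_into_partitions(list(range(10)), 3)
print(parts)              # [[0, 1, 2], [3, 4, 5], [6, 7, 8, 9]]
print(len(parts))         # 3 partitions -> 3 tasks
print(run_action(parts))  # 45
```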

Lazy Evaluated

RDDs are computed lazily, so that transformations can be pipelined. The data inside an RDD is not transformed until an action that triggers the execution of the transformations is invoked.
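Here is a toy model of lazy evaluation and pipelining, using a hypothetical `LazyDataset` class rather than Spark itself. `map` and `filter` only record a step; the `collect` action then pushes each element through all recorded steps in a single pass, without building an intermediate list per transformation.

```python
class LazyDataset:
    """Toy model of lazy evaluation (hypothetical class, not Spark's API).
    Transformations only record a function; nothing runs until an action."""

    def __init__(self, source, steps=()):
        self._source = source
        self._steps = tuple(steps)

    def map(self, f):
        return LazyDataset(self._source, self._steps + (("map", f),))

    def filter(self, p):
        return LazyDataset(self._source, self._steps + (("filter", p),))

    def collect(self):
        # The action: push each element through all steps in one pass (pipelining).
        out = []
        for x in self._source:
            keep = True
            for kind, fn in self._steps:
                if kind == "map":
                    x = fn(x)
                elif not fn(x):
                    keep = False
                    break
            if keep:
                out.append(x)
        return out


calls = []


def double(x):
    calls.append(x)  # record when the function actually runs
    return x * 2


ds = LazyDataset([1, 2, 3]).map(double).filter(lambda x: x > 2)
print(calls)         # [] : no work done yet
print(ds.collect())  # [4, 6]
print(calls)         # [1, 2, 3]
```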

Persistence

Persistence makes RDDs well suited to fast computations. Users can specify which RDDs they want to reuse and choose where to store them, on disk or in memory. RDDs are cacheable, i.e. they can keep all their data in the chosen persistent storage.
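The effect of caching can be shown with a small pure-Python sketch. `CachedDataset` is a hypothetical class, not Spark code; in PySpark the corresponding calls are `rdd.cache()` or `rdd.persist(StorageLevel.MEMORY_AND_DISK)`. As in Spark, marking data for caching does not compute anything by itself: the first action materialises it and later actions reuse it.

```python
class CachedDataset:
    """Toy model of RDD persistence (hypothetical class). Without cache(), every
    action recomputes the data; with cache(), the first action stores the
    result in memory and later actions reuse it."""

    def __init__(self, compute_fn):
        self._compute_fn = compute_fn
        self._cache_enabled = False
        self._cached = None

    def cache(self):
        self._cache_enabled = True  # lazy: nothing is computed here
        return self

    def _materialize(self):
        if self._cache_enabled:
            if self._cached is None:
                self._cached = self._compute_fn()
            return self._cached
        return self._compute_fn()

    def count(self):
        return len(self._materialize())

    def sum(self):
        return sum(self._materialize())


runs = {"n": 0}


def expensive_load():
    runs["n"] += 1  # count how often the source is (re)computed
    return [x * x for x in range(1000)]


plain = CachedDataset(expensive_load)
plain.count()
plain.sum()
print(runs["n"])  # 2 : recomputed for each action

runs["n"] = 0
cached = CachedDataset(expensive_load).cache()
cached.count()
cached.sum()
print(runs["n"])  # 1 : computed once, then served from memory
```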

Fault Tolerance

Spark RDDs log all transformations in a lineage graph, so whenever a partition is lost, the lineage graph can be used to replay the transformations instead of having to replicate data across multiple nodes (as in Hadoop MapReduce).
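A minimal sketch of lineage-based recovery, under simplifying assumptions: the source partitions are always readable, the recorded transformations are narrow (each output partition depends on one input partition), and the names `source_partitions`, `lineage` and `compute_partition` are hypothetical. Losing partition 1 only requires replaying the steps on source partition 1.

```python
from typing import Callable, Dict, List

# Each derived partition is filter(map(source_partition)), so a lost partition
# can be rebuilt from its own source partition alone.
source_partitions: List[List[int]] = [[1, 2], [3, 4], [5, 6]]
lineage: List[Callable[[List[int]], List[int]]] = [
    lambda part: [x * 10 for x in part],       # "map" step
    lambda part: [x for x in part if x > 15],  # "filter" step
]


def compute_partition(index: int) -> List[int]:
    # Replay every recorded transformation on one source partition.
    part = source_partitions[index]
    for step in lineage:
        part = step(part)
    return part


materialized: Dict[int, List[int]] = {
    i: compute_partition(i) for i in range(len(source_partitions))
}

del materialized[1]  # simulate losing partition 1 with a failed node

recomputed: Dict[int, List[int]] = {}
for i in range(len(source_partitions)):
    if i not in materialized:
        materialized[i] = compute_partition(i)  # rebuild only what was lost
        recomputed[i] = materialized[i]

print(recomputed)                           # {1: [30, 40]}
print([materialized[i] for i in range(3)])  # [[20], [30, 40], [50, 60]]
```

No replica of the derived data was needed; the recipe plus the surviving input was enough.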

Parallel

RDDs in Spark process data in parallel.

Typed

Spark RDDs are typed by the elements they hold, for example RDD[Int], RDD[Long] and RDD[String].

Limitations of Hadoop MapReduce that Spark RDDs solve

Quora user Stan Kladko, cofounder of Galactic Exchange.io, explains the limitations of Hadoop MapReduce that Spark RDDs solve in a very simple way, and readers are bound to love his explanation:

Complex applications and interactive queries both need efficient data sharing primitives which Spark RDDs provide.

There Exists a Synchronization Barrier between Map and Reduce Tasks

In Hadoop MapReduce there is always a synchronisation barrier between the map and reduce tasks, so intermediate data must be persisted to disk. Although this design helps a job recover from failures, it does not fully leverage the memory of the Hadoop cluster. Spark RDDs let users transparently keep data in memory and persist it to disk only when needed. So there is no such synchronisation barrier slowing down data processing, which makes the Spark execution engine much faster.

Data Sharing is slow with Hadoop MapReduce - Spark RDDs solve this.

Hadoop MapReduce handles iterative applications (machine learning and graph processing tasks) and interactive applications (running ad-hoc queries on the same subset of data) poorly. Keeping the data in memory for both kinds of application can improve performance to a great extent. In iterative distributed data processing, where data is processed over multiple jobs in algorithms such as K-Means clustering, logistic regression or PageRank, it is very common for data to be shared or reused between jobs.

Iterative Operations on Hadoop MapReduce

Hadoop MapReduce is fault tolerant but it is very difficult to reuse intermediate results across multiple jobs. Data reuse or sharing is very slow in Hadoop MapReduce as the data needs to be stored into intermediate stable storage like Amazon S3 or HDFS. This requires multiple IO operations, serializations and data replication slowing down the overall computation of jobs.

Suppose a web server log needs to be analysed for a specific error code. You write Hadoop MapReduce code that uses regular expressions to grep for that error code. When the job runs on the cluster, it returns a set of files containing the matching error code; the cluster is then shut down and the retrieved files are stored in the Amazon S3 location specified in the job. Looking at the results, you notice that you need to write another MapReduce job to retrieve a few more files, which takes additional time to load those files, process them and return the desired results.

The image below demonstrates iterative processing in MapReduce, showing the intermediate stable storage that Hadoop MapReduce requires, which adds overhead:

Iterative Operations on Spark RDDs

Resilient Distributed Datasets solve this problem with fault-tolerant, distributed in-memory processing: the data is kept in memory so that the user can re-query the same subset of data. The image below depicts how RDDs store intermediate outputs in distributed memory instead of stable storage, making execution faster than in Hadoop MapReduce:

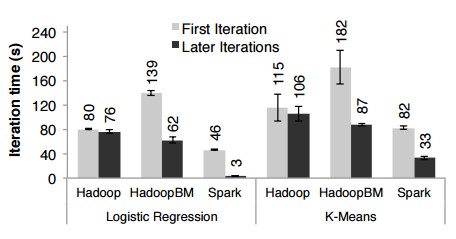

RDDs in Spark outperform Hadoop MapReduce by up to 20 times in iterative applications that query large volumes of data. The graph below shows the performance evaluation of iterative machine learning algorithms on Hadoop and Spark:

Image credit: original paper on RDDs by Matei Zaharia
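The difference between the two styles can be sketched on a tiny iterative job: gradient descent towards the mean of a few numbers. This is a single-machine illustration under loud assumptions: a local JSON file stands in for stable storage such as HDFS or S3, and the function names are hypothetical. The MapReduce-style loop re-reads its input on every iteration, while the RDD-style loop loads it once and iterates over the in-memory copy.

```python
import json
import os
import tempfile

points = [2.0, 4.0, 6.0, 8.0]
path = os.path.join(tempfile.mkdtemp(), "points.json")  # stand-in for stable storage
with open(path, "w") as fh:
    json.dump(points, fh)

reads = {"disk": 0}


def read_from_storage():
    reads["disk"] += 1
    with open(path) as fh:
        return json.load(fh)


def gradient_step(w, data, lr=0.1):
    # Gradient of the mean squared error mean((w - x)^2) is 2 * mean(w - x).
    grad = 2 * sum(w - x for x in data) / len(data)
    return w - lr * grad


# MapReduce style: every iteration is a separate job that re-reads its input.
w = 0.0
for _ in range(50):
    w = gradient_step(w, read_from_storage())
print(reads["disk"], round(w, 3))  # 50 5.0

# RDD style: load once, keep the data in memory, iterate over the in-memory copy.
reads["disk"] = 0
in_memory = read_from_storage()
w = 0.0
for _ in range(50):
    w = gradient_step(w, in_memory)
print(reads["disk"], round(w, 3))  # 1 5.0
```

Both loops reach the same answer; only the number of trips to storage differs, which is where the iterative speed-up comes from.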

Interactive Operations on MapReduce

In interactive data mining applications, users need to run multiple ad-hoc queries on the same subset of data. Hadoop MapReduce is inefficient for interactive ad-hoc queries because every query performs disk I/O on stable storage, which is likely to dominate the overall execution time of the interactive application.

Interactive Operations on Spark RDDs

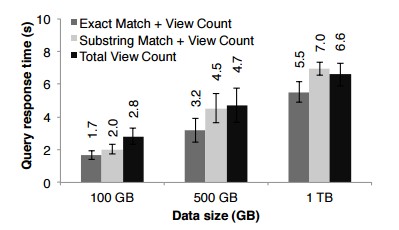

Apache Spark has been evaluated for interactive use by querying a 1 TB dataset with a latency of 5 to 7 seconds. Users can keep the same subset of data in memory and run different queries on it repeatedly, improving execution times. The image below depicts how interactive operations are performed in memory in Spark.

The graph below shows the performance evaluation of interactive applications on Hadoop and Spark:

Image credit: original paper on RDDs by Matei Zaharia
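Returning to the web server log example, the interactive pattern looks roughly like this when sketched in plain Python. The log lines are made-up sample data, and the list comprehension stands in for what would be `rdd.filter(...).cache()` in Spark: the error subset is extracted once, kept in memory, and then several ad-hoc queries run against it without rescanning the full log.

```python
import re
from collections import Counter

# Made-up sample log lines, for illustration only.
log_lines = [
    "GET /index.html 200",
    "GET /missing 404",
    "POST /api/login 500",
    "GET /missing 404",
    "GET /about 200",
]

ERROR = re.compile(r" (4|5)\d\d$")  # 4xx/5xx status code at the end of the line

# Extract the subset once and keep it in memory...
errors = [line for line in log_lines if ERROR.search(line)]

# ...then run several ad-hoc queries against the in-memory subset.
print(len(errors))                                          # 3
print(Counter(line.split()[-1] for line in errors))         # Counter({'404': 2, '500': 1})
print([l.split()[1] for l in errors if l.endswith("404")])  # ['/missing', '/missing']
```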

Summary of the Limitations of Hadoop MapReduce Compared with Spark RDDs

- Hadoop MapReduce uses coarse-grained operations that are too heavy for processing iterative algorithms.

- Hadoop MapReduce cannot cache intermediate data in-memory but instead flushes intermediate data to disk after every step.

Types of RDDs in Spark

- An RDD produced by operations such as map() and flatMap() is known as a MapPartitionsRDD.

- An RDD that provides functionality for reading data stored in HDFS is known as a HadoopRDD.

- An RDD produced by operations such as coalesce and repartition is known as a CoalescedRDD.

There are many other interesting types of RDDs in Spark like SequenceFileRDD, PipedRDD, CoGroupedRDD, and ShuffledRDD.
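To illustrate what coalescing does to partitions, here is a hypothetical pure-Python `coalesce` helper (not Spark's implementation). It merges adjacent partitions into fewer groups without moving individual records between groups, i.e. without a shuffle. Spark's real `rdd.coalesce(numPartitions)` also takes data locality into account, and `rdd.repartition(numPartitions)` performs a full shuffle instead.

```python
from typing import List, TypeVar

T = TypeVar("T")


def coalesce(partitions: List[List[T]], n: int) -> List[List[T]]:
    """Hypothetical sketch: merge adjacent partitions into at most n groups
    without redistributing individual records (no shuffle)."""
    if n < 1:
        raise ValueError("n must be >= 1")
    k = len(partitions)
    n = min(n, k)  # coalescing cannot increase the number of partitions
    merged = []
    for i in range(n):
        group = partitions[i * k // n:(i + 1) * k // n]
        merged.append([x for part in group for x in part])
    return merged


parts = [[1], [2, 3], [4], [5, 6], [7]]
print(coalesce(parts, 2))  # [[1, 2, 3], [4, 5, 6, 7]]
```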

RDDs bring many benefits to the Spark ecosystem and are best suited for batch analytics applications, because the lineage graph holds all the information needed to reconstruct lost data in parallel on different nodes after a failure. RDDs have many advantages, but they cannot be used for every kind of application: they are not well suited to applications that make asynchronous, fine-grained updates to shared state, such as a storage system for a web application or an incremental web crawler.

About the Author

ProjectPro

ProjectPro is the only online platform designed to help professionals gain practical, hands-on experience in big data, data engineering, data science, and machine learning related technologies. Having over 270+ reusable project templates in data science and big data with step-by-step walkthroughs,