In this PySpark project, you will simulate a complex real-world data pipeline based on messaging. This project is deployed using the following tech stack - NiFi, PySpark, Hive, HDFS, Kafka, Airflow, Tableau and AWS QuickSight.

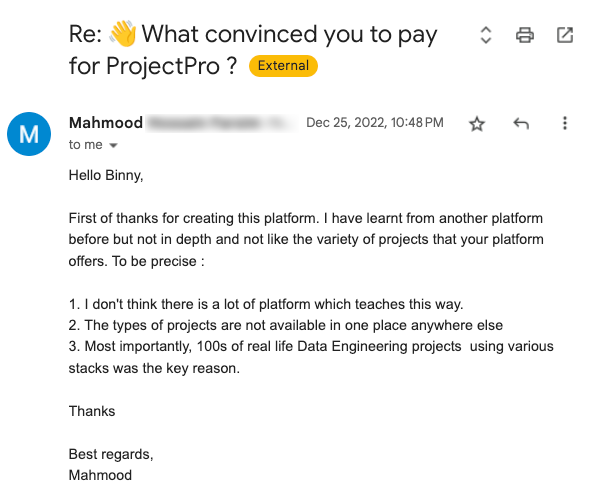

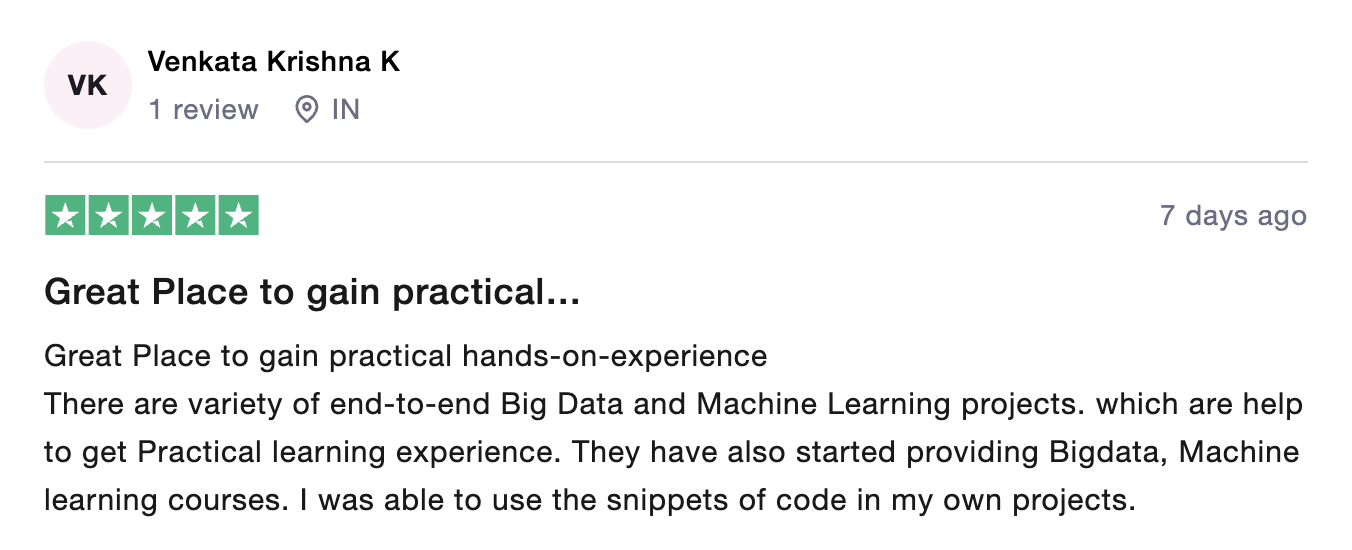

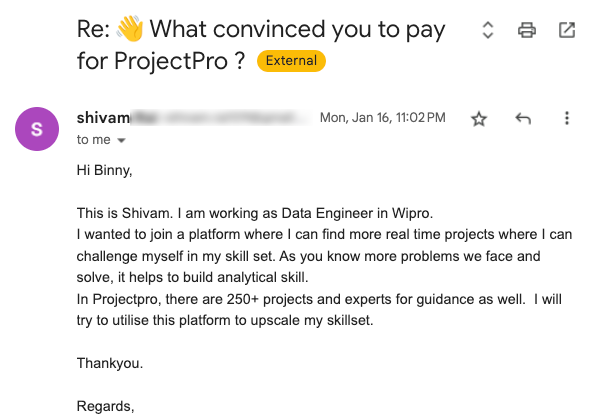

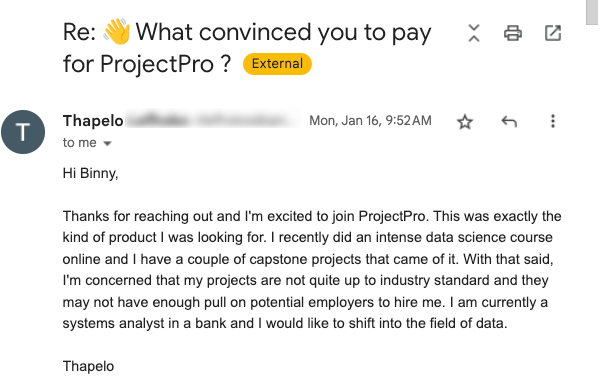

250+ end-to-end project solutions

Each project solves a real business problem from start to finish. These projects cover the domains of Data Science, Machine Learning, Data Engineering, Big Data and Cloud.

15 new projects added every month

New projects are added every month to help you stay up to date with the latest tools and techniques.

500,000 lines of code

Each project comes with verified and tested solutions including code, queries, configuration files, and scripts. Download and reuse them.

600+ hours of videos

Cloud Lab Workspace

Unlimited 1:1 sessions

Technical Support

Chat with our technical experts to solve any issues you face while building your projects.

7 Days risk-free trial

We offer an unconditional 7-day money-back guarantee. Use the product for 7 days and, if you don't like it, we will issue a full refund. No terms or conditions.

Payment Options

0% interest monthly payment schemes available for all countries.

PySpark is the Python API for Apache Spark, created so that Python programs can use Spark's distributed engine. It lets you work with Resilient Distributed Datasets (RDDs) from Python. Under the hood, PySpark relies on Py4J, a library that allows Python code to dynamically communicate with JVM objects. PySpark also includes a number of libraries that can assist you in writing efficient programs.

PySpark is a useful tool for data scientists since it simplifies the process of turning prototype models into production-ready model workflows. Model workflows for model training and serving can be created with PySpark in cluster environments. PySpark can be used for exploratory data analysis and developing machine learning pipelines, which is important in a data science workflow.

Apache Hive is a data warehouse framework that can process huge amounts of data. The datasets are typically stored in the Hadoop Distributed File System (HDFS) or in other compatible storage systems. Hive is built on top of Hadoop and provides a framework for reading, writing, and managing data. The query language used for querying and analytics in Apache Hive is HiveQL (HQL).

Hive is designed for batch transformations and large analytical queries, with limited support for writes and interactive use. It appeals to people with an RDBMS background because it lets them map HDFS files to Hive tables and query the data with familiar SQL-like syntax. HBase tables can also be mapped to Hive tables so that Hive can process their data.

In this Big Data project, a senior Big Data Architect will demonstrate how to implement a Big Data pipeline on AWS at scale. You will be using the Covid-19 dataset. This will be streamed in real-time from an external API using NiFi. The complex JSON data will be parsed into CSV format using NiFi and the result will be stored in HDFS.

Then this data will be sent to Kafka for data processing using PySpark. The processed data will then be consumed from Spark and stored in HDFS. Then a Hive external table is created on top of HDFS. Finally the cleaned, transformed data is stored in the data lake and deployed. Visualization is then done using Tableau and AWS QuickSight.

This PySpark pipeline project involves working on the Covid-19 dataset. The dataset includes the total number of confirmed cases, the total number of recovered cases, the total number of deaths, country name, country code, etc.
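The JSON-to-CSV step mentioned earlier is handled by NiFi in the project itself. Purely as an illustration of what that flattening does, here is a minimal plain-Python sketch. The `Countries` key and the column names in `FIELDS` are assumptions modelled on the fields listed above, not the API's confirmed schema, and the sample record holds made-up placeholder values.

```python
import csv
import io
import json

# Hypothetical column names, modelled on the fields described above.
FIELDS = ["Country", "CountryCode", "TotalConfirmed", "TotalRecovered", "TotalDeaths"]


def json_to_csv(payload: str) -> str:
    """Flatten a JSON document holding a 'Countries' array into CSV text."""
    records = json.loads(payload).get("Countries", [])
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS, lineterminator="\n")
    writer.writeheader()
    for record in records:
        # Keep only the columns of interest; missing keys become empty cells.
        writer.writerow({name: record.get(name, "") for name in FIELDS})
    return buffer.getvalue()


# Made-up placeholder record, not real statistics.
sample = json.dumps({
    "Countries": [
        {"Country": "Examplia", "CountryCode": "ex", "Slug": "examplia",
         "TotalConfirmed": 10, "TotalRecovered": 7, "TotalDeaths": 1}
    ]
})
csv_text = json_to_csv(sample)
```

Extra keys in the input (such as `Slug` in the sample) are dropped, which mirrors selecting only the needed attributes before the data is written to HDFS.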

Apache NiFi is a data logistics platform that automates the transfer of data across different systems. It provides real-time control, making data transfer between any source and any target simple to monitor. To build a data pipeline using Spark in this project, you first need to extract the data using NiFi. After the data has been successfully extracted, the next step is to mask certain information (the country code) to ensure data security. This is done by applying hashing algorithms to the data. Note that hashing is one-way, so strictly speaking it masks the values rather than encrypting them. You must also ensure that all of the masked data is in uppercase format so that the values are handled consistently.
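The masking step can be sketched in plain Python with the standard `hashlib` module. This is only an illustration of the idea, not the NiFi configuration used in the project. The function name `mask_country_code` is hypothetical, SHA-256 is an assumed default (the source only says "various hashing algorithms"), and uppercasing both the input and the resulting digest is one assumed reading of the uppercase requirement.

```python
import hashlib


def mask_country_code(code: str, algorithm: str = "sha256") -> str:
    """Return an uppercase hex digest of a normalised country code."""
    # Normalise first so that "in", "IN" and " In " all hash identically.
    normalized = code.strip().upper()
    digest = hashlib.new(algorithm, normalized.encode("utf-8")).hexdigest()
    # Assumption: the masked value itself is also stored in uppercase.
    return digest.upper()


masked = mask_country_code("in")
```

Because the input is normalised before hashing, the same country always maps to the same masked value, which keeps joins and group-bys on the masked column working downstream.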

Apache Kafka is a pub-sub (publish-subscribe) messaging system and a powerful queue that can manage a large volume of data and lets you send messages from one endpoint to another. Kafka supports both offline (batch) and online (real-time) message consumption. To avoid data loss, Kafka messages are persisted on disk and replicated across the cluster. Kafka has traditionally relied on the ZooKeeper synchronization service for cluster coordination. For real-time streaming data processing, it works well with Apache Storm and Spark. This data engineering project entails publishing the real-time streaming data into Kafka using NiFi's PublishKafka processor. Once the data is stored in a Kafka topic, it needs to be streamed into PySpark for further processing.

PySpark is the Python API for Spark, used to run Python programs that employ Apache Spark capabilities. PySpark is widely used in data science and machine learning because many popular libraries in those fields, such as NumPy and TensorFlow, are used from Python. It is also popular because it can process very large datasets quickly. The next step of this PySpark pipeline project is to read the streaming data from the Kafka topic and perform some operations on it using PySpark. Once the data has been processed, it is streamed into the output Kafka topic.
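A Kafka-to-Kafka step of this kind could look roughly like the following Structured Streaming sketch. It is not the project's actual code: the helper names, topic names, and the trivial "trim and drop empty lines" operation are assumptions chosen for illustration. It also assumes that `pyspark` is installed and that Spark's Kafka connector package (`spark-sql-kafka-0-10`) is available to the session.

```python
def kafka_options(bootstrap_servers: str, topic: str, *, sink: bool = False) -> dict:
    """Build the options Spark's Kafka source ('subscribe') or sink ('topic') expects."""
    key = "topic" if sink else "subscribe"
    return {"kafka.bootstrap.servers": bootstrap_servers, key: topic}


def start_stream(spark, bootstrap_servers, in_topic, out_topic, checkpoint_dir):
    """Read CSV lines from one Kafka topic, clean them, and write to another."""
    # Imported lazily so the helper above can be used without pyspark installed.
    from pyspark.sql import functions as F

    raw = (
        spark.readStream.format("kafka")
        .options(**kafka_options(bootstrap_servers, in_topic))
        .load()
    )
    # Kafka delivers the payload as bytes; cast it to a string (one CSV line).
    lines = raw.select(F.col("value").cast("string").alias("line"))
    # Placeholder operation: trim whitespace and drop empty lines.
    cleaned = (
        lines.where(F.length(F.trim(F.col("line"))) > 0)
        .select(F.trim(F.col("line")).alias("value"))  # the Kafka sink needs a 'value' column
    )
    return (
        cleaned.writeStream.format("kafka")
        .options(**kafka_options(bootstrap_servers, out_topic, sink=True))
        .option("checkpointLocation", checkpoint_dir)  # required by the Kafka sink
        .start()
    )
```

A call such as `start_stream(spark, "localhost:9092", "covid_in", "covid_out", "/tmp/covid_checkpoint")` would return a streaming query handle; the broker address, topics, and checkpoint path here are placeholders.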

Apache Hive is a fault-tolerant distributed data warehouse that enables analytics at massive scale. Hive users can read, write, and manage huge amounts of data using SQL. Hive is built on top of Apache Hadoop, an open-source platform for storing and processing large amounts of data. As a result, Hive is closely tied to Hadoop and is designed to work efficiently on very large datasets, up to petabytes in size. Hive is characterized by its ability to query large datasets through a SQL-like interface, executing on Apache Tez or MapReduce. In this project, once the data is stored in HDFS, an external table is created using Hive on top of HDFS so that the stored data can be queried.
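As a rough illustration of the external-table step, the sketch below builds a HiveQL `CREATE EXTERNAL TABLE` statement in Python. The table name, column names, types, and HDFS path are all hypothetical, and the comma-delimited text format is an assumption based on the CSV output described earlier.

```python
def external_table_ddl(table: str, location: str, columns: dict) -> str:
    """Build a HiveQL statement for an external table over delimited text files."""
    cols = ",\n  ".join(f"`{name}` {dtype}" for name, dtype in columns.items())
    return (
        f"CREATE EXTERNAL TABLE IF NOT EXISTS {table} (\n  {cols}\n)\n"
        "ROW FORMAT DELIMITED FIELDS TERMINATED BY ','\n"
        "STORED AS TEXTFILE\n"
        f"LOCATION '{location}'"
    )


# Hypothetical schema and path, for illustration only.
ddl = external_table_ddl(
    "covid_cases",
    "/data/covid/processed",
    {
        "country": "STRING",
        "country_code": "STRING",
        "total_confirmed": "BIGINT",
        "total_recovered": "BIGINT",
        "total_deaths": "BIGINT",
    },
)
```

The resulting string can be run from the Hive CLI or Beeline, or passed to `spark.sql(ddl)` on a SparkSession created with Hive support enabled. Because the table is external, dropping it removes only the metadata and leaves the files in HDFS untouched.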

Amazon QuickSight is a cloud-based business intelligence (BI) tool that is scalable, serverless, embeddable, and powered by machine learning. Businesses can use Amazon QuickSight to build and analyze data visualizations and extract easy-to-understand insights that help them make better business decisions. QuickSight allows you to easily integrate interactive dashboards into various apps, platforms, and websites. This PySpark Hive project involves creating multiple dashboards using bar graphs, pie charts, scatter plots, etc. The dashboards depict data such as the average of total confirmed cases, the average of total recovered cases, the average of total deaths, etc.
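The per-country averages shown on such dashboards are simple group-and-average aggregations. In the project they would come from the BI tool or a Hive query; the plain-Python sketch below only illustrates the calculation. The function name, column names, and row values are made-up placeholders.

```python
from collections import defaultdict
from statistics import mean


def average_by_country(rows, metric):
    """Group rows by country and average one numeric column."""
    grouped = defaultdict(list)
    for row in rows:
        grouped[row["country"]].append(row[metric])
    return {country: mean(values) for country, values in grouped.items()}


# Made-up placeholder rows, not real statistics.
rows = [
    {"country": "A", "total_confirmed": 10, "total_deaths": 1},
    {"country": "A", "total_confirmed": 20, "total_deaths": 3},
    {"country": "B", "total_confirmed": 5, "total_deaths": 0},
]
averages = average_by_country(rows, "total_confirmed")
```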

Tableau is a visual analytics tool capable of managing a company's full data landscape. It focuses on providing engaging data graphics, with an emphasis on business scenarios. Tableau offers a variety of baseline visualizations, including line charts and heat maps. Users do not need specialized coding expertise to create and access advanced visualizations, and they can include as many data points as they want during analysis. Tableau also offers low-cost or free options for non-profit and academic use. In this Spark pipeline project, Tableau is used for data visualization with the help of an area chart, bar graph, bubble chart, etc. The various dashboards show country-wise analysis such as the average of total confirmed cases, the average of total deaths, etc.

A pipeline in Apache Spark (in the spark.ml library) is an object that chains transform and fit steps into a single workflow that can be trained and evaluated as a unit. A pipeline is made up of several stages, each of which is either an Estimator or a Transformer.
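To make the Estimator and Transformer distinction concrete, here is a minimal `pyspark.ml` sketch. It is not part of the project described above, which focuses on data movement rather than model training. The column names and the choice of a linear regression are assumptions, and the functions need `pyspark` installed and an active SparkSession.

```python
# Hypothetical column names, for illustration only.
FEATURE_COLS = ["total_confirmed", "total_recovered"]
LABEL_COL = "total_deaths"


def build_pipeline():
    """Two-stage pipeline: a Transformer followed by an Estimator."""
    # Imported lazily; requires pyspark and an active SparkSession.
    from pyspark.ml import Pipeline
    from pyspark.ml.feature import VectorAssembler
    from pyspark.ml.regression import LinearRegression

    # Transformer: packs the feature columns into a single vector column.
    assembler = VectorAssembler(inputCols=FEATURE_COLS, outputCol="features")
    # Estimator: learns a model from the data when fit() is called.
    regressor = LinearRegression(featuresCol="features", labelCol=LABEL_COL)
    return Pipeline(stages=[assembler, regressor])


def fit_and_score(train_df, test_df):
    """Fit the pipeline on one DataFrame and apply it to another."""
    model = build_pipeline().fit(train_df)  # fit() returns a PipelineModel
    return model.transform(test_df)         # adds a 'prediction' column
```

Calling `fit()` on the pipeline runs the stages in order and returns a fitted `PipelineModel`, which is itself a Transformer and can be applied to new data with `transform()`.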

Apache Spark is a popular and effective framework for ETL: it is used for processing, querying, and analyzing large amounts of data. By setting up a cluster of several nodes, you can load and process datasets that are too large for a single machine.
