Is it necessary to learn Hadoop to become a Data Scientist?

Understand why it helps to learn Hadoop for data science, even though learning Hadoop is not a necessity.

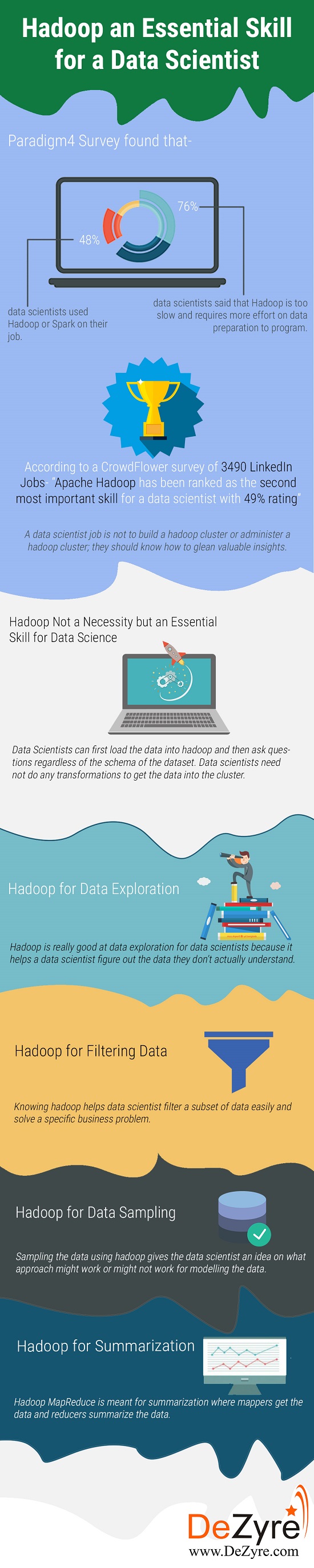

A study of more than 100 data scientists by Paradigm4 found that only 48% of them used Hadoop or Spark in their jobs, while 76% said that Hadoop is too slow and requires too much effort to program and to prepare data. In contrast, a recent analysis by CrowdFlower of 3490 LinkedIn data science jobs ranked Apache Hadoop as the second most important skill for a data scientist, with a 49% rating. With so many definitions circulating on the web about who a data scientist is and what skills they must possess, many aspiring data scientists ask the same question: is it necessary to learn Hadoop to become a data scientist? This article helps you understand whether learning Hadoop is mandatory for a career in data science.

A data scientist’s job is not to build or administer a Hadoop cluster; it is to glean valuable insights from data regardless of where the data comes from. Data scientists draw on several technical skills, such as Hadoop, NoSQL, Python, Spark, R, Java and more. However, finding a unicorn data scientist with all of these skills is extremely difficult, as most of them “pick up” some of the skills on the job. Apache Hadoop is a prevalent technology and an essential skill for a data scientist, but it is definitely not the golden hammer.


Every data scientist must know how to get the data out in the first place in order to analyze it, and Hadoop is the technology that actually stores the large volumes of data that data scientists work on. There are many definitions of the skills a data scientist should have, and no single one is universally agreed upon. For some, a data scientist should be able to manage data using Hadoop and also run statistics against the data set. For others, a data scientist should be able to ask the right questions and keep asking them until the analysis reveals what is actually needed.


Why should a data scientist use Hadoop for Data Science?

Suppose a data analysis job takes 20 minutes to execute. For the same job and the same data size, doubling the number of computers would ideally cut the run time to 10 minutes. This might not seem significant at a small scale, but at a large scale it definitely matters. Hadoop helps achieve near-linear scalability through hardware: a data scientist who wants to speed up data exploration can add more machines to the cluster.
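The arithmetic behind this claim can be sketched in a few lines of Python. This is a minimal illustration of ideal linear scaling only; the function name and the starting cluster size of 4 nodes are assumptions made for the example, and real clusters usually fall somewhat short of this ideal because of coordination and data-transfer overhead.

```python
def ideal_runtime_minutes(base_minutes: float, base_nodes: int, nodes: int) -> float:
    """Run time under ideal linear scaling.

    The total amount of work is fixed, so the run time is inversely
    proportional to the number of nodes.
    """
    if base_nodes <= 0 or nodes <= 0:
        raise ValueError("node counts must be positive")
    return base_minutes * base_nodes / nodes


# The 20-minute job from the text, assumed (hypothetically) to run on 4 nodes.
for n in (4, 8, 16):
    print(f"{n} nodes: {ideal_runtime_minutes(20, 4, n)} minutes")
```

Doubling the node count from 4 to 8 halves the ideal run time, which matches the 20-minute to 10-minute example above.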

Data scientists can first load the data into Hadoop and then ask questions of it, regardless of the schema of the dataset. No upfront transformations are needed to get the data into the cluster.
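The idea of loading first and applying structure later can be illustrated without a cluster. The sketch below is plain Python, not Hadoop code: `raw_store` stands in for files stored as-is, and `read_with_schema` is a hypothetical helper that applies a chosen set of fields only at read time.

```python
import json
from typing import Dict, Iterable, Iterator, List, Optional

# Raw lines stored exactly as they arrived; no schema was enforced on load.
raw_store = [
    '{"user": "alice", "clicks": 3}',
    '{"user": "bob", "clicks": 5, "country": "DE"}',
    "not json at all",
]


def read_with_schema(
    raw_lines: Iterable[str], fields: List[str]
) -> Iterator[Dict[str, Optional[object]]]:
    """Apply a schema at read time: keep only the requested fields."""
    for line in raw_lines:
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # unreadable line: skipped at read time, not at load time
        if not isinstance(record, dict):
            continue
        # Fields missing from a record are filled with None.
        yield {field: record.get(field) for field in fields}


rows = list(read_with_schema(raw_store, ["user", "country"]))
print(rows)
```

A different question can be asked later simply by passing a different list of fields, without reloading the data.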

Lastly, and most importantly, a data scientist need not be a master of distributed systems to work with Hadoop for data science. There is no need to get into things like inter-process communication, message passing or network programming. Hadoop provides transparent parallelism: data scientists just have to write Java-based MapReduce code or use other big data tools on top of Hadoop, such as Pig and Hive.


Hadoop for Data Science – An important tool for Data Scientists.

Hadoop is an important tool for data science when the volume of data exceeds the system memory or when the business case requires data to be distributed across multiple servers. Under these circumstances, Hadoop comes to the rescue of data scientists by helping them move data to the different nodes of a cluster quickly.


Hadoop for Data Exploration

80% of a data scientist’s time is spent on data preparation, and data exploration plays a vital role in it. Hadoop is well suited to data exploration because it helps a data scientist figure out the complexities in data they do not yet understand. Hadoop allows data scientists to store the data as is, without understanding it first, and that is the whole concept of data exploration. The data scientist does not need to understand the data upfront when dealing with it from a “lots of data” perspective.


Hadoop for Filtering Data

Data scientists rarely build a machine learning model or a classifier on the entire dataset. They need to filter the data based on business requirements. Data scientists might want to look at records in their raw form, but only a few of those records might be relevant. While filtering, data scientists also pick up on rotten or dirty records that are useless. Knowing Hadoop helps a data scientist filter out a subset of the data easily and solve a specific business problem.
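As a rough illustration of this kind of filtering, the sketch below keeps only the well-formed records for one business segment and drops dirty rows. It is plain Python rather than Hadoop code, and the record layout (`customer,region,amount`), the sample rows and the `filter_records` helper are all assumptions made for the example.

```python
from typing import Dict, Iterable, Iterator, Union


def filter_records(
    lines: Iterable[str], region: str
) -> Iterator[Dict[str, Union[str, float]]]:
    """Yield well-formed records for one region and skip dirty rows."""
    for line in lines:
        parts = line.strip().split(",")
        if len(parts) != 3:
            continue  # wrong number of fields: dirty row
        customer, record_region, amount = parts
        try:
            value = float(amount)
        except ValueError:
            continue  # amount is not numeric: dirty row
        if record_region == region:
            yield {"customer": customer, "region": record_region, "amount": value}


raw = [
    "alice,EU,10.5",
    "bob,US,7.0",
    "broken row",
    "carol,EU,not_a_number",
    "dave,EU,3.25",
]
eu_records = list(filter_records(raw, "EU"))
print(eu_records)
```

On a cluster, the same per-record logic would typically live in a map step or a Pig or Hive filter expression, so that each node filters its own share of the data.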

Hadoop for Data Sampling

A data scientist cannot simply build a model from the first 1000 records of a dataset, because of the way data is usually written: similar kinds of records might be grouped together. Without sampling the data, a data scientist cannot get a good view of what is in the data as a whole. Sampling the data using Hadoop gives the data scientist an idea of which approaches might or might not work for modelling the data. Apache Pig has a handy SAMPLE operator that helps trim down the number of records.
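The problem with taking the first 1000 records can be demonstrated on a toy dataset. The sketch below uses plain Python rather than Pig, with made-up data in which all "A" records are written before all "B" records, and compares the head of the file with a uniform random sample drawn by reservoir sampling (Algorithm R). The function name and the fixed seed are assumptions for the example.

```python
import random
from typing import Iterable, List, TypeVar

T = TypeVar("T")


def reservoir_sample(stream: Iterable[T], k: int, seed: int = 0) -> List[T]:
    """Draw k items uniformly at random from a stream in a single pass."""
    rng = random.Random(seed)  # fixed seed so the example is repeatable
    sample: List[T] = []
    for i, item in enumerate(stream):
        if i < k:
            sample.append(item)  # fill the reservoir first
        else:
            j = rng.randint(0, i)  # inclusive on both ends
            if j < k:
                sample[j] = item  # replace with probability k / (i + 1)
    return sample


# Toy dataset in which similar records are grouped together.
records = ["A"] * 5000 + ["B"] * 5000

first_1000 = records[:1000]
sampled = reservoir_sample(records, 1000)

print("first 1000 contain:", set(first_1000))
print("random sample:", sampled.count("A"), "A and", sampled.count("B"), "B")
```

The head of the file contains only "A" records, while the random sample contains both kinds in roughly equal proportion, which is a far better basis for choosing a modelling approach.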

Hadoop for Summarization

Summarizing the data as a whole using Hadoop MapReduce gives data scientists a bird’s-eye view of the data, which helps them build better models. Hadoop MapReduce is well suited to summarization: mappers read the data and reducers summarize it.
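The mapper and reducer roles can be sketched in plain Python on a single machine. This is not Hadoop code and does not use any Hadoop API; the `mapper`, `shuffle` and `reducer` functions and the `customer,region,amount` record layout are assumptions made for illustration, with `shuffle` standing in for the grouping step that the framework performs between the two phases.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple


def mapper(line: str) -> Iterator[Tuple[str, float]]:
    """Map phase: turn one input record into (key, value) pairs."""
    _customer, region, amount = line.split(",")
    yield region, float(amount)


def shuffle(pairs: Iterable[Tuple[str, float]]) -> Dict[str, List[float]]:
    """Group values by key, as the framework does between map and reduce."""
    groups: Dict[str, List[float]] = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reducer(key: str, values: List[float]) -> Tuple[str, Dict[str, float]]:
    """Reduce phase: summarize all values that share a key."""
    total = sum(values)
    return key, {"count": len(values), "total": total, "mean": total / len(values)}


lines = ["alice,EU,10.0", "bob,US,7.0", "dave,EU,4.0"]
pairs = (pair for line in lines for pair in mapper(line))
summary = dict(reducer(key, values) for key, values in shuffle(pairs).items())
print(summary)
```

On a real cluster the mappers would run in parallel on different blocks of the input and the reducers on different keys, but the summary they produce has the same shape.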

Hadoop is widely used in the most important part of the data science process (data preparation), but it is not the only big data tool that can manage and manipulate voluminous data. It is good for a data scientist to be familiar with concepts like distributed systems, Hadoop MapReduce, Pig and Hive, but a data scientist cannot be judged merely on knowledge of these subjects. Data science is a multi-disciplinary field, and the zeal and motivation to learn can help you become exceptionally good at your job.


A data scientist can take the help of a data engineer to handle, say, 1000 structured CSV files containing the required data, and can then focus on delivering valuable insights from the data without having to spread the computation across several machines. The data scientist can concentrate on developing machine learning models, analysing them using visualization, combining the data with external data points, and so on. A data scientist might still have to use Hadoop to test the developed model and apply it to the entire dataset. So knowing Hadoop does not make someone a data scientist, and at the same time not knowing Hadoop does not debar anyone from becoming one.

Hadoop an Essential Skill for a Data Scientist but not a Necessity.

Many novel business analytics use cases require powerful algorithms and computational resources, and at times these might not be achievable using Hadoop. Under such circumstances, data scientists have to look for new ways to leverage the organization’s big data through other promising alternatives to Hadoop, like Apache Storm or Apache Spark.

Apache Hadoop is ideal for parallel problems, but at times it misses the mark for complex analytics involving machine learning, principal component analysis, and the like. Hadoop might be unworkable for data science when there are millions of products and customers for recommendations, when genetic sequencing data has to be processed on huge arrays, or when the business use case requires gleaning real-time insights from sensor or graph data through powerful noise reduction algorithms. Hadoop has been regarded as a disruptive big data solution, but it is not always suitable for analytics tasks that require sharing the entire dataset at once and communicating intermediate results to other processes.


22% of data scientists (nearly a quarter) say that, with the increasing number of diverse data sources, their job has become more difficult over time even with the use of Hadoop, as some of Hadoop’s shortcomings are a major roadblock to performing complex analytics.

Takeaway

Data scientists will have to interface with Hadoop technology, and in rare cases they might be required to wear the dual hat of a Hadoop developer and a data scientist. So, if you want to become a data scientist, learning Hadoop is a useful way to speed up the process. However, not knowing Hadoop will in no way disqualify you as a data scientist. To become a data scientist, you can learn data science programming tools like Python and R and run your analytics on a subset of the data, even without an in-depth working knowledge of the Hadoop framework.

About the Author

ProjectPro

ProjectPro is the only online platform designed to help professionals gain practical, hands-on experience in big data, data engineering, data science, and machine learning related technologies. Having over 270+ reusable project templates in data science and big data with step-by-step walkthroughs,