Zookeeper and Oozie: Hadoop Workflow and Cluster Managers

Zookeeper is a tool that coordinates distributed applications, and Oozie is a Java-based web application used for job scheduling.

We generate petabytes of data every day, and that data is processed by farms of servers distributed around the globe. With big data seeping into every facet of our lives, we are trying to build robust systems that can process petabytes of data quickly and accurately. Apache Hadoop, an open source framework, is widely used for processing gigantic amounts of unstructured data on commodity hardware. Four core modules form the Hadoop ecosystem: Hadoop Common, HDFS, YARN and MapReduce. Other components can run alongside these modules, and Zookeeper and Oozie are among the most widely used Hadoop admin tools. Hadoop needs a workflow and cluster manager, a job scheduler and a job tracker to keep jobs running smoothly, and Apache Zookeeper and Oozie fill these roles.

We have heard a lot about distributed systems and their processing power. Hadoop’s main advantage is its ability to harness that power. A distributed system, in simple terms, is a system comprising various software components that run independently and concurrently on multiple physical machines. Distributed systems bring many challenges, such as message delays, differences in processor speed, system failures, master detection, crash detection and metadata management. All of these challenges make distributed systems fault-prone. A perfect solution has not yet been achieved, but Zookeeper provides a simple framework for dealing with these problems.

What is Apache Zookeeper?

Apache Zookeeper is a coordination service for distributed applications. It exposes simple services such as naming, synchronization, configuration management and group services, so developers do not have to program them from scratch. It also provides off-the-shelf support for queuing and leader election.

What is the Data Model for Apache Zookeeper?

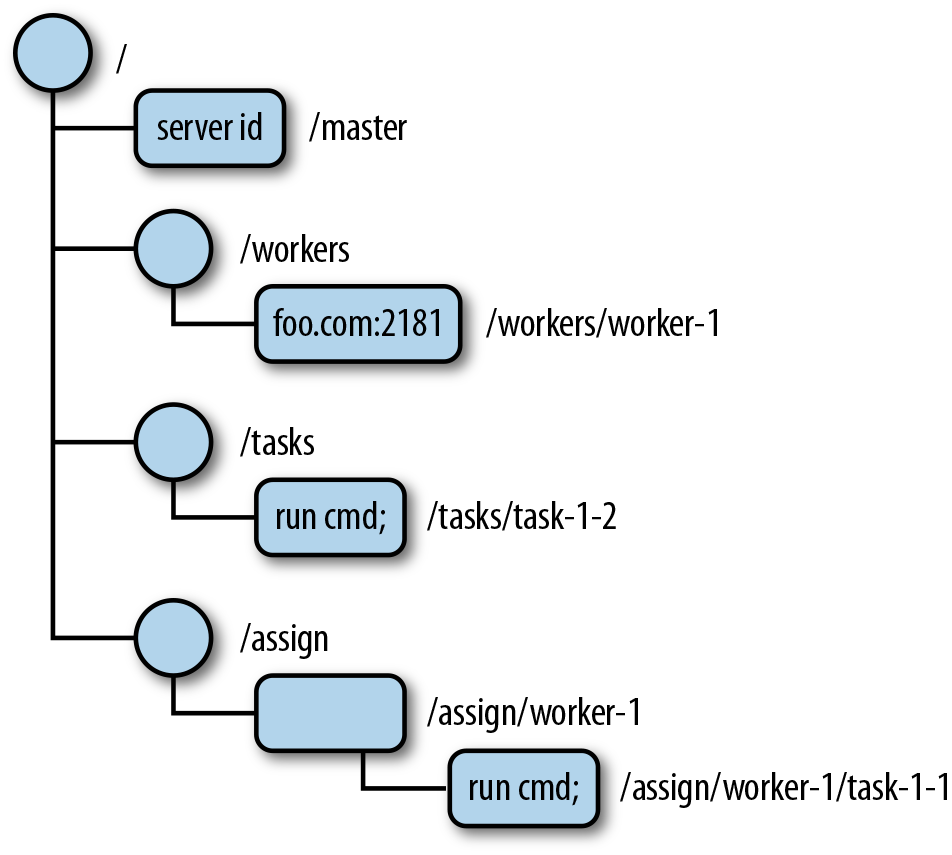

Apache Zookeeper has a file system-like data model whose nodes are referred to as “ZNodes”. Just as a file system has directories, ZNodes form a hierarchy, and each one can also have data associated with it. A ZNode is referenced through an absolute path whose elements are separated by slashes. Each server in the ensemble stores the ZNode hierarchy in memory, which keeps responses fast and scalable. Each server also maintains a transaction log on disk that records every ‘write’ request. Before a response is sent to the client, the transaction has to be synchronized on a quorum of the servers, which makes writes performance critical. Although the data model looks like a file system, Zookeeper should not be used as a general purpose file system. It should store only small amounts of data, so that it stays reliable, fast, scalable and available while coordinating the distributed application.

Image Credit : ebook -Zookeeper-Distributed Process Coordination from O'Reilly
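The path-based data model described above can be illustrated with a small in-memory sketch. This is a toy model written for this article, not Zookeeper's client API; the class name `ZNodeTree` and its methods are hypothetical.

```python
class ZNodeTree:
    """Toy in-memory tree keyed by absolute, slash-separated paths.

    Hypothetical illustration only: this is not the Zookeeper API.
    """

    def __init__(self):
        # The root node always exists; here it carries no data.
        self._data = {"/": b""}

    def create(self, path, data=b""):
        if not path.startswith("/") or path.endswith("/"):
            raise ValueError("path must be absolute, e.g. /app/config")
        # A node can only be created under an existing parent.
        parent = path.rsplit("/", 1)[0] or "/"
        if parent not in self._data:
            raise KeyError(f"parent {parent} does not exist")
        if path in self._data:
            raise KeyError(f"{path} already exists")
        self._data[path] = data

    def get(self, path):
        return self._data[path]

    def children(self, path):
        # Direct children only: names with no further slash after the prefix.
        prefix = path if path.endswith("/") else path + "/"
        return sorted(
            p[len(prefix):]
            for p in self._data
            if p != "/" and p.startswith(prefix) and "/" not in p[len(prefix):]
        )


tree = ZNodeTree()
tree.create("/app")
tree.create("/app/config", b"timeout=30")
```

Unlike a plain directory, `/app` here could hold data of its own as well as children, which is the property the text attributes to ZNodes.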

How does Apache Zookeeper work?

The architecture of Zookeeper is based on a simple client-server model. The clients are the nodes that request a service, and the servers are the nodes that serve those requests. Multiple Zookeeper servers form an ensemble, and when the service starts, one of these servers is elected leader. Each client connects to only one server, and all of its reads are served by that server. When a ‘write’ request reaches a server, it is first forwarded to the leader, which then asks the quorum. A quorum is a strict majority of the servers in the ensemble, and it decides on the request. The ‘write’ request is considered successful if the quorum responds positively. This is why a ‘write’ request takes more time than a ‘read’ request, and why Zookeeper is best suited to distributed systems with many ‘read’ requests and comparatively few ‘write’ requests.

The beauty of Zookeeper is that it does not restrict how many servers you run. If you want to run a single server, Zookeeper will let you, but the service will not be highly available or reliable.
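The strict-majority rule can be worked through with a little arithmetic. The sketch below only illustrates the rule as stated above; the function names are made up for this example.

```python
def quorum_size(ensemble_size):
    """Smallest strict majority of an ensemble."""
    return ensemble_size // 2 + 1


def tolerated_failures(ensemble_size):
    """Servers that can fail while a quorum can still be formed."""
    return ensemble_size - quorum_size(ensemble_size)


def write_succeeds(acks, ensemble_size):
    """A write counts as successful once a quorum has acknowledged it."""
    return acks >= quorum_size(ensemble_size)


for n in (1, 3, 4, 5):
    print(n, quorum_size(n), tolerated_failures(n))
```

A single server forms its own quorum but tolerates no failures, which is why a one-server setup is not highly available. Under this rule, four servers tolerate no more failures than three.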

What is ZNode?

A ZNode can be thought of as a directory in a file system. The only difference is that ZNodes can store data as well. Each ZNode also maintains a stat structure that includes version numbers for data changes. Data is read and written in its entirety, as Zookeeper does not allow partial ‘read’ or ‘write’ operations.
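One way to picture the version number is as a guard on updates: a writer states which version it last saw, and the write is rejected if the data has changed since. The sketch below is a toy model with a hypothetical `VersionedNode` class, not Zookeeper's implementation. It borrows one convention from Zookeeper's `setData` call, where a version of -1 matches any version.

```python
class VersionedNode:
    """Toy node whose data is always replaced as a whole (hypothetical)."""

    def __init__(self, data=b""):
        self.data = data
        self.version = 0

    def set(self, data, expected_version=-1):
        # -1 means "do not check the version".
        if expected_version != -1 and expected_version != self.version:
            raise RuntimeError(
                f"version mismatch: expected {expected_version}, "
                f"node is at {self.version}"
            )
        self.data = data      # whole-value replacement, never a partial write
        self.version += 1     # every data change bumps the version
        return self.version


node = VersionedNode(b"leader=host-a")
node.set(b"leader=host-b", expected_version=0)  # succeeds, version becomes 1
```

A second writer that still believes the node is at version 0 would now get an error instead of silently overwriting the newer value.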

Types of ZNodes

- Persistent ZNodes: This is the default ZNode type in Zookeeper. A persistent ZNode can be deleted only by calling Zookeeper’s delete functionality.

- Ephemeral ZNodes: An ephemeral ZNode is deleted as soon as the session that created it is terminated or the client closes the connection.

- Sequential ZNodes: A 10-digit sequence number is appended to the end of the ZNode’s name.
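The three behaviors can be sketched in a few lines. This is a simplified toy model: `ToyStore`, its single global counter and the string session IDs are assumptions made for illustration, and the sequence counter in a real deployment may be scoped differently.

```python
class ToyStore:
    """Toy store showing persistent, ephemeral and sequential nodes."""

    def __init__(self):
        self.nodes = {}      # path -> owning session, or None if persistent
        self._next_seq = 0   # simplification: one counter for the whole store

    def create(self, path, session=None, ephemeral=False, sequential=False):
        if ephemeral and session is None:
            raise ValueError("an ephemeral node needs an owning session")
        if sequential:
            # Append a zero-padded 10-digit sequence number to the name.
            path = f"{path}{self._next_seq:010d}"
            self._next_seq += 1
        self.nodes[path] = session if ephemeral else None
        return path

    def close_session(self, session):
        # Ephemeral nodes disappear with the session that created them.
        expired = [
            p for p, owner in self.nodes.items()
            if owner is not None and owner == session
        ]
        for p in expired:
            del self.nodes[p]


store = ToyStore()
store.create("/config")                       # persistent by default
first = store.create("/workers/w-", session="s1",
                     ephemeral=True, sequential=True)
```

Combining the ephemeral and sequential flags, as in the last call, gives each worker a uniquely named node that vanishes when that worker's session ends.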

What are Watches and Notification?

Without notifications, a client would have to access a ZNode through the API every time it needed to know whether anything had changed. Such polling is very expensive in terms of resources and latency, because it takes more operations and more time to obtain the information. To reduce polling, Zookeeper works on a publish-subscribe model: a client subscribes to a ZNode by setting a watch on it, and whenever the content of that ZNode changes, all clients that have subscribed receive a notification.
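The subscribe-then-notify flow can be sketched as a callback list attached to a node. This is a toy model, not Zookeeper's API: the `WatchedNode` class and the `"data-changed"` event string are hypothetical. The sketch assumes one-shot watches, meaning a watch fires once and has to be set again to receive further notifications.

```python
class WatchedNode:
    """Toy node that notifies registered watchers when its data changes."""

    def __init__(self, data=b""):
        self.data = data
        self._watchers = []

    def get(self, watch=None):
        # Reading the data can register a callback for the next change.
        if watch is not None:
            self._watchers.append(watch)
        return self.data

    def set(self, data):
        self.data = data
        # One-shot semantics: clear the list before notifying.
        watchers, self._watchers = self._watchers, []
        for callback in watchers:
            callback("data-changed")


events = []
node = WatchedNode(b"v1")
node.get(watch=events.append)   # subscribe while reading
node.set(b"v2")                 # triggers one notification
node.set(b"v3")                 # no watcher is registered any more
```

After both writes, `events` holds a single entry, because the client did not set a new watch after the first notification.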

What Zookeeper Doesn’t Do?

Zookeeper is used for managing coordination-related data. It should not be used for bulk storage.

Zookeeper is used to implement the core set of tasks that require coordination between distributed applications. It provides the tools for implementing those tasks, for example finding out which processes are non-responsive, but it is the implementer who decides which coordination tasks to implement.

Zookeeper Use Cases

1) Apache HBase

HBase is a data store generally used with Hadoop. It uses Zookeeper for electing the cluster leader, tracking servers and tracking server metadata.

2) Apache Solr

Solr is a distributed enterprise search platform. It uses Zookeeper to store and update its metadata.

3) Facebook Messages

Facebook Messages uses Zookeeper for controlling sharding, for failover and for service discovery.

Zookeeper helps us deal with the problems of distributed systems, but another problem comes up when we deploy Hadoop in enterprise environments: scheduling MapReduce, Pig, Hive and other Hadoop jobs. Apache Oozie, another open source, web-based job scheduling application, helps solve this problem.

Need for Oozie

With Apache Hadoop becoming the de facto open source standard for storing and processing big data, many other languages and tools such as Pig and Hive have followed, simplifying the process of writing big data applications on Hadoop.

Although Pig, Hive and many others have simplified the process of writing Hadoop jobs, a single Hadoop job is often not sufficient to produce the desired output. Many Hadoop jobs have to be chained together, and data has to be shared between them, which makes the whole process very complicated.

Oozie: The Savior for Hadoop Job Scheduling

Apache Oozie is a Java-based web application used for job scheduling. It combines multistage Hadoop jobs into a single logical job, which can be termed an “Oozie job”.

Image Credit : ebook -Apache Oozie Workflow Scheduler for Hadoop from O'Reilly

Types of Oozie Jobs

Oozie supports job scheduling for the full Hadoop stack like Apache MapReduce, Apache Hive, Apache Sqoop and Apache Pig.

1) Periodical/Coordinator Job

These are recurrent jobs that run at particular times, or that can be configured to run when data becomes available. Coordinator jobs can also manage multiple workflow jobs in which the output of one workflow is the input to another. This chained behavior is known as a “data application pipeline”.
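The two triggers, time and data availability, can be combined in a small sketch. This is not how Oozie is configured or implemented. It is a toy model in which the function names are hypothetical and "data is available" is reduced to membership in a set of timestamps.

```python
from datetime import datetime, timedelta


def nominal_times(start, end, frequency):
    """Yield the scheduled run times in the half-open range [start, end)."""
    t = start
    while t < end:
        yield t
        t += frequency


def ready_runs(start, end, frequency, now, available_inputs):
    """Runs whose scheduled time has passed and whose input has arrived."""
    return [
        t for t in nominal_times(start, end, frequency)
        if t <= now and t in available_inputs
    ]


start = datetime(2024, 1, 1)
end = datetime(2024, 1, 4)
daily = timedelta(days=1)

# Input for 1 January has arrived; input for 2 January is still missing.
runs = ready_runs(start, end, daily,
                  now=datetime(2024, 1, 2, 12),
                  available_inputs={datetime(2024, 1, 1)})
```

Here only the 1 January run is ready: the 2 January run is due but waits for its data, and the 3 January run is not yet due.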

2) Oozie Hadoop Workflow

A workflow is a Directed Acyclic Graph (DAG) that consists of a collection of actions. Control nodes define the order of execution, set the rules, decide the execution path, and fork and join the flow, whereas action nodes trigger the execution of the actual jobs.
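The ordering that a DAG imposes can be sketched with Python's standard `graphlib` module (Python 3.9 or later). Oozie workflows are not defined this way. The node names below are invented for illustration, and the graph simply maps each node to the nodes that must finish before it.

```python
from graphlib import TopologicalSorter

# Each key runs only after every node in its set has finished.
workflow = {
    "start": set(),
    "fork": {"start"},
    "pig-clean": {"fork"},            # action node (hypothetical name)
    "hive-aggregate": {"fork"},       # action node (hypothetical name)
    "join": {"pig-clean", "hive-aggregate"},
    "end": {"join"},
}


def execution_waves(graph):
    """Group nodes into waves; nodes in the same wave can run in parallel."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()                  # raises CycleError if the graph has a cycle
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)           # mark the wave as finished
    return waves


for wave in execution_waves(workflow):
    print(wave)
```

The two actions after the fork land in the same wave, and the join appears only after both of them have completed.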

3) Oozie Bundle

An Oozie bundle is a collection of coordinator jobs that can be started, suspended and stopped together. The jobs in a bundle are usually dependent on each other.

Oozie Architecture

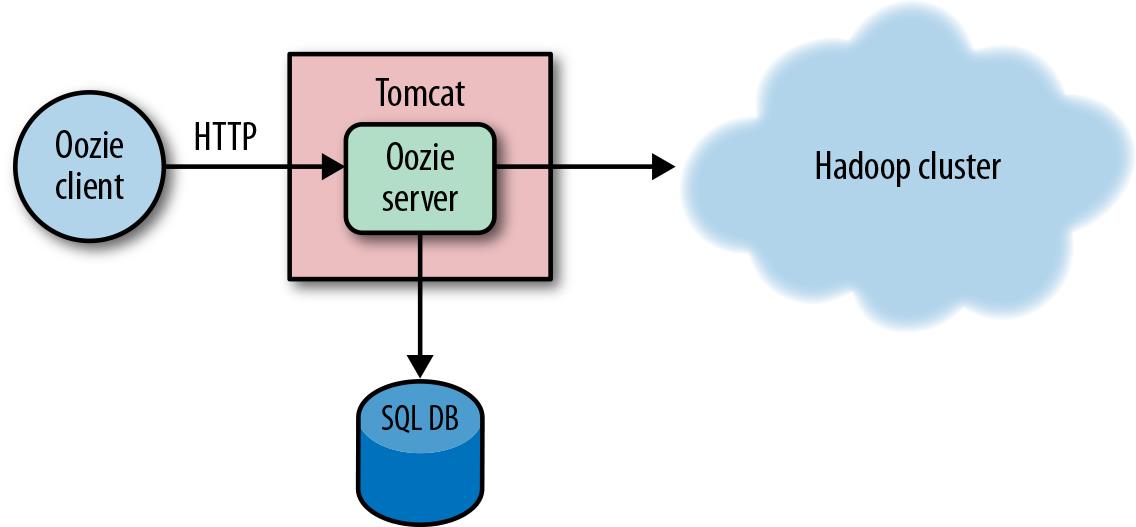

Image Credit : ebook -Apache Oozie Workflow Scheduler for Hadoop from O'Reilly

The Oozie architecture consists of a web server and a database that stores all the jobs. The default web server is Apache Tomcat, an open source implementation of Java Servlet technology. The Oozie server is a stateless web application and does not keep any user or job information in memory. All of this information is stored in the SQL database, and Oozie retrieves the job state from the database when it processes a request. Users, or Oozie clients, can interact with the server through the command line tool, the Java client API or the HTTP REST API.

This design lets Oozie support thousands of jobs on modestly configured hardware. The transactional nature of SQL keeps Oozie jobs reliable even if the Oozie server crashes.

Oozie itself has two main components that do all the work: the Command and the ActionExecutor classes.
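The stateless pattern can be sketched with Python's built-in `sqlite3` module. This is not Oozie's schema or code. The `jobs` table, its columns, the job ID and the status strings are all assumptions chosen for illustration. The point is that every request reads job state from the database, so a restarted server loses nothing.

```python
import os
import sqlite3
import tempfile


def open_store(path):
    """Open the database; the handler process keeps no job state of its own."""
    db = sqlite3.connect(path)
    db.execute(
        "CREATE TABLE IF NOT EXISTS jobs "
        "(id TEXT PRIMARY KEY, status TEXT NOT NULL)"
    )
    return db


def submit(db, job_id):
    with db:  # commits on success, rolls back if an error is raised
        db.execute("INSERT INTO jobs (id, status) VALUES (?, 'PREP')", (job_id,))


def transition(db, job_id, new_status):
    with db:
        cur = db.execute(
            "UPDATE jobs SET status = ? WHERE id = ?", (new_status, job_id)
        )
    if cur.rowcount == 0:
        raise KeyError(job_id)


def status(db, job_id):
    row = db.execute("SELECT status FROM jobs WHERE id = ?", (job_id,)).fetchone()
    return row[0] if row else None


def demo_restart():
    """Simulate a server restart: close the connection, reopen, read state."""
    path = os.path.join(tempfile.mkdtemp(), "jobs.db")
    db = open_store(path)
    submit(db, "wf-1")
    transition(db, "wf-1", "RUNNING")
    db.close()                        # the "server" goes away
    restarted = open_store(path)      # a fresh process with empty memory
    try:
        return status(restarted, "wf-1")
    finally:
        restarted.close()
```

Calling `demo_restart()` returns the status written before the simulated crash, since the committed row lives in the database file and not in the process.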

Use Cases for Oozie

Yahoo has around 40,000 nodes across multiple Hadoop clusters, and Oozie is its primary Hadoop workflow engine. The largest Hadoop cluster at Yahoo processes 60 bundles and 1,600 coordinators, totaling 80,000 daily workflows on 3 million workflow nodes.

Let us know how you have used Oozie and Zookeeper for Hadoop workflow and Hadoop cluster management. Please leave a comment below.

About the Author

ProjectPro

ProjectPro is the only online platform designed to help professionals gain practical, hands-on experience in big data, data engineering, data science, and machine learning related technologies. Having over 270+ reusable project templates in data science and big data with step-by-step walkthroughs,