Use an aviation dataset to simulate a complex, real-world, messaging-based big data pipeline with AWS QuickSight, Druid, NiFi, Kafka, and Hive.

Get started today

Request a free demo with us.

Schedule 60-minute live interactive 1-to-1 video sessions with experts.

Unlimited number of sessions with no extra charges. Yes, unlimited!

Give us 72 hours' notice with a problem statement so we can match you to the right expert.

Schedule recurring sessions weekly, bi-weekly, or monthly.

If you find a favorite expert, schedule all future sessions with them.

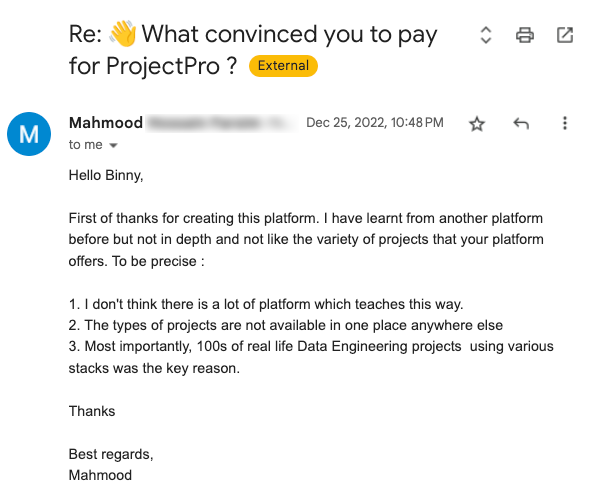

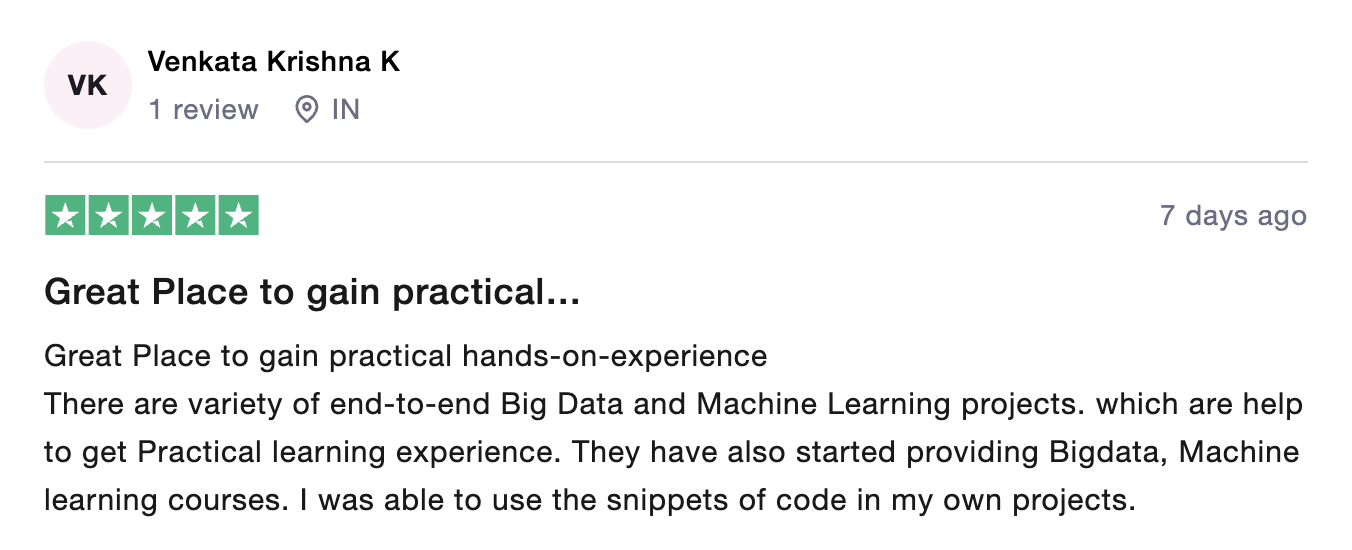

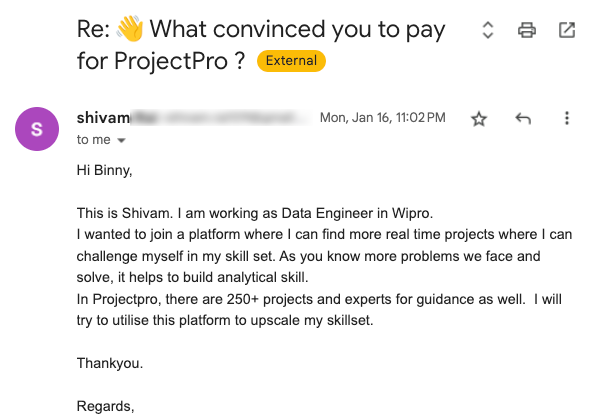

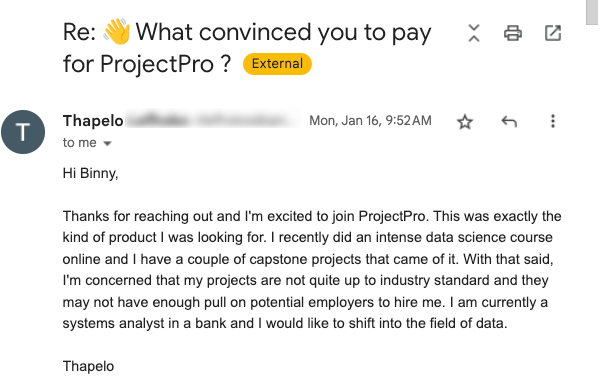

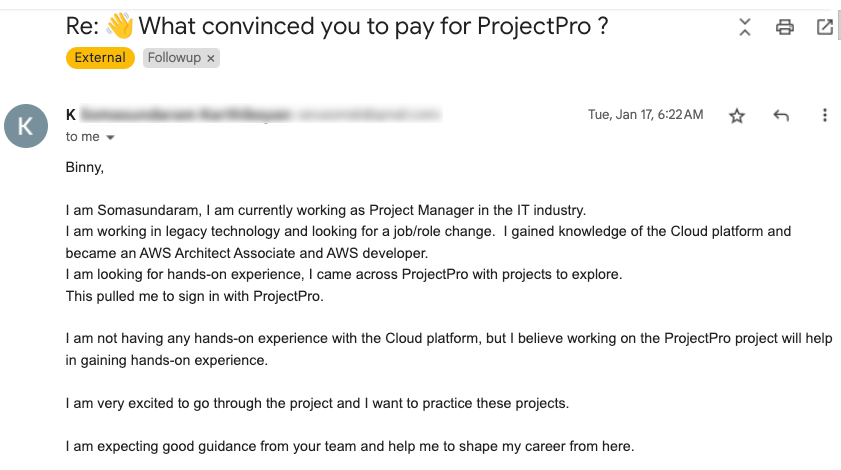

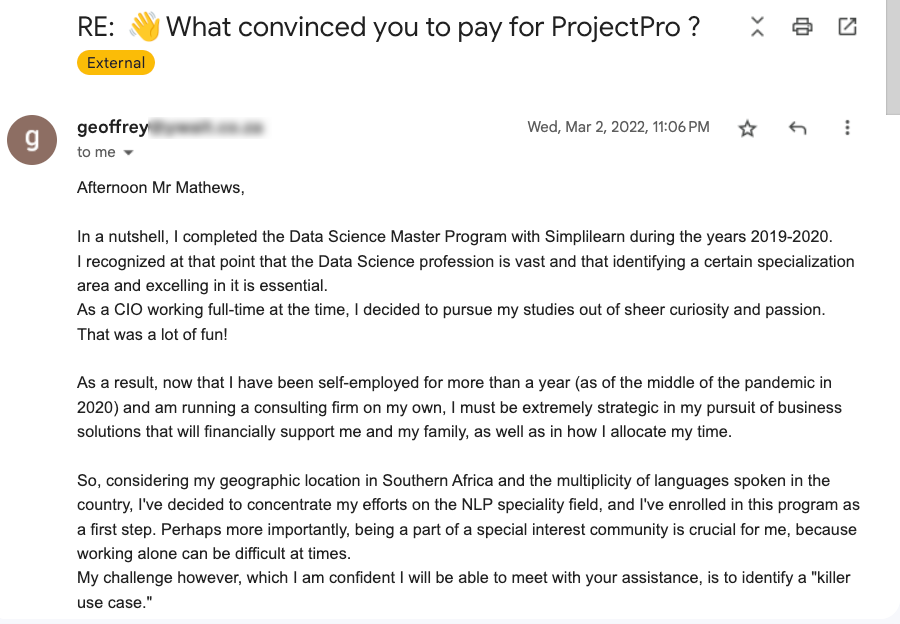

Source: ![]()

Source: ![]()

Source: ![]()

Source: ![]()

Source: ![]()

Source: ![]()

Source: ![]()

Source: ![]()

Source: ![]()

Source: ![]()

Source: ![]()

Source: ![]()

Source: ![]()

250+ end-to-end project solutions

Each project solves a real business problem from start to finish. These projects cover the domains of Data Science, Machine Learning, Data Engineering, Big Data and Cloud.

15 new projects added every month

New projects every month to help you stay up to date with the latest tools and tactics.

500,000 lines of code

Each project comes with verified and tested solutions including code, queries, configuration files, and scripts. Download and reuse them.

600+ hours of videos


Cloud Lab Workspace


Unlimited 1:1 sessions


Technical Support

Chat with our technical experts to solve any issues you face while building your projects.

7-day risk-free trial

We offer an unconditional 7-day money-back guarantee. Use the product for 7 days and, if you don't like it, we will issue a full refund. No terms or conditions.

Payment Options

0% interest monthly payment schemes available for all countries.

In this Big Data project, a senior Big Data Architect demonstrates how to implement a Big Data pipeline on AWS at scale. You will analyse the Aviation dataset with a big data stack of NiFi, Kafka, HDFS, Hive, Druid, and AWS QuickSight to derive metrics from the existing data. The pipeline is built on AWS and serves both batch and real-time streaming ingestion of the data for various consumers according to their needs. The project is highly scalable and is implemented for a very large-scale organisational setup.
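To make the batch versus real-time distinction above concrete, here is a minimal Python sketch, not the project's actual code. It computes the same metric (mean arrival delay per carrier) two ways: once over a complete data set, as a Hive-style batch job might, and once incrementally per message, as a consumer reading from a Kafka topic might. The field names `carrier` and `arr_delay_min`, the sample records, and all class and function names are assumptions made for illustration; no real Kafka, NiFi, Hive, or Druid client is used.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass
class DelayStats:
    """Running flight count and total delay for one carrier."""
    flights: int = 0
    total_delay_min: float = 0.0

    def add(self, delay_min: float) -> None:
        self.flights += 1
        self.total_delay_min += delay_min

    @property
    def mean_delay_min(self) -> float:
        return self.total_delay_min / self.flights if self.flights else 0.0


def batch_mean_delay(records: Iterable[dict]) -> Dict[str, float]:
    """Batch path: scan the full data set once and aggregate per carrier."""
    stats: Dict[str, DelayStats] = defaultdict(DelayStats)
    for rec in records:
        stats[rec["carrier"]].add(rec["arr_delay_min"])
    return {carrier: s.mean_delay_min for carrier, s in stats.items()}


class StreamingMeanDelay:
    """Streaming path: update the same metric one message at a time."""

    def __init__(self) -> None:
        self._stats: Dict[str, DelayStats] = defaultdict(DelayStats)

    def consume(self, rec: dict) -> None:
        # In a real pipeline this would be called for each message
        # polled from a topic; here the caller passes plain dicts.
        self._stats[rec["carrier"]].add(rec["arr_delay_min"])

    def snapshot(self) -> Dict[str, float]:
        """Return the metric as of the messages consumed so far."""
        return {c: s.mean_delay_min for c, s in self._stats.items()}


# Hypothetical sample records; the real Aviation dataset schema may differ.
SAMPLE_FLIGHTS = [
    {"carrier": "AA", "arr_delay_min": 10.0},
    {"carrier": "AA", "arr_delay_min": 20.0},
    {"carrier": "DL", "arr_delay_min": 5.0},
]

if __name__ == "__main__":
    print("batch:", batch_mean_delay(SAMPLE_FLIGHTS))

    stream = StreamingMeanDelay()
    for message in SAMPLE_FLIGHTS:
        stream.consume(message)
        print("stream so far:", stream.snapshot())
```

Once every message has been consumed, both paths agree. The difference is that the streaming consumer can serve an up-to-date answer after each message, while the batch job answers only after a full scan.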
