
This big data project tackles the small file problem on Apache Hadoop and Spark running in AWS EMR. It implements and demonstrates practical techniques for handling large numbers of small files so that data processing becomes more efficient.


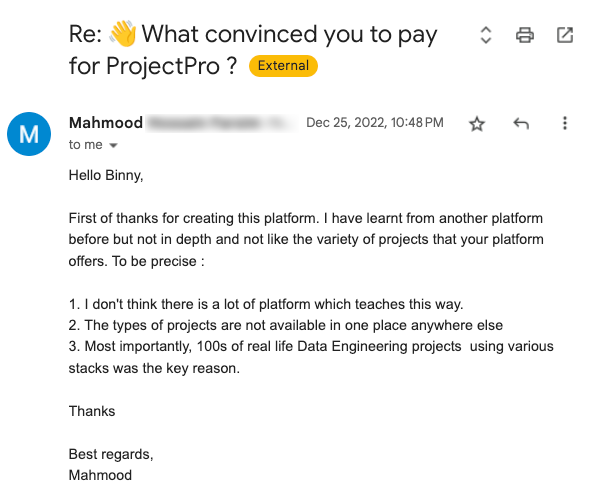

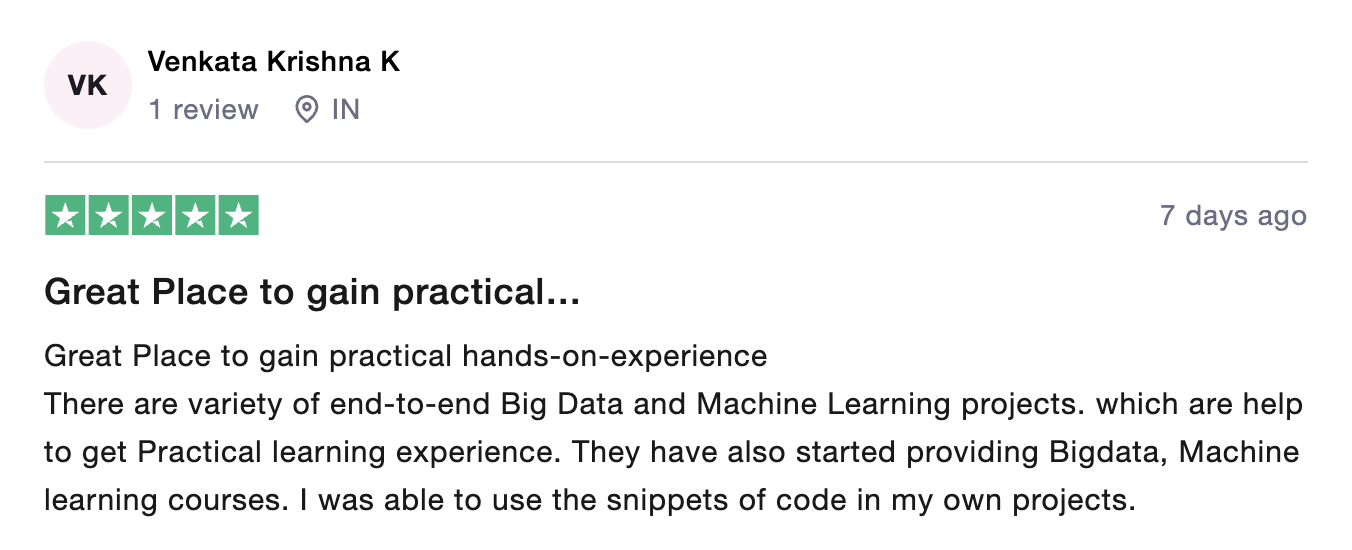

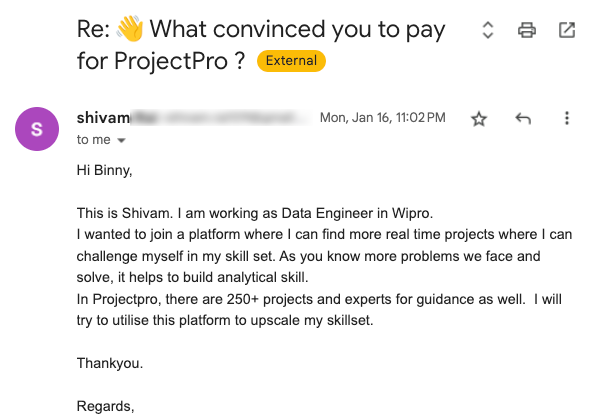

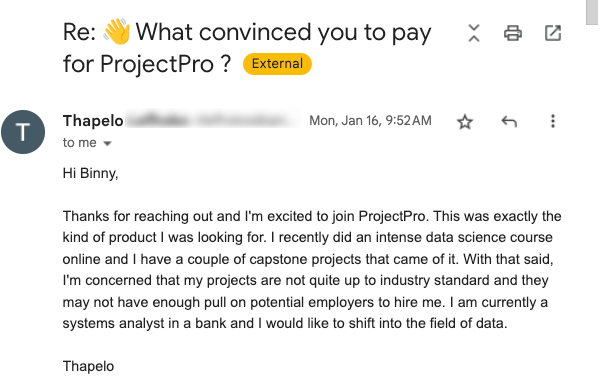



Data is now generated at an unprecedented scale, from social media interactions and IoT devices to enterprise operations and user-generated content, and businesses that want actionable insights must manage and process it efficiently. That growth brings challenges, notably the small file problem, which is especially acute in distributed computing frameworks such as Hadoop and Spark. Although the issue is technical, it has far-reaching implications for business operations, analytics, and strategic decision-making.

The small file problem arises when a data ecosystem fills up with many small files rather than a manageable number of larger ones. Small files can significantly degrade performance in Hadoop's HDFS and in Spark jobs. In Hadoop, the NameNode keeps an in-memory entry for every file, directory, and block, so a large number of small files can exhaust its memory and lead to scalability problems and lower system efficiency. In Spark, reading many small files tends to create many small tasks, and the overhead of scheduling and managing them reduces parallel efficiency and increases processing time.
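To make the NameNode memory pressure described above concrete, here is a back-of-the-envelope sketch in Java. It assumes the commonly quoted rule of thumb of roughly 150 bytes of NameNode heap per namespace object (file or block) and a 128 MB block size. Both figures, and the class and method names, are illustrative assumptions rather than measurements from this project, and directories are ignored for simplicity.

```java
/** Rough estimate of the NameNode heap consumed by file and block metadata. */
class NameNodeEstimate {
    // Assumption: about 150 bytes of heap per namespace object (rule of thumb, not measured).
    static final long BYTES_PER_OBJECT = 150L;

    /** Number of blocks needed to hold one file (ceiling division). */
    static long blocksPerFile(long fileSizeBytes, long blockSizeBytes) {
        return (fileSizeBytes + blockSizeBytes - 1) / blockSizeBytes;
    }

    /** Heap estimate: one file object plus one object per block, for every file. */
    static long heapBytes(long fileCount, long fileSizeBytes, long blockSizeBytes) {
        long objectsPerFile = 1L + blocksPerFile(fileSizeBytes, blockSizeBytes);
        return fileCount * objectsPerFile * BYTES_PER_OBJECT;
    }

    public static void main(String[] args) {
        long blockSize = 128L * 1024 * 1024; // assumed HDFS block size (128 MB)
        long oneMb = 1024L * 1024;
        long oneGb = 1024L * oneMb;
        long oneTb = 1024L * oneGb;

        // The same 1 TB of data, stored as 1 MB files versus 1 GB files.
        long small = heapBytes(oneTb / oneMb, oneMb, blockSize);
        long large = heapBytes(oneTb / oneGb, oneGb, blockSize);

        System.out.println("1 MB files: " + small + " bytes of NameNode heap");
        System.out.println("1 GB files: " + large + " bytes of NameNode heap");
    }
}
```

Under these assumptions, 1 TB stored as 1 MB files needs about 300 MB of NameNode heap for metadata, while the same data stored as 1 GB files needs a little over 1 MB. The absolute numbers are only as good as the rule of thumb, but the ratio shows why file count matters more than data volume.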

This challenge affects more than the technical performance of data processing tasks. It raises operational costs, because resources are underutilized and extra storage and processing capacity is needed, and it lengthens processing times, which delays insights and decisions. It can also affect data quality and analytics fidelity, since managing many small files makes data handling more complex and raises the risk of data loss or corruption.

Addressing the small file problem, therefore, is not merely a technical necessity but a business imperative. Solutions to this issue can lead to more efficient data processing workflows, cost savings on storage and compute resources, and faster time-to-insight for data-driven decision-making. Furthermore, optimizing the handling of small files can enhance data governance and security practices by simplifying data management and reducing the attack surface area for cybersecurity threats.

In this context, our project aims to tackle the small file problem head-on, developing a comprehensive solution methodology that leverages both Hadoop and Spark's capabilities within the AWS EMR environment. By demonstrating effective techniques for managing small files—from file consolidation strategies and custom input formats to utilizing optimized file systems and formats—we aim to showcase a scalable, efficient approach to big data processing. This has the potential to improve technical operations significantly and drive business competitiveness in an increasingly data-centric world.
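As a rough illustration of the file consolidation idea mentioned above, the following Java sketch plans how small files could be grouped into larger units of about a target size. It is similar in spirit to how a combining input format packs several files into one split, but it is not the project's implementation: the class name, the greedy strategy, and the 128 MB target are assumptions chosen for the example.

```java
import java.util.ArrayList;
import java.util.List;

/** Plans how to group small files into larger units of roughly targetBytes each. */
class ConsolidationPlanner {

    /**
     * Greedy, order-preserving packing: keep adding files to the current group
     * until the next file would push it past targetBytes, then start a new group.
     * Returns groups of indexes into fileSizes. A file larger than the target
     * ends up in a group of its own.
     */
    static List<List<Integer>> plan(long[] fileSizes, long targetBytes) {
        List<List<Integer>> groups = new ArrayList<>();
        List<Integer> current = new ArrayList<>();
        long currentBytes = 0L;
        for (int i = 0; i < fileSizes.length; i++) {
            if (!current.isEmpty() && currentBytes + fileSizes[i] > targetBytes) {
                groups.add(current);
                current = new ArrayList<>();
                currentBytes = 0L;
            }
            current.add(i);
            currentBytes += fileSizes[i];
        }
        if (!current.isEmpty()) {
            groups.add(current);
        }
        return groups;
    }

    public static void main(String[] args) {
        long mb = 1024L * 1024;
        // Hypothetical file sizes; the target mirrors a 128 MB block size.
        long[] sizes = {40 * mb, 50 * mb, 60 * mb, 10 * mb, 200 * mb, 5 * mb};
        System.out.println(plan(sizes, 128 * mb));
    }
}
```

Each resulting group would then be written out as one larger file, or read as one split, so the number of objects the cluster has to track drops from the number of files to the number of groups.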

The project aims to develop a comprehensive solution to the small file problem encountered in distributed computing frameworks, specifically Hadoop and Spark. The project seeks to improve performance, enhance efficiency, and optimize resource utilization across big data processing workflows by implementing and demonstrating effective techniques for handling large numbers of small files.

The project uses a dataset that mimics real-world scenarios in which the small file problem is evident: thousands of small files, each containing structured data in a format such as CSV or JSON. Such files represent the typical chunks of data that a big data ecosystem ingests from sources such as IoT devices, logs, and transaction records. Specifically, the project uses the T-Drive Trajectory dataset, a collection of taxi trajectory data intended to support research in mobility, urban planning, and big data analytics. It covers one week of trajectories for more than 10,000 taxis in Beijing and provides insight into urban taxi movements, service patterns, and city dynamics. The dataset includes the following fields:

Taxi ID: A unique identifier for each taxi. This allows for individual tracking of taxis and analysis of patterns on a per-taxi basis.

Date-Time: Timestamps indicating each recorded location point's specific date and time. The format is typically YYYY-MM-DD HH:MM:SS, enabling precise temporal analysis.

Longitude and Latitude: Geospatial coordinates representing the taxi's location at the recording time. These points facilitate mapping trajectories and understanding spatial movement across the city.
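The fields above can be turned into a small typed record. The sketch below assumes each line is comma-separated in the order taxi ID, date-time, longitude, latitude, and that the timestamp uses the YYYY-MM-DD HH:MM:SS layout described above. The class name and the sample line are illustrative, not taken from the project code.

```java
import java.time.LocalDateTime;
import java.time.format.DateTimeFormatter;

/** One trajectory point: taxi ID, timestamp, longitude, latitude. */
class TaxiPoint {
    // Timestamp layout described in the field list: YYYY-MM-DD HH:MM:SS.
    private static final DateTimeFormatter FORMAT =
            DateTimeFormatter.ofPattern("yyyy-MM-dd HH:mm:ss");

    final int taxiId;
    final LocalDateTime timestamp;
    final double longitude;
    final double latitude;

    TaxiPoint(int taxiId, LocalDateTime timestamp, double longitude, double latitude) {
        this.taxiId = taxiId;
        this.timestamp = timestamp;
        this.longitude = longitude;
        this.latitude = latitude;
    }

    /** Parses one line; assumes comma-separated fields in the order id, time, lon, lat. */
    static TaxiPoint parse(String line) {
        String[] parts = line.split(",");
        if (parts.length != 4) {
            throw new IllegalArgumentException(
                    "Expected 4 fields, got " + parts.length + ": " + line);
        }
        return new TaxiPoint(
                Integer.parseInt(parts[0].trim()),
                LocalDateTime.parse(parts[1].trim(), FORMAT),
                Double.parseDouble(parts[2].trim()),
                Double.parseDouble(parts[3].trim()));
    }

    public static void main(String[] args) {
        // Illustrative sample line in the assumed layout.
        TaxiPoint p = parse("1,2008-02-02 15:36:08,116.51172,39.92123");
        System.out.println(p.taxiId + " at " + p.timestamp
                + " -> (" + p.longitude + ", " + p.latitude + ")");
    }
}
```

Rejecting lines with the wrong field count up front keeps malformed records from silently entering later aggregation steps.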

Language: Java, Scala

Framework: Apache Hadoop, Apache Spark

Services: AWS Elastic MapReduce (EMR), AWS S3

Tools: Hadoop Distributed File System (HDFS), Parquet, ORC

Apache Hadoop

Apache Hadoop is an open-source framework for the distributed storage and processing of large data sets across clusters of computers using simple programming models. It is built on two main components: the Hadoop Distributed File System (HDFS) and the MapReduce programming model. HDFS provides high-throughput access to application data by distributing storage across many machines, while MapReduce offers a mechanism for processing that data in a parallel and fault-tolerant manner. Hadoop scales from single servers to thousands of machines, each offering local computation and storage. This scalability and efficiency make Hadoop a foundational framework for big data, able to process and analyze data sets ranging from gigabytes to petabytes.
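The MapReduce model described above can be illustrated without a cluster. The sketch below runs the three phases (map, shuffle, reduce) in memory to count trajectory points per taxi. It uses only the Java standard library, not the Hadoop API, and the class name, method names, and sample records are assumptions made for the example.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** In-memory illustration of map -> shuffle -> reduce; this is not the Hadoop API. */
class MiniMapReduce {

    /** Map step: extract the key (taxi ID, the first comma-separated field). */
    static String taxiId(String record) {
        return record.substring(0, record.indexOf(','));
    }

    /** Map + shuffle: emit (taxiId, 1) for each record and group the values by key. */
    static Map<String, List<Integer>> mapAndShuffle(List<String> records) {
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (String record : records) {
            grouped.computeIfAbsent(taxiId(record), k -> new ArrayList<>()).add(1);
        }
        return grouped;
    }

    /** Reduce: sum the values collected for each key. */
    static Map<String, Integer> reduce(Map<String, List<Integer>> grouped) {
        Map<String, Integer> totals = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> entry : grouped.entrySet()) {
            int sum = 0;
            for (int value : entry.getValue()) {
                sum += value;
            }
            totals.put(entry.getKey(), sum);
        }
        return totals;
    }

    public static void main(String[] args) {
        // Hypothetical records in "taxiId,dateTime,longitude,latitude" form.
        List<String> records = Arrays.asList(
                "1,2008-02-02 15:36:08,116.51172,39.92123",
                "2,2008-02-02 13:33:52,116.36422,39.88781",
                "1,2008-02-02 15:46:08,116.51135,39.93883");
        System.out.println(reduce(mapAndShuffle(records)));
    }
}
```

On a real cluster the map and reduce steps run as separate tasks on different machines, and the framework performs the shuffle over the network. With many small files, each file typically becomes its own map task, which is where the per-task overhead accumulates.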

Apache Spark

Apache Spark is an open-source, distributed computing system that provides an interface for programming entire clusters with implicit data parallelism and fault tolerance. Originally developed at UC Berkeley's AMPLab, Spark extends the MapReduce model to efficiently support more types of computation, including interactive queries and stream processing. One of Spark's key features is in-memory cluster computing: it caches data in memory across multiple parallel operations, which makes it significantly faster than Hadoop MapReduce for certain applications. Spark supports several languages (Scala, Java, Python, and R), which makes application development easier.

Amazon EMR

Amazon Elastic MapReduce (EMR) is a cloud big data platform for processing massive amounts of data using open-source tools such as Apache Hadoop, Spark, HBase, Presto, and Flink. EMR is designed to process and analyze large data sets efficiently by distributing the work across a resizable cluster of Amazon EC2 instances. It simplifies running big data frameworks by handling much of the cloud resource provisioning, configuration, and tuning. EMR is scalable and customizable: users can optimize costs by adjusting the number and type of instances, and they run data processing tasks in a secure, managed cloud environment.

Amazon S3

Amazon Simple Storage Service (S3) is an object storage service that offers industry-leading scalability, data availability, security, and performance. S3 is designed to make web-scale computing easier for developers, providing a simple web services interface that can be used to store and retrieve any amount of data, anytime, from anywhere on the web. It provides cost-effective storage for large data collections, offering multiple management features for organizing data and configuring finely-tuned access controls. S3 is commonly used for backup and recovery, data archiving, big data analytics, and serving website content, making it a critical component of cloud computing infrastructure.
