Explain PySpark Union() and UnionAll() Functions

This recipe covers the essentials of the PySpark union() and unionAll() functions to help you master these tools for data consolidation and analysis. | ProjectPro

Recipe Objective - Explain PySpark Union() and UnionAll() functions

In PySpark, the DataFrame union() method merges two DataFrames that have the same structure (schema). It resolves columns by position, not by name, and it raises an error if the two DataFrames have a different number of columns. The DataFrame unionAll() method was deprecated in Spark 2.0.0 in favor of union(), and in PySpark it remains available as an alias for union(). Both transformations merge two or more DataFrames of the same schema, and both keep duplicate records. This differs from SQL, where UNION removes duplicates and UNION ALL keeps them: in PySpark, union() and unionAll() both behave like UNION ALL, so distinct() must be called on the result to remove duplicates.

Apache PySpark Resilient Distributed Dataset (RDD) transformations are Spark operations that, when executed on an RDD, produce one or more new RDDs. Because RDDs are immutable, a transformation always creates a new RDD instead of updating an existing one, which builds up an RDD lineage, also called the RDD operator graph or RDD dependency graph. Transformations are also lazy: none of them is executed until the user calls an action.


System Requirements

- Python 3 (3.6 or later, as required by Spark 3.1.1)
- Apache Spark (3.1.1 version)

This recipe explains what the union() and unionAll() functions are and how to use them in PySpark.

PySpark Union() Function

The union() function in PySpark returns a new DataFrame containing the rows of both DataFrames, which must have the same number of columns; columns are matched by position. Despite what the SQL keyword suggests, union() does not remove duplicate rows: it behaves like SQL UNION ALL. When you need unique records, as with SQL UNION, chain distinct() after union().

Syntax of PySpark Union()

DataFrame.union(other)

DataFrame: The DataFrame on which the union operation is performed.

other: The DataFrame to be combined with the first one.

PySpark UnionAll() Function

The unionAll() function in PySpark combines the rows of two DataFrames without eliminating duplicates; it is a straightforward concatenation of rows from both DataFrames. In PySpark, unionAll() is an alias for union(), so the two calls return the same result.

Syntax of PySpark UnionAll()

DataFrame.unionAll(other)

DataFrame: The DataFrame on which the union operation is performed.

other: The DataFrame to be combined with the first one.


How to Implement the PySpark union() and unionAll() functions in Databricks?

Let’s now understand the implementation and usage of union and union all in PySpark with the following practical example -

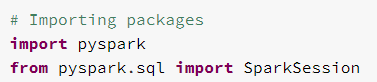

# Importing packages

import pyspark

from pyspark.sql import SparkSession

SparkSession is imported into the environment so that a session can be created and the union() and unionAll() functions can be used in PySpark.

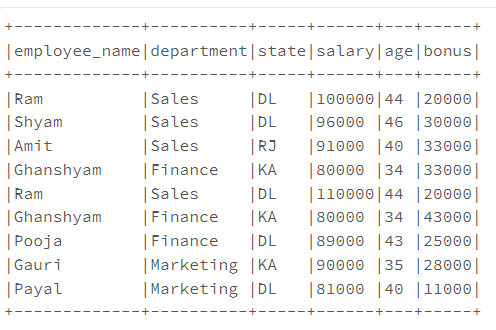

# Implementing the union() and unionAll() functions in Databricks in PySpark

spark = SparkSession.builder.appName('Union and UnionAll PySpark').getOrCreate()

sample_Data = [("Ram","Sales","DL",100000,44,20000),
("Shyam","Sales","DL",96000,46,30000),
("Amit","Sales","RJ",91000,40,33000),
("Ghanshyam","Finance","KA",80000,34,33000)
]

sample_columns= ["employee_name","department","state","salary","age","bonus"]

dataframe = spark.createDataFrame(data = sample_Data, schema = sample_columns)

dataframe.printSchema()

dataframe.show(truncate=False)

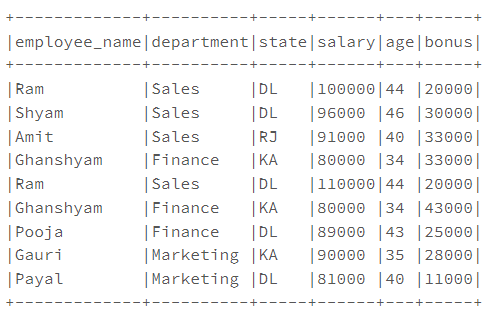

sample_Data2 = [("Ram","Sales","DL",110000,44,20000),
("Ghanshyam","Finance","KA",80000,34,43000),
("Pooja","Finance","DL",89000,43,25000),
("Gauri","Marketing","KA",90000,35,28000),
("Payal","Marketing","DL",81000,40,11000)
]

sample_columns2= ["employee_name","department","state","salary","age","bonus"]

dataframe2 = spark.createDataFrame(data = sample_Data2, schema = sample_columns2)

dataframe2.printSchema()

dataframe2.show(truncate=False)

# Using union() function

union_DF = dataframe.union(dataframe2)

union_DF.show(truncate=False)

# union() keeps duplicates; distinct() removes exact duplicate rows

dis_DF = dataframe.union(dataframe2).distinct()

dis_DF.show(truncate=False)

# Using unionAll() function (an alias for union(), so it returns the same rows as union_DF)

unionAll_DF = dataframe.unionAll(dataframe2)

unionAll_DF.show(truncate=False)

The Spark session is created. The "sample_Data" and "sample_columns" lists are defined, and the DataFrame "dataframe" is created from them. Likewise, "sample_Data2" and "sample_columns2" are defined and used to create "dataframe2". The two DataFrames are merged with the union() function, which keeps every row from both inputs. The distinct() function is then applied so that only one record is returned when duplicate rows exist. Finally, unionAll() merges the same two DataFrames and, being an alias for union(), returns the same rows as union_DF.

Use Cases: Union and Union All in PySpark

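union(): Use this to append the rows of one DataFrame to another with the same column layout. It keeps duplicates; chain distinct() when you need unique records, as with SQL UNION.

unionAll(): Use this when you need a straightforward concatenation of rows, including duplicates. It returns the same result as union(), which has been the preferred name since Spark 2.0.0.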

Master the Practical Implementation of PySpark Functions with ProjectPro!

The PySpark union() and unionAll() functions make it straightforward to combine DataFrames. This recipe covered how they behave and when to use each one. However, moving from theoretical knowledge to practical expertise requires hands-on work. This is where ProjectPro, a one-stop platform for data science and big data projects, comes into play. With a repository of more than 270 projects, ProjectPro provides the hands-on experience needed to solidify your understanding of PySpark and its diverse functionality.
