Display the tail of a file and its aggregate length in the HDFS

This recipe explains what the tail of a file and its aggregate length are, and how to display them in HDFS.

Recipe Objective: How to display the tail of a file and its aggregate length in the HDFS?

In this recipe, we see how to display the tail of a file in the HDFS and find its aggregate length.

Table of Contents

- Recipe Objective: How to display the tail of a file and its aggregate length in the HDFS?

- Prerequisites:

- Steps to set up an environment:

- Displaying the tail of a file in HDFS:

- Step 1: Switch to root user from ec2-user using the “sudo -i” command.

- Step 2: Displaying the previous few entries of the file

- Finding the aggregate length of a file:

- hdfs dfs -dus <file path>

- hdfs dfs -du -h <file path>

Prerequisites:

Before proceeding with the recipe, make sure single-node Hadoop is installed on your EC2 instance. If it is not, install it before continuing.


Steps to set up an environment:

- In AWS, create an EC2 instance and log in to Cloudera Manager using the public IP of the EC2 instance. Log in to the instance through PuTTY or a terminal and check whether HDFS is installed. If it is not, install it as described in the Prerequisites section.

- Type “<your public IP>:7180” in the web browser and log in to Cloudera Manager, where you can check if Hadoop is installed.

- If the required services are not visible in the Cloudera cluster, add them to your instance by clicking “Add Services” on the cluster.
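If you prefer to confirm the HDFS client from the terminal rather than from Cloudera Manager, a quick check is whether the `hdfs` command is on your PATH and what `hdfs version` reports. The helper name `check_hdfs_cli` below is hypothetical; only `hdfs version` is a real Hadoop command.

```shell
# Hypothetical helper: report whether the `hdfs` client is on PATH and,
# if it is, print the first line of `hdfs version` (the Hadoop release).
check_hdfs_cli() {
  if command -v hdfs >/dev/null 2>&1; then
    hdfs version | head -n 1
  else
    echo "hdfs CLI not found on PATH" >&2
    return 1
  fi
}

# Usage:
#   check_hdfs_cli
```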

Displaying the tail of a file in HDFS:

We often come across files whose content is extensive, which is usually the case in Big Data, and displaying the entire file would simply waste resources. In such cases, we use the “-tail” option, which displays only the last kilobyte of the file.
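As a local analogy (an assumption for illustration, not an HDFS command), the Unix `tail -c 1024` prints the last 1024 bytes of a file, which is the same slice that `hadoop fs -tail` returns for a file in HDFS. The sketch below builds a throwaway local file much larger than 1 KB and shows that only the final kilobyte comes back.

```shell
# Analogy only: `tail -c 1024` on a local file returns its last 1024 bytes,
# mirroring what `hadoop fs -tail` returns for a file stored in HDFS.
sample=$(mktemp)
seq 1 100000 > "$sample"   # local file far larger than 1 KB

full_bytes=$(wc -c < "$sample" | tr -d ' ')
tail_bytes=$(tail -c 1024 "$sample" | wc -c | tr -d ' ')
last_line=$(tail -c 1024 "$sample" | tail -n 1)

rm -f "$sample"
echo "file: $full_bytes bytes, tail: $tail_bytes bytes, ends with: $last_line"
```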

Step 1: Switch to root user from ec2-user using the “sudo -i” command.

Step 2: Displaying the previous few entries of the file

Passing the “-tail” option to the hadoop fs command, followed by the full path of the file we would like to display, prints only the last kilobyte of that file to standard output. The syntax is:

hadoop fs -tail <file path>
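Because “-tail” works in bytes rather than lines, a common trick is to pipe its output through the local `tail -n` to keep only the last few complete lines. The function name `hdfs_tail_lines` and the default of 30 lines are hypothetical choices for this sketch; the `-f` flag in the comment is Hadoop's documented way to keep printing data as it is appended.

```shell
# Hypothetical helper: keep only the last N lines (default 30) of the final
# kilobyte returned by `hadoop fs -tail`. If the lines are long, that
# kilobyte may contain fewer than N complete lines.
hdfs_tail_lines() {
  local path="$1"
  local n="${2:-30}"
  hadoop fs -tail "$path" | tail -n "$n"
}

# Example (the path is illustrative):
#   hdfs_tail_lines /user/root/flights_data.txt 10
# To follow the file as new data is appended:
#   hadoop fs -tail -f /user/root/flights_data.txt
```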

Below is the sample output when I tried displaying the tail of a file “flights_data.txt.”

Finding the aggregate length of a file:

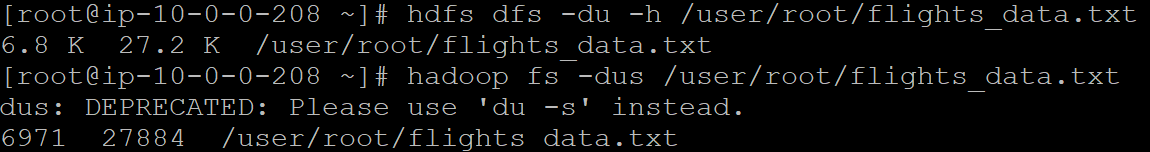

The “-du” option reports the length of a file in HDFS. It returns three columns: the size of the file, the disk space consumed by all replicas, and the full path name. When given a directory, it prints one such line for each file and subdirectory it contains.
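To make the three columns easier to read in a script, the sketch below labels them and divides the replica space by the file size, which roughly equals the replication factor for ordinary replicated files. The helper name `hdfs_du_labeled` is hypothetical, and the sketch assumes the three-column output described above and paths without spaces.

```shell
# Hypothetical helper: label the three columns printed by `hdfs dfs -du`
# (size, space consumed by all replicas, path) and add their ratio, which
# roughly equals the replication factor for replicated files.
# Assumes three-column output and paths that contain no spaces.
hdfs_du_labeled() {
  hdfs dfs -du "$1" | awk '{
    size = $1 + 0
    replicated = $2 + 0
    ratio = (size > 0) ? replicated / size : 0
    printf "path=%s size=%d replicated=%d ratio=%.1f\n", $3, size, replicated, ratio
  }'
}

# Example (the directory is illustrative):
#   hdfs_du_labeled /user/root
```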

hdfs dfs -dus <file path>

This returns the aggregate length of the file, followed by the total space consumed by its replicas and its full path. Note that “-dus” and “-du -s” produce the same result, but “-dus” is deprecated in favor of “-du -s”, so prefer the latter in new scripts.
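When the aggregate length feeds into another step, such as a size check before copying data, it helps to extract just the first column of `hdfs dfs -du -s`. The helper name `hdfs_aggregate_bytes` and the 1 GiB threshold in the comment are hypothetical examples.

```shell
# Hypothetical helper: print only the aggregate length in bytes
# (the first column of `hdfs dfs -du -s`) so it can be used in scripts.
hdfs_aggregate_bytes() {
  hdfs dfs -du -s "$1" | awk 'NR == 1 { print $1 }'
}

# Illustrative use: warn when a file exceeds 1 GiB (1073741824 bytes).
#   size=$(hdfs_aggregate_bytes /user/root/flights_data.txt)
#   [ "$size" -gt 1073741824 ] && echo "larger than 1 GiB"
```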

If we wish to display the sizes in a more readable format (for example, 64.0m instead of 67108864), we pass the “-h” option. The syntax is:

hdfs dfs -du -h <file path>

For example, let us look at the aggregate length of the “flights_data.txt” file. The output in the picture below shows the difference in how the result is displayed when using “-dus” and “-du -h”.
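The “-h” option scales byte counts by powers of 1024 (67108864 bytes is 64 × 1024², hence 64.0m). The awk sketch below approximates that conversion so you can see how a raw “-du -s” figure maps to a readable one; the function name `human_size` is hypothetical, and Hadoop's exact formatting (unit letters, spacing) may differ.

```shell
# Approximation of what -h does: divide by 1024 until the value drops
# below 1024, then attach a binary unit. Not Hadoop's exact formatting.
human_size() {
  awk -v b="$1" 'BEGIN {
    b = b + 0                      # force numeric comparison
    split("B K M G T P", unit, " ")
    i = 1
    while (b >= 1024 && i < 6) { b = b / 1024; i++ }
    fmt = (i == 1) ? "%d %s\n" : "%.1f %s\n"
    printf fmt, b, unit[i]
  }'
}

# Example: human_size 67108864 prints "64.0 M"
```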