How to query JSON data from the table in Snowflake

This recipe shows how to load JSON data into a table in Snowflake and query it.

Recipe Objective: How to query JSON data from the table in Snowflake?

Snowflake is an enterprise-ready cloud data warehouse that aims for simplicity without sacrificing features. It scales up and down automatically to balance performance against cost. Its best-known design choice is separating compute from storage. This matters because databases that couple the two, Redshift's original node types among them, force you to size for your largest workload and pay for that capacity even when it sits idle. In this scenario, we will learn how to load a JSON file into a target table in Snowflake and then query the JSON data.


Table of Contents

- Recipe Objective: How to query JSON data from the table in Snowflake?

- System requirements:

- Step 1: Log in to the account

- Step 2: Select Database

- Step 3: Create File Format for JSON

- Step 4: Create an Internal stage

- Step 5: Create Table in Snowflake using Create Statement

- Step 6: Load JSON file to internal stage

- Step 7: Copy the data into Target Table

- Step 8: Querying the data directly

- Step 9: Querying the JSON object

- Conclusion

System requirements:

- Steps to create Snowflake account Click Here

- Steps to connect to Snowflake by CLI Click Here

Step 1: Log in to the account

We need to log in to the Snowflake account. Go to snowflake.com and log in with your credentials. If you have not set up an account yet, follow the guides listed under System requirements.

Step 2: Select Database

To select the database that you created earlier, we use the "use" statement.

Syntax of the statement:

use database <database-name>;

Example of the statement:

use database demo_db;

The output of the statement:
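To confirm that the session now points at the intended database, a quick check along these lines can help. It uses Snowflake's built-in context functions and assumes nothing beyond the demo_db database from the example above.

```sql
-- Show the database and schema that unqualified object names will resolve to.
select current_database() as active_database,
       current_schema()   as active_schema;
```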

Step 3: Create File Format for JSON

Creates a named file format that describes a set of staged data to access or load into Snowflake tables.

Syntax of the statement:

CREATE [ OR REPLACE ] FILE FORMAT [ IF NOT EXISTS ] <name>

TYPE = { CSV | JSON | AVRO | ORC | PARQUET | XML } [ formatTypeOptions ]

[ COMMENT = '<string_literal>' ]

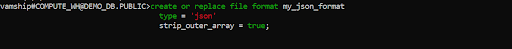

Example of the statement:

create or replace file format my_json_format

type = 'json'

strip_outer_array = true;

The output of the statement:
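The strip_outer_array = true option tells Snowflake to drop the outer [ ] brackets of a JSON array, so each element of the array is loaded as its own row instead of the whole file becoming one row. To inspect the file format after creating it, a minimal sketch with the standard SHOW and DESCRIBE commands could look like this:

```sql
-- List the file format created above.
show file formats like 'my_json_format';

-- Show its properties, including the STRIP_OUTER_ARRAY setting.
describe file format my_json_format;
```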

Step 4: Create an Internal stage

Here we create a temporary internal stage named custome_temp_int_stage that uses the JSON file format defined in Step 3.

Syntax of the statement:

-- Internal stage

CREATE [ OR REPLACE ] [ TEMPORARY ] STAGE [ IF NOT EXISTS ] <internal_stage_name>

[ FILE_FORMAT = ( { FORMAT_NAME = '<file_format_name>' | TYPE = { CSV | JSON | AVRO | ORC | PARQUET | XML } [ formatTypeOptions ] } ) ]

[ COPY_OPTIONS = ( copyOptions ) ]

[ COMMENT = '<string_literal>' ]

Example of the statement:

create temporary stage custome_temp_int_stage

file_format = my_json_format;

The output of the statement:

Step 5: Create Table in Snowflake using Create Statement

Here we create a temporary table with a single VARIANT column, the Snowflake data type that can hold semi-structured data such as JSON. The Create statement creates a new table in the current or specified schema, or replaces an existing table of the same name.

Syntax of the statement:

CREATE [ OR REPLACE ] TABLE <table_name> ( <col_name> <col_type> [ , <col_name> <col_type> , ... ] );

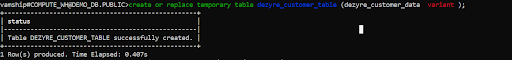

Example of the statement:

create or replace temporary table dezyre_customer_table (dezyre_customer_data variant );

The output of the statement:

Step 6: Load JSON file to internal stage

Here we upload the JSON data file from the local system to the Snowflake internal stage with the put command, run from the command-line client mentioned under System requirements.

Example of the statement:

put file://D:\customer.json @custome_temp_int_stage;

The output of the statement:
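Before copying, it can be useful to confirm that the file reached the stage and to preview its contents. The sketch below assumes the stage from Step 4 still exists in the current session (a temporary stage is dropped when the session ends) and that it holds only the uploaded customer file.

```sql
-- List the staged files. PUT compresses uploads with gzip by default,
-- so the file is expected to appear as customer.json.gz.
list @custome_temp_int_stage;

-- Preview a few staged records without loading them.
-- $1 is the parsed JSON value, read with the stage's file format.
select $1 from @custome_temp_int_stage limit 5;
```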

Step 7: Copy the data into Target Table

Here we copy the JSON data that we uploaded to the internal stage in the previous step into the target table, as shown below.

Example of the statement:

copy into dezyre_customer_table

from @custome_temp_int_stage/customer.json

on_error = 'skip_file';

The output of the statement:
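Because on_error = 'skip_file' skips a file that contains errors instead of failing the statement, a load can finish with no rows in the table. One way to check the file first is a validation run, sketched here on the assumption that the stage and table from the earlier steps exist in the current session. With validation_mode set, the copy command reports problems and loads nothing.

```sql
-- Dry run: report parsing errors in the staged file without loading any rows.
copy into dezyre_customer_table
from @custome_temp_int_stage/customer.json
validation_mode = 'RETURN_ERRORS';
```

If the validation run returns no errors, running the copy statement without validation_mode performs the actual load.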

Step 8: Querying the data directly

Here we will verify the data loaded into the target table by running a select query as shown below.

Example of the statement:

SELECT * from DEZYRE_CUSTOMER_TABLE;

The output of the statement:
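Beyond selecting every row, two small checks can show whether the load produced the expected shape. This is a sketch that assumes the table and column names from Step 5. The row count should match the number of elements in the file's outer array when strip_outer_array = true is in effect.

```sql
-- Number of rows loaded into the target table.
select count(*) as row_count
from dezyre_customer_table;

-- Top-level JSON type stored in each row (for example OBJECT or ARRAY).
select typeof(dezyre_customer_data) as json_type,
       count(*)                     as row_count
from dezyre_customer_table
group by json_type;
```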

Step 9: Querying the JSON object

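Here we query the JSON object with a select statement. A colon (:) after the column name reaches a top-level key, dots reach nested keys, and :: casts the extracted value to a SQL type.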

Example of the statement:

SELECT DEZYRE_CUSTOMER_DATA,

DEZYRE_CUSTOMER_DATA:address.city::string as City,

DEZYRE_CUSTOMER_DATA:address.state::string as state,

DEZYRE_CUSTOMER_DATA:address.streetAddress::string as streetNo

from DEZYRE_CUSTOMER_TABLE;

The output of the query: we select individual attributes from the JSON object and return each one as its own column.
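The same path notation can be tried without any staged file by building a VARIANT value inline with parse_json. In the sketch below the sample document and its values are hypothetical, made up only to mirror the address fields used in the query above. Note that key names in the path are case-sensitive, so address.City would not match a key stored as city.

```sql
-- Hypothetical sample record; the field values are invented for illustration.
with sample_customer as (
    select parse_json('{
        "firstName": "John",
        "address": {
            "streetAddress": "21 2nd Street",
            "city": "New York",
            "state": "NY"
        }
    }') as dezyre_customer_data
)
select dezyre_customer_data:firstName::string             as first_name,
       dezyre_customer_data:address.city::string          as city,
       dezyre_customer_data:address.state::string         as state,
       dezyre_customer_data:address.streetAddress::string as street_no
from sample_customer
-- Extracted values can also be used in filters once they are cast.
where dezyre_customer_data:address.state::string = 'NY';
```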

Conclusion

Here we learned to query JSON data from the table in Snowflake.