How to return checksum information of files in HDFS

This recipe helps you return checksum information of files in HDFS

Recipe Objective: How to return checksum information of files in HDFS?

Data Integrity in HDFS. A checksum is a small block of data derived from another block of digital data in order to detect errors introduced during transmission or storage. Checksums are commonly used to verify data integrity, but they are not relied upon to verify data authenticity. HDFS transparently checksums all data written to it and, by default, verifies checksums when reading data. A separate checksum is created for every dfs.bytes-per-checksum bytes of data (512 bytes by default). In this recipe, we see how to return checksum information of files in HDFS.
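To make the per-chunk checksumming concrete, here is a rough sketch of the storage overhead it implies. It assumes the default dfs.bytes-per-checksum of 512 bytes and a 4-byte CRC per chunk; the function name checksum_overhead_bytes is made up for illustration and is not part of Hadoop.

```shell
# Hypothetical helper: estimate how many bytes of CRC data HDFS keeps for a file.
# Assumption: one 4-byte CRC per chunk of dfs.bytes-per-checksum bytes (default 512).
checksum_overhead_bytes() {
  local file_size=$1
  local bytes_per_checksum=${2:-512}
  local crc_size=4
  # Round up: a partial final chunk still gets its own checksum.
  local chunks=$(( (file_size + bytes_per_checksum - 1) / bytes_per_checksum ))
  echo $(( chunks * crc_size ))
}

# Example: a 1 MiB file has 2048 chunks of 512 bytes each.
checksum_overhead_bytes 1048576   # prints 8192
```

Under these assumptions the checksum data stays below 1% of the file size (4 bytes for every 512 bytes).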


Prerequisites:

Before proceeding with the recipe, make sure a single-node Hadoop installation is set up on your EC2 instance.

Steps to set up an environment:

- In AWS, create an EC2 instance and log in to Cloudera Manager using the instance's public IP. Log in through PuTTY or a terminal and check whether Hadoop is installed. If it is not, install it first, as described in the prerequisites.

- Enter “<your public IP>:7180” in the web browser and log in to Cloudera Manager, where you can check whether Hadoop is installed.

- If the Hadoop services are not visible in the Cloudera cluster, add them by clicking “Add Services” in the cluster and selecting the required services for your instance.
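The terminal check mentioned in the steps above could look roughly like the following. This is a minimal sketch: have_cmd is a hypothetical helper name, and it only confirms that the hdfs client is on the PATH, not that the cluster services are running.

```shell
# Hypothetical helper: report whether a command is available on the PATH.
have_cmd() {
  command -v "$1" >/dev/null 2>&1
}

if have_cmd hdfs; then
  # "hdfs version" prints the installed Hadoop version.
  hdfs version
else
  echo "hdfs not found on PATH; install Hadoop before continuing"
fi
```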

Returning checksum information of a file in HDFS:

Let us take a file in the root directory of HDFS as an example and find its checksum information. First, list the files in that directory:

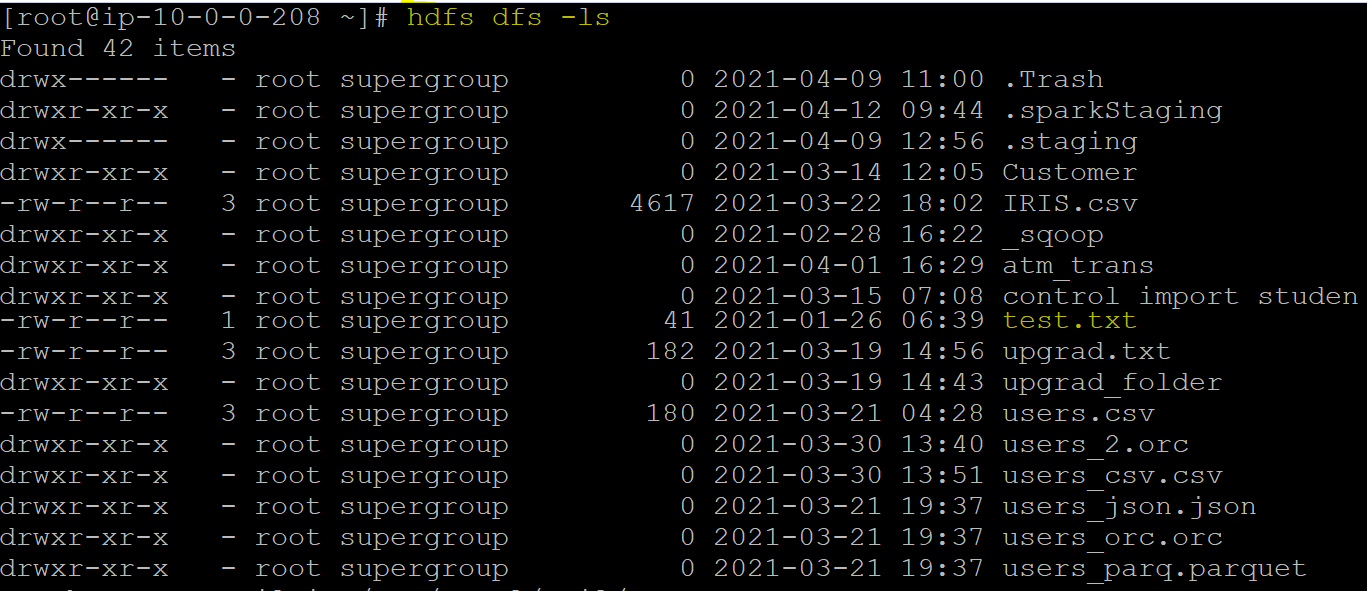

hdfs dfs -ls /

It returns the list of files present in the HDFS root directory (without a path argument, the command lists the current user's HDFS home directory instead). The output looks similar to:

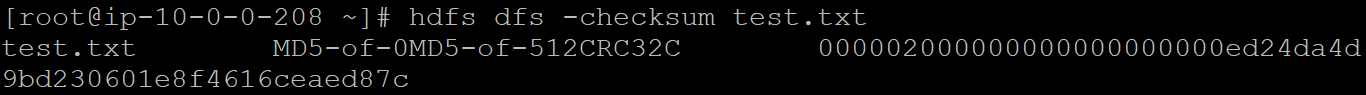

The checksum of a file can be returned using the command:

hdfs dfs -checksum <file>
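A common use of this command is confirming that two copies of a file in HDFS are identical. The sketch below assumes each output line has the form path, algorithm name, and hex digest separated by tabs; checksum_digest and same_hdfs_checksum are hypothetical helper names, and the paths in the usage comment are placeholders.

```shell
# Hypothetical helper: pull the hex digest out of one line of
# "hdfs dfs -checksum" output, assumed to be: <path> TAB <algorithm> TAB <hex digest>.
checksum_digest() {
  awk -F '\t' '{ print $3 }'
}

# Hypothetical helper: succeed only if two HDFS files report the same digest.
same_hdfs_checksum() {
  local a b
  a=$(hdfs dfs -checksum "$1" | checksum_digest)
  b=$(hdfs dfs -checksum "$2" | checksum_digest)
  # An empty digest (for example, a missing file) never counts as a match.
  [ -n "$a" ] && [ "$a" = "$b" ]
}

# Usage (paths are placeholders):
#   same_hdfs_checksum /test.txt /backup/test.txt && echo "checksums match"
```

Note that the default HDFS file checksum depends on the block size and bytes-per-checksum settings, so two files with identical contents can report different digests if they were written with different settings.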

Let’s find the checksum of the “test.txt” file in our root directory. The output is given below.