How to archive file partitions for file count reduction in Hive

This recipe helps you archive file partitions for file count reduction in Hive

Recipe Objective: How to archive file partitions for file count reduction in Hive?

The number of files in HDFS directly affects memory consumption on the NameNode, because the NameNode keeps the metadata for every file in memory. One way to reduce the number of files in partitions is Hive's built-in support for converting the files in existing partitions into a Hadoop Archive (HAR). In this recipe, we learn how to archive partitions in Hive to reduce the file count.
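To make the NameNode memory argument concrete, the Python sketch below estimates heap usage for a partition before and after archiving. It assumes the commonly quoted rule of thumb of roughly 150 bytes of NameNode memory per file, directory, or block, and that every small file occupies exactly one block. A HAR stores its metadata in `_index` and `_masterindex` files plus one or more `part-*` data files. `namenode_bytes` and `har_bytes` are hypothetical helpers, and the results are rough estimates, not measurements.

```python
# Rough NameNode heap estimate. ASSUMPTION: ~150 bytes per namespace object
# (file, directory or block); this is a rule of thumb, not an exact figure.
BYTES_PER_OBJECT = 150


def namenode_bytes(num_files: int, blocks_per_file: int = 1, num_dirs: int = 1) -> int:
    """Estimate heap used by `num_files` files spread over `num_dirs` directories."""
    objects = num_files + num_files * blocks_per_file + num_dirs
    return objects * BYTES_PER_OBJECT


def har_bytes(num_part_files: int = 1) -> int:
    """Estimate heap after archiving into a single HAR directory."""
    # A HAR holds _index and _masterindex (metadata) plus part-* data files;
    # each is assumed here to fit in one block.
    har_files = 2 + num_part_files
    return namenode_bytes(har_files, blocks_per_file=1, num_dirs=1)


if __name__ == "__main__":
    before = namenode_bytes(10_000)  # hypothetical partition with 10,000 small files
    after = har_bytes()
    print(f"before archiving: {before:,} bytes, after archiving: {after:,} bytes")
```

The point is the ratio rather than the absolute numbers: thousands of small files collapse into a handful of HAR files, so the per-object overhead shrinks accordingly.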


Prerequisites:

Before proceeding with the recipe, make sure single-node Hadoop and Hive are installed on your EC2 instance. If they are not already installed, follow the link below to install them.

Steps to set up an environment:

- In AWS, create an EC2 instance and note its public IP. Log in to the instance with PuTTY or a terminal and check whether HDFS and Hive are installed. If they are not, use the installation links provided above.

- Type “<your public IP>:7180” in a web browser and log in to Cloudera Manager, where you can check whether Hadoop is installed.

- If HDFS and Hive are not visible in the Cloudera cluster, click “Add Services” in the cluster to add the required services to your instance.
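As an alternative to checking in Cloudera Manager, you can confirm from the terminal that the `hdfs` and `hive` command-line clients are on your `PATH`. The Python sketch below relies only on `shutil.which`; it assumes the clients are installed under the names `hdfs` and `hive`, which is typical for a CDH host but may differ on your setup. `find_cli_tools` is a hypothetical helper.

```python
import shutil


def find_cli_tools(tools=("hdfs", "hive")):
    """Map each tool name to its absolute path, or None if it is not on PATH."""
    return {tool: shutil.which(tool) for tool in tools}


if __name__ == "__main__":
    for tool, path in find_cli_tools().items():
        # A missing entry usually means the client is not installed on this host
        print(f"{tool}: {path if path else 'NOT FOUND on PATH'}")
```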

Archiving for file count reduction in Hive:

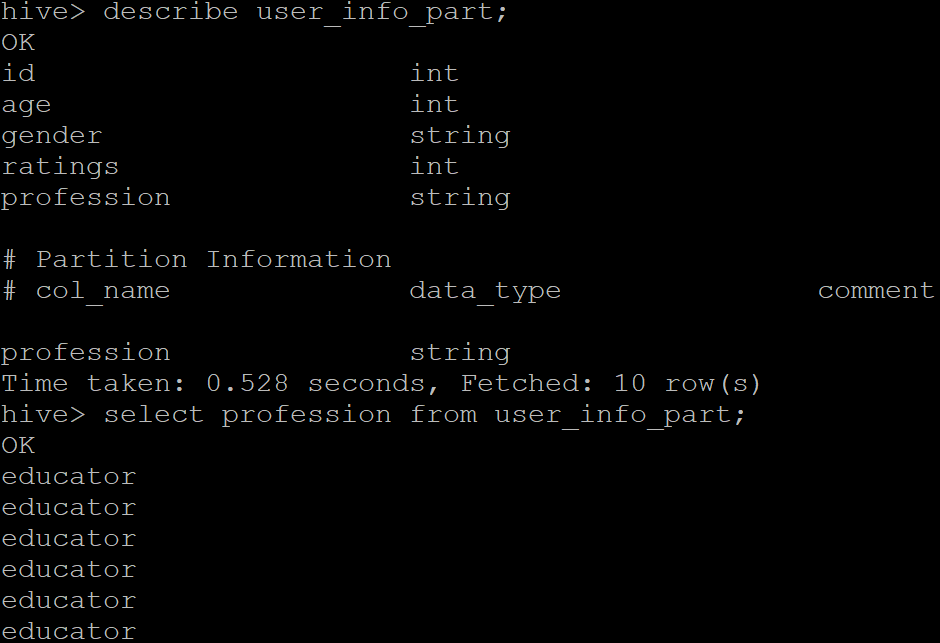

In this recipe, we use the “user_info_part” table, which is partitioned on the “profession” column of the user data. Let us first describe the partitioned table and check the data present in it.
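Hive stores each partition of a table as a subdirectory named `column=value`, so the number of files per partition can be checked directly on the file system. The Python sketch below counts files per partition in a local directory tree. `files_per_partition` and `build_sample_table` are hypothetical helpers, and the sample layout only imitates `user_info_part` (the partition values and file counts are made up). On a cluster you would point it at a local copy of the table directory, or run `hdfs dfs -count` on the partition path instead.

```python
import os
import tempfile
from collections import Counter


def files_per_partition(table_dir: str) -> Counter:
    """Count files under each `column=value` partition directory of a table."""
    counts = Counter()
    for entry in sorted(os.listdir(table_dir)):
        part_path = os.path.join(table_dir, entry)
        # Hive lays out each partition as a subdirectory named column=value
        if os.path.isdir(part_path) and "=" in entry:
            for _root, _dirs, files in os.walk(part_path):
                counts[entry] += len(files)
    return counts


def build_sample_table(root: str) -> str:
    """Create a hypothetical local layout that mimics user_info_part."""
    table_dir = os.path.join(root, "user_info_part")
    for partition, n_files in (("profession=doctor", 1), ("profession=engineer", 3)):
        part_dir = os.path.join(table_dir, partition)
        os.makedirs(part_dir)
        for i in range(n_files):
            # File names imitate Hive's 000000_0-style output files
            open(os.path.join(part_dir, f"{i:06d}_0"), "w").close()
    return table_dir


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        print(files_per_partition(build_sample_table(tmp)))
```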

First, make sure the following settings are configured before archiving:

hive> set hive.archive.enabled=true;

hive> set hive.archive.har.parentdir.settable=true;

hive> set har.partfile.size=1099511627776;
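`har.partfile.size` is given in bytes, and 1099511627776 is 1024^4, i.e. 1 TiB. According to the Hive archiving documentation, an archive contains roughly the partition size divided by `har.partfile.size` part files, rounded up, so a large value keeps the file count low at the cost of fewer mappers and a longer archiving job. The Python sketch below renders the three settings as `set` statements and estimates the part-file count; `ARCHIVE_SETTINGS`, `settings_preamble`, and `expected_part_files` are hypothetical names.

```python
# The three session settings from this recipe; har.partfile.size is in bytes.
ARCHIVE_SETTINGS = {
    "hive.archive.enabled": "true",
    "hive.archive.har.parentdir.settable": "true",
    "har.partfile.size": str(1024 ** 4),  # 1099511627776 bytes = 1 TiB
}


def settings_preamble(settings=ARCHIVE_SETTINGS) -> str:
    """Render the settings as HiveQL `set` statements, one per line."""
    return "\n".join(f"set {key}={value};" for key, value in settings.items())


def expected_part_files(partition_bytes: int, partfile_size: int = 1024 ** 4) -> int:
    """Approximate number of part-* files: partition size / part size, rounded up."""
    return -(-partition_bytes // partfile_size)  # integer ceiling division


if __name__ == "__main__":
    print(settings_preamble())
    print(expected_part_files(5 * 1024 ** 3))  # a hypothetical 5 GiB partition
```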

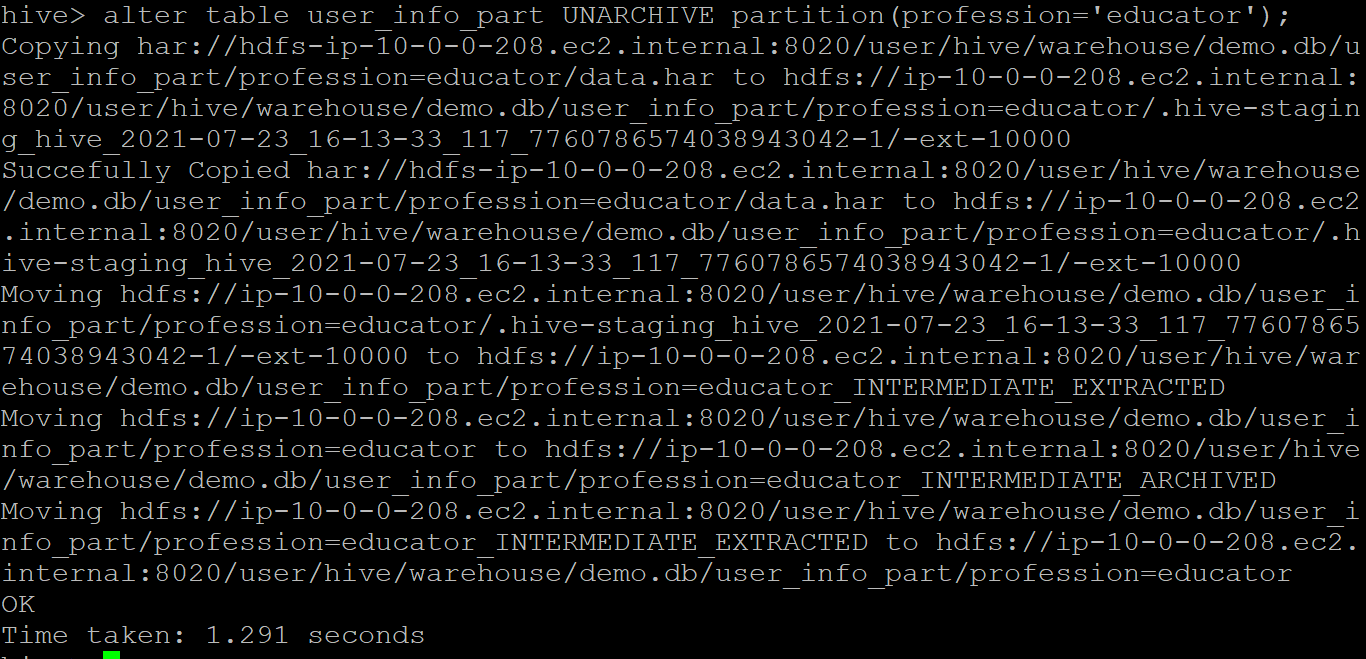

A partition is archived with the “ARCHIVE” keyword of the “alter table” command. Once the command is issued, a MapReduce job performs the archiving. Unlike regular Hive queries, the CLI shows no output indicating the progress of this job. The syntax for archiving is given below:

alter table <table_name> ARCHIVE partition (<partition_column>='<value>');
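Writing the partition specification by hand is error-prone when several partitions need archiving. The Python sketch below builds the ARCHIVE (or UNARCHIVE) statement from a dictionary of partition columns. `partition_spec` and `alter_partition` are hypothetical helpers, and the value `engineer` is only an example. Every value is quoted as a string literal with backslash escaping, which is an assumption that suits the string-typed `profession` column.

```python
def partition_spec(spec: dict) -> str:
    """Render {'profession': 'engineer'} as profession='engineer'."""
    parts = []
    for column, value in spec.items():
        # Escape backslashes first, then single quotes, for a HiveQL string literal
        escaped = str(value).replace("\\", "\\\\").replace("'", "\\'")
        parts.append(f"{column}='{escaped}'")
    return ", ".join(parts)


def alter_partition(table: str, spec: dict, action: str = "ARCHIVE") -> str:
    """Build an ALTER TABLE ... ARCHIVE/UNARCHIVE PARTITION statement."""
    if action not in ("ARCHIVE", "UNARCHIVE"):
        raise ValueError(f"unsupported action: {action}")
    return f"ALTER TABLE {table} {action} PARTITION ({partition_spec(spec)});"


if __name__ == "__main__":
    print(alter_partition("user_info_part", {"profession": "engineer"}))
```

For a table partitioned on several columns, pass all of them in the dictionary; insertion order is preserved, so list the columns in the table's partition order.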

If necessary, the partition can be reverted to its original files with the “UNARCHIVE” command, which takes the same form with UNARCHIVE in place of ARCHIVE. The sample output is given below.
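Because `set` statements apply only to the current Hive session, one way to script archiving or unarchiving is to pass the settings and the ALTER TABLE statement together to `hive -e`. The Python sketch below assumes the `hive` CLI is on your `PATH`; `hive_command` and `run` are hypothetical helpers, and `run` only prints the command unless `dry_run=False` is passed.

```python
import shlex
import subprocess


def hive_command(statements, settings=None):
    """Build an argv list that runs the settings and statements in one hive session."""
    lines = [f"set {key}={value};" for key, value in (settings or {}).items()]
    lines.extend(statements)
    return ["hive", "-e", "\n".join(lines)]


def run(statements, settings=None, dry_run=True):
    """Print the command (dry run) or execute it, raising if hive exits non-zero."""
    cmd = hive_command(statements, settings)
    if dry_run:
        print(shlex.join(cmd))
        return None
    return subprocess.run(cmd, check=True)


if __name__ == "__main__":
    # Hypothetical example: revert the archived "engineer" partition
    run(
        ["ALTER TABLE user_info_part UNARCHIVE PARTITION (profession='engineer');"],
        settings={"hive.archive.enabled": "true"},
    )
```

Keeping the dry run as the default makes it easy to review the generated statements before launching the MapReduce job.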