How to check consumer position in Kafka

Recipe Objective: How to check consumer position in Kafka?

In this recipe, we see how to check consumer position in Kafka.

Prerequisites:

Before proceeding with the recipe, make sure a Kafka cluster and Zookeeper are set up on your EC2 instance. If they are not, follow the link below for the installation steps.

- Kafka cluster and Zookeeper set up - click here

Steps to verify the installation:

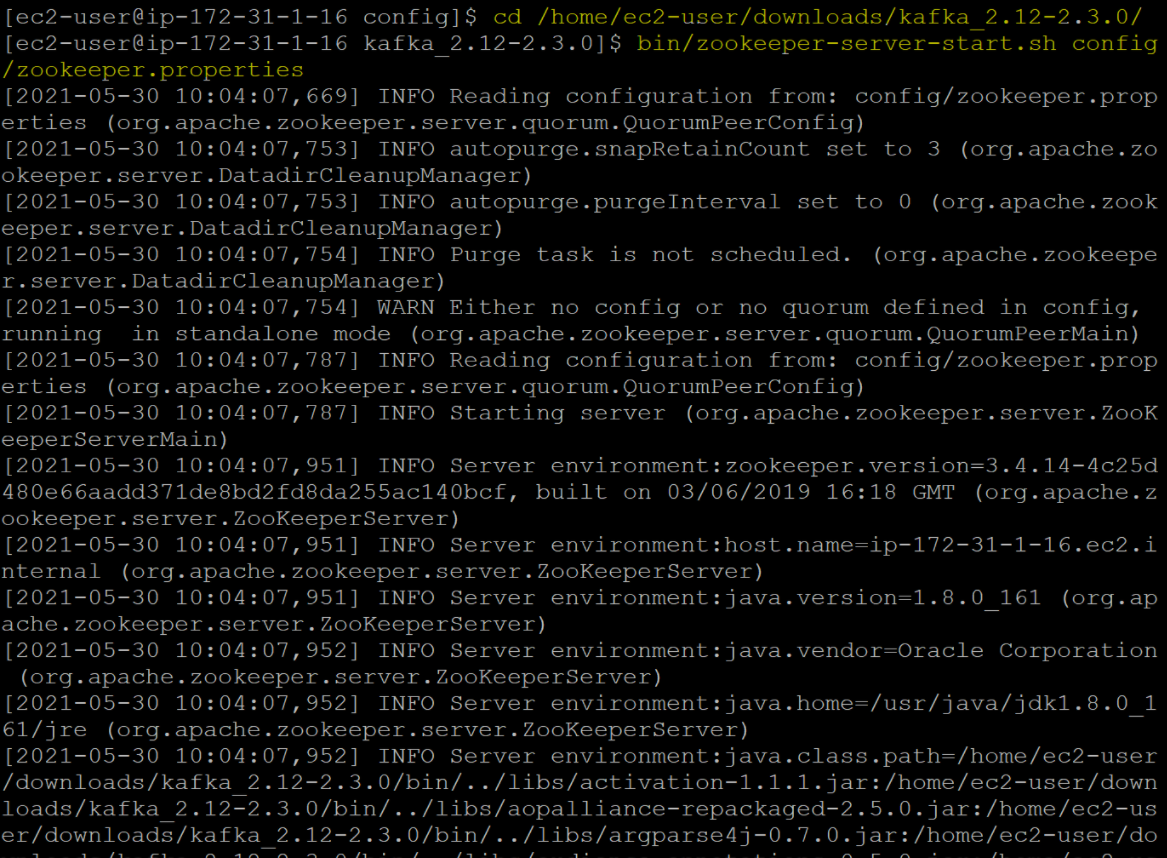

To verify the Zookeeper installation, follow the steps listed below.

- Go to the Kafka directory using the cd kafka_2.12-2.3.0/ command, then start the Zookeeper server using the bin/zookeeper-server-start.sh config/zookeeper.properties command. You should get the following output.

Verifying Kafka installation:

Before going through this step, please ensure that the Zookeeper server is running. To verify the Kafka installation, follow the steps listed below:

- Leave the previous terminal window as it is and log in to your EC2 instance using another terminal.

- Go to the Kafka directory using the cd downloads/kafka_2.12-2.3.0 command.

- Start the Kafka server using the bin/kafka-server-start.sh config/server.properties command.

- You should see output that includes a message like "INFO [KafkaServer id=0] started (kafka.server.KafkaServer)".

Checking consumer position in Kafka:

Kafka maintains a numerical offset for each record in a partition. This offset acts as a unique identifier of a record within that partition and also denotes the consumer's position in the partition. There are two notions of position relevant to the user of the consumer. The first is the position of the consumer, which gives the offset of the next record that will be given out. It is one larger than the highest offset the consumer has seen in that partition. The second is the committed position, which is the last offset that has been stored securely. If the process fails and restarts, this is the offset that the consumer will recover to. For example, a consumer at position 5 has consumed records with offsets 0 through 4 and will next receive the record with offset 5. The committed position can lag behind the current position, because it only advances when offsets are committed.
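The difference between the two positions can be sketched with a small in-memory stand-in for a consumer. This is only an illustration, not a real Kafka client: FakeConsumer, poll_records, and describe_position are hypothetical names made up for this sketch, and TopicPartition here is a local namedtuple rather than a class from a Kafka library.

```python
from collections import namedtuple

# Local stand-in for a (topic, partition) pair; hypothetical, not a library import.
TopicPartition = namedtuple("TopicPartition", ["topic", "partition"])


class FakeConsumer:
    """In-memory stand-in that tracks a position and a committed position."""

    def __init__(self):
        self._position = {}
        self._committed = {}

    def poll_records(self, tp, count):
        """Pretend to consume `count` records; the position moves past them."""
        self._position[tp] = self._position.get(tp, 0) + count

    def commit(self, tp):
        """Store the current position as the committed position."""
        self._committed[tp] = self._position.get(tp, 0)

    def position(self, tp):
        """Offset of the next record that will be given out."""
        return self._position.get(tp, 0)

    def committed(self, tp):
        """Last committed offset, or None if nothing has been committed."""
        return self._committed.get(tp)


def describe_position(consumer, tp):
    """Return (position, committed, pending) for one partition.

    `pending` counts records consumed since the last commit,
    or is None when no commit exists yet.
    """
    pos = consumer.position(tp)
    com = consumer.committed(tp)
    pending = None if com is None else pos - com
    return pos, com, pending


tp = TopicPartition("demo-topic", 0)
consumer = FakeConsumer()
consumer.poll_records(tp, 3)   # consumed offsets 0..2
consumer.commit(tp)            # committed position becomes 3
consumer.poll_records(tp, 2)   # consumed offsets 3..4, not yet committed
print(describe_position(consumer, tp))  # (5, 3, 2)
```

If the process restarted at this point, it would resume from offset 3 and see offsets 3 and 4 again. The helper only calls position() and committed(), so it should also work with a real client object that exposes methods with those names and a compatible partition argument, such as the consumer in the kafka-python library. That is an assumption to verify against the client you actually use.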

The two main settings affecting offset management are whether auto-commit is enabled and the offset reset policy. First, if you set enable.auto.commit to true, the consumer will automatically commit offsets periodically at the interval set by auto.commit.interval.ms. The default interval is 5 seconds. Second, use auto.offset.reset to define the consumer's behavior when there is no committed position or when an offset is out of range. "earliest" moves to the oldest available message, and "latest" moves to the most recent. The default is "latest". You can also select "none" if you would rather set the initial offset yourself and are willing to handle out-of-range errors manually; with "none", the consumer raises an exception when no valid committed offset is found. Any other value is rejected with an exception.
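The reset policy described above can be sketched as a small decision function. This is a simplified model of the behavior, not Kafka's implementation: resolve_start_offset and NoValidOffsetError are hypothetical names, and log_start and log_end stand for the oldest available offset and the offset the next produced record would receive. The dict uses the real property names with the values discussed in the text.

```python
# Consumer settings from the text, written as a plain dict.
consumer_config = {
    "enable.auto.commit": True,        # commit offsets automatically
    "auto.commit.interval.ms": 5000,   # every 5 seconds
    "auto.offset.reset": "latest",     # policy when no valid offset exists
}


class NoValidOffsetError(Exception):
    """Hypothetical error for policy 'none' when no usable offset exists."""


def resolve_start_offset(committed, log_start, log_end, policy="latest"):
    """Pick the offset a consumer would start from (simplified model).

    committed: last committed offset, or None if there is none
    log_start: oldest offset still available in the partition
    log_end:   offset the next produced record would receive
    """
    # A committed offset inside the available range is used as-is.
    if committed is not None and log_start <= committed <= log_end:
        return committed
    # Otherwise the reset policy decides.
    if policy == "earliest":
        return log_start
    if policy == "latest":
        return log_end
    if policy == "none":
        raise NoValidOffsetError("no valid committed offset and policy is 'none'")
    raise ValueError(f"unsupported auto.offset.reset value: {policy!r}")


policy = consumer_config["auto.offset.reset"]
print(resolve_start_offset(None, 10, 50, policy))      # 50: no commit, jump to the end
print(resolve_start_offset(None, 10, 50, "earliest"))  # 10: no commit, start from the oldest
print(resolve_start_offset(42, 10, 50, policy))        # 42: valid commit wins
print(resolve_start_offset(3, 10, 50, "earliest"))     # 10: offset 3 is out of range
```

The last call models the out-of-range case: the committed offset 3 points at a record that is no longer available, so the policy is applied just as if no commit existed.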
