Display free space and sizes of files in HDFS

This recipe helps you display free space and sizes of files and directories contained in the given directory in HDFS.

Recipe Objective: How to display free space and sizes of files and directories contained in the given directory in HDFS?

It is essential to keep track of the available free space and the sizes of the files and directories stored in HDFS. In this recipe, we learn how to find these values for a given directory in HDFS.


Prerequisites:

Before proceeding with the recipe, make sure single-node Hadoop is installed on your EC2 instance.

Steps to set up an environment:

- In AWS, create an EC2 instance and log in to Cloudera Manager using the public IP of the instance. Log in through PuTTY or a terminal and check whether Hadoop is installed. If it is not installed, install it as described in the prerequisites before continuing.

- Type "<your public IP>:7180" in the web browser and log in to Cloudera Manager, where you can check if Hadoop is installed.

- If the required services are not visible in the Cloudera cluster, you can add them to your instance by clicking "Add Services" in the cluster.
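The terminal check mentioned in the first step can be sketched as follows. This is a minimal example, assuming the Hadoop client tools are expected on the `PATH`; the helper name `check_tool` is hypothetical and only used for illustration.

```shell
# Hypothetical helper: returns success (exit status 0) if the given
# command is available on the PATH.
check_tool() {
    command -v "$1" >/dev/null 2>&1
}

# Report whether the hadoop and hdfs clients can be found.
for tool in hadoop hdfs; do
    if check_tool "$tool"; then
        echo "$tool: found at $(command -v "$tool")"
    else
        echo "$tool: not found on PATH"
    fi
done

# Print the installed Hadoop version when the client is available.
if check_tool hadoop; then
    hadoop version
fi
```

If either tool is reported as missing, the installation or the `PATH` setting is the first thing to check before moving on.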

Displaying free space & sizes of files and directories contained in the given directory:

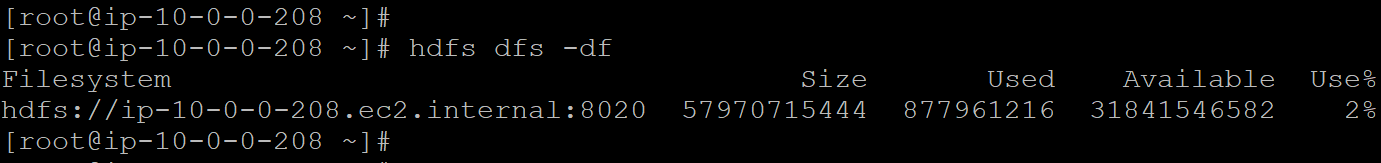

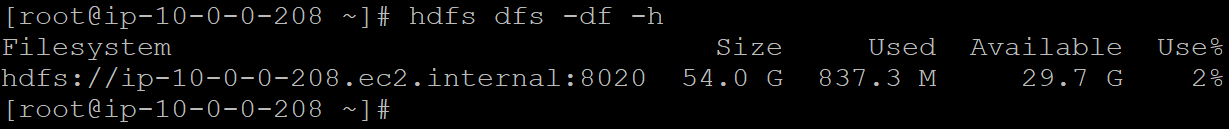

The "-df" option of the hdfs dfs command displays the capacity, the used space, and the available free space of the HDFS file system. The syntax for the same is:

hdfs dfs -df

The screenshot shows the result of this command, which lists the file system, its total size, the space used, the space available, and the percentage of space used.
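As a minimal sketch of how this command might be used in practice, the snippet below prints the report in human-readable units with the `-h` option and then extracts only the usage percentage from the last column. The helper name `df_use_percent` and the path `/` are assumptions chosen for illustration, and the helper assumes the layout described above: a header line followed by one row per file system.

```shell
# Hypothetical helper (not part of Hadoop): reads `hdfs dfs -df` output on
# stdin and prints the Use% value, without the % sign, for each data row.
df_use_percent() {
    # NR > 1 skips the header line; Use% is the last field of each row.
    awk 'NR > 1 { sub(/%$/, "", $NF); print $NF }'
}

# Run only when an hdfs client is available on this machine.
if command -v hdfs >/dev/null 2>&1; then
    hdfs dfs -df -h /                 # human-readable sizes for the root path
    hdfs dfs -df / | df_use_percent   # usage percentage only
fi
```

Reading the last field rather than a fixed column number keeps the helper working with `-h` output as well, where a size and its unit may be separated by a space.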

Using "-count": We can pass the paths of the required files or directories to this command, which returns output with the columns "DIR_COUNT", "FILE_COUNT", "CONTENT_SIZE", and "FILE_NAME". The syntax for the same is:

hdfs dfs -count <path>
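As a rough sketch of the "-count" option in use, the snippet below runs it against a directory and reduces each row to the path and its content size. The helper name `count_sizes` and the path `/user` are placeholders chosen for illustration. The helper assumes the four-column layout listed above and paths that contain no spaces.

```shell
# Hypothetical helper (not part of Hadoop): reads `hdfs dfs -count` output on
# stdin and prints "<path> <content size>" for each row.
# Assumes four whitespace-separated columns and paths without spaces.
count_sizes() {
    awk 'NF >= 4 { print $4, $3 }'
}

# Run only when an hdfs client is available on this machine.
if command -v hdfs >/dev/null 2>&1; then
    # /user is a placeholder path; replace it with your own directory.
    hdfs dfs -count /user | count_sizes
    # -h prints sizes in human-readable units instead of bytes.
    hdfs dfs -count -h /user
fi
```

The helper is applied only to the plain output, where the content size is a single number in bytes. With `-h`, the size and its unit may be split into separate fields.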

This is how we display the space and size-related information about the files and directories in a given directory in HDFS.