Explain the Usage of PySpark MapType Dict in Databricks

This recipe will help you master PySpark MapType Dict in Databricks, equipping you with the knowledge to optimize your data processing workflows.

Recipe Objective - Explain the Usage of PySpark Map Dictionary in Databricks

PySpark is a powerful tool in the world of data processing, and it comes with an interesting feature called MapType Dict. This feature is quite noteworthy as it provides a flexible and efficient solution for handling structured data. In this recipe, our aim is to explore the functionalities of PySpark's MapType Dict in a simple yet detailed manner. We'll specifically look into its versatile applications, with a focus on how it can be used effectively within the Databricks environment.

Table of Contents

- Recipe Objective - Explain the Usage of PySpark Map Dictionary in Databricks

- What is MapType in PySpark?

- Why Choose PySpark MapType Dict in Databricks?

- How to Use PySpark MapType Dict in Databricks?

- System Requirements

- Practical Applications of PySpark MapType Dict in Databricks

- Expand PySpark Understanding with ProjectPro!

What is MapType in PySpark?

MapType (also called the map type) is the PySpark data type for columns that hold key-value pairs, much like a Python dictionary (dict). A MapType object has three fields: keyType (a DataType), valueType (a DataType), and valueContainsNull (a boolean). MapType extends DataType, the superclass of all PySpark types, and its constructor takes two mandatory arguments, keyType and valueType, plus one optional boolean argument, valueContainsNull, which defaults to True. The valueType can be any type that extends DataType, for example StringType, IntegerType, ArrayType, MapType, or StructType (struct); the keyType is typically a simple type such as StringType or IntegerType. Map keys themselves cannot be null.

Why Choose PySpark MapType Dict in Databricks?

The following three features make the PySpark dictionary particularly valuable for handling complex data transformations and aggregations.

- PySpark MapType Dict stores a variable set of key-value pairs within a single column, so sparse attributes do not each need a mostly empty column of their own. This can reduce the storage footprint, which matters when dealing with large datasets in a Databricks environment.

- With MapType Dict, transforming and manipulating data becomes more intuitive. Whether you're aggregating values based on keys or extracting specific elements, the dictionary structure keeps the operations short.

- The MapType Dict provides a flexible schema: new keys can appear in the data without any change to the DataFrame schema. This is particularly beneficial in scenarios where the data structure changes over time.


How to Use PySpark MapType Dict in Databricks?

The following tutorial provides a step-by-step guide on implementing and working with PySpark's MapType in Databricks, including creating, accessing, and manipulating MapType elements within a DataFrame.

System Requirements

- Python 3 (Spark 3.1.1 requires Python 3.6 or later)

- Apache Spark (3.1.1 version)

Importing Required Packages

We start by importing the necessary PySpark classes and functions: SparkSession for creating a session, the type classes for defining schemas, and the column functions for handling MapType data.

# Importing packages
from pyspark.sql import SparkSession
from pyspark.sql.types import MapType, StringType, StructType, StructField
from pyspark.sql.functions import explode, map_keys, map_values

SparkSession, MapType, StringType, StructType, StructField, explode, map_keys, and map_values are imported into the environment to use MapType (Dict) in PySpark.

Setting Up SparkSession

Before working with PySpark, set up a SparkSession, the entry point to Spark functionality. In a Databricks notebook a session named spark already exists, and getOrCreate() simply returns it.

# Creating (or reusing) the SparkSession
spark = SparkSession.builder.appName('PySpark MapType Dict').getOrCreate()

Creating MapType

Define a MapType with the desired key and value types. In this example, we use StringType for both key and value and pass False as the third argument (valueContainsNull) to indicate that the map's values cannot be null.

# Creating MapType
map_Col = MapType(StringType(), StringType(), False)

Creating MapType from StructType

A MapType is most often used as the type of one field in a larger schema built with StructType and StructField. This is useful when the data involves nested structures. Here the schema has a string column, name, and a map column, properties.

# Creating a StructType schema that contains a MapType field
sample_schema = StructType([
    StructField('name', StringType(), True),
    StructField('properties', MapType(StringType(), StringType()), True)
])

Creating a DataFrame

Create a DataFrame using the defined schema and a list of sample records, each pairing a name with a dictionary of properties.

# Sample records: (name, properties dictionary)
Data_Dictionary = [
    ('Ram', {'hair': 'brown', 'eye': 'blue'}),
    ('Shyam', {'hair': 'black', 'eye': 'black'}),
    ('Amit', {'hair': 'grey', 'eye': None}),
    ('Aupam', {'hair': 'red', 'eye': 'black'}),
    ('Rahul', {'hair': 'black', 'eye': 'grey'})
]
dataframe = spark.createDataFrame(data=Data_Dictionary, schema=sample_schema)
dataframe.printSchema()
dataframe.show(truncate=False)

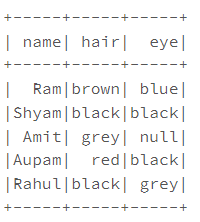

Accessing PySpark MapType Elements

MapType elements can be accessed in several ways: through an RDD map() transformation, with getItem() or bracket indexing on the column, and with the explode(), map_keys(), and map_values() functions.

# Accessing PySpark MapType elements with an RDD map() transformation
dataframe3 = dataframe.rdd.map(
    lambda x: (x.name, x.properties["hair"], x.properties["eye"])
).toDF(["name", "hair", "eye"])
dataframe3.printSchema()
dataframe3.show()

# Using getItem() function of Column type
(dataframe
    .withColumn("hair", dataframe.properties.getItem("hair"))
    .withColumn("eye", dataframe.properties.getItem("eye"))
    .drop("properties")
    .show())

# Using bracket indexing, which is equivalent to getItem()
(dataframe
    .withColumn("hair", dataframe.properties["hair"])
    .withColumn("eye", dataframe.properties["eye"])
    .drop("properties")
    .show())

# Using explode() function in MapType: one row per map entry, with key and value columns
dataframe.select(dataframe.name, explode(dataframe.properties)).show()

# Using map_keys() function in MapType
dataframe.select(dataframe.name, map_keys(dataframe.properties)).show()

# Using map_values() function in MapType
dataframe.select(dataframe.name, map_values(dataframe.properties)).show()

To recap: the Spark session is defined first, and map_Col is declared with the MapType() data type. The schema is built with the StructType() constructor, which takes a list of StructField objects; each StructField takes a field name and a data type, here including a MapType for the properties column. The RDD map() transformation then reads individual values from the properties column. getItem() on the Column type takes a key as its argument and returns the corresponding value. Finally, explode() turns each map entry into its own row with key and value columns, map_keys() returns all keys of the map, and map_values() returns all of its values.


Practical Applications of PySpark MapType Dict in Databricks

Listed below are the essential applications of MapType in PySpark:

- Nested Structures: Use MapType Dict to handle nested structures within your data. This is especially useful when dealing with JSON or semi-structured data, where a map column lets you look up and extract the relevant values by key.

- Aggregation and Grouping: Simplify aggregation tasks by using PySpark MapType Dict to group data based on specific keys, for example by exploding the map into key and value columns and grouping on the key. This makes it easier to summarize information and build analytics within the Databricks platform.

- Dynamic Column Addition: Use the flexibility of MapType Dict to add new attributes to your PySpark DataFrame as new keys in a map column rather than as new columns. This helps in scenarios where the data structure evolves, because the DataFrame schema itself does not need to change.

Expand PySpark Understanding with ProjectPro!

This tutorial walked through each step of implementing and using PySpark's MapType in Databricks, covering creation, access, and manipulation of MapType elements within a DataFrame. To truly master this tool, practical experience is key. ProjectPro supports that with a repository of over 270 real-world projects focused on data science and big data, where enthusiasts and professionals alike can apply their PySpark knowledge in practical scenarios and solidify their skills.

Explore ProjectPro Repository - the ultimate platform for hands-on learning and project-based learning!
