How to save a DataFrame to MySQL in PySpark

This recipe helps you save a DataFrame to MySQL in PySpark

Recipe Objective: How to Save a DataFrame to MySQL in PySpark?

Merging and aggregating data are day-to-day tasks on most big data platforms. In this recipe, we write a PySpark DataFrame to a table in a MySQL database.

System requirements:

- Ubuntu installed in a virtual machine

- A single-node Hadoop installation

- PySpark or Spark installed on Ubuntu

- The code below can be run in a Jupyter notebook or any Python console.

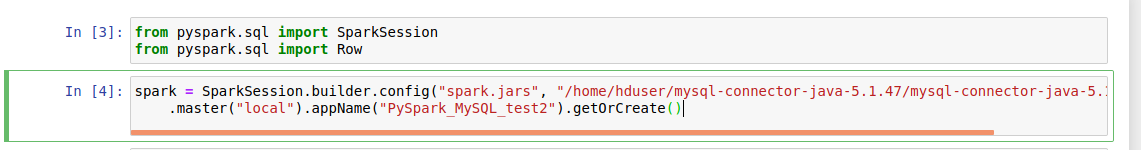

Step 1: Import the modules

First, we import pyspark and the pyspark.sql classes we need, then create a Spark session. The spark.jars setting points to the MySQL Connector/J JAR so that Spark can load the JDBC driver:

import pyspark
from pyspark.sql import SparkSession
from pyspark.sql import Row

spark = SparkSession.builder.config("spark.jars", "/home/hduser/mysql-connector-java-5.1.47/mysql-connector-java-5.1.47.jar") \
    .master("local").appName("PySpark_MySQL_test2").getOrCreate()
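A wrong connector path can go unnoticed until the JDBC write fails to find the driver class. The following is a minimal sketch that checks the path before building the session, assuming the same JAR location as above. The names `MYSQL_JAR`, `require_jar`, and `create_session` are hypothetical helpers for illustration, not part of PySpark.

```python
import os

# Path used in this recipe; adjust it to wherever the connector JAR lives.
MYSQL_JAR = "/home/hduser/mysql-connector-java-5.1.47/mysql-connector-java-5.1.47.jar"


def require_jar(path):
    """Return the path unchanged, or raise if no file exists there."""
    if not os.path.isfile(path):
        raise FileNotFoundError(f"MySQL connector JAR not found: {path}")
    return path


def create_session(jar_path=MYSQL_JAR, app_name="PySpark_MySQL_test2"):
    """Build a local SparkSession with the connector JAR configured."""
    # Imported here so require_jar() can be used without PySpark installed.
    from pyspark.sql import SparkSession

    return (
        SparkSession.builder
        .config("spark.jars", require_jar(jar_path))
        .master("local")
        .appName(app_name)
        .getOrCreate()
    )
```

Calling `spark = create_session()` then behaves like the session creation above, but stops early with a clear message if the JAR is missing.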

Step 2: Create Dataframe to store in MySQL

Here we create a DataFrame to save to the MySQL table. Each record is built with the Row class from the pyspark.sql module, which we imported in Step 1.

studentDf = spark.createDataFrame([
    Row(id=1,name='vijay',marks=67),
    Row(id=2,name='Ajay',marks=88),
    Row(id=3,name='jay',marks=79),
    Row(id=4,name='vinay',marks=67),
])

The output of the code:

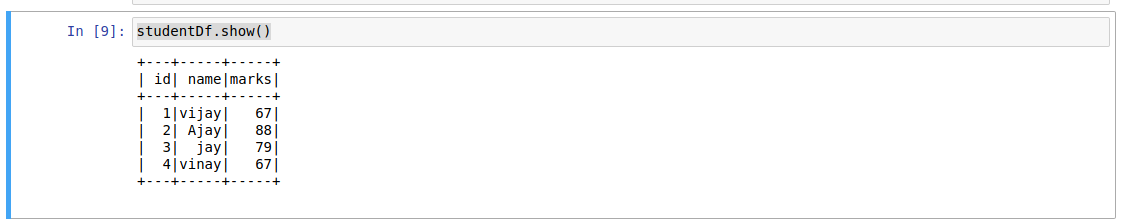
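When a DataFrame is built from Row objects, Spark infers the column types, and Python integers are inferred as bigint. If the target table should use narrower types, an explicit schema keeps them predictable. The following is a minimal sketch of that alternative, assuming a DDL-style schema string; `STUDENTS`, `STUDENT_SCHEMA`, and `make_student_df` are hypothetical names used only for illustration.

```python
# Same four students as above, as plain tuples in (id, name, marks) order.
STUDENTS = [
    (1, "vijay", 67),
    (2, "Ajay", 88),
    (3, "jay", 79),
    (4, "vinay", 67),
]

# DDL-style schema string; without it Spark infers Python ints as bigint.
STUDENT_SCHEMA = "id INT, name STRING, marks INT"


def make_student_df(spark):
    """Create the student DataFrame with explicit column types."""
    return spark.createDataFrame(STUDENTS, schema=STUDENT_SCHEMA)
```

With an active session, `make_student_df(spark).printSchema()` shows the declared types.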

Step 3: View the data of the DataFrame

Here we display up to the first five rows of the DataFrame. Since it holds only four rows, all of them are shown:

studentDf.show(5)

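Step 4: Save the DataFrame to the MySQL table

Here we save the DataFrame to a table named students in the dezyre_db database, which must already exist on the MySQL server. To save, we use the DataFrame's write interface with the jdbc format and call save(), as shown in the code below. With the default save mode, Spark creates the table and raises an error if a table with that name already exists.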

# The connection parameters in the JDBC URL start with '?' and are separated by '&'.
studentDf.select("id","name","marks").write.format("jdbc") \
    .option("url", "jdbc:mysql://127.0.0.1:3306/dezyre_db?useUnicode=true&characterEncoding=UTF-8&useSSL=false") \
    .option("driver", "com.mysql.jdbc.Driver").option("dbtable", "students") \
    .option("user", "root").option("password", "root").save()
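Two details of this step are easy to get wrong: the connection parameters of a JDBC URL start with `?` and are joined with `&`, and a second run of the write fails under the default save mode because the table already exists. The following is a minimal sketch that assembles the URL from its parts and sets the save mode explicitly. `build_jdbc_url` and `save_to_mysql` are hypothetical helper names; the host, database, and credentials are the ones used in this recipe.

```python
from urllib.parse import urlencode


def build_jdbc_url(host, port, database, params=None):
    """Assemble a MySQL JDBC URL; '?' starts the parameters, '&' separates them."""
    url = f"jdbc:mysql://{host}:{port}/{database}"
    if params:
        url += "?" + urlencode(params)
    return url


def save_to_mysql(df, table="students", user="root", password="root", mode="append"):
    """Write df to a MySQL table over JDBC using the given save mode."""
    url = build_jdbc_url(
        "127.0.0.1", 3306, "dezyre_db",
        {"useUnicode": "true", "characterEncoding": "UTF-8", "useSSL": "false"},
    )
    (
        df.write.format("jdbc")
        .option("url", url)
        .option("driver", "com.mysql.jdbc.Driver")
        .option("dbtable", table)
        .option("user", user)
        .option("password", password)
        .mode(mode)  # "append" adds rows; "overwrite" replaces the table contents
        .save()
    )
```

Calling `save_to_mysql(studentDf)` appends the rows, creating the table on the first run. Outside a local test setup, credentials would normally come from configuration rather than literals in the code.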

To check the saved data, log in to the MySQL database and query the students table. It should contain the four rows of the DataFrame.
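The check can also be done from the same PySpark session by reading the table back over JDBC. The following is a minimal sketch, assuming the connection settings used in Step 4; `jdbc_read_options` and `read_back` are hypothetical helper names.

```python
def jdbc_read_options(table, user, password):
    """Collect the JDBC options needed to read one MySQL table."""
    return {
        "url": "jdbc:mysql://127.0.0.1:3306/dezyre_db"
               "?useUnicode=true&characterEncoding=UTF-8&useSSL=false",
        "driver": "com.mysql.jdbc.Driver",
        "dbtable": table,
        "user": user,
        "password": password,
    }


def read_back(spark, table="students", user="root", password="root"):
    """Load the saved table into a new DataFrame for inspection."""
    options = jdbc_read_options(table, user, password)
    return spark.read.format("jdbc").options(**options).load()
```

With the session from Step 1, `read_back(spark).show()` prints the rows stored in the table.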

Conclusion

Here we learned how to save a DataFrame to MySQL in PySpark.